## Diagram: Comparison of AI Reasoning Methods on a Knowledge-Based Question

### Overview

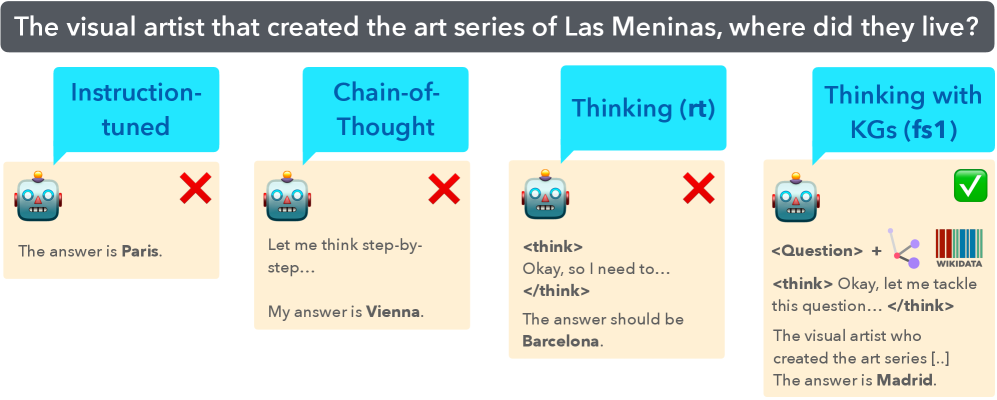

The image is a comparative diagram illustrating four different AI reasoning approaches applied to the same factual question. It visually demonstrates the varying accuracy of these methods, with only the final approach yielding the correct answer. The diagram is structured as a horizontal sequence of four panels, each representing a distinct method.

### Components/Axes

* **Header (Top Banner):** A dark gray banner spanning the width of the image contains the central question in white text: "The visual artist that created the art series of Las Meninas, where did they live?"

* **Panels (Four Columns):** The main body consists of four vertical panels, each with a light beige background. From left to right, they are labeled:

1. **Instruction-tuned** (Cyan speech bubble)

2. **Chain-of-Thought** (Cyan speech bubble)

3. **Thinking (rt)** (Cyan speech bubble)

4. **Thinking with KGs (fs1)** (Cyan speech bubble)

* **Common Elements per Panel:**

* A cyan speech bubble at the top containing the method's name.

* A stylized robot head icon (blue and gray with orange antenna) on the left.

* A correctness indicator on the right: a red "X" for incorrect answers, a green checkmark for the correct answer.

* Text output from the AI model below the robot icon.

### Detailed Analysis

**Panel 1: Instruction-tuned**

* **Method Label:** "Instruction-tuned"

* **Correctness Indicator:** Red "X" (Incorrect)

* **AI Output Text:** "The answer is **Paris.**" (The word "Paris" is in bold).

**Panel 2: Chain-of-Thought**

* **Method Label:** "Chain-of-Thought"

* **Correctness Indicator:** Red "X" (Incorrect)

* **AI Output Text:** "Let me think step-by-step... My answer is **Vienna.**" (The word "Vienna" is in bold).

**Panel 3: Thinking (rt)**

* **Method Label:** "Thinking (rt)"

* **Correctness Indicator:** Red "X" (Incorrect)

* **AI Output Text:** " The answer should be **Barcelona.**" (The word "Barcelona" is in bold).

**Panel 4: Thinking with KGs (fs1)**

* **Method Label:** "Thinking with KGs (fs1)"

* **Correctness Indicator:** Green checkmark (Correct)

* **AI Output Text:** "<Question> + [Icon of a knowledge graph (nodes and edges)] + [Wikidata logo] The visual artist who created the art series [..] The answer is **Madrid.**" (The word "Madrid" is in bold). The "[..]" indicates an ellipsis in the transcribed text.

### Key Observations

1. **Progression of Complexity:** The methods evolve from a simple direct answer ("Instruction-tuned") to a process involving step-by-step reasoning ("Chain-of-Thought"), internal monologue ("Thinking (rt)"), and finally, reasoning augmented by external knowledge ("Thinking with KGs").

2. **Accuracy Correlation:** There is a direct correlation between the method's complexity (specifically, the integration of external knowledge) and its accuracy. The first three methods, which rely solely on internal model parameters, produce incorrect answers (Paris, Vienna, Barcelona).

3. **Visual Cues for Correctness:** The diagram uses universal symbols (red X, green checkmark) to immediately convey the success or failure of each approach, making the conclusion visually intuitive.

4. **Spatial Grounding:** The legend (method labels in cyan bubbles) is consistently placed at the top of each panel. The correctness indicator is consistently placed to the right of the robot icon. The final, correct panel is distinguished by additional visual elements (knowledge graph icon, Wikidata logo) placed between the question tag and the thinking tag.

### Interpretation

This diagram serves as a pedagogical or illustrative tool to argue for the necessity of **grounding AI reasoning in external knowledge bases** (like Wikidata/Knowledge Graphs) for factual accuracy.

* **What it demonstrates:** It suggests that standard instruction-tuned models and even those employing chain-of-thought or internal reasoning ("rt") can confidently generate plausible but incorrect answers ("hallucinations") when their training data is insufficient or imprecise for a specific factual query. The question about Diego Velázquez (the artist of *Las Meninas*) and his residence is a precise historical fact that requires verified data.

* **How elements relate:** The progression shows a logical argument: simple prompting fails, structured internal reasoning fails, but when the model's reasoning process is augmented with a direct query to a structured knowledge source (Wikidata), it succeeds. The inclusion of the Wikidata logo is a key visual anchor for this argument.

* **Notable implication:** The diagram implies that for reliable factual question-answering, especially on niche or precise topics, AI systems benefit significantly from architectures that can retrieve and incorporate information from curated knowledge graphs rather than relying solely on parametric knowledge learned during training. The "(fs1)" in the final label may refer to a specific technique or model variant designed for this fusion.