
## Scatter Plot: Test Set Accuracy vs. Number of Network Parameters

### Overview

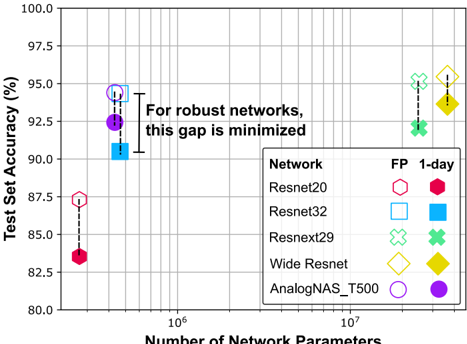

This scatter plot compares the test set accuracy of several neural networks against their number of parameters. Each network has a distinct marker, and error bars indicate the variability in accuracy. The plot relates model complexity (number of parameters) to performance (test set accuracy), and an annotation notes that for robust networks a gap between performance measures is minimized.

### Components/Axes

* **X-axis:** Number of Network Parameters (logarithmic scale, ranging from approximately 10<sup>6</sup> to 10<sup>7</sup>).

* **Y-axis:** Test Set Accuracy (%) (ranging from 80% to 100%).

* **Legend:** Located in the bottom-right corner; categorizes the networks by marker shape and color.

    * **Network:** Resnet20, Resnet32, Resnext29, Wide Resnet, AnalogNAS\_T500

    * **FP, 1-day:** Condition labels indicated by color. The plot does not define them; FP likely denotes a training paradigm.

* **Text Annotation:** "For robust networks, this gap is minimized" is positioned in the center of the plot.

### Detailed Analysis

The plot displays a data point for each network, labelled "FP 1-day" in the readings below. Each data point has an error bar; the plot does not state whether it represents a standard deviation or a confidence interval. All values are approximate readings from the figure.

* **Resnet20 (Pink Hexagon):**

    * FP 1-day: Approximately (1.2 × 10<sup>6</sup> parameters, 86.5% accuracy) with an error bar extending from approximately 85.5% to 87.5%.

* **Resnet32 (Blue Square):**

    * FP 1-day: Approximately (2.5 × 10<sup>6</sup> parameters, 90.5% accuracy) with an error bar extending from approximately 89.5% to 91.5%.

* **Resnext29 (Green Cross):**

    * FP 1-day: Approximately (7.5 × 10<sup>6</sup> parameters, 95.5% accuracy) with an error bar extending from approximately 94.5% to 96.5%.

* **Wide Resnet (Yellow Diamond):**

    * FP 1-day: Approximately (7.5 × 10<sup>6</sup> parameters, 93.0% accuracy) with an error bar extending from approximately 92.0% to 94.0%.

* **AnalogNAS\_T500 (Purple Circle):**

    * FP 1-day: Approximately (2.5 × 10<sup>6</sup> parameters, 92.5% accuracy) with an error bar extending from approximately 91.5% to 93.5%.

The trend for most networks is that as the number of parameters increases, the test set accuracy also increases. However, the rate of increase appears to diminish with larger models.
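One way to check the diminishing trend is to compute the accuracy gain per tenfold increase in parameters between consecutive model sizes. The sketch below is illustrative only: the numbers are the approximate readings listed above, and the helper names (`best_per_size`, `gain_per_decade`) and the choice to keep only the best accuracy at each size are assumptions made for this example, not part of the original figure.

```python
import math

# Approximate values read off the plot:
# (name, parameters, accuracy %, error bar low, error bar high)
READINGS = [
    ("Resnet20", 1.2e6, 86.5, 85.5, 87.5),
    ("Resnet32", 2.5e6, 90.5, 89.5, 91.5),
    ("Resnext29", 7.5e6, 95.5, 94.5, 96.5),
    ("Wide Resnet", 7.5e6, 93.0, 92.0, 94.0),
    ("AnalogNAS_T500", 2.5e6, 92.5, 91.5, 93.5),
]


def best_per_size(readings):
    """Map each parameter count to the highest accuracy seen at that size."""
    best = {}
    for _name, params, acc, _low, _high in readings:
        best[params] = max(acc, best.get(params, float("-inf")))
    return dict(sorted(best.items()))


def gain_per_decade(best):
    """Accuracy points gained per tenfold parameter increase, size to size."""
    sizes = list(best)
    return [
        (best[b] - best[a]) / (math.log10(b) - math.log10(a))
        for a, b in zip(sizes, sizes[1:])
    ]


best = best_per_size(READINGS)
print(best)
print(gain_per_decade(best))
```

With these readings, the gain between the two smallest sizes is larger than the gain between the two largest, which is consistent with diminishing returns. Using the best accuracy per size is only one possible summary; averaging the networks at each size would be another.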

### Key Observations

* Resnext29 achieves the highest accuracy among the networks tested.

* Resnet20 has the lowest accuracy.

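* The gap between Resnet20 and Resnet32 is about 4 percentage points (86.5% vs. 90.5%), the largest step between the ResNet variants shown.

* AnalogNAS\_T500 and Resnet32 have similar numbers of parameters (about 2.5 × 10<sup>6</sup>), but AnalogNAS\_T500 is about 2 percentage points more accurate (92.5% vs. 90.5%).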

* The error bars span roughly ±1 percentage point for every network in the readings above, so these estimates do not show a clear difference in consistency between networks.
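The observations can be checked directly against the approximate readings from the Detailed Analysis section. The following is a minimal sketch; the dictionary layout and the helper names `half_widths` and `accuracy_gap` are hypothetical and chosen only for illustration.

```python
# Approximate values read off the plot:
# name -> (accuracy %, error bar low, error bar high)
READINGS = {
    "Resnet20": (86.5, 85.5, 87.5),
    "Resnet32": (90.5, 89.5, 91.5),
    "Resnext29": (95.5, 94.5, 96.5),
    "Wide Resnet": (93.0, 92.0, 94.0),
    "AnalogNAS_T500": (92.5, 91.5, 93.5),
}


def half_widths(readings):
    """Half the error bar length per network, in percentage points."""
    return {name: (high - low) / 2 for name, (_acc, low, high) in readings.items()}


def accuracy_gap(readings, first, second):
    """Accuracy of `first` minus accuracy of `second`, in percentage points."""
    return readings[first][0] - readings[second][0]


ranked = sorted(READINGS, key=lambda name: READINGS[name][0], reverse=True)
print("highest:", ranked[0], "lowest:", ranked[-1])
print("half-widths:", half_widths(READINGS))
print("Resnet32 - Resnet20:", accuracy_gap(READINGS, "Resnet32", "Resnet20"))
print("AnalogNAS_T500 - Resnet32:", accuracy_gap(READINGS, "AnalogNAS_T500", "Resnet32"))
```

Because the inputs are visual estimates, small differences in error bar length that exist in the original figure may not survive this transcription.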

### Interpretation

The data suggest a positive but nonlinear relationship between the number of network parameters and test set accuracy: larger models are generally more accurate, with diminishing returns. Parameter count alone does not determine the result, however. AnalogNAS\_T500 outperforms Resnet32 at a similar size, and Resnext29 outperforms Wide Resnet at a similar size, so the choice of architecture and training procedure (FP) also matters.

The annotation "For robust networks, this gap is minimized" implies that a robust network shows only a small difference between its performance measures (potentially training and test accuracy, or different evaluation conditions); the plot itself does not say which. The error bars indicate how sensitive each network is to variations such as training data or initialization; in the approximate readings above they are of similar size for all networks. Overall, the plot is useful for comparing architectures and identifying trade-offs between model complexity and accuracy.
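As a rough check on the claimed positive relationship, the Pearson correlation between log<sub>10</sub>(parameters) and accuracy can be computed from the five approximate readings. This is a sketch under stated assumptions: the `pearson` helper is written here for illustration, the inputs are visual estimates, and with only five points the coefficient is indicative at best.

```python
import math

# Approximate values read off the plot: (parameters, accuracy %)
POINTS = [
    (1.2e6, 86.5),  # Resnet20
    (2.5e6, 90.5),  # Resnet32
    (7.5e6, 95.5),  # Resnext29
    (7.5e6, 93.0),  # Wide Resnet
    (2.5e6, 92.5),  # AnalogNAS_T500
]


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


# The x-axis is logarithmic, so correlate against log10 of the parameter count.
log_params = [math.log10(params) for params, _acc in POINTS]
accuracies = [acc for _params, acc in POINTS]
r = pearson(log_params, accuracies)
print(f"Pearson r (log10 params vs. accuracy): {r:.2f}")
```

A strongly positive coefficient here supports the overall trend but says nothing about causation or about architectures not shown in the plot.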