## Line Charts: Performance Comparison of Prover Models with/without Apollo

### Overview

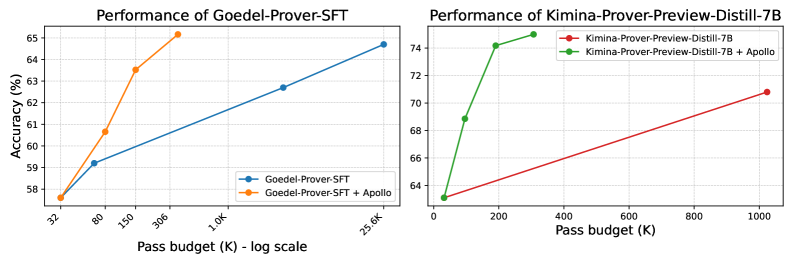

The image contains two side-by-side line charts comparing the accuracy of two prover models (Goedel-Prover-SFT and Kimina-Prover-Preview-Distill-7B) with and without Apollo across different pass budgets. Both charts plot accuracy (%) on the y-axis against pass budget (K) on the x-axis, but the left chart uses a logarithmic x-axis and the right chart a linear one, and the two show different data patterns.

### Components/Axes

**Left Chart (Goedel-Prover-SFT):**

- **Title:** "Performance of Goedel-Prover-SFT"

- **X-axis:** "Pass budget (K) - log scale" (markers: 32, 80, 150, 306, 1.0K, 25.6K)

- **Y-axis:** "Accuracy (%)" (range: 57.5% to 65%)

- **Legend:**

  - Blue line: "Goedel-Prover-SFT"

  - Orange line: "Goedel-Prover-SFT + Apollo"

- **Data Points:**

  - Blue line: (32, 58%), (80, 59.2%), (150, 60.5%), (306, 61.8%), (1.0K, 62.7%), (25.6K, 64.5%)

  - Orange line: (32, 57.5%), (80, 60.8%), (150, 63.4%), (306, 65%), (1.0K, 63.2%), (25.6K, 64.5%)
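The gap between the two lines at each budget can be checked with a short calculation. The sketch below is only an illustration: it takes the budgets from the x-axis markers, assumes the tick labels 1.0K and 25.6K mean 1000 and 25600, and uses the hypothetical names `BUDGETS`, `BASE`, `APOLLO`, and `apollo_gaps`.

```python
# Readings transcribed from the left chart (Goedel-Prover-SFT).
# Assumption: the tick labels 1.0K and 25.6K denote 1000 and 25600.
BUDGETS = [32, 80, 150, 306, 1000, 25600]
BASE = [58.0, 59.2, 60.5, 61.8, 62.7, 64.5]      # blue line, accuracy in %
APOLLO = [57.5, 60.8, 63.4, 65.0, 63.2, 64.5]    # orange line, accuracy in %


def apollo_gaps(base, apollo):
    """Return (apollo - base) in percentage points, rounded to one decimal."""
    return [round(a - b, 1) for a, b in zip(apollo, base)]


GAPS = apollo_gaps(BASE, APOLLO)

for budget, gap in zip(BUDGETS, GAPS):
    print(f"budget={budget:>6}: {gap:+.1f} points")
```

With these readings the gap is negative only at the smallest budget, largest at 306, and zero at 25.6K.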

**Right Chart (Kimina-Prover-Preview-Distill-7B):**

- **Title:** "Performance of Kimina-Prover-Preview-Distill-7B"

- **X-axis:** "Pass budget (K)" (markers: 0, 200, 400, 600, 800, 1000)

- **Y-axis:** "Accuracy (%)" (range: 63.5% to 74.5%)

- **Legend:**

  - Red line: "Kimina-Prover-Preview-Distill-7B"

  - Green line: "Kimina-Prover-Preview-Distill-7B + Apollo"

- **Data Points:**

  - Red line: (0, 63.5%), (200, 66.2%), (400, 67.8%), (600, 69.1%), (800, 70.3%), (1000, 70.5%)

  - Green line: (0, 63.5%), (200, 74%), (400, 72.5%), (600, 68.7%), (800, 66.5%), (1000, 70.5%)

### Detailed Analysis

**Left Chart Trends:**

1. **Goedel-Prover-SFT (Blue):** Rises monotonically from 58% at a budget of 32 to 64.5% at 25.6K. The log-scale x-axis spreads out the lower budgets, where most of the marked points lie.

2. **Goedel-Prover-SFT + Apollo (Orange):** Starts slightly below the base model at 32 (57.5% vs 58%) but surpasses it from 80 onward (60.8% vs 59.2%), with the gap widening to 2.9 points at 150 (63.4% vs 60.5%). It peaks at 306 (65%), dips to 63.2% at 1.0K, and converges with the base model at 25.6K (64.5%).
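To see why the log scale matters for the left chart, the following sketch compares where each x-axis marker would sit on a linear axis and on a base-10 logarithmic axis, both normalised to the range 0 to 1. The helper names are hypothetical, and the values 1000 and 25600 assume that the tick labels 1.0K and 25.6K denote thousands.

```python
import math

# X-axis markers of the left chart.
# Assumption: 1.0K and 25.6K denote 1000 and 25600.
BUDGETS = [32, 80, 150, 306, 1000, 25600]


def linear_positions(values):
    """Normalised position of each value on a linear axis (0 = first, 1 = last)."""
    lo, hi = values[0], values[-1]
    return [(v - lo) / (hi - lo) for v in values]


def log_positions(values):
    """Normalised position of each value on a base-10 logarithmic axis."""
    lo, hi = math.log10(values[0]), math.log10(values[-1])
    return [(math.log10(v) - lo) / (hi - lo) for v in values]


LINEAR = linear_positions(BUDGETS)
LOG = log_positions(BUDGETS)

for budget, lin, log in zip(BUDGETS, LINEAR, LOG):
    print(f"budget={budget:>6}: linear={lin:.3f}  log={log:.3f}")
```

On a linear axis the first four markers would be squeezed into roughly the leftmost 1% of the plot, whereas the log axis gives them about a third of the width.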

**Right Chart Trends:**

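1. **Kimina-Prover-Preview-Distill-7B (Red):** Increases monotonically from 63.5% at 0 to 70.5% at 1000, but with shrinking gains: +2.7 points over the first 200 and only +0.2 points between 800 and 1000, where the curve nearly plateaus.

2. **Kimina-Prover-Preview-Distill-7B + Apollo (Green):** Sharp initial spike to 74% at 200 (10.5 percentage points above its starting value and 7.8 points above the base model at the same budget), followed by a decline to 66.5% at 800, then a recovery to 70.5% at 1000.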
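Whether a curve is steady or erratic can be checked from the change in accuracy between consecutive budgets. The sketch below does this for the right chart's transcribed readings; the names `BASE`, `APOLLO`, and `step_gains` are hypothetical.

```python
# Readings transcribed from the right chart (Kimina-Prover-Preview-Distill-7B).
BUDGETS = [0, 200, 400, 600, 800, 1000]
BASE = [63.5, 66.2, 67.8, 69.1, 70.3, 70.5]      # red line, accuracy in %
APOLLO = [63.5, 74.0, 72.5, 68.7, 66.5, 70.5]    # green line, accuracy in %


def step_gains(values):
    """Change between consecutive readings, in percentage points."""
    return [round(later - earlier, 1) for earlier, later in zip(values, values[1:])]


BASE_STEPS = step_gains(BASE)
APOLLO_STEPS = step_gains(APOLLO)

for start, end, base_step, apollo_step in zip(BUDGETS, BUDGETS[1:], BASE_STEPS, APOLLO_STEPS):
    print(f"{start:>4} -> {end:<4}: base {base_step:+.1f}, with Apollo {apollo_step:+.1f}")
```

The base model's steps are all positive but shrink toward the right, while the Apollo steps change sign twice.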

### Key Observations

1. **Apollo's Impact on Goedel-Prover-SFT:**

   - Provides a 2.9-point accuracy gain at 150 (63.4% vs 60.5%) and its largest gain, 3.2 points, at 306, where it also peaks (65%).

   - Converges with the base model at the highest budget (25.6K, 64.5% for both), suggesting diminishing returns.

2. **Apollo's Impact on Kimina-Prover:**

   - Delivers a 7.8-point gain over the base model at 200 (74% vs 66.2%), but the gain is not sustained: accuracy falls to 66.5% at 800, 3.8 points below the base model (70.3%).

   - Final accuracy matches the base model at 1000 (70.5%), indicating unstable gains.

3. **Model Behavior:**

   - Goedel-Prover-SFT scales steadily with budget.

   - Kimina-Prover-Preview-Distill-7B also improves with budget, but its gains shrink and nearly level off by 1000.

   - Apollo's benefits are context-dependent: helpful for Goedel at mid-range budgets and for Kimina at low budgets, but destabilizing for Kimina at higher budgets.
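The observations above can be summarised per model with a small helper that finds the Apollo peak, the budget with the widest gap to the base model, and any budgets where Apollo falls below the base model. This is a minimal sketch over the transcribed readings; the structure `CHARTS` and the function `summarize` are hypothetical, and 1.0K and 25.6K are assumed to mean 1000 and 25600.

```python
# Readings transcribed from both charts.
# Assumption: the tick labels 1.0K and 25.6K denote 1000 and 25600.
CHARTS = {
    "Goedel-Prover-SFT": {
        "budgets": [32, 80, 150, 306, 1000, 25600],
        "base": [58.0, 59.2, 60.5, 61.8, 62.7, 64.5],
        "apollo": [57.5, 60.8, 63.4, 65.0, 63.2, 64.5],
    },
    "Kimina-Prover-Preview-Distill-7B": {
        "budgets": [0, 200, 400, 600, 800, 1000],
        "base": [63.5, 66.2, 67.8, 69.1, 70.3, 70.5],
        "apollo": [63.5, 74.0, 72.5, 68.7, 66.5, 70.5],
    },
}


def summarize(budgets, base, apollo):
    """Summarise how the Apollo curve compares with the base curve."""
    gaps = [round(a - b, 1) for a, b in zip(apollo, base)]
    peak = max(range(len(apollo)), key=lambda i: apollo[i])
    widest = max(range(len(gaps)), key=lambda i: gaps[i])
    return {
        "peak_budget": budgets[peak],
        "peak_accuracy": apollo[peak],
        "widest_gap_budget": budgets[widest],
        "widest_gap_points": gaps[widest],
        "budgets_below_base": [k for k, g in zip(budgets, gaps) if g < 0],
    }


SUMMARY = {name: summarize(**series) for name, series in CHARTS.items()}

for name, stats in SUMMARY.items():
    print(name, stats)
```

For these readings, Apollo is below the base model only at 32 for Goedel-Prover-SFT, but at both 600 and 800 for Kimina-Prover-Preview-Distill-7B.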

### Interpretation

The data suggests that Apollo's effectiveness varies significantly between models:

- For **Goedel-Prover-SFT**, Apollo acts as a performance enhancer at mid-range budgets but becomes redundant at higher budgets.

- For **Kimina-Prover-Preview-Distill-7B**, Apollo introduces instability: after large initial gains, accuracy drops below the base model at 600 and 800. This could indicate overfitting to specific data patterns or architectural incompatibilities, though the chart alone does not establish the cause.

The contrasting results highlight the importance of model-specific optimization when integrating enhancements like Apollo. While Apollo improves efficiency for Goedel-Prover-SFT, it may require careful tuning or architectural modifications to stabilize performance for Kimina-Prover-Preview-Distill-7B.