## Line Chart: Federated Learning Performance on CIFAR-10

### Overview

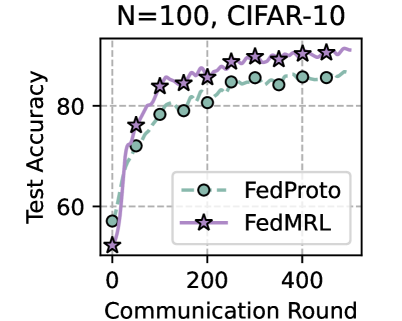

The image is a line chart comparing the test accuracy of two federated learning algorithms, FedProto and FedMRL, over 500 communication rounds. The experiment uses the CIFAR-10 dataset with N=100 (likely indicating 100 clients or participants). Both methods improve over time; FedMRL starts from a lower accuracy (~52% vs. ~58% at round 0) but overtakes FedProto and reaches a higher final accuracy (~91% vs. ~88%).

### Components/Axes

* **Title:** "N=100, CIFAR-10" (Top center)

* **Y-Axis:** Label is "Test Accuracy" (values read as percentages). The scale runs from approximately 50 to slightly above 90, with major tick marks labeled at 60 and 80.

* **X-Axis:** Label is "Communication Round". The scale runs from 0 to 500, with major tick marks labeled at 0, 200, and 400.

* **Legend:** Located in the bottom-right quadrant of the chart area.

  * **FedProto:** Represented by a dashed green line with circular markers (○).

  * **FedMRL:** Represented by a solid purple line with star markers (☆).

* **Grid:** A light gray dashed grid is present, aligned with the major tick marks on both axes.

### Detailed Analysis

**Data Series & Trends:**

1. **FedProto (Green line, ○ markers):**

   * **Trend:** Shows a steep, logarithmic-style increase in accuracy that gradually plateaus.

   * **Approximate Data Points:**

     * Round 0: ~58%

     * Round ~50: ~72%

     * Round ~100: ~78%

     * Round ~150: ~80%

     * Round ~200: ~82%

     * Round ~250: ~84%

     * Round ~300: ~85%

     * Round ~350: ~86%

     * Round ~400: ~87%

     * Round ~450: ~88%

     * Round 500: ~88%

2. **FedMRL (Purple line, ☆ markers):**

   * **Trend:** Starts lower than FedProto but exhibits a steeper initial ascent. By the approximate data points below, it is already ahead of FedProto at round ~50 (~76% vs. ~72%), and it continues to climb to a higher final accuracy.

   * **Approximate Data Points:**

     * Round 0: ~52%

     * Round ~50: ~76%

     * Round ~100: ~82%

     * Round ~150: ~84%

     * Round ~200: ~86%

     * Round ~250: ~87%

     * Round ~300: ~88%

     * Round ~350: ~89%

     * Round ~400: ~90%

     * Round ~450: ~91%

     * Round 500: ~91%
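The two series can be compared point by point. The sketch below is illustrative only: it hard-codes the approximate values listed above (visual estimates, not measurements), and the names `ROUNDS`, `FEDPROTO`, `FEDMRL`, `accuracy_gaps`, and `interpolated_crossover` are hypothetical helpers introduced here. It assumes each curve is linear between neighbouring sampled rounds, which the chart does not guarantee.

```python
# Approximate values read off the chart (see the lists above); not exact measurements.
ROUNDS = [0, 50, 100, 150, 200, 250, 300, 350, 400, 450, 500]
FEDPROTO = [58, 72, 78, 80, 82, 84, 85, 86, 87, 88, 88]
FEDMRL = [52, 76, 82, 84, 86, 87, 88, 89, 90, 91, 91]


def accuracy_gaps(rounds, baseline, candidate):
    """Return (round, candidate - baseline) pairs, in percentage points."""
    return [(r, c - b) for r, b, c in zip(rounds, baseline, candidate)]


def interpolated_crossover(rounds, baseline, candidate):
    """Estimate the round at which candidate first overtakes baseline.

    Assumes both curves are linear between neighbouring samples.
    Returns None if the candidate never moves from behind to level or ahead.
    """
    gaps = [c - b for b, c in zip(baseline, candidate)]
    for i in range(1, len(gaps)):
        if gaps[i - 1] < 0 <= gaps[i]:
            # Fraction of the interval at which the gap reaches zero.
            frac = -gaps[i - 1] / (gaps[i] - gaps[i - 1])
            return rounds[i - 1] + frac * (rounds[i] - rounds[i - 1])
    return None


gaps = accuracy_gaps(ROUNDS, FEDPROTO, FEDMRL)
crossover = interpolated_crossover(ROUNDS, FEDPROTO, FEDMRL)
print("gap (FedMRL - FedProto) per sampled round:", gaps)
print("interpolated crossover round:", crossover)
```

With these estimates the gap is -6 points at round 0 and +4 points at round ~50, so the interpolation places the crossover at roughly round 30, and the gap stays at 3 to 4 points from round ~50 onward. The result is only as reliable as the visual readings it is built on.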

### Key Observations

1. **Crossover Point:** By the approximate data points above, the FedMRL line (purple stars) trails the FedProto line (green circles) only at the very start (~52% vs. ~58% at round 0) and is already ahead by round ~50 (~76% vs. ~72%), so the lines cross within the first 50 rounds. After that point, FedMRL maintains a steady lead of about 3 to 4 percentage points.

2. **Convergence:** Both curves show diminishing returns, with the rate of accuracy improvement slowing significantly after round 300. However, FedMRL's plateau occurs at a higher accuracy level (~91%) compared to FedProto (~88%).

3. **Initial Performance:** FedProto has a higher starting accuracy at round 0 (~58% vs. ~52%), suggesting it may have a better initial model or warm-start procedure.

4. **Growth Rate:** FedMRL demonstrates a more aggressive learning curve in the first 100 rounds, gaining approximately 30 percentage points (from ~52% to ~82%), while FedProto gains about 20 points (from ~58% to ~78%) in the same period.
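The growth-rate and convergence figures above are simple differences over the estimated data points. A minimal sketch of that arithmetic follows; the values are the approximate chart readings from the Detailed Analysis section, and `ROUNDS`, `ACCURACY`, `gain_between`, and `increments` are hypothetical names used only for illustration.

```python
# Approximate chart readings, sampled every 50 communication rounds.
ROUNDS = [0, 50, 100, 150, 200, 250, 300, 350, 400, 450, 500]
ACCURACY = {
    "FedProto": [58, 72, 78, 80, 82, 84, 85, 86, 87, 88, 88],
    "FedMRL": [52, 76, 82, 84, 86, 87, 88, 89, 90, 91, 91],
}


def gain_between(rounds, values, start, end):
    """Accuracy gain in percentage points between two sampled rounds."""
    return values[rounds.index(end)] - values[rounds.index(start)]


def increments(values):
    """Gain from each sampled point to the next (one entry per 50 rounds)."""
    return [later - earlier for earlier, later in zip(values, values[1:])]


for name, values in ACCURACY.items():
    early = gain_between(ROUNDS, values, 0, 100)
    late = gain_between(ROUNDS, values, 300, 500)
    print(f"{name}: +{early} pts over rounds 0-100, +{late} pts over rounds 300-500")
    print(f"  per-50-round increments: {increments(values)}")
```

On these estimates, FedMRL gains 30 points and FedProto 20 points in the first 100 rounds, while both gain only about 3 points over rounds 300 to 500, which is the diminishing-returns pattern described above.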

### Interpretation

This chart provides a performance comparison in a federated learning context, where multiple clients collaboratively train a model without sharing raw data. The "Communication Round" axis represents iterations of this collaborative process.

* **What the data suggests:** The FedMRL algorithm is more effective than FedProto for this specific task (CIFAR-10 classification with 100 clients). While it may start from a weaker position, its learning efficiency is superior, allowing it to overtake FedProto and achieve a final model with approximately 3 percentage points higher test accuracy.

* **How elements relate:** The legend is critical for correctly attributing the performance curves. The green circle line (FedProto) shows steady but slower improvement. The purple star line (FedMRL) shows a "catch-up and surpass" pattern. The grid helps in estimating the numerical values and confirming the crossover point.

* **Notable patterns/anomalies:** The most significant pattern is the performance inversion. This could indicate that FedMRL's method of handling non-IID data (common in federated settings) or its model aggregation technique is more robust or efficient in the long run, despite a potentially less optimal initialization. The consistent gap in the later rounds suggests the advantage is stable and not due to noise. There are no obvious anomalies; the curves are smooth and follow expected learning trajectories.