\n

## Algorithm: Three-factor learning with PCM-trace

### Overview

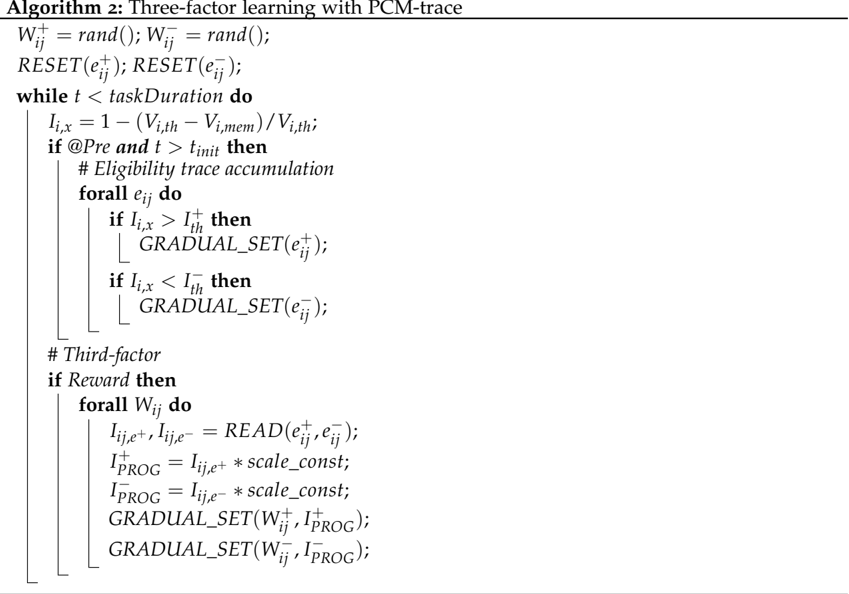

The image presents a pseudocode algorithm titled "Three-factor learning with PCM-trace". It outlines a learning process involving iterative updates based on eligibility traces and reward signals. The algorithm is structured as a `while` loop with nested `if` and `for all` statements, indicating a sequential and iterative process.

### Components/Axes

There are no axes or traditional chart components. The structure is purely textual, representing a computational algorithm. Key variables and functions are:

* `Wᵢⱼ⁺`: Positive weight.

* `Wᵢⱼ⁻`: Negative weight.

* `eᵢⱼ⁺`: Positive eligibility trace.

* `eᵢⱼ⁻`: Negative eligibility trace.

* `Iᵢₓ`: Input index.

* `Vᵢ,th`: Threshold value.

* `Vᵢ,mem`: Memory value.

* `t`: Time step.

* `taskDuration`: Total duration of the task.

* `tᵢnit`: Initialization time.

* `Iᵢ,th`: Input threshold.

* `Iᴾᴿᴼᶜ`: Processed input.

* `scale_const`: Scaling constant.

* `READ(eᵢⱼ⁺, eᵢⱼ⁻)`: Function to read eligibility traces.

* `GRADUAL_SET(variable)`: Function to gradually set a variable.

* `RESET(variable)`: Function to reset a variable.

* `@Pre`: Condition for pre-processing.

* `Reward`: Condition for reward processing.

### Detailed Analysis / Content Details

The algorithm can be broken down as follows:

1. **Initialization:**

* `Wᵢⱼ⁺ = rand(); Wᵢⱼ⁻ = rand();`: Initialize positive and negative weights randomly.

* `RESET(eᵢⱼ⁺); RESET(eᵢⱼ⁻);`: Reset positive and negative eligibility traces.

2. **Main Loop:** `while t < taskDuration do`

* `Iᵢₓ = 1 − (Vᵢ,th − Vᵢ,mem) / Vᵢ,th;`: Calculate the input index `Iᵢₓ`.

* `if @Pre and t > tᵢnit then`: Check if the pre-processing condition is met and the time step is greater than the initialization time.

* `# Eligibility trace accumulation`: Comment indicating eligibility trace accumulation.

* `forall eᵢⱼ do`: Iterate over all eligibility traces.

* `if Iᵢₓ > Iᵢ,th then`: If the input index is greater than the input threshold.

* `GRADUAL_SET(eᵢⱼ⁺);`: Gradually set the positive eligibility trace.

* `if Iᵢₓ < Iᵢ,th then`: If the input index is less than the input threshold.

* `GRADUAL_SET(eᵢⱼ⁻);`: Gradually set the negative eligibility trace.

* `# Third-factor`: Comment indicating the third-factor processing.

* `if Reward then`: Check if a reward is received.

* `forall Wᵢⱼ do`: Iterate over all weights.

* `Iᵢⱼ₋⁺, Iᵢⱼ₋⁻ = READ(eᵢⱼ⁺, eᵢⱼ⁻);`: Read the positive and negative eligibility traces.

* `Iᴾᴿᴼᶜ₋⁺ = Iᵢⱼ₋⁺ * scale_const;`: Calculate the processed positive input.

* `Iᴾᴿᴼᶜ₋⁻ = Iᵢⱼ₋⁻ * scale_const;`: Calculate the processed negative input.

* `GRADUAL_SET(Wᵢⱼ⁺, Iᴾᴿᴼᶜ₋⁺);`: Gradually set the positive weight using the processed positive input.

* `GRADUAL_SET(Wᵢⱼ⁻, Iᴾᴿᴼᶜ₋⁻);`: Gradually set the negative weight using the processed negative input.

### Key Observations

The algorithm combines elements of reinforcement learning (reward signal) with eligibility traces to update weights. The use of separate positive and negative weights and eligibility traces suggests a mechanism for handling both reinforcement and punishment signals. The `GRADUAL_SET` function implies a learning rate or smoothing factor is applied during weight updates. The `READ` function suggests that the eligibility traces are being accessed for use in weight updates.

### Interpretation

This algorithm appears to implement a form of reinforcement learning where the weights are adjusted based on the reward received and the eligibility traces of the associated actions. The eligibility traces act as a short-term memory of recent actions, allowing the algorithm to credit or blame those actions for the received reward. The "third-factor" component, involving the `READ` function and processed inputs, likely introduces a more nuanced weighting scheme based on the magnitude of the eligibility traces. The use of `scale_const` suggests a control over the impact of the eligibility traces on the weight updates. The algorithm's structure suggests it is designed for continuous learning in an environment where rewards are sparse or delayed. The `GRADUAL_SET` function is crucial for stability, preventing drastic weight changes that could disrupt learning. The algorithm is a sophisticated approach to learning, combining several key concepts from reinforcement learning and neural networks.