## System Architecture Diagram: NetLogo-LLM Integration via Python Extension

### Overview

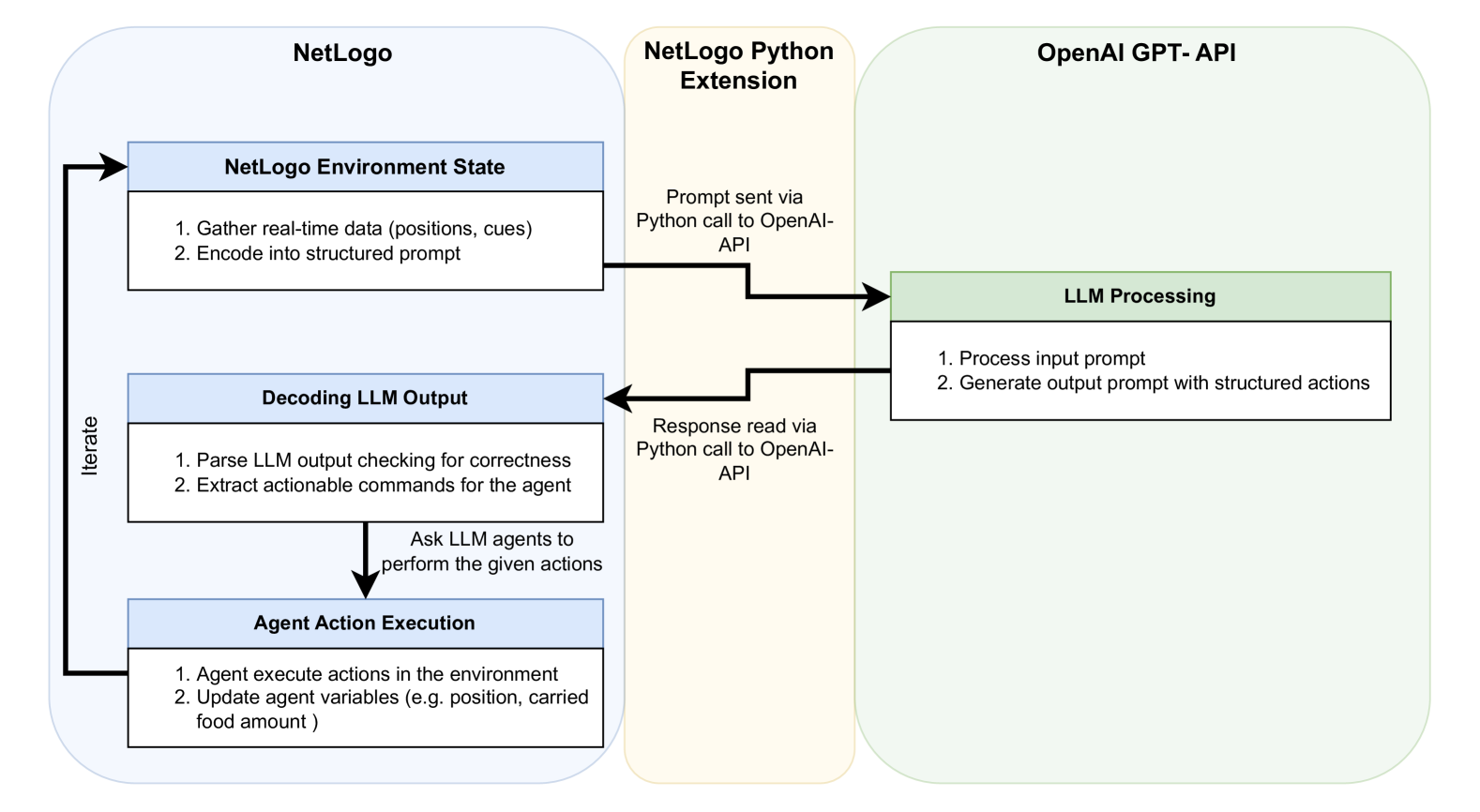

This diagram illustrates the technical workflow for integrating a large language model (LLM), accessed through OpenAI's GPT API, with the NetLogo agent-based modeling environment. A Python extension bridges the two systems and enables an iterative loop in which the LLM processes the simulation state and returns structured actions for agents to execute.

### Components

The diagram is segmented into three primary, color-coded regions arranged horizontally:

1. **Left Region (Light Blue): NetLogo**

* Contains the core simulation environment and agent logic.

2. **Center Region (Light Beige): NetLogo Python Extension**

* Acts as the communication middleware.

3. **Right Region (Light Green): OpenAI GPT-API**

* Hosts the external LLM processing.

**Detailed Component Breakdown:**

**A. NetLogo Region (Left)**

* **Component 1: NetLogo Environment State** (Top-left box)

* **Text Content:**

1. Gather real-time data (positions, cues)

2. Encode into structured prompt

* **Component 2: Decoding LLM Output** (Middle-left box)

* **Text Content:**

1. Parse LLM output checking for correctness

2. Extract actionable commands for the agent

* **Component 3: Agent Action Execution** (Bottom-left box)

* **Text Content:**

1. Agent execute actions in the environment

2. Update agent variables (e.g. position, carried food amount)

* **Flow Arrow:** A vertical arrow labeled **"Iterate"** connects the bottom of "Agent Action Execution" back to the top of "NetLogo Environment State," closing the loop.
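The two steps in "NetLogo Environment State" (gather data, encode into a structured prompt) could look roughly like the sketch below. The diagram does not specify a prompt format, so the JSON layout, the field names, and the `encode_state` helper are all assumptions made for illustration.

```python
import json


def encode_state(agent_id, position, heading, carried_food, cues):
    """Build a structured text prompt from one agent's local state.

    All field names are hypothetical; the diagram only says that
    positions and cues are gathered and then encoded.
    """
    state = {
        "agent": agent_id,
        "position": {"x": position[0], "y": position[1]},
        "heading": heading,
        "carried_food": carried_food,
        "cues": cues,
    }
    return (
        "You control one agent in a grid simulation.\n"
        "Current state (JSON):\n"
        f"{json.dumps(state, sort_keys=True)}\n"
        'Reply with JSON only, e.g. {"action": "move", "dx": 1, "dy": 0}.'
    )


# Example values are made up for the demonstration.
prompt = encode_state(7, (3, -2), 90, 0, {"food_scent": 0.4})
print(prompt)
```

Embedding the state as serialized JSON keeps the prompt machine-generated and repeatable, which makes the later decoding step easier to reason about.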

**B. NetLogo Python Extension Region (Center)**

* This region contains no internal boxes; it serves as a conduit between the other two regions. Two labeled arrows pass through it:

* **Top Arrow Label:** "Prompt sent via Python call to OpenAI-API"

* **Bottom Arrow Label:** "Response read via Python call to OpenAI-API"
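On the Python side, the two arrow labels amount to one function that sends a prompt and returns the reply text. The sketch below is an assumption about how that function might be written with the `openai` package; the function name `query_llm`, the `send` parameter, and the model name are illustrative and do not come from the diagram.

```python
def query_llm(prompt, send=None, model="gpt-4o-mini"):
    """Send one prompt and return the model's reply as plain text.

    `send` is a hypothetical injection point so the function can be
    exercised without network access; by default it calls the OpenAI API.
    """
    if send is None:
        # Imported lazily so this module loads even without the package.
        from openai import OpenAI

        client = OpenAI()  # reads OPENAI_API_KEY from the environment

        def send(text):
            response = client.chat.completions.create(
                model=model,  # assumed model name, not taken from the diagram
                messages=[{"role": "user", "content": text}],
            )
            return response.choices[0].message.content

    # The API may return None for content; normalize to an empty string.
    return (send(prompt) or "").strip()


# Offline check with a stand-in for the API call:
reply = query_llm("state: ...", send=lambda text: ' {"action": "wait"} \n')
print(reply)
```

From NetLogo, once this function is defined in the extension's Python session, a call would plausibly pass the prompt in with `py:set "prompt" ...` and read the result with `py:runresult "query_llm(prompt)"`.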

**C. OpenAI GPT-API Region (Right)**

* **Component: LLM Processing** (Single box on the right)

* **Text Content:**

1. Process input prompt

2. Generate output prompt with structured actions

### Detailed Analysis: Process Flow

The system operates in a clear, cyclical sequence:

1. **State Encoding (NetLogo):** The process begins in the NetLogo Environment State. The system gathers real-time simulation data (e.g., agent positions, environmental cues) and encodes this information into a structured text prompt.

2. **Prompt Transmission (Python Extension):** The encoded prompt is sent from NetLogo, through the Python extension, via a Python call to the OpenAI API.

3. **LLM Reasoning (OpenAI API):** The LLM Processing component receives the prompt. It processes the input and generates a response (labeled an "output prompt" in the diagram) containing structured actions for the agents.

4. **Response Retrieval (Python Extension):** The LLM's response is read back from the OpenAI API via another Python call through the extension.

5. **Output Decoding (NetLogo):** The response enters the Decoding LLM Output stage. The system parses the LLM's output, checks it for correctness, and extracts the actionable commands.

6. **Action Execution (NetLogo):** The extracted commands are passed to the Agent Action Execution stage. Agents perform the specified actions within the NetLogo environment (e.g., moving, carrying food), which updates their internal variables.

7. **Iteration:** The updated environment state is fed back into the first step, and the cycle repeats.
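The seven steps above can be condensed into a small, self-contained loop. This is a toy sketch under stated assumptions: the one-dimensional world, the JSON message format, and `stub_llm` (a deterministic stand-in for the API call) are all hypothetical and only show how the stages connect.

```python
import json


def encode(agent):
    """Step 1: serialize the agent's state into a prompt."""
    return json.dumps({"x": agent["x"], "food_at": agent["food_at"],
                       "carrying": agent["carrying"]})


def stub_llm(prompt):
    """Steps 2-4 stand-in: walk toward the food, then pick it up."""
    s = json.loads(prompt)
    if s["carrying"]:
        return '{"action": "wait"}'
    if s["x"] == s["food_at"]:
        return '{"action": "pick_up"}'
    step = 1 if s["food_at"] > s["x"] else -1
    return json.dumps({"action": "move", "dx": step})


def decode(reply):
    """Step 5: parse the reply and reject unknown actions."""
    cmd = json.loads(reply)
    if cmd.get("action") not in {"move", "pick_up", "wait"}:
        raise ValueError(f"unknown action: {cmd!r}")
    return cmd


def execute(agent, cmd):
    """Step 6: apply the command and update agent variables."""
    if cmd["action"] == "move":
        agent["x"] += cmd["dx"]
    elif cmd["action"] == "pick_up":
        agent["carrying"] = True


def run(agent, llm, ticks):
    history = []
    for _ in range(ticks):  # step 7: iterate
        cmd = decode(llm(encode(agent)))
        execute(agent, cmd)
        history.append(cmd["action"])
    return history


agent = {"x": 0, "food_at": 2, "carrying": False}
history = run(agent, stub_llm, ticks=4)
print(history, agent)
```

Passing the LLM in as a plain callable means the same loop can run against a stub during testing and against a real API wrapper later.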

### Key Observations

* **Closed-Loop System:** The diagram explicitly shows a continuous feedback loop ("Iterate"), indicating this is not a one-time query but an ongoing integration where the LLM's decisions directly influence the simulation's next state.

* **Clear Separation of Concerns:** Responsibilities are distinctly partitioned: NetLogo handles simulation and agent logic, the Python extension handles API communication, and the OpenAI API handles high-level reasoning and action planning.

* **Structured Communication:** The prompts and responses are described as "structured," implying a predefined format or schema is used to ensure the LLM's output can be reliably parsed and executed by the NetLogo agents.

* **Focus on Agent Variables:** The example given for updated variables ("position, carried food amount") suggests a foraging or resource-gathering simulation, a common use case in ecological agent-based modeling.
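The diagram does not say what the "structured" format is. As one possibility, assuming the LLM is asked to reply in JSON, the parsing and correctness check could be sketched as follows. The action names, the `Command` type, and the movement bounds are hypothetical.

```python
import json
from dataclasses import dataclass

ALLOWED_ACTIONS = {"move", "pick_up", "drop", "wait"}  # hypothetical action set


@dataclass(frozen=True)
class Command:
    action: str
    dx: int = 0
    dy: int = 0


def _is_unit_step(value):
    # bool is a subclass of int, so exclude it explicitly.
    return isinstance(value, int) and not isinstance(value, bool) and -1 <= value <= 1


def parse_command(reply):
    """Return a Command, or raise ValueError if the reply breaks the schema."""
    # Tolerate prose around the JSON by taking the outermost braces.
    start, end = reply.find("{"), reply.rfind("}")
    if start == -1 or end < start:
        raise ValueError("no JSON object in reply")
    try:
        data = json.loads(reply[start:end + 1])
    except json.JSONDecodeError as exc:
        raise ValueError(f"malformed JSON: {exc}") from exc
    action = data.get("action")
    if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
        raise ValueError(f"unknown action: {action!r}")
    dx, dy = data.get("dx", 0), data.get("dy", 0)
    if not (_is_unit_step(dx) and _is_unit_step(dy)):
        raise ValueError("dx and dy must be integers in [-1, 1]")
    return Command(action, dx, dy)


print(parse_command('Sure! {"action": "move", "dx": 1} Hope that helps.'))
```

Raising a single exception type for every failure mode lets the caller treat "could not parse" and "parsed but not allowed" the same way.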

### Interpretation

This architecture demonstrates a method for augmenting traditional agent-based models with the reasoning capabilities of modern LLMs. The LLM acts as the decision-making policy for the agents: it interprets the simulation state encoded in the prompt and chooses agent behaviors based on that context.

The **Python extension is the linchpin**: it lets two disparate systems, a specialized modeling environment (NetLogo) and a general-purpose cloud API (OpenAI), communicate. The iterative loop is fundamental because it allows the simulation to evolve based on the LLM's repeated analysis of the current state, potentially modeling more complex, adaptive, or human-like decision-making than traditional rule-based agents. The emphasis on parsing and correctness checks highlights a key challenge in such integrations: the LLM's often verbose or free-form output must be translated into valid, executable commands within the simulation's strict rules.
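The diagram does not show what happens when a reply fails the correctness check. One plausible approach, offered here only as an assumption, is to re-prompt a bounded number of times and then fall back to a safe default action. The `decide` helper and its parameters are hypothetical.

```python
import json


def decide(prompt, llm, validate, fallback, max_attempts=3):
    """Ask the LLM until `validate` accepts a reply, else return `fallback`.

    Returns the accepted command and the number of attempts used.
    """
    for attempt in range(1, max_attempts + 1):
        reply = llm(prompt)
        try:
            return validate(reply), attempt
        except ValueError as err:
            # Feed the error back so the next attempt can correct itself.
            prompt = (f"{prompt}\nYour last reply was rejected: {err}. "
                      "Reply with JSON only.")
    return fallback, max_attempts


def validate(reply):
    cmd = json.loads(reply)  # json.JSONDecodeError is a ValueError subclass
    if not isinstance(cmd, dict) or cmd.get("action") not in {"move", "wait"}:
        raise ValueError("unknown action")
    return cmd


# Scripted replies stand in for the API: one verbose answer, then a valid one.
replies = iter(["I think the agent should move east.",
                '{"action": "move", "dx": 1}'])
cmd, attempts = decide("state: ...", lambda p: next(replies),
                       validate, fallback={"action": "wait"})
print(cmd, attempts)
```

Bounding the retries keeps a single malformed reply from stalling the simulation tick, at the cost of occasionally substituting the default action.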