
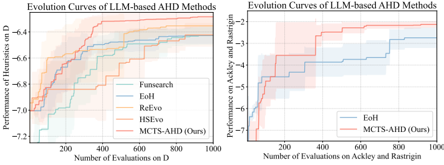

## Line Chart: Evolution Curves of LLM-based AHD Methods

### Overview

The image presents two line charts comparing several LLM-based AHD (Automatic Heuristic Design) methods. The left chart shows performance on a dataset labeled "D"; the right chart shows performance on the Ackley and Rastrigin benchmark functions. Each chart plots performance (y-axis) against the number of evaluations (x-axis), with shaded regions representing the standard deviation of the performance.
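As a rough illustration of how a curve with a standard-deviation band like this could be computed, the sketch below averages the best-so-far score over several runs. It assumes that each curve tracks the best performance found up to a given evaluation and that higher is better; the charts do not state this, and the function name `evolution_curve` and the sample scores are hypothetical.

```python
from itertools import accumulate
from statistics import mean, pstdev


def evolution_curve(runs):
    """Return (mean_curve, std_curve) of the best-so-far score per evaluation.

    `runs` is a list of equal-length score lists, one per independent run.
    Assumption: higher scores are better, so the running maximum is tracked.
    """
    # Running maximum within each run: the best score seen so far.
    best_so_far = [list(accumulate(run, max)) for run in runs]
    # Regroup so each tuple holds all runs' values at one evaluation step.
    per_step = list(zip(*best_so_far))
    mean_curve = [mean(step) for step in per_step]
    std_curve = [pstdev(step) for step in per_step]
    return mean_curve, std_curve


# Hypothetical scores from three runs (not read from the figure).
runs = [
    [-5.8, -5.2, -5.5, -4.6],
    [-5.8, -5.6, -5.0, -5.1],
    [-5.8, -5.4, -4.8, -4.9],
]
mean_curve, std_curve = evolution_curve(runs)

# The shaded band would span mean - std to mean + std at each step.
band = [(m - s, m + s) for m, s in zip(mean_curve, std_curve)]
```

Under this assumption the mean curve never decreases, which matches the rise-then-plateau shape described for every method.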

### Components/Axes

**Left Chart:**

* **Title:** Evolution Curves of LLM-based AHD Methods

* **X-axis Label:** Number of Evaluations on D

* **Y-axis Label:** Performance of Heuristics on D

* **Y-axis Scale:** -2 to -6.2

* **Legend:**

    * FunSearch (Light Blue)

    * EoH (Medium Blue)

    * ReEvo (Light Orange)

    * HSEvo (Medium Orange)

    * MCTS-AHD (Ours) (Light Red)

**Right Chart:**

* **Title:** Evolution Curves of LLM-based AHD Methods

* **X-axis Label:** Number of Evaluations on Ackley and Rastrigin

* **Y-axis Label:** Performance on Ackley and Rastrigin

* **Y-axis Scale:** -2 to -6.2

* **Legend:**

    * EoH (Blue)

    * MCTS-AHD (Ours) (Red)

### Detailed Analysis or Content Details

**Left Chart (Performance on D):**

* **FunSearch (Light Blue):** Starts around -5.8 at 0 evaluations, increases to approximately -5.2 by 100 evaluations, then plateaus around -5.1 between 400 and 1000 evaluations.

* **EoH (Medium Blue):** Starts around -5.8 at 0 evaluations, then increases steadily to approximately -5.0 by 1000 evaluations.

* **ReEvo (Light Orange):** Starts around -5.8 at 0 evaluations, increases rapidly to approximately -4.8 by 200 evaluations, then plateaus around -4.7 between 400 and 1000 evaluations.

* **HSEvo (Medium Orange):** Starts around -5.8 at 0 evaluations, increases to approximately -5.0 by 100 evaluations, then plateaus around -4.8 between 400 and 1000 evaluations.

* **MCTS-AHD (Ours) (Light Red):** Starts around -5.8 at 0 evaluations, increases rapidly to approximately -4.6 by 200 evaluations, then fluctuates between -4.6 and -4.4 between 400 and 1000 evaluations.

**Right Chart (Performance on Ackley and Rastrigin):**

* **EoH (Blue):** Starts around -5.8 at 0 evaluations, increases to approximately -5.0 by 200 evaluations, then fluctuates between -5.0 and -4.8 between 400 and 1000 evaluations.

* **MCTS-AHD (Ours) (Red):** Starts around -5.8 at 0 evaluations, increases rapidly to approximately -4.6 by 200 evaluations, then fluctuates between -4.6 and -4.4 between 400 and 1000 evaluations.
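For reference, Ackley and Rastrigin are standard continuous optimization test functions. The sketch below gives their usual textbook definitions with the common default constants. How the figure's performance score is derived from them (for example, sign or scaling) is not stated in the chart, so this is context only, not the evaluation procedure behind the curves.

```python
import math


def ackley(x):
    """Ackley function with the common constants a=20, b=0.2, c=2*pi."""
    n = len(x)
    sum_sq = sum(v * v for v in x)
    sum_cos = sum(math.cos(2 * math.pi * v) for v in x)
    return (-20.0 * math.exp(-0.2 * math.sqrt(sum_sq / n))
            - math.exp(sum_cos / n) + 20.0 + math.e)


def rastrigin(x):
    """Rastrigin function with the common constant A=10."""
    return 10.0 * len(x) + sum(
        v * v - 10.0 * math.cos(2 * math.pi * v) for v in x
    )


# Both functions have a global minimum of 0 at the origin.
origin = [0.0, 0.0]
ackley_at_origin = ackley(origin)
rastrigin_at_origin = rastrigin(origin)
```

Both functions have many local minima, which is why they are commonly used to stress-test search methods.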

### Key Observations

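* MCTS-AHD (Ours) reaches the highest performance in both charts: it outperforms FunSearch and EoH on D, and EoH on Ackley and Rastrigin (the right chart does not include FunSearch). All methods start near -5.8, and the gap opens within the first 200 evaluations.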

* ReEvo and HSEvo improve quickly on dataset D but plateau by about 400 evaluations, at roughly -4.7 and -4.8 respectively.

* The shaded regions indicate a significant degree of variance in the performance of each method.

* The performance improvement diminishes as the number of evaluations increases for all methods.

### Interpretation

The charts suggest that MCTS-AHD is more effective than the other LLM-based AHD methods shown. It gains performance fastest in both charts, which is consistent with more efficient exploration of the heuristic space. The plateaus indicate that further evaluations yield diminishing returns; they may reflect convergence to a local optimum, although the charts alone cannot confirm this. The variance shown by the shaded regions suggests that performance can be sensitive to the specific problem instance or random seed. Because only EoH and MCTS-AHD appear in the right chart, the cross-problem comparison is limited to these two methods. MCTS-AHD's lead in both charts points to some robustness across problem types.