## Histogram: First Correct Answer Emergence in Decoding Steps

### Overview

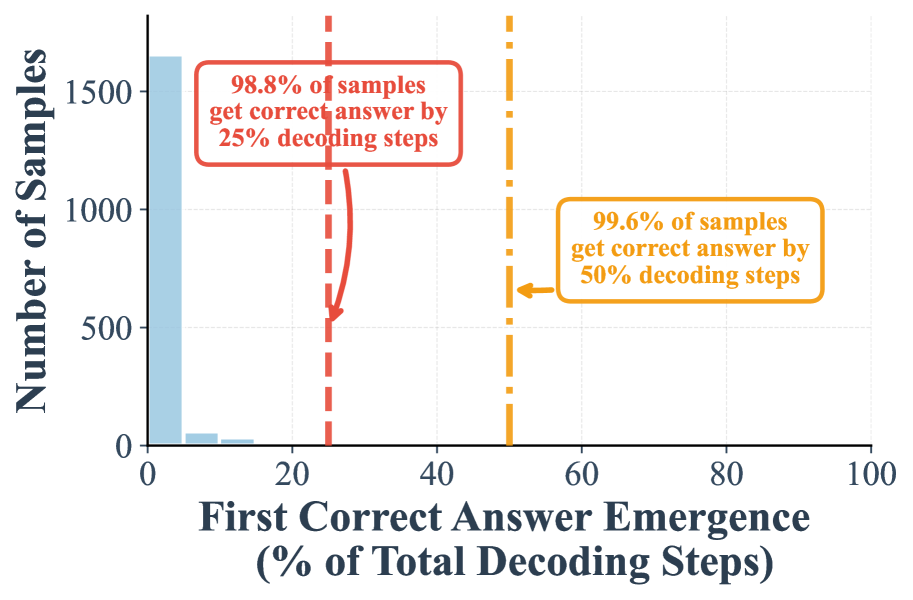

The image is a histogram showing when, during a decoding process, a model first produces a correct answer. The vast majority of samples reach a correct answer very early, and near-total correctness is achieved well before the full decoding budget is exhausted.

### Components/Axes

* **Chart Type:** Histogram.

* **X-Axis:** Titled **"First Correct Answer Emergence (% of Total Decoding Steps)"**. It is a linear scale ranging from 0 to 100, with major tick marks at 0, 20, 40, 60, 80, and 100.

* **Y-Axis:** Titled **"Number of Samples"**. It is a linear scale ranging from 0 to over 1500, with major tick marks at 0, 500, 1000, and 1500.

* **Data Series:** A single data series represented by light blue histogram bars. The distribution is heavily right-skewed.

* **Annotations:** Two vertical dashed lines with associated text boxes provide cumulative statistics.

  * **Red Dashed Line:** Positioned at approximately **25%** on the x-axis. An arrow points from a red-bordered text box to this line.

  * **Orange Dashed Line:** Positioned at approximately **50%** on the x-axis. An arrow points from an orange-bordered text box to this line.
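The x-axis metric can be made concrete with a short sketch. It assumes, hypothetically, that an answer is extracted after every decoding step and compared with the gold answer by exact string match; the chart does not say how correctness is judged, and the helper name `first_correct_emergence_pct` is illustrative only.

```python
from typing import Optional, Sequence


def first_correct_emergence_pct(
    step_answers: Sequence[Optional[str]], gold: str
) -> Optional[float]:
    """Return the % of total decoding steps at which the answer first matches `gold`.

    Hypothetical helper: step_answers[i] is the answer extracted after decoding
    step i + 1, or None if no answer can be parsed yet. Returns None if the
    answer never matches within the budget.
    """
    total = len(step_answers)
    for i, ans in enumerate(step_answers):
        if ans == gold:
            # Index i corresponds to the (i + 1)-th step out of `total` steps.
            return 100.0 * (i + 1) / total
    return None


# Four decoding steps; the answer first becomes correct at step 3.
print(first_correct_emergence_pct([None, "12", "42", "42"], "42"))  # 75.0
```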

### Detailed Analysis

* **Histogram Distribution:**

  * The tallest bar is in the **0-5%** bin, with a height of approximately **1650 samples**. This indicates the largest group of samples gets the correct answer almost immediately.

  * The second bar (5-10% bin) is significantly shorter, at approximately **100 samples**.

  * The third bar (10-15% bin) is very short, at approximately **50 samples**.

  * Bars beyond the 15% mark are negligible or not visible, showing that very few samples require more than 15% of decoding steps to first achieve a correct answer.

* **Annotation Text (Transcribed):**

  1. **Red Text Box (Top-Left):** "98.8% of samples get correct answer by 25% decoding steps"

  2. **Orange Text Box (Center-Right):** "99.6% of samples get correct answer by 50% decoding steps"
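The binning and the annotated cumulative percentages could be reproduced from per-sample emergence values roughly as follows. This sketch assumes 5%-wide bins (matching the bin edges read from the chart), records samples that never produce a correct answer as `None`, and uses all samples as the denominator for the cumulative fraction; none of this is stated in the figure, and both function names are hypothetical.

```python
from typing import List, Optional, Sequence


def emergence_histogram(
    pcts: Sequence[Optional[float]], bin_width: float = 5.0
) -> List[int]:
    """Count emergence percentages into [0, 5), [5, 10), ..., [95, 100] bins.

    Samples that never produce a correct answer (None) are not counted.
    """
    n_bins = int(round(100 / bin_width))
    counts = [0] * n_bins
    for p in pcts:
        if p is None:
            continue
        # Clamp p == 100 into the last bin so the top edge is inclusive.
        idx = min(int(p // bin_width), n_bins - 1)
        counts[idx] += 1
    return counts


def cumulative_fraction(pcts: Sequence[Optional[float]], threshold: float) -> float:
    """Fraction of all samples whose first correct answer appears at or before threshold %."""
    hits = sum(1 for p in pcts if p is not None and p <= threshold)
    return hits / len(pcts)


# Hypothetical emergence values for seven samples (the last never becomes correct).
pcts = [2.0, 3.0, 7.5, 12.0, 30.0, 100.0, None]
print(emergence_histogram(pcts)[:3])    # [2, 1, 1]
print(cumulative_fraction(pcts, 25.0))  # 4 of 7 samples
```

The same `cumulative_fraction` call at thresholds of 25.0 and 50.0 corresponds to the two annotation boxes, provided the chart uses the denominator assumed here.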

### Key Observations

1. **Extreme Early Success:** The distribution is dominated by the first bin (0-5%), showing that for the overwhelming majority of samples, the correct answer emerges at the very beginning of the decoding process.

2. **Rapid Saturation:** The cumulative statistics confirm the visual trend. By 25% of the total allowed decoding steps, 98.8% of all samples have already produced a correct answer, leaving only 1.2% unresolved at that point.

3. **Diminishing Returns:** The improvement from the 25% checkpoint to the 50% checkpoint is small (from 98.8% to 99.6%, or 0.8 percentage points), and at most 0.4% of samples remain unresolved after the 50% mark. For this dataset and model configuration, spending more than 50% of the decoding budget can therefore add correct answers for at most 0.4% of samples.

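4. **No Visible Long Tail:** No bars are visible beyond roughly 15%, yet the annotations imply that 1.2% of samples first become correct after the 25% mark, so any tail is too sparse to show at this y-axis scale. The process either succeeds very quickly or, for a tiny fraction of samples, takes much longer or does not succeed within the observed range.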
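The percentages in observations 2 and 3 follow from simple subtraction on the two annotated values; rounding to one decimal place removes floating-point noise:

```python
# Cumulative % of samples with a correct answer, read from the two annotations.
checkpoints = {25: 98.8, 50: 99.6}

unresolved_at_25 = round(100 - checkpoints[25], 1)           # still without a correct answer at 25%
gain_25_to_50 = round(checkpoints[50] - checkpoints[25], 1)  # newly resolved in the 25-50% window
max_gain_after_50 = round(100 - checkpoints[50], 1)          # upper bound on what the last 50% can add

print(unresolved_at_25, gain_25_to_50, max_gain_after_50)  # 1.2 0.8 0.4
```

The 25-50% window spends a quarter of the budget to resolve 0.8 percentage points of samples, and the final half of the budget can resolve at most 0.4 more.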

### Interpretation

This chart suggests that the decoding or generation process being evaluated is highly **efficient** for this task: in most samples, the model reaches a correct answer within its first few decoding steps.

* **Performance Implication:** The primary takeaway is that the model's first correct answer is usually an early-stage success rather than a late-stage correction. This has implications for resource allocation: decoding could potentially be truncated early (e.g., at 25-50% of the steps) with little loss in accuracy, yielding significant computational savings. Realizing these savings in practice would require a stopping rule that works without access to the ground-truth answer, since emergence in this chart is measured against known labels.

* **Underlying Behavior:** The pattern indicates that for most inputs, the model's initial reasoning or generation path is correct. The few samples that take longer (the small bars between 5-15%) might represent more complex or ambiguous cases where the model explores incorrect paths before converging on the right answer.

* **Residual Failures:** Near-total correctness (99.6%) by the 50% mark is a notable result. It suggests that, for this specific benchmark or task, failing to find a correct answer within the given decoding budget is rare. The chart does not show whether the remaining 0.4% of samples first become correct after the 50% mark or never do; those that never do may represent fundamental failures or edge cases the model cannot handle.
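The truncation trade-off can be sketched under simplifying assumptions: every sample would otherwise run the full decoding budget, each step costs the same, and emergence values are expressed as in the chart (`None` for samples that never become correct). Because an answer that first becomes correct may change again in later steps, the fraction of samples with emergence at or before the cutoff is an upper bound on accuracy when decoding stops there, not the accuracy itself. The function name `truncation_tradeoff` is hypothetical.

```python
from typing import Optional, Sequence, Tuple


def truncation_tradeoff(
    pcts: Sequence[Optional[float]], cutoff_pct: float
) -> Tuple[float, float]:
    """Return (accuracy upper bound, fraction of decoding steps saved) at a cutoff.

    pcts holds each sample's first-correct emergence as a % of total steps,
    or None if the sample never becomes correct.
    """
    # A sample can only be correct at the cutoff if it became correct by then.
    reached = sum(1 for p in pcts if p is not None and p <= cutoff_pct)
    accuracy_upper_bound = reached / len(pcts)
    # Assumes uniform per-step cost and that every sample would otherwise
    # run the full budget.
    steps_saved = 1.0 - cutoff_pct / 100.0
    return accuracy_upper_bound, steps_saved


print(truncation_tradeoff([2.0, 10.0, 40.0, None], 25.0))  # (0.5, 0.75)
```

Under the same assumptions, the chart's annotations would correspond to accuracy upper bounds of 98.8% with 75% of steps saved at a 25% cutoff, and 99.6% with 50% saved at a 50% cutoff.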