## Line Chart: Model Size vs. Vocab Accuracy for Different Tokenization Methods

### Overview

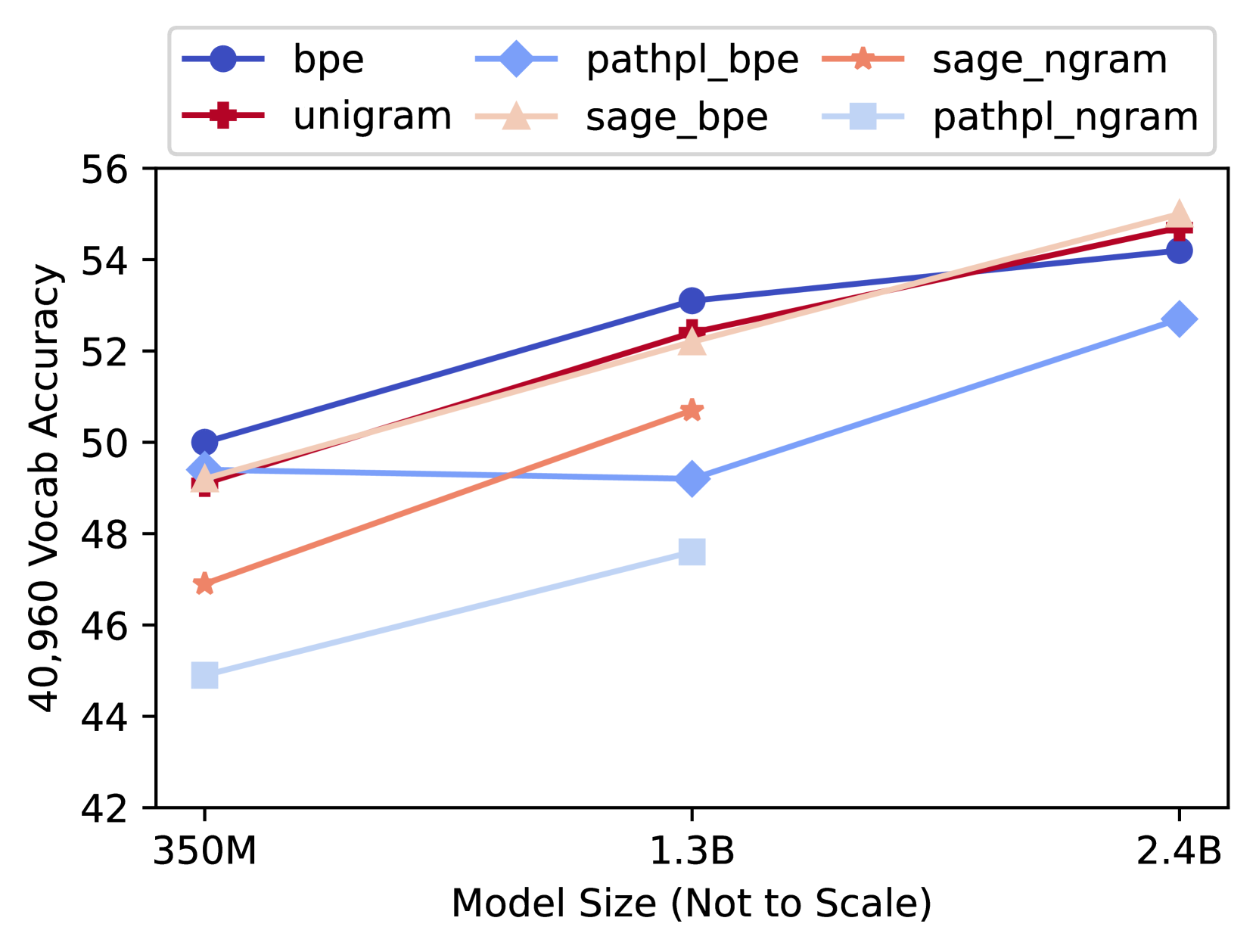

The image is a line chart comparing six tokenization or model training methods across three model sizes. The chart plots "40,960 Vocab Accuracy" on the y-axis against "Model Size (Not to Scale)" on the x-axis. Accuracy rises with model size for every method except `pathpl_bpe`, which dips slightly between 350M and 1.3B before recovering; the rate of improvement and the final accuracy differ by method.

### Components/Axes

* **Chart Type:** Multi-series line chart.

* **Y-Axis:**

  * **Label:** "40,960 Vocab Accuracy"

  * **Scale:** Linear, ranging from 42 to 56, with major tick marks every 2 units (42, 44, 46, 48, 50, 52, 54, 56).

* **X-Axis:**

  * **Label:** "Model Size (Not to Scale)"

  * **Categories/Points:** Three discrete model sizes: "350M", "1.3B", "2.4B". The axis is categorical, not numerically scaled.

* **Legend:** Positioned at the top center of the chart area. It contains six entries, each with a unique color, line style, and marker:

  1. `bpe`: Dark blue line with circle markers.

  2. `unigram`: Dark red line with square markers.

  3. `pathpl_bpe`: Light blue line with diamond markers.

  4. `sage_bpe`: Light orange/peach line with upward-pointing triangle markers.

  5. `sage_ngram`: Orange line with star (asterisk) markers.

  6. `pathpl_ngram`: Very light blue/grey line with square markers.

### Detailed Analysis

**Data Series Trends and Approximate Values:**

1. **bpe (Dark Blue, Circles):**

   * **Trend:** Upward throughout, but the slope flattens: about +3.1 from 350M to 1.3B, then about +1.1 from 1.3B to 2.4B.

   * **Values:** ~50.0 (350M) → ~53.1 (1.3B) → ~54.2 (2.4B).

2. **unigram (Dark Red, Squares):**

   * **Trend:** Strong upward slope. Slightly below `bpe` at 350M and 1.3B, then overtakes it at 2.4B.

   * **Values:** ~49.1 (350M) → ~52.5 (1.3B) → ~54.7 (2.4B).

3. **sage_bpe (Light Orange, Triangles):**

   * **Trend:** Very strong upward slope, starting near `unigram` and ending as the highest-performing method at 2.4B.

   * **Values:** ~49.2 (350M) → ~52.2 (1.3B) → ~55.0 (2.4B).

4. **sage_ngram (Orange, Stars):**

   * **Trend:** Strong upward slope (about +3.8) from a lower starting point. Data is only plotted for 350M and 1.3B; the line does not extend to 2.4B.

   * **Values:** ~46.9 (350M) → ~50.7 (1.3B). No data point for 2.4B.

5. **pathpl_bpe (Light Blue, Diamonds):**

   * **Trend:** Slight dip or plateau between 350M and 1.3B, followed by a strong increase to 2.4B.

   * **Values:** ~49.4 (350M) → ~49.2 (1.3B) → ~52.7 (2.4B).

6. **pathpl_ngram (Very Light Blue, Squares):**

   * **Trend:** Upward slope. This is the lowest-performing series at 350M and 1.3B. Data is only plotted for these two points.

   * **Values:** ~44.9 (350M) → ~47.6 (1.3B). No data point for 2.4B.
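The trends above can be checked against the values by computing the change between consecutive model sizes. The following is a minimal sketch, assuming the approximate readings listed above; the names `ACCURACY`, `step_gains`, and `GAINS` are illustrative and do not come from the chart or its source.

```python
# Approximate values read off the chart; None marks a missing data point.
SIZES = ["350M", "1.3B", "2.4B"]
ACCURACY = {
    "bpe": [50.0, 53.1, 54.2],
    "unigram": [49.1, 52.5, 54.7],
    "sage_bpe": [49.2, 52.2, 55.0],
    "sage_ngram": [46.9, 50.7, None],
    "pathpl_bpe": [49.4, 49.2, 52.7],
    "pathpl_ngram": [44.9, 47.6, None],
}


def step_gains(values):
    """Return the accuracy change between consecutive model sizes.

    A step is None when either of its two endpoints is missing.
    """
    gains = []
    for prev, curr in zip(values, values[1:]):
        if prev is None or curr is None:
            gains.append(None)
        else:
            # Round to one decimal: the readings are only that precise.
            gains.append(round(curr - prev, 1))
    return gains


GAINS = {name: step_gains(vals) for name, vals in ACCURACY.items()}

for name, gains in GAINS.items():
    print(f"{name:13s} 350M->1.3B: {gains[0]}  1.3B->2.4B: {gains[1]}")
```

Because the inputs are visual estimates, differences of a few tenths of a point (such as the `pathpl_bpe` dip) should be read as indicative rather than exact.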

### Key Observations

* **Performance Hierarchy at 350M:** `bpe` > `pathpl_bpe` ≈ `sage_bpe` ≈ `unigram` > `sage_ngram` > `pathpl_ngram`.

* **Performance Hierarchy at 1.3B:** `bpe` > `unigram` > `sage_bpe` > `sage_ngram` > `pathpl_bpe` > `pathpl_ngram`.

* **Performance Hierarchy at 2.4B:** `sage_bpe` > `unigram` > `bpe` > `pathpl_bpe`. (`sage_ngram` and `pathpl_ngram` have no data).

* **Notable Outliers/Anomalies:**

  * `pathpl_bpe` is the only method that does not increase strictly monotonically: it drops slightly (by about 0.2) when scaling from 350M to 1.3B.

  * The `sage_ngram` and `pathpl_ngram` methods have incomplete data, missing results for the largest (2.4B) model size.

  * At the largest model size (2.4B), both `sage_bpe` and `unigram` overtake the initially leading `bpe` method.
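The hierarchies and the monotonicity observation can be derived mechanically from the approximate values in the Detailed Analysis. This is a small sketch under that assumption; `ranking`, `strictly_increasing`, `RANKINGS`, and `NON_MONOTONIC` are hypothetical helper names.

```python
# Approximate values read off the chart; None marks a missing data point.
SIZES = ["350M", "1.3B", "2.4B"]
ACCURACY = {
    "bpe": [50.0, 53.1, 54.2],
    "unigram": [49.1, 52.5, 54.7],
    "sage_bpe": [49.2, 52.2, 55.0],
    "sage_ngram": [46.9, 50.7, None],
    "pathpl_bpe": [49.4, 49.2, 52.7],
    "pathpl_ngram": [44.9, 47.6, None],
}


def ranking(size_index):
    """Return the series that have data at this size, best first."""
    present = [
        (vals[size_index], name)
        for name, vals in ACCURACY.items()
        if vals[size_index] is not None
    ]
    return [name for _, name in sorted(present, reverse=True)]


def strictly_increasing(values):
    """True if each available point is higher than the previous one."""
    known = [v for v in values if v is not None]
    return all(a < b for a, b in zip(known, known[1:]))


RANKINGS = {size: ranking(i) for i, size in enumerate(SIZES)}
NON_MONOTONIC = [
    name for name, vals in ACCURACY.items() if not strictly_increasing(vals)
]

for size, names in RANKINGS.items():
    print(size, " > ".join(names))
print("Not strictly increasing:", NON_MONOTONIC)
```

Note that a strict ordering hides near-ties: at 350M, `pathpl_bpe`, `sage_bpe`, and `unigram` sit within about 0.3 points of each other, which is likely within the error of reading values off the chart.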

### Interpretation

This chart relates model scale to accuracy at a 40,960 vocabulary size for different subword tokenization or training strategies. The core finding is that **increasing model size generally improves accuracy**, while the choice of tokenization method affects both the absolute accuracy and how quickly accuracy improves with scale.

* **Method Effectiveness:** The `sage_bpe` and `unigram` methods show the strongest scaling among the methods measured at all three sizes, with `sage_bpe` achieving the highest observed accuracy (~55.0) at 2.4B parameters. The standard `bpe` method leads at 350M and 1.3B but is surpassed by both at 2.4B.

* **Scaling Inefficiency:** The `pathpl_bpe` method's dip at 1.3B suggests a potential instability or suboptimal configuration at that specific scale, though it recovers at 2.4B. The `pathpl_ngram` method consistently underperforms others at the scales where it is measured.

* **Data Gaps:** The absence of data for `sage_ngram` and `pathpl_ngram` at 2.4B prevents a full comparison at the largest size. The chart does not indicate whether this is due to experimental constraints, failure to converge, or results that were not yet available.

* **Practical Implication:** For practitioners aiming to maximize accuracy at this vocabulary size, the data suggests pairing `sage_bpe` or `unigram` tokenization with the largest model measured (2.4B parameters). Their lead over `bpe` at that size is under one point on approximate readings, so the choice between methods may also depend on factors not shown here, such as training cost, inference speed, or performance on other metrics.
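To put the 2.4B comparison in perspective, the gap between each method and the best one at that size can be computed directly. This is a rough sketch using the approximate 2.4B readings from the Detailed Analysis; `ACC_2_4B`, `BEST`, and `GAP_TO_BEST` are illustrative names only.

```python
# Approximate 2.4B readings from the chart (methods without a 2.4B point omitted).
ACC_2_4B = {
    "bpe": 54.2,
    "unigram": 54.7,
    "sage_bpe": 55.0,
    "pathpl_bpe": 52.7,
}

# Highest-scoring method at 2.4B.
BEST = max(ACC_2_4B, key=ACC_2_4B.get)

# Gap, in accuracy points, between the best method and each method.
GAP_TO_BEST = {
    name: round(ACC_2_4B[BEST] - acc, 1) for name, acc in ACC_2_4B.items()
}

for name, gap in sorted(GAP_TO_BEST.items(), key=lambda item: item[1]):
    print(f"{name:11s} {gap:.1f} points behind {BEST}")
```

The top three methods fall within roughly one point of each other, so a ranking based on these estimates alone should be treated with caution.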