## Diagram: Federated Learning System with Differential Privacy

### Overview

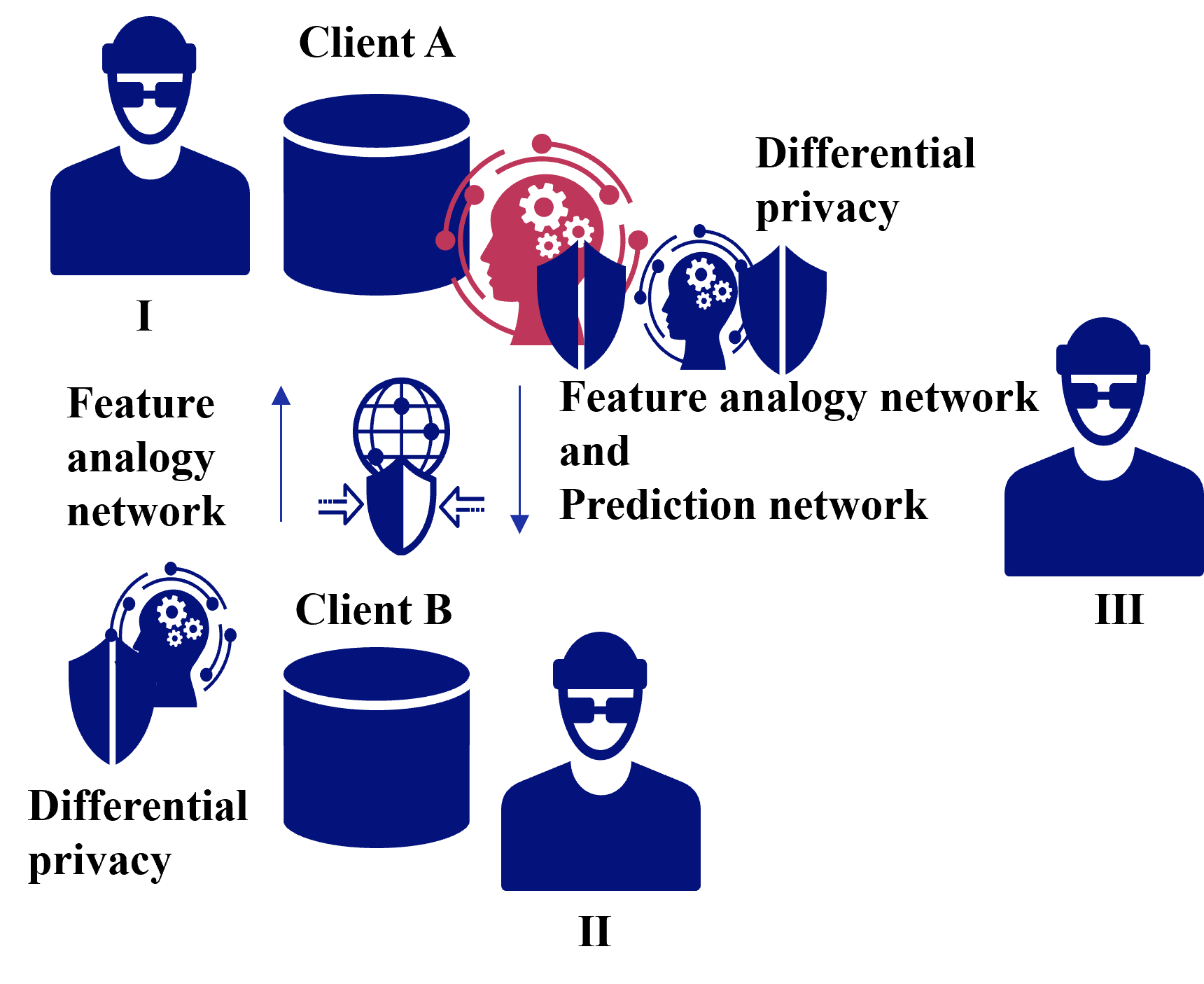

The diagram shows a federated learning architecture with three clients (Client A, Client B, and Client III) and two data containers (labeled I and II). Data passes between clients through feature analogy networks, prediction networks, and differential privacy stages. Arrows indicate data flow; shields and gears mark the privacy and processing steps.

### Components

- **Entities**:
  - Client A (I), Client B (II), Client III (III)
  - Data containers (blue cylinders) labeled I and II

- **Networks**:
  - Feature analogy network (red gears/shield)
  - Prediction network (blue shield)

- **Privacy Mechanisms**:
  - Differential privacy (blue shields with "Differential privacy" labels)

- **Flow Direction**:
  - Data moves from Client A → Feature analogy network → Prediction network → Client B (via differential privacy).
  - Data moves from Client B → Differential privacy → Feature analogy network → Prediction network → Client III.
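The two flows above can be written down as ordered stage lists and checked mechanically. The following is a minimal Python sketch, not something shown in the diagram: the stage names are transcribed from the bullets, the position of the differential privacy stage in the first flow is an assumption (the bullet only says "via differential privacy"), and `crosses_privacy_stage` and `unprotected_flows` are hypothetical helper names.

```python
# Ordered stages for each flow, transcribed from the "Flow Direction" bullets.
# Assumption: in the first flow, differential privacy sits just before Client B.
FLOWS = [
    ["Client A", "Feature analogy network", "Prediction network",
     "Differential privacy", "Client B"],
    ["Client B", "Differential privacy", "Feature analogy network",
     "Prediction network", "Client III"],
]


def crosses_privacy_stage(flow, privacy_stage="Differential privacy"):
    """Return True if a privacy stage lies strictly between the two endpoints."""
    return privacy_stage in flow[1:-1]


def unprotected_flows(flows):
    """Return every flow that reaches its destination without a privacy stage."""
    return [flow for flow in flows if not crosses_privacy_stage(flow)]


if __name__ == "__main__":
    # An empty list means every client-to-client flow passes a privacy stage.
    print(unprotected_flows(FLOWS))
```

A check like this only confirms that a privacy stage is present on each path; it says nothing about how strong the guarantee at that stage is.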

### Detailed Analysis

1. **Client A (I)**:
   - Data container (blue cylinder) labeled "Client A."
   - Outputs to a **feature analogy network** (red gears/shield), which connects to a **prediction network** (blue shield).
   - Differential privacy is applied before data reaches Client B.

2. **Client B (II)**:
   - Data container (blue cylinder) labeled "Client B."
   - Receives data from Client A’s prediction network via differential privacy.
   - Outputs to a **feature analogy network** and **prediction network**, then to Client III via differential privacy.

3. **Client III (III)**:
   - Receives processed data from Client B’s prediction network after differential privacy.

4. **Key Symbols**:
   - **Shields**: Represent differential privacy (blue) and prediction networks (blue).
   - **Gears**: Represent feature analogy networks (red).
   - **Arrows**: Indicate data flow direction.

### Key Observations

- **Chained Collaboration**: Data flows from Client A to Client B, and from Client B to Client III, through feature analogy and prediction networks, suggesting collaborative model training.

- **Privacy Emphasis**: Differential privacy is applied at each handoff between clients: on the path from Client A to Client B, and on the path from Client B to Client III.

- **Feature Alignment**: The feature analogy network (red gears) likely aligns data features between clients to improve prediction accuracy.
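The diagram does not say which differential privacy mechanism is used or what quantity it protects. As one illustration of what a shield could stand for, the sketch below assumes the Laplace mechanism applied to a clipped mean. The function `private_mean`, its parameters, and the choice of statistic are assumptions made for the example, not details taken from the diagram.

```python
import random


def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) as the difference of two exponentials with mean `scale`."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_mean(values, lower, upper, epsilon, rng=None):
    """Release the mean of `values` with epsilon-differential privacy.

    Assumptions: the number of values is public, and neighbouring datasets
    differ by replacing one value, so the clipped mean has sensitivity
    (upper - lower) / n.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    if not values:
        raise ValueError("values must be non-empty")
    if upper <= lower:
        raise ValueError("upper must be greater than lower")
    if rng is None:
        rng = random.Random()

    # Clipping bounds each record's influence on the mean.
    clipped = [min(max(v, lower), upper) for v in values]
    true_mean = sum(clipped) / len(clipped)

    sensitivity = (upper - lower) / len(clipped)
    return true_mean + laplace_noise(sensitivity / epsilon, rng)


if __name__ == "__main__":
    ages = [23.0, 35.0, 41.0, 58.0, 62.0]
    print(private_mean(ages, lower=0.0, upper=100.0, epsilon=1.0))
```

A smaller `epsilon` gives a stronger guarantee at the cost of a noisier released value, which is the utility and privacy trade-off the gears and shields allude to.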

### Interpretation

This diagram represents a **privacy-preserving federated learning system**. Clients A and B contribute without sharing raw data, and a differential privacy stage at each transfer limits what the transferred values reveal about any individual record. The feature analogy network likely maps the clients’ features into a compatible representation, and the prediction network presumably produces outputs from those features. Client III may act as a central server or as another participant in the federated system. Visually, the gears stand for processing (data utility) and the shields for protection (privacy).

**Notable Patterns**:

- Differential privacy is applied at every handoff between clients, which limits exposure of the underlying data.

- The feature analogy network acts as a bridge between disparate data sources, enabling cross-client collaboration.

- Client III’s role is ambiguous but likely involves receiving finalized, privacy-protected insights.

**Underlying Logic**:

The system prioritizes **data minimization** (only sharing necessary features) and **privacy guarantees** (differential privacy at every stage). The red gears (feature analogy network) suggest a focus on aligning heterogeneous data, while blue shields (prediction/differential privacy) emphasize secure processing.
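Because privacy stages appear at more than one handoff, it helps to recall how differential privacy guarantees combine. The diagram gives no privacy parameters, so the symbols here are assumptions: if the same data is released through $k$ mechanisms and mechanism $i$ satisfies $\varepsilon_i$-differential privacy, basic sequential composition says the combined release satisfies $\varepsilon_{\text{total}}$-differential privacy with

$$
\varepsilon_{\text{total}} = \sum_{i=1}^{k} \varepsilon_i .
$$

Further processing of an already privatized output, without touching the original data again, does not increase this bound (the post-processing property).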