
EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

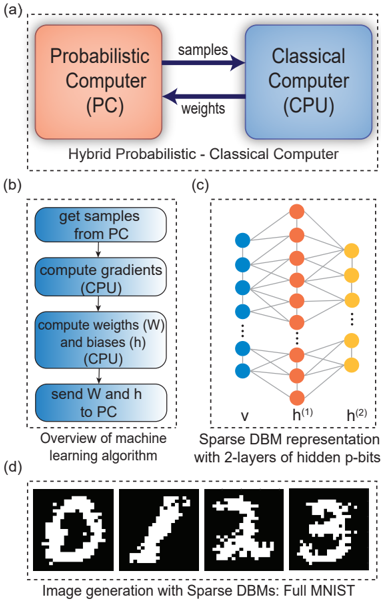

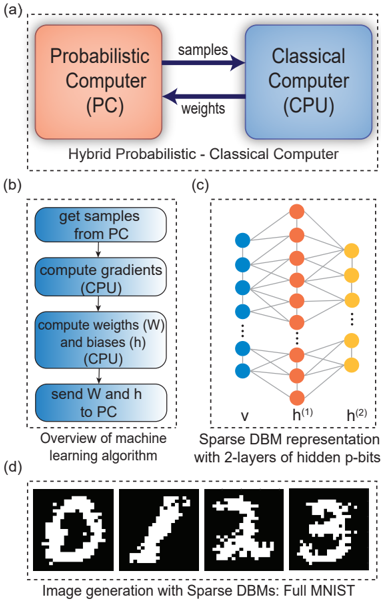

## Diagram: Hybrid Probabilistic-Classical Computer System

### Overview

The image presents a system diagram of a hybrid probabilistic-classical computer, a machine learning algorithm overview, a sparse Deep Boltzmann Machine (DBM) representation, and image generation results using sparse DBMs. It is divided into four sub-figures labeled (a), (b), (c), and (d).

### Components/Axes

* **(a) Hybrid Probabilistic - Classical Computer:**

* Two rectangular blocks represent the "Probabilistic Computer (PC)" (colored orange) and the "Classical Computer (CPU)" (colored blue).

* Arrows indicate data flow: "samples" from PC to CPU, and "weights" from CPU to PC.

* **(b) Overview of machine learning algorithm:**

* A flowchart with four rounded rectangles, each representing a step in the algorithm.

* "get samples from PC"

* "compute gradients (CPU)"

* "compute weights (W) and biases (h) (CPU)"

* "send W and h to PC"

* **(c) Sparse DBM representation with 2-layers of hidden p-bits:**

* A neural network diagram with three layers of nodes.

* Input layer 'v' (blue nodes).

* First hidden layer 'h^(1)' (orange nodes).

* Second hidden layer 'h^(2)' (yellow nodes).

* Lines connect nodes between layers, representing connections/weights.

* **(d) Image generation with Sparse DBMs: Full MNIST:**

* Four black and white images, presumably generated digits from the MNIST dataset. The digits appear to be 0, 1, 2, and 3.

### Detailed Analysis

* **(a)** The Probabilistic Computer (PC) and Classical Computer (CPU) exchange data. The PC sends samples to the CPU, and the CPU sends weights back to the PC.

* **(b)** The machine learning algorithm involves getting samples from the PC, computing gradients and weights/biases using the CPU, and sending the weights/biases back to the PC.

* **(c)** The Sparse DBM has an input layer (v) and two hidden layers (h^(1) and h^(2)). Lines join nodes in adjacent layers only. In a Boltzmann machine these couplings are undirected, so the drawing should not be read as a feedforward network.

* **(d)** The generated images are pixelated representations of digits, indicating the DBM's ability to generate images.
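The exchange described in (a) and (b) can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions, not the implementation behind the figure: `sample_pc` is a hypothetical software stand-in for the probabilistic hardware, every unit is treated as visible (so the data statistics need no clamped sampling phase, unlike a DBM with hidden layers), and the names `train_step`, `mask`, and `lr` are illustrative.

```python
import numpy as np

def sample_pc(W, h, n_samples, n_sweeps, rng):
    """Hypothetical stand-in for the PC: Gibbs-sample bipolar (+1/-1) p-bits."""
    n = len(h)
    m = rng.choice([-1.0, 1.0], size=(n_samples, n))
    for _ in range(n_sweeps):
        for i in range(n):
            I = m @ W[:, i] + h[i]                      # input to p-bit i
            r = rng.uniform(-1.0, 1.0, size=n_samples)  # fresh noise per chain
            m[:, i] = np.where(np.tanh(I) > r, 1.0, -1.0)
    return m

def train_step(W, h, data, mask, lr, rng):
    """One pass through the four steps of panel (b), fully visible case only."""
    # Step 1: get samples from the PC (here, the simulated sampler).
    model = sample_pc(W, h, n_samples=len(data), n_sweeps=5, rng=rng)
    # Step 2: compute gradients on the CPU (data statistics minus model statistics).
    grad_W = data.T @ data / len(data) - model.T @ model / len(model)
    grad_h = data.mean(axis=0) - model.mean(axis=0)
    # Step 3: compute new weights W and biases h; the mask keeps absent couplings at zero.
    W = W + lr * grad_W * mask
    h = h + lr * grad_h
    # Step 4: "send W and h to PC" is modelled by returning them to the caller.
    return W, h
```

Calling `train_step` repeatedly with a symmetric, zero-diagonal `mask` keeps `W` symmetric and free of self-couplings, which is what the sampler above assumes.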

### Key Observations

* The system combines probabilistic and classical computing elements.

* The machine learning algorithm is iterative, involving data exchange between the PC and CPU.

* The DBM architecture has two hidden layers.

* The generated images are recognizable as digits, demonstrating the model's learning capability.

### Interpretation

The diagram illustrates a hybrid computing system where a probabilistic computer and a classical computer work together to perform machine learning tasks. The probabilistic computer likely provides samples for training, while the classical computer handles the computationally intensive tasks of gradient calculation and weight updates. The sparse DBM is used for image generation, and the results show that the model can learn to generate recognizable digits. The system leverages the strengths of both probabilistic and classical computing to achieve its goals.


EXPERT: gemini-2.5-flash-free VERSION 1

RUNTIME: google-free/gemini-2.5-flash

INTEL_VERIFIED

## Diagram: Hybrid Probabilistic-Classical Computing System for Machine Learning

### Overview

This image is a multi-panel technical diagram illustrating a hybrid computing architecture involving probabilistic and classical computers, an overview of a machine learning algorithm designed for this architecture, the representation of a Sparse Deep Boltzmann Machine (DBM), and examples of image generation using this approach. The figure is divided into four sub-panels labeled (a), (b), (c), and (d), each presenting a distinct aspect of the system.

### Components/Axes

**Panel (a): Hybrid Probabilistic - Classical Computer**

* **Left Component (Orange Box)**: Labeled "Probabilistic Computer (PC)".

* **Right Component (Blue Box)**: Labeled "Classical Computer (CPU)".

* **Data Flow Arrows**:

* An arrow pointing from "Probabilistic Computer (PC)" to "Classical Computer (CPU)" is labeled "samples".

* An arrow pointing from "Classical Computer (CPU)" to "Probabilistic Computer (PC)" is labeled "weights".

* **Overall Title (Bottom-Center)**: "Hybrid Probabilistic - Classical Computer".

**Panel (b): Overview of machine learning algorithm**

* This is a vertical flowchart with four rectangular, rounded-corner steps, colored in a gradient from light blue at the top to a slightly darker blue at the bottom.

* **Step 1 (Top)**: "get samples from PC"

* **Step 2**: "compute gradients (CPU)"

* **Step 3**: "compute weights (W) and biases (h) (CPU)"

* **Step 4 (Bottom)**: "send W and h to PC"

* **Flow Direction**: Downward arrows connect each step, indicating sequential execution.

* **Overall Title (Bottom-Center)**: "Overview of machine learning algorithm".

**Panel (c): Sparse DBM representation with 2-layers of hidden p-bits**

* This diagram represents a neural network-like structure with three vertical layers of nodes.

* **Layer 1 (Left)**: Consists of blue circular nodes. There are 5 visible nodes at the top and 3 visible nodes at the bottom, separated by a vertical ellipsis (...), indicating many more nodes. This layer is labeled "v" at the bottom.

* **Layer 2 (Middle)**: Consists of orange circular nodes. There are 5 visible nodes at the top and 3 visible nodes at the bottom, separated by a vertical ellipsis (...), indicating many more nodes. This layer is labeled "h^(1)" at the bottom.

* **Layer 3 (Right)**: Consists of yellow circular nodes. There are 3 visible nodes at the top and 3 visible nodes at the bottom, separated by a vertical ellipsis (...), indicating many more nodes. This layer is labeled "h^(2)" at the bottom.

* **Connections**: Lines connect nodes between adjacent layers (Layer 1 to Layer 2, and Layer 2 to Layer 3), indicating weighted connections. The connections appear to be sparse, as not every node in one layer is connected to every node in the next.

* **Overall Title (Bottom-Center)**: "Sparse DBM representation with 2-layers of hidden p-bits".
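The layer structure in panel (c) can be expressed as a connectivity mask over one combined weight matrix. The sketch below is illustrative only: the function name `layered_sparse_mask`, the `density` parameter, and the random thinning are assumptions, since the figure does not say how the sparse pattern is chosen.

```python
import numpy as np

def layered_sparse_mask(n_v, n_h1, n_h2, density, rng):
    """Symmetric 0/1 mask that allows only v-h1 and h1-h2 couplings.

    The random thinning is a placeholder; the figure does not specify
    the actual sparsity pattern.
    """
    n = n_v + n_h1 + n_h2
    mask = np.zeros((n, n))
    v = slice(0, n_v)
    h1 = slice(n_v, n_v + n_h1)
    h2 = slice(n_v + n_h1, n)
    # Keep each allowed coupling with probability `density`.
    mask[v, h1] = rng.random((n_v, n_h1)) < density
    mask[h1, h2] = rng.random((n_h1, n_h2)) < density
    # Couplings are undirected, so mirror the upper blocks into the lower ones.
    return mask + mask.T
```

With this layout there are no couplings inside a layer and none directly between `v` and `h^(2)`, matching the adjacent-layer connections described above.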

**Panel (d): Image generation with Sparse DBMs: Full MNIST**

* This panel displays four distinct black-and-white pixelated images arranged horizontally. Each image is a representation of a handwritten digit.

* **Image 1 (Left)**: Appears to be the digit "0".

* **Image 2**: Appears to be the digit "1".

* **Image 3**: Appears to be the digit "2".

* **Image 4 (Right)**: Appears to be the digit "3".

* **Overall Title (Bottom-Center)**: "Image generation with Sparse DBMs: Full MNIST".

### Detailed Analysis

**Panel (a):** This diagram illustrates a feedback loop between a "Probabilistic Computer (PC)" and a "Classical Computer (CPU)". The PC generates "samples" which are sent to the CPU. The CPU then processes these samples to compute "weights", which are subsequently sent back to the PC. This suggests an iterative process where the PC acts as a sampler or generator, and the CPU performs optimization or learning based on the PC's output.

**Panel (b):** This flowchart details the steps of a machine learning algorithm executed within the hybrid computing framework.

1. The process begins by "getting samples from PC", indicating the PC's role in providing data.

2. Next, the "CPU" computes "gradients", which are essential for optimizing machine learning models.

3. Following gradient computation, the "CPU" calculates "weights (W) and biases (h)", which are the parameters of the machine learning model.

4. Finally, these updated "W and h" parameters are "sent to PC", closing the loop and updating the probabilistic computer's state or model.

This sequence describes a typical iterative training process for a machine learning model, where the PC handles the probabilistic sampling aspect and the CPU handles the deterministic optimization.
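The figure does not give the formulas behind steps 2 and 3. For reference, the standard Boltzmann machine learning rule, which this kind of loop usually implements, is shown below; the symbols $m_i$ (state of unit $i$) and $\varepsilon$ (learning rate) are notation assumed here, not taken from the figure.

```latex
\Delta W_{ij} = \varepsilon \left( \langle m_i m_j \rangle_{\text{data}} - \langle m_i m_j \rangle_{\text{model}} \right),
\qquad
\Delta h_i = \varepsilon \left( \langle m_i \rangle_{\text{data}} - \langle m_i \rangle_{\text{model}} \right)
```

The model averages are the quantities that would be estimated from the samples obtained in step 1, while the data averages come from the training set.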

**Panel (c):** This diagram visualizes a "Sparse DBM (Deep Boltzmann Machine)" with two hidden layers.

* The "v" layer (visible layer, blue nodes) represents the input or observable data.

* The "h^(1)" layer (first hidden layer, orange nodes) and "h^(2)" layer (second hidden layer, yellow nodes) represent increasingly abstract features or representations learned by the model.

* The "p-bits" in the title likely refer to probabilistic bits, indicating that the nodes in this DBM operate probabilistically, consistent with the "Probabilistic Computer" in panel (a). The "sparse" nature implies that not all nodes are fully connected, which can be beneficial for efficiency or learning specific types of representations.
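For readers unfamiliar with p-bits, the update rule commonly used in the p-bit literature is given below. It is not stated in the figure, so treat it as background under assumed notation: $m_i \in \{-1, +1\}$ is the state of p-bit $i$, $I_i$ its input, $\beta$ an inverse temperature, and $r_i$ a uniform random number.

```latex
I_i = \sum_j W_{ij}\, m_j + h_i,
\qquad
m_i = \operatorname{sgn}\!\left( \tanh(\beta I_i) - r_i \right),
\quad r_i \sim \mathcal{U}(-1, 1)
```

Under this rule $P(m_i = +1) = \tfrac{1}{2}\left(1 + \tanh(\beta I_i)\right)$, so each p-bit fluctuates between its two states with a bias set by its neighbours and its own bias term.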

**Panel (d):** This panel showcases the output of the system: "Image generation with Sparse DBMs". The four images are pixelated handwritten digits (0, 1, 2, 3). The caption "Full MNIST" presumably indicates that the model was trained on the full MNIST dataset, so the images are samples generated by the Sparse DBM rather than examples taken from the dataset. This demonstrates that the hybrid system can generate recognizable (albeit pixelated) images, a common task for generative machine learning models, and the legibility of the digits suggests that the model has learned the underlying data distribution reasonably well.

### Key Observations

* The entire system is designed around a feedback loop between a probabilistic and a classical computer.

* The classical computer (CPU) is responsible for the computational heavy lifting of gradient calculation and parameter updates (weights and biases).

* The probabilistic computer (PC) is responsible for generating samples and likely holding the probabilistic model parameters (W and h).

* The machine learning model used is a "Sparse DBM with 2-layers of hidden p-bits," indicating a deep generative model.

* The application demonstrated is image generation, specifically for handwritten digits from the MNIST dataset.

### Interpretation

This technical document describes a novel approach to machine learning by leveraging a "Hybrid Probabilistic - Classical Computer" architecture. The core idea is to offload the probabilistic sampling aspects of a Deep Boltzmann Machine (DBM) to a specialized "Probabilistic Computer (PC)", while the computationally intensive gradient calculations and parameter updates (weights and biases) are handled by a "Classical Computer (CPU)".

The "Overview of machine learning algorithm" (b) clearly outlines the iterative training process: the PC provides samples, the CPU computes gradients and updates model parameters (W and h), and these updated parameters are then sent back to the PC to refine its probabilistic model. This suggests that the PC might be a hardware accelerator or a specialized device designed for efficient sampling from complex probability distributions, which is often a bottleneck in training DBMs.

The "Sparse DBM representation" (c) indicates the specific type of neural network being trained. DBMs are generative models capable of learning complex data distributions. The "2-layers of hidden p-bits" imply a deep architecture where 'p-bits' are likely physical or simulated probabilistic bits, aligning with the "Probabilistic Computer" concept. The sparsity in connections could be a design choice for efficiency, regularization, or to model specific types of data structures.

Finally, the "Image generation with Sparse DBMs: Full MNIST" (d) serves as a proof-of-concept, demonstrating the system's ability to learn and generate images of handwritten digits. The MNIST dataset is a standard benchmark for image recognition and generation tasks. The successful generation of recognizable digits (0, 1, 2, 3) implies that the hybrid system effectively learns the underlying patterns of the dataset.

In essence, this document proposes a synergistic computing paradigm where the strengths of probabilistic hardware (for sampling) are combined with the strengths of classical hardware (for optimization) to efficiently train and utilize complex generative models like Sparse DBMs, particularly for tasks such as image generation. This approach could potentially overcome computational limitations faced by purely classical systems when dealing with highly probabilistic or quantum-inspired machine learning models.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Diagram: Hybrid Probabilistic-Classical Computing System

### Overview

The image presents a diagram illustrating a hybrid computing system combining a Probabilistic Computer (PC) and a Classical Computer (CPU). It details the interaction between these two components, the machine learning algorithm overview, a sparse Deep Boltzmann Machine (DBM) representation, and examples of image generation using the system. The image is divided into four sub-diagrams labeled (a), (b), (c), and (d).

### Components/Axes

* **(a) Hybrid Probabilistic-Classical Computer:** Shows the interaction between a "Probabilistic Computer (PC)" and a "Classical Computer (CPU)". The PC sends "samples" to the CPU, and the CPU sends "weights" back to the PC.

* **(b) Overview of Machine Learning Algorithm:** A flowchart outlining the steps of the machine learning algorithm.

* **(c) Sparse DBM Representation:** A diagram of a neural network structure with labeled layers.

* **(d) Image Generation with Sparse DBMs:** Displays four generated images of digits.

* **Labels in (c):** "v" (bottom layer), "h<sup>(1)</sup>" (middle layer), "h<sup>(2)</sup>" (top layer).

### Detailed Analysis or Content Details

**(a) Hybrid Probabilistic-Classical Computer:**

* The Probabilistic Computer (PC) is represented by a salmon-colored rectangle in the top-left.

* The Classical Computer (CPU) is represented by a light-blue rectangle in the top-right.

* An arrow labeled "samples" points from the PC to the CPU.

* An arrow labeled "weights" points from the CPU to the PC.

* Text below the rectangles reads: "Hybrid Probabilistic - Classical Computer".

**(b) Overview of Machine Learning Algorithm:**

* This section is a flowchart within a light-blue rectangle.

* Step 1: "get samples from PC" (top rectangle).

* Step 2: "compute gradients (CPU)" (middle-top rectangle).

* Step 3: "compute weights (W) and biases (h) (CPU)" (middle-bottom rectangle).

* Step 4: "send W and h to PC" (bottom rectangle).

* Text below the flowchart reads: "Overview of machine learning algorithm".

**(c) Sparse DBM Representation:**

* This section depicts a neural network.

* The bottom layer ("v") consists of approximately 8 blue nodes.

* The middle layer ("h<sup>(1)</sup>") consists of approximately 6 orange nodes.

* The top layer ("h<sup>(2)</sup>") consists of approximately 6 orange nodes.

* Connections between layers are represented by dotted lines.

**(d) Image Generation with Sparse DBMs:**

* This section displays four generated images.

* Image 1: Digit "0".

* Image 2: Digit "7".

* Image 3: Digit "2".

* Image 4: Digit "3".

* Text below the images reads: "Image generation with Sparse DBMs: Full MNIST".

### Key Observations

* The system utilizes a hybrid approach, leveraging the strengths of both probabilistic and classical computing.

* The machine learning algorithm involves iterative sampling, gradient computation, and weight/bias updates.

* The DBM architecture consists of multiple layers of hidden units.

* The system is capable of generating images resembling handwritten digits from the MNIST dataset.

### Interpretation

The diagram illustrates a novel computing paradigm that combines the advantages of probabilistic and classical computation. The probabilistic computer likely generates samples, which are then used by the classical computer to compute gradients and update weights. This iterative process allows the system to learn and generate complex data, such as images of handwritten digits. The use of a sparse DBM suggests an attempt to reduce computational complexity and improve generalization performance. The MNIST dataset is a standard benchmark for image recognition and generation, indicating that the system is being evaluated on a well-established task. The overall architecture suggests a potential for developing more efficient and powerful machine learning algorithms. The flow of information is clearly defined, starting with the PC generating samples, moving to the CPU for processing, and then back to the PC with updated weights. This cyclical process is central to the machine learning algorithm. The diagram doesn't provide quantitative data, but rather a conceptual overview of the system's architecture and functionality.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## [Diagram Composite]: Hybrid Probabilistic-Classical Computing System for Sparse DBM Machine Learning

### Overview

The image is a composite technical figure containing four distinct but related diagrams labeled (a), (b), (c), and (d). It illustrates the architecture, algorithmic workflow, network structure, and output of a machine learning system that combines a Probabilistic Computer (PC) with a Classical Computer (CPU) to train and run a Sparse Deep Boltzmann Machine (DBM) for image generation.

### Components/Axes

The figure is divided into four dashed-line boxes, each with a caption:

* **(a) Top:** A block diagram titled "Hybrid Probabilistic - Classical Computer".

* **(b) Bottom Left:** A flowchart titled "Overview of machine learning algorithm".

* **(c) Bottom Right:** A neural network diagram titled "Sparse DBM representation with 2-layers of hidden p-bits".

* **(d) Bottom:** A series of images titled "Image generation with Sparse DBMs: Full MNIST".

### Detailed Analysis

**Part (a): Hybrid Computer Architecture**

* **Components:** Two main blocks.

* Left block (orange): Labeled "Probabilistic Computer (PC)".

* Right block (blue): Labeled "Classical Computer (CPU)".

* **Data Flow:** Two arrows indicate bidirectional communication.

* Arrow from PC to CPU: Labeled "samples".

* Arrow from CPU to PC: Labeled "weights".

**Part (b): Machine Learning Algorithm Flowchart**

* **Process Steps (in sequence, top to bottom):**

1. "get samples from PC" (Blue rounded rectangle)

2. "compute gradients (CPU)" (Blue rounded rectangle)

3. "compute weights (W) and biases (h) (CPU)" (Blue rounded rectangle)

4. "send W and h to PC" (Blue rounded rectangle)

* **Flow:** Vertical arrows connect the steps in the order listed above.

**Part (c): Sparse DBM Network Diagram**

* **Structure:** A three-layer neural network.

* **Leftmost Layer (Input/Visible):** Labeled "v". Consists of 5 blue circles (nodes).

* **Middle Layer (Hidden 1):** Labeled "h⁽¹⁾". Consists of 5 orange circles.

* **Rightmost Layer (Hidden 2):** Labeled "h⁽²⁾". Consists of 3 yellow circles.

* **Connections:** Lines connect nodes between adjacent layers. The connections appear dense between `v` and `h⁽¹⁾`, and sparser between `h⁽¹⁾` and `h⁽²⁾`, illustrating the "Sparse" property. Vertical ellipses (`...`) between nodes in layers `v` and `h⁽¹⁾` indicate these are representative subsets of a larger layer.

**Part (d): Generated Image Outputs**

* **Content:** Four black-and-white, pixelated images resembling handwritten digits.

* Image 1: Resembles a "0".

* Image 2: Resembles a "1".

* Image 3: Resembles a "2".

* Image 4: Resembles a "3".

* **Style:** The images are noisy and imperfect, characteristic of early-stage or generative model outputs.

### Key Observations

1. **Clear Division of Labor:** The system explicitly separates probabilistic sampling (PC) from deterministic gradient and weight calculations (CPU).

2. **Algorithmic Loop:** The flowchart in (b) defines a clear iterative training loop: sample -> compute gradients -> update parameters -> send new parameters back to the probabilistic hardware.

3. **Network Sparsity:** The diagram in (c) visually emphasizes a sparse connectivity pattern, particularly in the second hidden layer (`h⁽²⁾`), which has fewer nodes than the previous layers.

4. **Functional Demonstration:** Part (d) provides empirical evidence that the described hybrid system and Sparse DBM architecture can generate recognizable, albeit low-fidelity, images from the MNIST dataset.

### Interpretation

This composite figure presents a complete pipeline for a novel computing paradigm. It argues for the efficiency of using specialized probabilistic hardware (PC) for the sampling-intensive tasks inherent in training certain types of generative models (like DBMs), while leveraging conventional CPUs for the well-defined mathematical operations of gradient descent and parameter updates.

The **relationship between elements** is sequential and functional: (a) defines the hardware architecture, (b) details the software algorithm that runs on it, (c) specifies the model structure being trained, and (d) shows the tangible result of that training. The "Sparse" aspect of the DBM, highlighted in (c), is likely a key innovation aimed at reducing computational and memory overhead, making the hybrid approach more feasible.

The **notable trend** is the movement from abstract architecture (a) to concrete output (d). The **anomaly or point of interest** is the quality of the generated digits in (d); while recognizable, they are coarse, suggesting the system may be a proof-of-concept or that the sparsity constraint trades off some generative fidelity for efficiency. The figure collectively makes a case for heterogeneous computing architectures tailored to specific machine learning workloads.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Diagram: Hybrid Probabilistic-Classical Computer System for Machine Learning

### Overview

The image illustrates a hybrid probabilistic-classical computing architecture for machine learning, combining probabilistic sampling (PC) with classical computation (CPU). It includes workflows for gradient computation, weight optimization, and sparse DBM (Deep Boltzmann Machine) representations, culminating in image generation from the MNIST dataset.

---

### Components/Axes

#### (a) Hybrid System Architecture

- **Components**:

- **Probabilistic Computer (PC)**: Orange box labeled "Probabilistic Computer (PC)".

- **Classical Computer (CPU)**: Blue box labeled "Classical Computer (CPU)".

- **Data Flow**:

- **Samples**: Arrows from PC to CPU (labeled "samples").

- **Weights**: Arrows from CPU to PC (labeled "weights").

- **Label**: "Hybrid Probabilistic - Classical Computer".

#### (b) Machine Learning Workflow

- **Steps** (blue rounded rectangles):

1. **Get samples from PC**.

2. **Compute gradients (CPU)**.

3. **Compute weights (W) and biases (h) (CPU)**.

4. **Send W and h to PC**.

- **Label**: "Overview of machine learning algorithm".

#### (c) Sparse DBM Representation

- **Structure**:

- **Nodes**:

- **Input Layer**: Blue nodes labeled "v".

- **Hidden Layers**: Orange nodes labeled "h^(1)" and yellow nodes labeled "h^(2)". There is no separate output layer.

- **Connections**: Between adjacent layers only (dashed lines); sparse rather than fully connected.

- **Label**: "Sparse DBM representation with 2-layers of hidden p-bits".

#### (d) Image Generation

- **Examples**: Four black-and-white pixelated digits (0, 1, 2, 3).

- **Caption**: "Image generation with Sparse DBMs: Full MNIST".

---

### Detailed Analysis

#### (a) Hybrid System

- The PC generates probabilistic samples, which are processed by the CPU to compute gradients and update weights/biases. These updated parameters are then fed back to the PC, creating an iterative optimization loop.

#### (b) Workflow

- The algorithm alternates between probabilistic sampling (PC) and classical optimization (CPU), typical of contrastive divergence or Gibbs sampling in DBMs.

#### (c) Sparse DBM

- The model has two layers of hidden p-bits. Its sparse connectivity reduces the number of couplings each p-bit has to evaluate compared with a fully connected DBM. Each line (dashed in the figure) denotes a probabilistic dependency between the two nodes it joins.

#### (d) MNIST Generation

- The generated digits show that the system can produce recognizable handwritten numerals, which supports the feasibility of the hybrid approach.

---

### Key Observations

1. **Bidirectional Data Flow**: The PC and CPU collaborate iteratively, with samples flowing to the CPU for gradient computation and updated parameters returning to the PC.

2. **Sparse Connectivity**: The DBM's sparse representation (orange/blue/yellow nodes) reduces memory and computational requirements.

3. **MNIST Validation**: The generated digits (0-3) confirm the system's capability for image synthesis.

---

### Interpretation

This hybrid architecture leverages the strengths of probabilistic sampling (exploration of high-dimensional spaces) and classical optimization (efficient gradient descent). The sparse DBM representation balances model complexity and computational efficiency, which is what makes it practical to map onto hybrid hardware. The MNIST results suggest the approach works on a standard benchmark; scaling to larger images or datasets would likely require further work on the sparse connectivity and the sampling strategy, which the figure does not address.
