
## Diagram: Privacy Layer for LLM/Gen AI Tools

### Overview

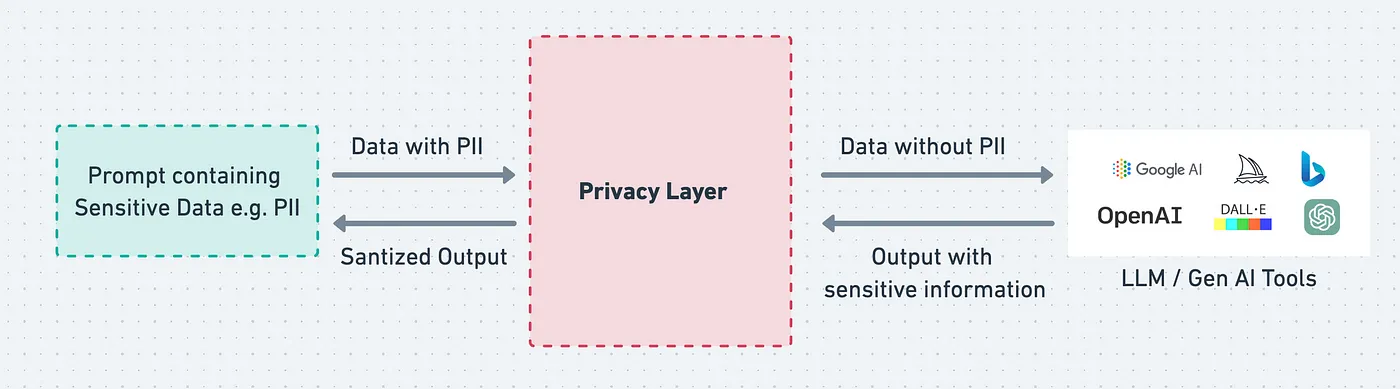

This diagram illustrates a privacy layer designed to protect Personally Identifiable Information (PII) when interacting with Large Language Models (LLMs) and Generative AI tools. It depicts the flow of data, both with and without PII, through this layer, and highlights the sanitization process.

### Components

The diagram consists of three main sections:

1. **Input Section (Left):** Labeled "Prompt containing Sensitive Data e.g. PII".

2. **Privacy Layer (Center):** A large, rectangular block labeled "Privacy Layer".

3. **Output Section (Right):** Labeled "LLM / Gen AI Tools" and includes logos for Google AI, OpenAI, DALL-E, and Microsoft Azure AI.

There are two data flows depicted:

* **Forward Flow:** "Data with PII" enters the Privacy Layer from the Input Section. "Data without PII" exits the Privacy Layer towards the LLM/Gen AI Tools.

* **Reverse Flow:** "Sanitized Output" returns from the LLM/Gen AI Tools and enters the Privacy Layer. "Output with sensitive information" exits the Privacy Layer toward the source of the prompt.

Arrows indicate the direction of data flow.

### Detailed Analysis

The diagram shows a round trip that begins when a prompt containing sensitive data (PII) is submitted. The prompt enters the "Privacy Layer" as "Data with PII", and the layer forwards a sanitized version:

1. **Data without PII:** The sanitized prompt that is sent to the LLM/Gen AI Tools.

The LLM/Gen AI Tools then generate a response from the sanitized prompt. This response travels back through the Privacy Layer in two stages:

1. **Sanitized Output:** The response as it enters the Privacy Layer from the LLM/Gen AI Tools.

2. **Output with sensitive information:** The response as it exits the Privacy Layer toward the original prompt source. The label suggests that the layer restores the sensitive values at this stage, although the diagram does not say so explicitly.
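The round trip described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the diagram does not specify a mechanism, so the placeholder scheme, the two regular expressions, and the names `sanitize`, `restore`, `privacy_layer`, and `echo_model` are all hypothetical.

```python
import re
from typing import Callable, Dict, Tuple

# Hypothetical patterns for illustration; real PII detection needs far more coverage.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def sanitize(prompt: str) -> Tuple[str, Dict[str, str]]:
    """Forward flow: swap each detected PII value for a placeholder."""
    mapping: Dict[str, str] = {}
    sanitized = prompt
    for label, pattern in PII_PATTERNS.items():
        def substitute(match: re.Match, label: str = label) -> str:
            placeholder = f"[{label}_{len(mapping) + 1}]"
            mapping[placeholder] = match.group(0)  # remember the original value
            return placeholder
        sanitized = pattern.sub(substitute, sanitized)
    return sanitized, mapping


def restore(output: str, mapping: Dict[str, str]) -> str:
    """Reverse flow: put the original values back into the model output."""
    for placeholder, original in mapping.items():
        output = output.replace(placeholder, original)
    return output


def privacy_layer(prompt: str, model: Callable[[str], str]) -> str:
    """Run one round trip: sanitize, call the model, restore."""
    sanitized_prompt, mapping = sanitize(prompt)   # "Data without PII"
    sanitized_output = model(sanitized_prompt)     # "Sanitized Output"
    return restore(sanitized_output, mapping)      # "Output with sensitive information"


def echo_model(prompt: str) -> str:
    """Stand-in for an LLM call; it only ever sees the sanitized prompt."""
    return f"Reply to: {prompt}"
```

Calling `privacy_layer("Email alice@example.com about the invoice", echo_model)` would pass only the placeholder text to `echo_model`, and the address reappears in the string returned to the caller. The mapping lives inside the layer for the duration of one call, which is one possible reading of how the return path regains the sensitive values.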

The logos in the Output Section are:

* **Google AI:** Represented by a multi-colored logo.

* **OpenAI:** Represented by a green logo.

* **DALL-E:** Represented by a rainbow-colored logo.

* **Microsoft Azure AI:** Represented by a blue logo.

### Key Observations

The diagram emphasizes the importance of a privacy layer in protecting sensitive data when using LLMs and Gen AI tools. It highlights the two-way flow of data and the sanitization process that occurs within the Privacy Layer. The inclusion of logos from major AI providers suggests this is a general architecture applicable to various platforms.

### Interpretation

This diagram illustrates an architectural pattern for responsible AI development. The "Privacy Layer" acts as a gatekeeper, preventing the direct exposure of PII to LLMs and Gen AI tools. The two-way flow shows that responses also pass through the layer before they reach the user. On the way in, the layer performs input sanitization (removing PII from prompts). On the way out, the labels "Sanitized Output" and "Output with sensitive information" suggest that the layer re-inserts the sensitive values into the generated response rather than filtering them out, though the diagram does not state this explicitly.
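The gatekeeper role can be sketched as a guard that wraps any model client and refuses to forward a prompt that still looks like it contains PII. The diagram shows nothing of the kind in detail, so this is an assumption-laden illustration: `guarded`, `PIILeakError`, and the single e-mail pattern are hypothetical stand-ins for a real detector.

```python
import re
from typing import Callable

# Hypothetical detector: one e-mail pattern stands in for a real PII detector.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


class PIILeakError(ValueError):
    """Raised when text that still looks like PII is about to leave the layer."""


def guarded(model: Callable[[str], str]) -> Callable[[str], str]:
    """Wrap a model client so it refuses prompts with detectable PII."""
    def call(prompt: str) -> str:
        if EMAIL.search(prompt):
            raise PIILeakError("prompt still contains an e-mail address")
        return model(prompt)
    return call
```

Because the wrapper only needs a callable that maps a string to a string, the same guard could sit in front of any provider's client, which matches the provider-neutral framing of the diagram.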

The presence of multiple LLM/Gen AI tool logos indicates that this privacy layer is intended to be a generalized solution, applicable across different platforms. The diagram doesn't specify *how* the Privacy Layer achieves sanitization (e.g., redaction, masking, differential privacy), but it clearly establishes the *need* for such a mechanism. The diagram suggests a system designed to balance utility (allowing access to AI capabilities) with privacy (protecting sensitive data).
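To make two of the techniques named above concrete, the following sketch contrasts redaction with masking on e-mail addresses. It is an illustration only: the functions `redact` and `mask` and the pattern are hypothetical, and nothing in the diagram indicates which technique the Privacy Layer uses. Differential privacy is not shown, since it concerns noise added to computations over datasets rather than edits to a single string.

```python
import re

# Hypothetical pattern: group 1 is the local part, group 2 is the domain.
EMAIL = re.compile(r"([\w.+-]+)@([\w-]+\.[\w.-]+)")


def redact(text: str) -> str:
    """Redaction: remove the value entirely, leaving a fixed marker."""
    return EMAIL.sub("[REDACTED]", text)


def mask(text: str) -> str:
    """Masking: keep the first character and the domain, hide the rest."""
    def _mask(match: re.Match) -> str:
        local, domain = match.group(1), match.group(2)
        return local[0] + "*" * (len(local) - 1) + "@" + domain
    return EMAIL.sub(_mask, text)


print(redact("Contact carol@example.com today"))  # Contact [REDACTED] today
print(mask("Contact carol@example.com today"))    # Contact c****@example.com today
```

Redaction discards the value, so nothing can be restored afterwards. Masking keeps part of the value readable, which preserves some utility for the model at the cost of revealing more.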