## Line Chart: Model Accuracy on Math Problems

### Overview

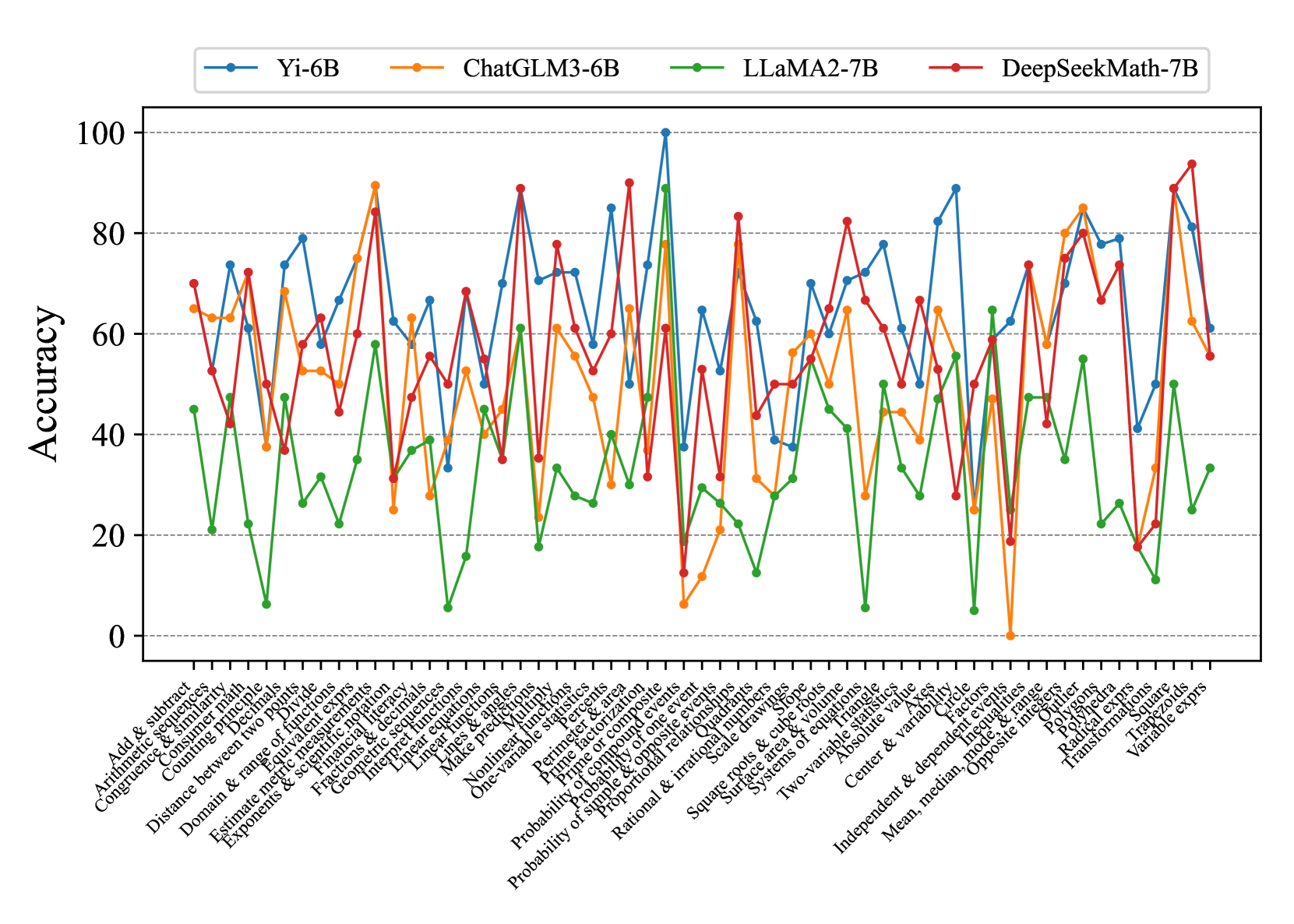

The image is a line chart comparing the accuracy of four different language models (Yi-6B, ChatGLM3-6B, LLaMA2-7B, and DeepSeekMath-7B) on a variety of math problem types. The x-axis represents different math topics, and the y-axis represents the accuracy score, ranging from 0 to 100.

### Components/Axes

* **Title:** There is no explicit title on the chart.

* **X-axis:** Represents different math problem types. The labels are densely packed and rotated for readability. The labels are:

* Add & subtract

* Arithmetic sequences

* Congruence & similarity

* Consumer math

* Counting principle

* Distance between two points

* Domain & range of functions

* Estimate metric measurements

* Exponents & scientific notation

* Financial literacy

* Geometric sequences

* Interpret functions

* Linear equations

* Linear functions

* Lines & angles

* Make predictions

* Multiply

* Nonlinear functions

* One-variable statistics

* Percents

* Perimeter & area

* Prime factorization

* Prime or composite

* Probability of compound events

* Probability of one event

* Probability of simple & opposite events

* Proportional relationships

* Quadrants

* Radical expressions

* Rational & irrational numbers

* Scale drawings

* Slope

* Square

* Square roots & cube roots

* Surface area & volume

* Systems of equations

* Transformations

* Trapezoids

* Triangle

* Two-variable statistics

* Variable expressions

* Absolute value

* Center & variability

* Circle

* Divide

* Equivalent expressions

* Factors

* Inequalities

* Independent & dependent events

* Mean, median, mode, & range

* Opposite integers

* Outliers

* Polygons

* Polyhedra

* **Y-axis:** Represents "Accuracy" and ranges from 0 to 100, with tick marks at intervals of 20.

* **Legend:** Located at the top of the chart.

* **Blue:** Yi-6B

* **Orange:** ChatGLM3-6B

* **Green:** LLaMA2-7B

* **Red:** DeepSeekMath-7B

### Detailed Analysis

* **Yi-6B (Blue):** Generally fluctuates between 50 and 80 accuracy, with some dips and peaks.

* **ChatGLM3-6B (Orange):** Shows a similar trend to Yi-6B, but often with slightly lower accuracy on many problem types.

* **LLaMA2-7B (Green):** Consistently has the lowest accuracy across almost all problem types, often below 40, and sometimes near 0.

* **DeepSeekMath-7B (Red):** Appears to have the highest accuracy overall, frequently reaching above 70 and sometimes peaking near 90.

**Specific Data Points (Approximate):**

It's difficult to provide exact values without a grid, but here are some approximate data points for a few problem types:

* **Add & Subtract:**

* Yi-6B: ~70

* ChatGLM3-6B: ~65

* LLaMA2-7B: ~45

* DeepSeekMath-7B: ~70

* **Exponents & Scientific Notation:**

* Yi-6B: ~80

* ChatGLM3-6B: ~70

* LLaMA2-7B: ~30

* DeepSeekMath-7B: ~75

* **Prime Factorization:**

* Yi-6B: ~50

* ChatGLM3-6B: ~40

* LLaMA2-7B: ~20

* DeepSeekMath-7B: ~50

* **Absolute Value:**

* Yi-6B: ~70

* ChatGLM3-6B: ~70

* LLaMA2-7B: ~60

* DeepSeekMath-7B: ~80

### Key Observations

* DeepSeekMath-7B generally outperforms the other models.

* LLaMA2-7B consistently underperforms compared to the other models.

* Yi-6B and ChatGLM3-6B have similar performance, with Yi-6B often slightly better.

* There is significant variance in accuracy across different problem types for all models.

### Interpretation

The chart demonstrates the varying capabilities of different language models in solving math problems. DeepSeekMath-7B appears to be the most proficient, suggesting it may have been specifically trained or optimized for mathematical reasoning. LLaMA2-7B's lower performance indicates it may not be as well-suited for these types of tasks. The fluctuations in accuracy across different problem types highlight the challenges that even the best models face in handling the diverse range of mathematical concepts. The data suggests that model architecture and training data play a crucial role in determining a language model's ability to solve math problems accurately.