## Diagram: Knowledge Distillation for Large Transformer-Based Models

### Overview

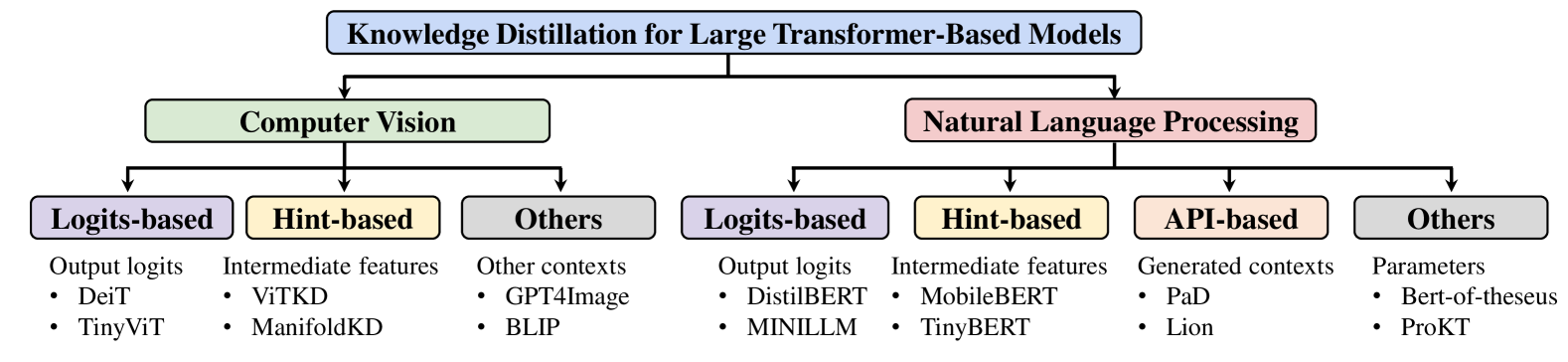

This diagram is a taxonomy of knowledge distillation techniques for large transformer-based models. It groups the techniques first by domain (Computer Vision or Natural Language Processing) and then by the type of information being distilled, such as output logits or intermediate features.

### Components/Axes

The diagram is structured hierarchically:

1. **Top Level (Blue Rounded Rectangle):** "Knowledge Distillation for Large Transformer-Based Models". This is the overarching topic.

2. **Second Level (Green and Pink Rectangles):**

    * "Computer Vision" (Green)
    * "Natural Language Processing" (Pink)

    These represent the two primary application domains. Arrows point from the top level to these domains, indicating they are sub-categories.

3. **Third Level (Colored Rectangles with Bold Text):** These represent different distillation approaches within each domain.

    * **Under Computer Vision:**
        * "Logits-based" (Purple)
        * "Hint-based" (Yellow)
        * "Others" (Gray)
    * **Under Natural Language Processing:**
        * "Logits-based" (Purple)
        * "Hint-based" (Yellow)
        * "API-based" (Orange)
        * "Others" (Gray)

    Arrows point from the domain rectangles to these sub-categories.

4. **Fourth Level (Bullet Points):** These list specific examples of models or methods within each distillation approach. Each approach has a brief description of what is being distilled.

### Detailed Analysis

**Computer Vision Domain:**

* **Logits-based (Purple):**
    * Description: Output logits
    * Examples:
        * DeiT
        * TinyViT
* **Hint-based (Yellow):**
    * Description: Intermediate features
    * Examples:
        * ViTKD
        * ManifoldKD
* **Others (Gray):**
    * Description: Other contexts
    * Examples:
        * GPT4Image
        * BLIP

**Natural Language Processing Domain:**

* **Logits-based (Purple):**
    * Description: Output logits
    * Examples:
        * DistilBERT
        * MINILLM
* **Hint-based (Yellow):**
    * Description: Intermediate features
    * Examples:
        * MobileBERT
        * TinyBERT
* **API-based (Orange):**
    * Description: Generated contexts
    * Examples:
        * PaD
        * Lion
* **Others (Gray):**
    * Description: Parameters
    * Examples:
        * Bert-of-theseus
        * ProKT
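The two descriptions shared by both domains, "Output logits" and "Intermediate features", correspond to two different training signals. The diagram does not specify any loss function, so the following is only a minimal sketch under common assumptions: a temperature-softened KL divergence for the logits-based case and a mean squared error for the hint-based case. The function names, the default temperature, and the toy values are illustrative and are not taken from any of the listed models.

```python
import math


def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax over a list of raw scores."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def logits_kd_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on softened outputs, scaled by T^2.

    Assumed formulation for illustration; the diagram names no loss.
    """
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2


def hint_loss(student_features, teacher_features):
    """Mean squared error between same-sized intermediate feature vectors.

    Real student and teacher layers often differ in width; a learned
    projection would then be needed first (omitted in this sketch).
    """
    if len(student_features) != len(teacher_features):
        raise ValueError("feature vectors must have the same length")
    n = len(student_features)
    return sum((s - t) ** 2
               for s, t in zip(student_features, teacher_features)) / n


teacher_logits = [2.0, 1.0, 0.1]  # toy values, not real model outputs
print(logits_kd_loss(teacher_logits, teacher_logits))   # 0.0 for identical logits
print(logits_kd_loss([0.1, 1.0, 2.0], teacher_logits))  # positive when they differ
print(hint_loss([1.0, 2.0], [1.0, 4.0]))                # 2.0
```

In practice these terms are usually combined with the ordinary task loss, but how they are weighted is a design choice the diagram does not cover.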

### Key Observations

* The diagram clearly distinguishes between knowledge distillation for Computer Vision and Natural Language Processing.

* Both domains share "Logits-based" and "Hint-based" distillation approaches.

* Natural Language Processing has an additional category, "API-based," which is not present in Computer Vision.

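* The "Others" category exists in both domains but is described differently: "Other contexts" in Computer Vision and "Parameters" in Natural Language Processing. It appears to act as a catch-all for methods that don't fit the primary classifications.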

* Each category lists two named models or methods as concrete examples.
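These observations can be checked mechanically once the diagram is transcribed into a data structure. The sketch below encodes the hierarchy as a nested dictionary; the variable names and the tuple layout are illustrative choices, while the labels are copied from the diagram as written.

```python
# Taxonomy transcribed from the diagram:
# domain -> category -> (description, examples)
TAXONOMY = {
    "Computer Vision": {
        "Logits-based": ("Output logits", ["DeiT", "TinyViT"]),
        "Hint-based": ("Intermediate features", ["ViTKD", "ManifoldKD"]),
        "Others": ("Other contexts", ["GPT4Image", "BLIP"]),
    },
    "Natural Language Processing": {
        "Logits-based": ("Output logits", ["DistilBERT", "MINILLM"]),
        "Hint-based": ("Intermediate features", ["MobileBERT", "TinyBERT"]),
        "API-based": ("Generated contexts", ["PaD", "Lion"]),
        "Others": ("Parameters", ["Bert-of-theseus", "ProKT"]),
    },
}

cv = TAXONOMY["Computer Vision"]
nlp = TAXONOMY["Natural Language Processing"]

# Categories that appear under both domains, and those unique to NLP.
shared = sorted(set(cv) & set(nlp))
nlp_only = sorted(set(nlp) - set(cv))

# Shared categories whose one-line description differs between domains.
differing = [name for name in shared if cv[name][0] != nlp[name][0]]

print(shared)     # ['Hint-based', 'Logits-based', 'Others']
print(nlp_only)   # ['API-based']
print(differing)  # ['Others']
```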

### Interpretation

This diagram provides a structured taxonomy of knowledge distillation strategies for large transformer models. It shows that two core ideas, using the teacher's output logits and using its intermediate features, apply in both Computer Vision and Natural Language Processing. The "API-based" category appears only under NLP and is described as distilling "Generated contexts", which suggests a setting where the student learns from text the teacher produces (for example, through an API) rather than from its logits or internal features. The "Others" category in both domains collects methods that do not fit these groups, which points to ongoing research beyond the main classifications. Overall, the diagram serves as a map of current approaches to making large transformer models more efficient through knowledge distillation.
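To make the "API-based" setting concrete, the following is a rough sketch of how a distillation dataset might be assembled when only generated text is available. It is not the procedure of PaD, Lion, or any specific system. `query_teacher` is a hypothetical callable standing in for whatever API client is used, and `toy_teacher` is a placeholder so the example runs offline.

```python
def build_distillation_set(prompts, query_teacher):
    """Pair each prompt with the text the teacher generates for it.

    Only generated text is used; no logits or hidden states are needed.
    """
    pairs = []
    for prompt in prompts:
        response = query_teacher(prompt)
        if response.strip():  # skip empty generations
            pairs.append((prompt, response))
    return pairs


def toy_teacher(prompt):
    """Hypothetical stand-in for a real API call to a teacher model."""
    return "" if not prompt else "answer to: " + prompt


dataset = build_distillation_set(["What is 2 + 2?", ""], toy_teacher)
print(dataset)  # [('What is 2 + 2?', 'answer to: What is 2 + 2?')]
```

The resulting prompt and response pairs would then serve as ordinary supervised training data for the student model.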