## Textual Instruction Block: LLM Reasoning Extraction Task

### Overview

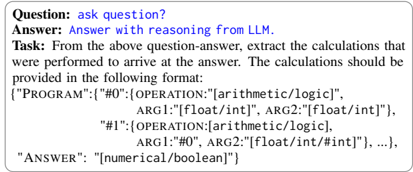

The image displays a set of task instructions as a block of text inside a light grey rounded rectangular border. It presents a "Question", an "Answer" (labelled in the image as containing reasoning from an LLM), and a "Task" that asks for the calculations to be extracted from the question-answer pair. It also specifies a detailed, JSON-like output format for the extracted calculations.

### Components/Axes

This image does not contain traditional chart components like axes, legends, or data series. Instead, it consists of structured textual elements:

* **Main Content Area**: A white background containing all the text, enclosed by a light grey rounded rectangular border.

* **Labels**: Bolded text labels "Question:", "Answer:", and "Task:" are used to categorize the subsequent information.

* **Instructional Text**: Regular-weight text at normal size that gives the details of the question, answer, and task.

* **Code/Format Block**: An indented, multi-line block of text defining a JSON-like structure for the required output format.

### Detailed Analysis

The entire content is in English.

1. **Question**: Located at the top-left of the text block.

   * The label "Question:" is bold.

   * The content is "ask question?".

2. **Answer**: Located directly below the "Question" section.

   * The label "Answer:" is bold.

   * The content is "Answer with reasoning from LLM." This text is displayed in blue.

3. **Task**: Located directly below the "Answer" section.

   * The label "Task:" is bold.

   * The instruction text is: "From the above question-answer, extract the calculations that were performed to arrive at the answer. The calculations should be provided in the following format:"

4. **Output Format Specification**: This section, starting below the "Task" description, details the required structure for the extracted calculations. It is presented as an indented, multi-line, JSON-like object:

```json
{"PROGRAM":{"#0":{"OPERATION":"[arithmetic/logic]",
ARG1:"[float/int]", ARG2:"[float/int]"},
"#1":{"OPERATION":"[arithmetic/logic]",
ARG1:"#0", ARG2:"[float/int/#int]"}, ...},
"ANSWER":"[numerical/boolean]"}
```

* The top-level object contains two keys: `"PROGRAM"` and `"ANSWER"`.

* `"PROGRAM"` is an object containing multiple operations, indexed by string keys like `"#0"`, `"#1"`, and so on (indicated by `...`).

* Each operation object (e.g., `"#0"`, `"#1"`) has three keys. In the block as transcribed, `ARG1` and `ARG2` appear without quotation marks, so the format is JSON-like rather than strictly valid JSON.

  * `"OPERATION"`: A string naming the operation. The placeholder `"[arithmetic/logic]"` indicates that it is an arithmetic or logical operation.

  * `ARG1`: A string representing the first argument. It is a literal number (`"[float/int]"`) in step `"#0"` and a reference to the result of a previous operation (`"#0"`) in step `"#1"`.

  * `ARG2`: A string representing the second argument. It can be a literal number or a reference to the result of a previous operation, as indicated by the placeholder `"[float/int/#int]"`.

* `"ANSWER"`: A string holding the final result. The placeholder `"[numerical/boolean]"` indicates that it is either a number or a boolean.
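As an illustration of the structure described above, the following Python sketch parses one hypothetical filled-in instance and checks its shape. The operation names (`add`, `divide`), the example values, and the `validate` helper are assumptions made for illustration; the image only gives the placeholder `[arithmetic/logic]` and does not list concrete operations. All keys are quoted here so that the instance is valid JSON.

```python
import json
import re

REF = re.compile(r"^#\d+$")  # reference to an earlier step, e.g. "#0"


def is_number(text):
    """True if the string parses as a float or int literal."""
    try:
        float(text)
        return True
    except ValueError:
        return False


def validate(doc):
    """Return a list of structural problems; an empty list means the shape is fine."""
    if set(doc) != {"PROGRAM", "ANSWER"}:
        return ["top level must have exactly PROGRAM and ANSWER"]
    problems = []
    for position, (key, step) in enumerate(doc["PROGRAM"].items()):
        # Assumption: steps are keyed "#0", "#1", ... in order.
        if key != f"#{position}":
            problems.append(f"expected key #{position}, got {key}")
        if set(step) != {"OPERATION", "ARG1", "ARG2"}:
            problems.append(f"{key}: needs OPERATION, ARG1 and ARG2")
            continue
        for name in ("ARG1", "ARG2"):
            arg = str(step[name])
            if REF.match(arg):
                if int(arg[1:]) >= position:
                    problems.append(f"{key}: {name} must refer to an earlier step")
            elif not is_number(arg):
                problems.append(f"{key}: {name} is neither a number nor a reference")
    return problems


# Hypothetical instance: (12 + 8) / 4
example = json.loads("""
{"PROGRAM": {"#0": {"OPERATION": "add",    "ARG1": "12", "ARG2": "8"},
             "#1": {"OPERATION": "divide", "ARG1": "#0", "ARG2": "4"}},
 "ANSWER": "5"}
""")

print(validate(example))  # → []
```

The check that a reference points only to an earlier step is one reading of the `#0` and `#int` placeholders; the image itself does not state this rule explicitly.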

### Key Observations

* The document defines a clear input (Question-Answer pair) and a highly structured output (JSON-like program).

* The "Answer" is explicitly stated to be "with reasoning from LLM," highlighting the source and nature of the input to be analyzed.

* The output format for the "PROGRAM" allows for a sequence of operations, where subsequent operations can depend on the results of prior ones (e.g., `ARG1:"#0"`).

* The types for arguments (`float/int`) and the final answer (`numerical/boolean`) are specified, indicating a focus on quantitative or logical reasoning.

* The `...` in the program structure implies that an arbitrary number of operations can be extracted.
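The input side of the task can be sketched as a simple prompt template. This is a minimal sketch, assuming the three labelled sections are joined as plain text lines; the function name `build_prompt` and the sample question and answer are hypothetical, and the image does not show how the prompt is actually assembled. The task wording and the format specification are copied from the transcription.

```python
# Format specification as transcribed from the image (JSON-like, not strict JSON).
FORMAT_SPEC = '''{"PROGRAM":{"#0":{"OPERATION":"[arithmetic/logic]",
ARG1:"[float/int]", ARG2:"[float/int]"},
"#1":{"OPERATION":"[arithmetic/logic]",
ARG1:"#0", ARG2:"[float/int/#int]"}, ...},
"ANSWER":"[numerical/boolean]"}'''

# Task wording as transcribed from the image.
TASK = (
    "From the above question-answer, extract the calculations that were "
    "performed to arrive at the answer. The calculations should be "
    "provided in the following format:"
)


def build_prompt(question: str, answer: str) -> str:
    """Fill the Question / Answer / Task layout with a concrete pair."""
    return (
        f"Question: {question}\n"
        f"Answer: {answer}\n"
        f"Task: {TASK}\n"
        f"{FORMAT_SPEC}"
    )


# Hypothetical question-answer pair, used only to show the layout.
prompt = build_prompt("What is 2 + 3?", "2 + 3 = 5, so the answer is 5.")
print(prompt)
```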

### Interpretation

This document outlines a specific task designed to extract and formalize the computational or logical steps embedded within a Large Language Model's (LLM) natural language reasoning. The goal is to transform an LLM's explanation of how it arrived at an answer into a structured, machine-readable "program."

The "PROGRAM" object represents a sequence of discrete operations, each with a defined type (`arithmetic/logic`) and arguments. The ability for arguments to reference previous operations (`#0`, `#int`) signifies that the extracted program should capture the flow and dependencies of the LLM's reasoning process. This structure is crucial for several reasons:

1. **Verifiability**: Once the reasoning is formalized as a program, the steps can be executed independently to check whether the LLM's stated reasoning actually yields the answer it gave. This makes LLM outputs easier to audit and trust.

2. **Interpretability**: It provides a clear, step-by-step breakdown of the reasoning the LLM stated, which is easier to inspect than a free-form natural language explanation.

3. **Debugging and Improvement**: If the extracted program yields an incorrect result, it can pinpoint exactly where the LLM's reasoning went astray, facilitating targeted improvements to the model.
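The verification and debugging ideas above can be sketched as a small interpreter that executes an extracted program and compares the last step with the stated answer. This is a sketch under stated assumptions, not the method used by the authors of the image: the operation names in `OPS`, the helper names `run_program` and `check`, the tolerance, and the example values are all hypothetical, and steps are assumed to be keyed `#0`, `#1`, ... without gaps.

```python
import operator

# Assumed operation vocabulary; the image only says "[arithmetic/logic]".
OPS = {
    "add": operator.add,
    "subtract": operator.sub,
    "multiply": operator.mul,
    "divide": operator.truediv,
    "greater": operator.gt,
}


def run_program(doc):
    """Execute each step in index order and return all intermediate results."""
    results = []

    def resolve(arg):
        # "#k" refers to the result of step k; anything else is a literal number.
        if arg.startswith("#"):
            return results[int(arg[1:])]
        return float(arg)

    for key in sorted(doc["PROGRAM"], key=lambda k: int(k[1:])):
        step = doc["PROGRAM"][key]
        fn = OPS[step["OPERATION"]]
        results.append(fn(resolve(step["ARG1"]), resolve(step["ARG2"])))
    return results


def check(doc, tolerance=1e-9):
    """True if the last step's result matches the stated ANSWER."""
    final = run_program(doc)[-1]
    stated = doc["ANSWER"].strip()
    if isinstance(final, bool):  # logical result, e.g. from "greater"
        return str(final).lower() == stated.lower()
    return abs(final - float(stated)) <= tolerance


# Hypothetical extracted program: (12 + 8) / 4 with stated answer 5
example = {
    "PROGRAM": {
        "#0": {"OPERATION": "add", "ARG1": "12", "ARG2": "8"},
        "#1": {"OPERATION": "divide", "ARG1": "#0", "ARG2": "4"},
    },
    "ANSWER": "5",
}

print(run_program(example))  # → [20.0, 5.0]
print(check(example))        # → True
```

Because `run_program` returns every intermediate value, a mismatch reported by `check` can be traced to the first step whose result differs from the one given in the natural language reasoning.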

In essence, this document describes a method for "programmatic reasoning extraction," aiming to bridge the gap between natural language explanations and formal computational logic, particularly in the context of AI model evaluation.