## Line Chart: Performance Comparison of Dimensionality Reduction Methods

### Overview

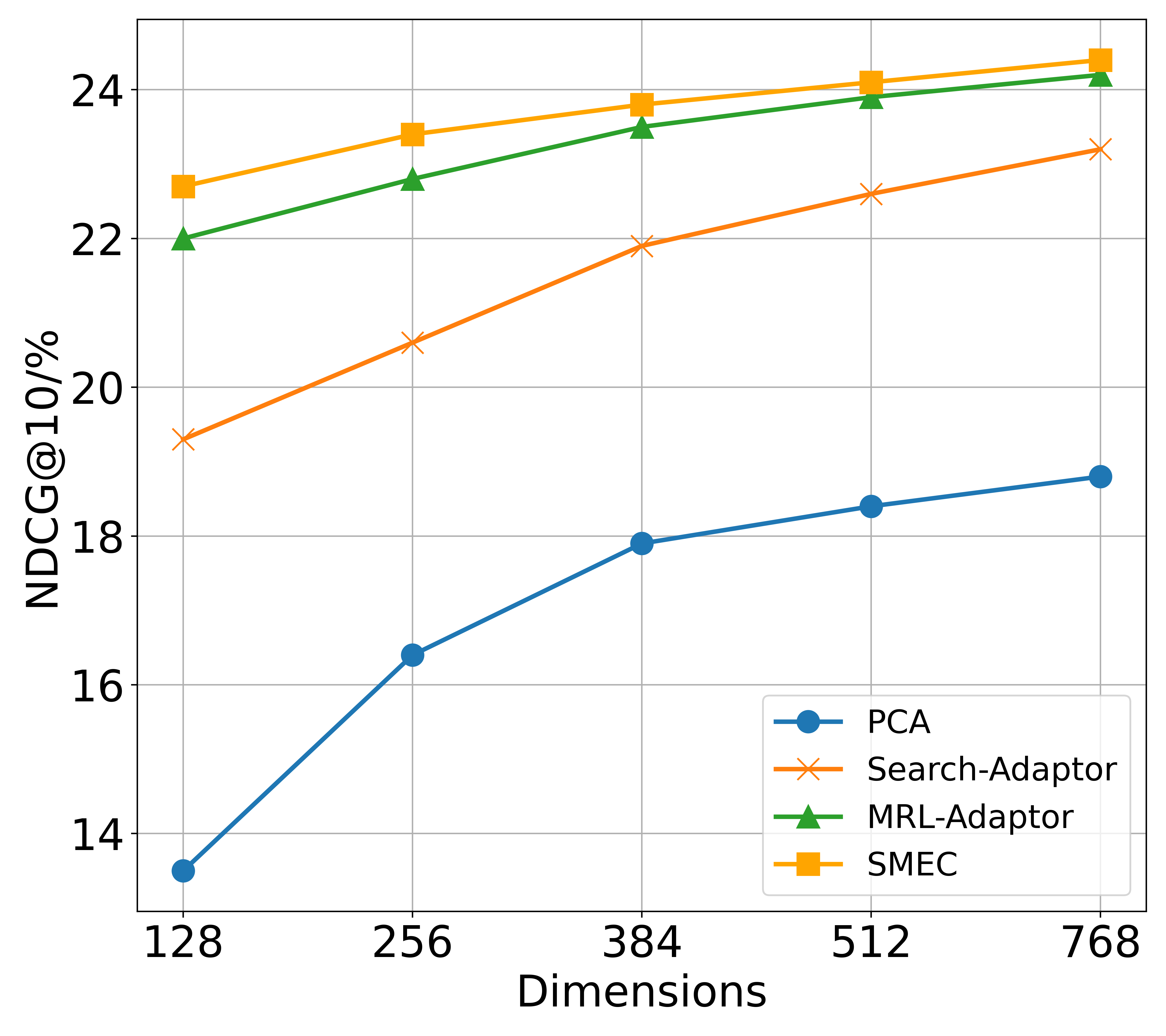

The image is a line chart comparing the performance of four different dimensionality reduction or adaptation methods across varying numbers of dimensions. The performance metric is NDCG@10 (Normalized Discounted Cumulative Gain at rank 10), expressed as a percentage. The chart demonstrates how each method's effectiveness changes as the dimensionality of the representation increases.
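
NDCG@10 rewards rankings that place relevant documents near the top of the first ten results. The sketch below is a minimal illustration, assuming the common linear-gain formulation with a log2 position discount. Some toolkits use an exponential gain (2^rel - 1) instead, and the chart does not say which variant was used. The function names `dcg_at_k` and `ndcg_at_k` are hypothetical helpers, not taken from the source. A chart value such as ~24% would presumably be this per-query score averaged over an evaluation query set and multiplied by 100.

```python
import math
from typing import Sequence


def dcg_at_k(relevances: Sequence[float], k: int = 10) -> float:
    """DCG over the top-k positions: linear gain, log2(rank + 1) discount."""
    # enumerate() is 0-based, so position i corresponds to rank i + 1
    # and is discounted by log2(i + 2).
    return sum(rel / math.log2(i + 2) for i, rel in enumerate(relevances[:k]))


def ndcg_at_k(ranked: Sequence[float], judged: Sequence[float], k: int = 10) -> float:
    """NDCG@k for one query.

    ranked: relevance grades of the returned documents, in system order.
    judged: relevance grades of all judged documents for the query,
            used to build the ideal (best possible) ranking.
    """
    ideal = dcg_at_k(sorted(judged, reverse=True), k)
    # A query with no relevant documents has no meaningful ideal; score it 0.
    return dcg_at_k(ranked, k) / ideal if ideal > 0 else 0.0


# Two relevant documents exist; the system places them at ranks 1 and 3.
score = ndcg_at_k([1, 0, 1, 0], judged=[1, 1, 0, 0])
print(f"NDCG@10 = {score:.4f} ({100 * score:.1f}%)")
```

Normalizing by the ideal DCG keeps every query's score in [0, 1], so queries with many relevant documents do not dominate the average.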

### Components/Axes

* **Chart Type:** Multi-series line chart with markers.
* **X-Axis:**
  * **Label:** "Dimensions"
  * **Scale:** Categorical/ordinal with discrete values: 128, 256, 384, 512, 768.
* **Y-Axis:**
  * **Label:** "NDCG@10/%"
  * **Scale:** Linear, ranging from approximately 13 to 25. Major gridlines are at intervals of 2 (14, 16, 18, 20, 22, 24).
* **Legend:**
  * **Position:** Bottom-right corner of the chart area.
  * **Entries (from top to bottom as listed):**
    1. **PCA:** Blue line with circle markers.
    2. **Search-Adaptor:** Orange line with 'x' (cross) markers.
    3. **MRL-Adaptor:** Green line with triangle markers.
    4. **SMEC:** Yellow/gold line with square markers.

### Detailed Analysis

**Trend Verification:** All four data series show a clear upward trend, indicating that NDCG@10 performance improves as the number of dimensions increases from 128 to 768.

**Data Series & Approximate Values:**

1. **PCA (Blue line, circle markers):**
   * **Trend:** Steepest initial increase (about +2.9 points from 128 to 256 dimensions), after which the gains shrink sharply (only about +0.4 points from 512 to 768). It is the lowest-performing method at every dimension.
   * **Approximate Values:**
     * 128 Dimensions: ~13.5%
     * 256 Dimensions: ~16.4%
     * 384 Dimensions: ~17.9%
     * 512 Dimensions: ~18.4%
     * 768 Dimensions: ~18.8%

2. **Search-Adaptor (Orange line, 'x' markers):**
   * **Trend:** Rises by roughly 1.3 points per step from 128 to 384 dimensions, then more slowly (about +0.7 and +0.6 points over the last two steps). It is the third-best performing method.
   * **Approximate Values:**
     * 128 Dimensions: ~19.3%
     * 256 Dimensions: ~20.6%
     * 384 Dimensions: ~21.9%
     * 512 Dimensions: ~22.6%
     * 768 Dimensions: ~23.2%

3. **MRL-Adaptor (Green line, triangle markers):**
   * **Trend:** Steady upward slope, noticeably flatter than Search-Adaptor (about +2.2 points overall versus +3.9). It is the second-best performing method.
   * **Approximate Values:**
     * 128 Dimensions: ~22.0%
     * 256 Dimensions: ~22.8%
     * 384 Dimensions: ~23.5%
     * 512 Dimensions: ~23.9%
     * 768 Dimensions: ~24.2%

4. **SMEC (Yellow/gold line, square markers):**
   * **Trend:** Gradual upward slope; consistently the highest-performing method. Its lead over MRL-Adaptor narrows as dimensions increase, from ~0.7 points at 128 dimensions to ~0.2 points at 768.
   * **Approximate Values:**
     * 128 Dimensions: ~22.7%
     * 256 Dimensions: ~23.4%
     * 384 Dimensions: ~23.8%
     * 512 Dimensions: ~24.1%
     * 768 Dimensions: ~24.4%

### Key Observations

1. **Performance Hierarchy:** A clear and consistent performance hierarchy is maintained across all tested dimensions: **SMEC > MRL-Adaptor > Search-Adaptor > PCA**.

2. **Diminishing Returns:** All methods show diminishing returns: the gain from 128 to 256 dimensions is larger than the gain from 512 to 768 dimensions for every method, even though the latter step adds twice as many dimensions.

3. **Significant Gap:** There is a substantial gap between the traditional PCA baseline and the other three methods (Search-Adaptor, MRL-Adaptor, SMEC) at every dimension. PCA trails even the weakest of them, Search-Adaptor, by roughly 4-6 percentage points, and trails SMEC by roughly 5.6-9.2 points, with the largest gap at 128 dimensions.

4. **Convergence at Top:** The top two methods, SMEC and MRL-Adaptor, perform very similarly; their difference shrinks from ~0.7 percentage points at 128 dimensions to only ~0.2 points at 768 dimensions, where the lines nearly converge.
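
These observations can be checked mechanically against the approximate values listed under Detailed Analysis. The sketch below hard-codes those visual estimates (chart readings, not the paper's exact numbers) and recomputes the trend, the ordering at each dimension, the early versus late gains, and the gaps between methods. The names `SCORES`, `ranking_at`, and `gap` are illustrative.

```python
# Approximate NDCG@10 (%) readings from the chart (visual estimates, not exact results).
DIMS = [128, 256, 384, 512, 768]
SCORES = {
    "PCA": [13.5, 16.4, 17.9, 18.4, 18.8],
    "Search-Adaptor": [19.3, 20.6, 21.9, 22.6, 23.2],
    "MRL-Adaptor": [22.0, 22.8, 23.5, 23.9, 24.2],
    "SMEC": [22.7, 23.4, 23.8, 24.1, 24.4],
}


def ranking_at(i: int) -> list[str]:
    """Method names sorted from best to worst at dimension index i."""
    return sorted(SCORES, key=lambda m: SCORES[m][i], reverse=True)


def gap(a: str, b: str) -> list[float]:
    """Per-dimension difference a - b in percentage points, rounded to 0.1."""
    return [round(x - y, 1) for x, y in zip(SCORES[a], SCORES[b])]


# Trend: every series rises strictly with dimensionality.
monotonic = all(all(x < y for x, y in zip(v, v[1:])) for v in SCORES.values())

# Hierarchy: collect the distinct best-to-worst orderings across dimensions.
orderings = {tuple(ranking_at(i)) for i in range(len(DIMS))}

# Diminishing returns: gain from 128->256 versus 512->768.
early = {m: round(v[1] - v[0], 1) for m, v in SCORES.items()}
late = {m: round(v[-1] - v[-2], 1) for m, v in SCORES.items()}

# Gap between PCA and the weakest / strongest of the other methods.
search_minus_pca = gap("Search-Adaptor", "PCA")
smec_minus_pca = gap("SMEC", "PCA")

# Convergence of the top two methods.
smec_minus_mrl = gap("SMEC", "MRL-Adaptor")

print("monotonic:", monotonic)
print("orderings:", orderings)
print("early gains:", early)
print("late gains:", late)
print("Search-Adaptor - PCA:", search_minus_pca)
print("SMEC - PCA:", smec_minus_pca)
print("SMEC - MRL-Adaptor:", smec_minus_mrl)
```

A single element in `orderings` confirms that the hierarchy never changes; because the inputs are eyeballed, differences of a few tenths of a point should be read as approximate.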

### Interpretation

This chart likely comes from a research paper in information retrieval or machine learning, evaluating methods for creating efficient vector representations for search or recommendation tasks. NDCG@10 is a standard metric for ranking quality.

The data suggests that:

* **Learned methods outperform PCA:** The two "Adaptor" methods and SMEC all clearly outperform the classical PCA baseline. This suggests that learning a task-specific dimensionality-reduction projection is more effective for this ranking task than a generic, unsupervised method like PCA.

* **SMEC is the most effective:** SMEC achieves the highest ranking quality at every tested dimensionality, although its lead over MRL-Adaptor is small (roughly 0.2-0.7 percentage points).

* **More dimensions help, but cost more:** Increasing dimensionality improves retrieval quality for every method, but the gain per added dimension shrinks. Since vector storage and similarity computation grow roughly linearly with dimensionality, this is a trade-off between performance and computational/storage cost. The chart points to a potential "sweet spot" around 384 or 512 dimensions: at 512 dimensions, every method already reaches about 97% or more of its 768-dimension score.

* **Robustness of advanced methods:** MRL-Adaptor and SMEC have much flatter curves than PCA and Search-Adaptor. Shrinking from 768 to 128 dimensions costs them only ~2.2 and ~1.7 points respectively, versus ~5.3 points for PCA and ~3.9 for Search-Adaptor, so their performance is less sensitive to aggressive dimensionality reduction, a desirable property for efficient systems.
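
The trade-off and robustness points can be quantified with simple arithmetic on the approximate chart readings. This sketch assumes uncompressed float32 storage (4 bytes per dimension), which the chart does not state; the helper names `retention_pct` and `drop_768_to_128` are hypothetical.

```python
# Approximate NDCG@10 (%) readings from the chart (visual estimates, not exact results).
DIMS = [128, 256, 384, 512, 768]
SCORES = {
    "PCA": [13.5, 16.4, 17.9, 18.4, 18.8],
    "Search-Adaptor": [19.3, 20.6, 21.9, 22.6, 23.2],
    "MRL-Adaptor": [22.0, 22.8, 23.5, 23.9, 24.2],
    "SMEC": [22.7, 23.4, 23.8, 24.1, 24.4],
}

# Assumption: vectors are stored uncompressed as float32, 4 bytes per dimension.
BYTES_PER_DIM = 4


def retention_pct(method: str) -> list[float]:
    """Share of the method's 768-dimension score reached at each dimension, in %."""
    full = SCORES[method][-1]
    return [round(100 * s / full, 1) for s in SCORES[method]]


def drop_768_to_128(method: str) -> float:
    """Percentage points lost when shrinking from 768 to 128 dimensions."""
    return round(SCORES[method][-1] - SCORES[method][0], 1)


# Storage per vector, and cost relative to the 768-dimension configuration.
bytes_per_vector = {d: d * BYTES_PER_DIM for d in DIMS}
relative_cost = {d: round(d / DIMS[-1], 3) for d in DIMS}

for d in DIMS:
    print(f"{d:4d} dims: {bytes_per_vector[d]:5d} bytes/vector ({relative_cost[d]:.0%} of 768-dim cost)")
for m in SCORES:
    print(f"{m:15s} retained %: {retention_pct(m)}  drop 768->128: {drop_768_to_128(m)} pts")
```

Under these assumptions, 512 dimensions costs two thirds of the 768-dimension storage while every method keeps at least ~97% of its best score; with quantized or compressed vectors the absolute byte counts would differ, but the linear scaling would not.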