## Diagram: Encoder-Decoder and Decoder-Only PaG Architectures

### Overview

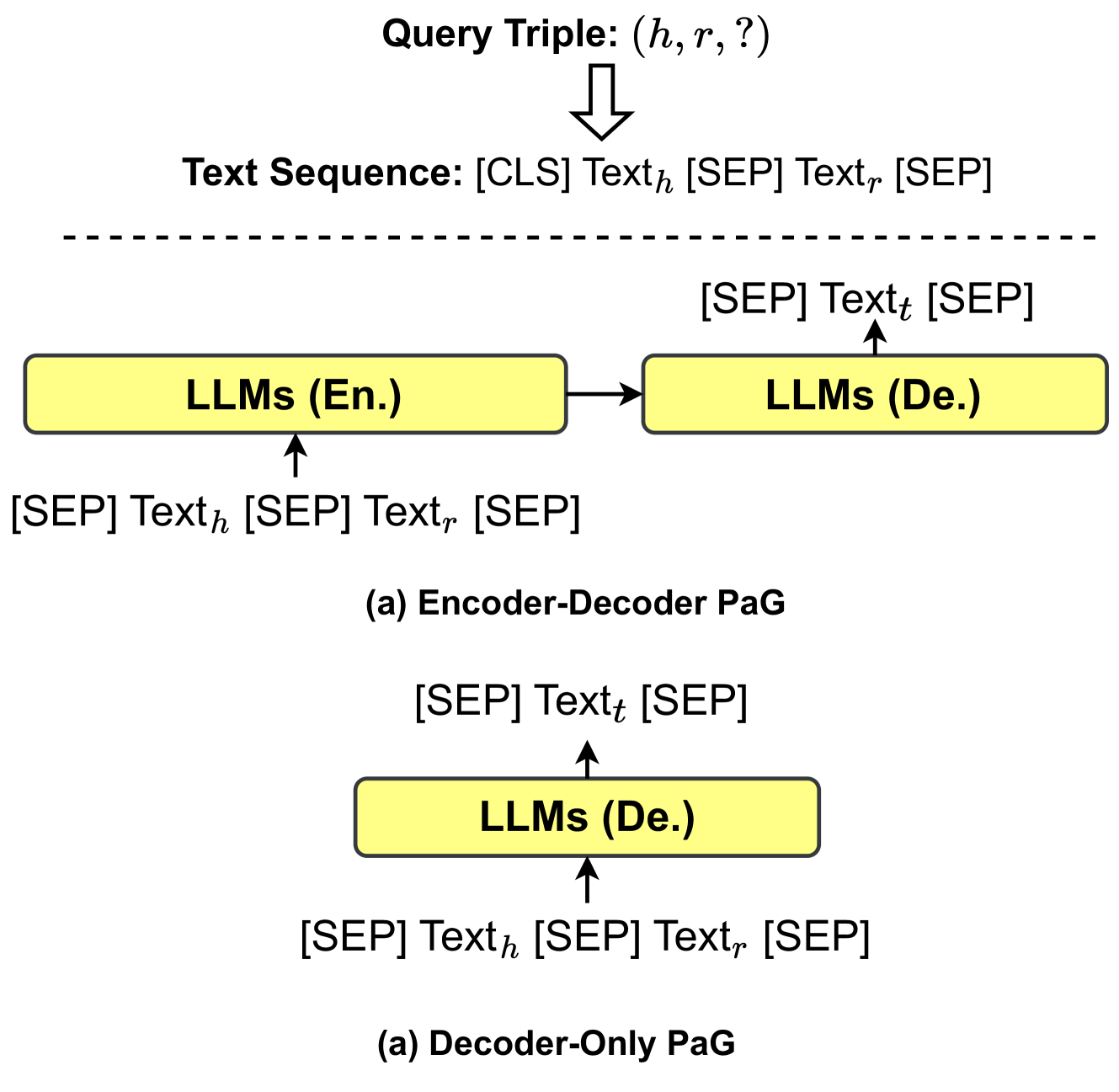

The image presents two architectures for Prompt Augmented Generation (PaG) with Large Language Models (LLMs): an Encoder-Decoder PaG and a Decoder-Only PaG. Both diagrams show how a query triple `(h, r, ?)` is converted to text and processed to generate the text of the missing tail entity.

### Components

* **Top:**

    * `Query Triple: (h, r, ?)`: Represents the input query consisting of a head entity (h), a relation (r), and a missing tail entity (?).

    * Downward arrow indicating the flow of the query into the subsequent text sequence.

    * `Text Sequence: [CLS] Text_h [SEP] Text_r [SEP]`: Represents the input text sequence. `[CLS]` denotes the classification token, `Text_h` the text of the head entity, `[SEP]` the separator token, and `Text_r` the text of the relation.

    * Dashed horizontal line separating the input sequence from the PaG architectures.

* **Encoder-Decoder PaG (Top Diagram):**

    * `LLMs (En.)`: Represents the Encoder LLM.

    * `LLMs (De.)`: Represents the Decoder LLM.

    * `[SEP] Text_h [SEP] Text_r [SEP]`: Input to the Encoder LLM.

    * `[SEP] Text_t [SEP]`: Input to the Decoder LLM, where `Text_t` is the generated text for the tail entity.

    * Arrow from `LLMs (En.)` to `LLMs (De.)` indicating the flow of information from the encoder to the decoder.

    * Upward arrow from `[SEP] Text_h [SEP] Text_r [SEP]` to `LLMs (En.)`.

    * Upward arrow from `[SEP] Text_t [SEP]` to `LLMs (De.)`.

    * `(a) Encoder-Decoder PaG`: Label indicating the type of architecture.

* **Decoder-Only PaG (Bottom Diagram):**

    * `LLMs (De.)`: Represents the Decoder LLM.

    * `[SEP] Text_h [SEP] Text_r [SEP]`: Input to the Decoder LLM.

    * `[SEP] Text_t [SEP]`: Output from the Decoder LLM, where `Text_t` is the generated text for the tail entity.

    * Upward arrow from `[SEP] Text_h [SEP] Text_r [SEP]` to `LLMs (De.)`.

    * Upward arrow from `[SEP] Text_t [SEP]` to `LLMs (De.)`.

    * `(b) Decoder-Only PaG`: Label indicating the type of architecture.

### Detailed Analysis

* **Query Triple:** The process starts with a query triple `(h, r, ?)`, where `h` is the head entity, `r` is the relation, and `?` indicates the missing tail entity that needs to be predicted.

* **Text Sequence:** The query triple is converted into the text sequence `[CLS] Text_h [SEP] Text_r [SEP]`, which serves as input to the PaG architectures. In both architecture panels, the diagram shows this input with a leading `[SEP]` in place of `[CLS]`.

* **Encoder-Decoder PaG:** In this architecture, the text sequence `[SEP] Text_h [SEP] Text_r [SEP]` is fed into the Encoder LLM. The Encoder processes this input and passes information to the Decoder LLM. The Decoder then generates the text for the tail entity `Text_t`, which is represented as `[SEP] Text_t [SEP]`.

* **Decoder-Only PaG:** In this architecture, the text sequence `[SEP] Text_h [SEP] Text_r [SEP]` is directly fed into the Decoder LLM. The Decoder then generates the text for the tail entity `Text_t`, which is represented as `[SEP] Text_t [SEP]`.
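
The sequence formats described above can be sketched in Python. This is a minimal illustration of the string layout only, not the implementation behind the diagram: the function names, the `lead` parameter, and the example entity strings are all assumptions introduced here.

```python
from typing import Optional

CLS, SEP = "[CLS]", "[SEP]"


def query_to_sequence(head_text: str, relation_text: str, lead: str = CLS) -> str:
    """Serialize a query triple (h, r, ?) as `<lead> Text_h [SEP] Text_r [SEP]`.

    `lead` is a hypothetical parameter: pass SEP to get the variant that the
    diagram shows as the input of the architecture panels.
    """
    return f"{lead} {head_text} {SEP} {relation_text} {SEP}"


def tail_to_sequence(tail_text: str) -> str:
    """Wrap the tail entity text as `[SEP] Text_t [SEP]`."""
    return f"{SEP} {tail_text} {SEP}"


def sequence_to_tail(generated: str) -> Optional[str]:
    """Recover Text_t from a `[SEP] Text_t [SEP]` string, or None if malformed."""
    parts = [part.strip() for part in generated.split(SEP)]
    # A well-formed string splits into ["", Text_t, ""].
    if len(parts) == 3 and parts[0] == "" and parts[2] == "" and parts[1]:
        return parts[1]
    return None


# Illustrative strings only; they do not come from the diagram.
query = query_to_sequence("Paris", "capital of")
model_input = query_to_sequence("Paris", "capital of", lead=SEP)
tail = sequence_to_tail(tail_to_sequence("France"))
```

Keeping serialization and parsing as a matched pair makes it easy to check that a generated string round-trips to an entity name before it is accepted as a prediction.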

### Key Observations

* Both architectures aim to generate the missing tail entity `Text_t` based on the given head entity `Text_h` and relation `Text_r`.

* The Encoder-Decoder PaG uses an encoder and a decoder (labeled `LLMs (En.)` and `LLMs (De.)`), while the Decoder-Only PaG uses a single Decoder LLM.

* Both architectures receive the same input sequence, `[SEP] Text_h [SEP] Text_r [SEP]`; they differ in whether an encoder processes it before decoding.
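
The difference in data flow can be sketched with plain callables standing in for the models. Everything here is hypothetical: the type aliases, the function names, and the toy lookup table that replaces a trained LLM. The sketch shows only where the encoder sits in the pipeline.

```python
from typing import Callable, Dict

SEP = "[SEP]"

# Hypothetical stand-ins for trained models; a real system would call an LLM.
Encoder = Callable[[str], Dict[str, str]]       # input text -> encoded state
StateDecoder = Callable[[Dict[str, str]], str]  # encoded state -> generated text
TextDecoder = Callable[[str], str]              # input text -> generated text


def encoder_decoder_pag(encode: Encoder, decode: StateDecoder, source: str) -> str:
    """Encoder-Decoder flow: the encoder reads the input, the decoder generates."""
    state = encode(source)
    return decode(state)


def decoder_only_pag(decode: TextDecoder, source: str) -> str:
    """Decoder-Only flow: the decoder consumes the input sequence directly."""
    return decode(source)


# Toy "knowledge" used in place of learned parameters (illustrative only).
TOY_KG = {("Paris", "capital of"): "France"}


def _lookup(source: str) -> str:
    """Parse `[SEP] Text_h [SEP] Text_r [SEP]` and return `[SEP] Text_t [SEP]`."""
    parts = [part.strip() for part in source.split(SEP) if part.strip()]
    head, relation = parts[0], parts[1]
    return f"{SEP} {TOY_KG[(head, relation)]} {SEP}"


def toy_encode(source: str) -> Dict[str, str]:
    return {"source": source}


def toy_decode_state(state: Dict[str, str]) -> str:
    return _lookup(state["source"])


def toy_decode_text(source: str) -> str:
    return _lookup(source)


source = f"{SEP} Paris {SEP} capital of {SEP}"
out_enc_dec = encoder_decoder_pag(toy_encode, toy_decode_state, source)
out_dec_only = decoder_only_pag(toy_decode_text, source)
```

Both calls take the same `source` string and return the same `[SEP] Text_t [SEP]` format, which mirrors the diagram: the two designs share inputs and outputs and differ only in the intermediate encoding step.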

### Interpretation

The diagram illustrates two approaches to Prompt Augmented Generation (PaG) for knowledge graph completion, that is, predicting the missing tail of a query triple `(h, r, ?)`. In the Encoder-Decoder PaG, the encoder captures the context of the input sequence and the decoder generates the missing tail entity from that encoded context. The Decoder-Only PaG simplifies the architecture to a single decoder model, which can be more efficient but may require more sophisticated prompting techniques to achieve comparable performance. The choice between the two depends on the requirements of the task, such as the desired accuracy, efficiency, and available computational resources.