## Diagram: Soft Thinking in Large Language Models

### Overview

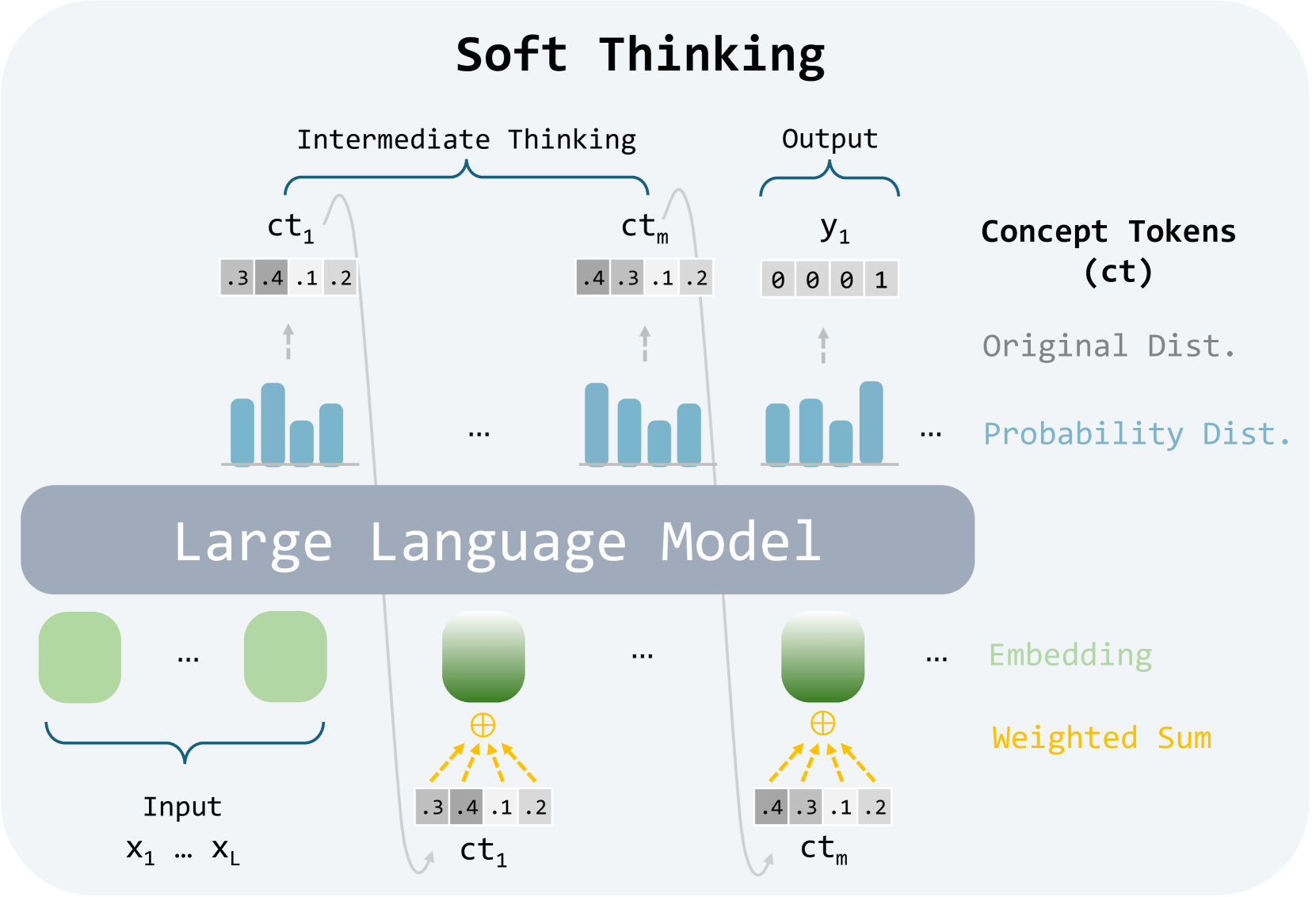

The image illustrates the concept of "Soft Thinking" within a Large Language Model (LLM). It depicts the flow of information from input tokens through intermediate thinking stages to the final output, highlighting the role of concept tokens and probability distributions.

### Components/Axes

* **Title:** Soft Thinking

* **Input:** Represented by a series of green rounded rectangles labeled "Input" and denoted as X1 ... XL.

* **Large Language Model:** A grey rounded rectangle labeled "Large Language Model" represents the core processing unit.

* **Intermediate Thinking:** A bracket above two sets of elements indicates this stage.

* **Output:** A bracket above a set of elements indicates the output stage.

* **Concept Tokens (ct):** Represented as ct1 and ctm, with associated numerical values.

* **Original Dist.:** Label associated with the concept tokens.

* **Probability Dist.:** Label associated with bar graphs.

* **Embedding:** Label associated with the green rounded rectangles.

* **Weighted Sum:** Label associated with the summation operation and arrows.

* **y1:** Represents the output token shown in the output stage.

### Detailed Analysis

* **Input Stage:**

    * A series of green rounded rectangles represent the input tokens (X1 ... XL).

    * The number of input tokens is not explicitly defined but implied to be variable.

* **Large Language Model:**

    * The grey rounded rectangle signifies the LLM's processing.

* **Intermediate Thinking Stage:**

    * Two sets of elements are shown: one labeled ct1 and the other ctm.

    * Each set includes:

        * A green rounded rectangle representing an embedding.

        * A summation symbol (⊕).

        * A grey rectangle containing numerical values: ".3 .4 .1 .2" for ct1 and ".4 .3 .1 .2" for ctm.

        * A bar graph representing a probability distribution.

        * An arrow pointing from the bar graph to the grey rectangle.

    * Dashed yellow arrows connect the embedding to the summation symbol and then to the numerical values in the grey rectangle, indicating a weighted sum operation.

* **Output Stage:**

    * A set of elements labeled y1.

    * Includes:

        * A grey rectangle containing numerical values: "0 0 0 1".

        * A bar graph representing a probability distribution.

        * An arrow pointing from the bar graph to the grey rectangle.

* **Concept Tokens (ct):**

    * Represented by ct1 and ctm.

    * Associated with numerical values that appear to represent probabilities or weights.

* **Probability Distributions:**

    * Represented by bar graphs.

    * The height of each bar corresponds to a probability value.

    * For ct1, the approximate heights of the bars are: 0.3, 0.4, 0.1, 0.2.

    * For ctm, the approximate heights of the bars are: 0.4, 0.3, 0.1, 0.2.

    * For y1, the approximate heights of the bars are: 0, 0, 0, 1.

* **Weighted Sum:**

    * The dashed yellow arrows indicate a weighted sum: the values in each grey rectangle appear to act as weights over token embeddings, and the result is the single embedding drawn for that concept token.
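
The weighted sum described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: it treats the four numbers in each grey rectangle as weights over four token embeddings, and the embedding vectors in `EMBEDDINGS` are made up, since the figure shows no embedding values. The names `EMBEDDINGS` and `weighted_sum` are hypothetical.

```python
# Toy vocabulary of four tokens, each with a hypothetical 3-dimensional embedding.
# These vectors are invented for illustration; they do not come from the figure.
EMBEDDINGS = [
    [1.0, 0.0, 0.0],
    [0.0, 1.0, 0.0],
    [0.0, 0.0, 1.0],
    [1.0, 1.0, 1.0],
]

# Values as printed in the figure's grey rectangles.
CT_1 = [0.3, 0.4, 0.1, 0.2]
CT_M = [0.4, 0.3, 0.1, 0.2]
Y_1 = [0.0, 0.0, 0.0, 1.0]


def weighted_sum(weights, embeddings):
    """Return the vector sum_k weights[k] * embeddings[k]."""
    dim = len(embeddings[0])
    return [
        sum(w * row[j] for w, row in zip(weights, embeddings))
        for j in range(dim)
    ]


if __name__ == "__main__":
    for name, weights in (("ct1", CT_1), ("ctm", CT_M), ("y1", Y_1)):
        print(name, weighted_sum(weights, EMBEDDINGS))
```

With the one-hot weights of y1, the weighted sum reduces to the embedding of the last token alone, whereas the weights of ct1 and ctm blend all four embeddings into one vector.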

### Key Observations

* The diagram illustrates a simplified view of how an LLM processes input tokens to generate an output.

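* The concept of "Soft Thinking" is represented by the intermediate thinking stages, where each concept token (ct1 ... ctm) carries a set of weights rather than a single discrete choice, and those weights are combined with embeddings through a weighted sum.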

* The probability distributions provide a visual representation of the uncertainty associated with each token.

* The output y1 is one-hot ("0 0 0 1"): all of the probability mass sits on the last of the four entries, in contrast to the spread-out values of ct1 and ctm.
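
One way to make the uncertainty in these observations concrete is to compute the Shannon entropy and the most probable entry of each distribution. The sketch below uses the values read from the figure; entropy is a measure chosen here for illustration, not something the diagram itself shows, and the helper names `entropy` and `argmax` are hypothetical.

```python
import math


def entropy(probs):
    """Shannon entropy in bits; zero-probability entries contribute nothing."""
    return sum(p * math.log2(1.0 / p) for p in probs if p > 0)


def argmax(probs):
    """Index of the largest entry (first one wins on ties)."""
    return max(range(len(probs)), key=lambda k: probs[k])


# Values as read from the figure.
DISTRIBUTIONS = {
    "ct1": [0.3, 0.4, 0.1, 0.2],
    "ctm": [0.4, 0.3, 0.1, 0.2],
    "y1": [0.0, 0.0, 0.0, 1.0],
}

if __name__ == "__main__":
    for name, probs in DISTRIBUTIONS.items():
        print(f"{name}: entropy={entropy(probs):.3f} bits, argmax index={argmax(probs)}")
```

The two concept tokens have the same entropy because they contain the same four values in a different order, while the one-hot y1 has zero entropy and its largest entry is at the last index.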

### Interpretation

The diagram suggests that "Soft Thinking" keeps the model's intermediate steps soft. Rather than committing to a single token at each step, each concept token (ct1 through ctm) retains a set of weights over candidates, such as ".3 .4 .1 .2", and a weighted sum over embeddings turns those weights into a single vector. This lets the model carry uncertainty through the intermediate thinking stage instead of discarding it early. Only the output stage is shown as discrete: y1 is "0 0 0 1", a decision in favor of the last of the four candidates.