EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

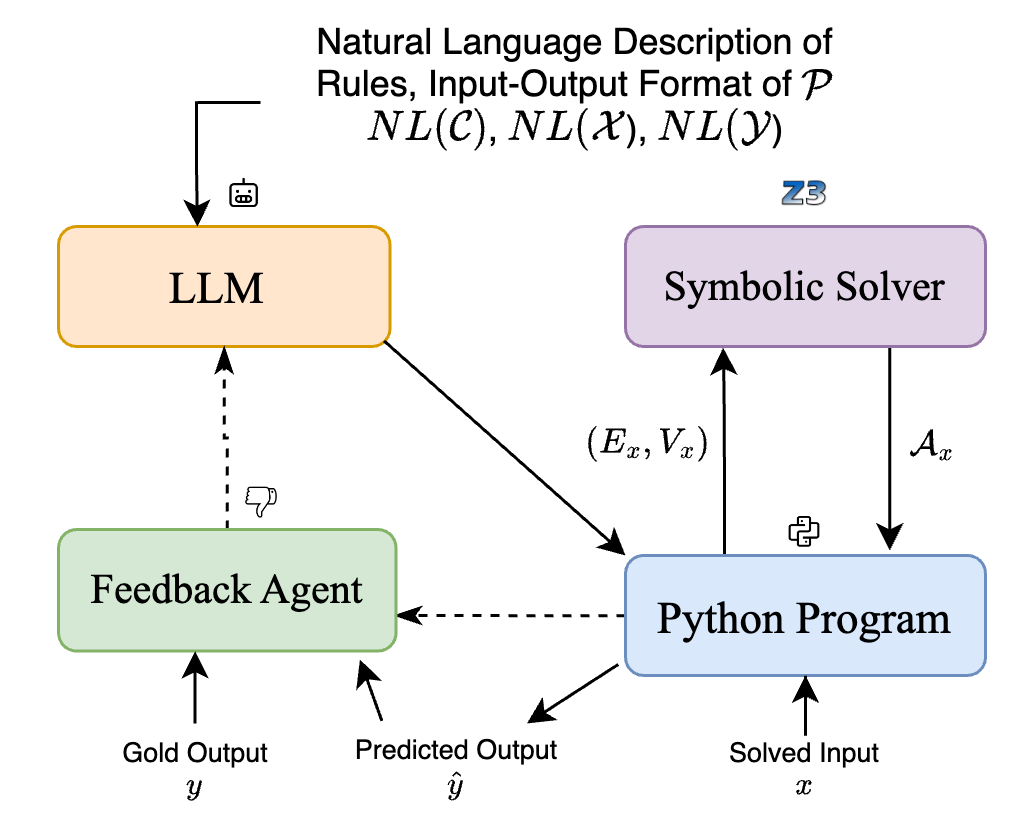

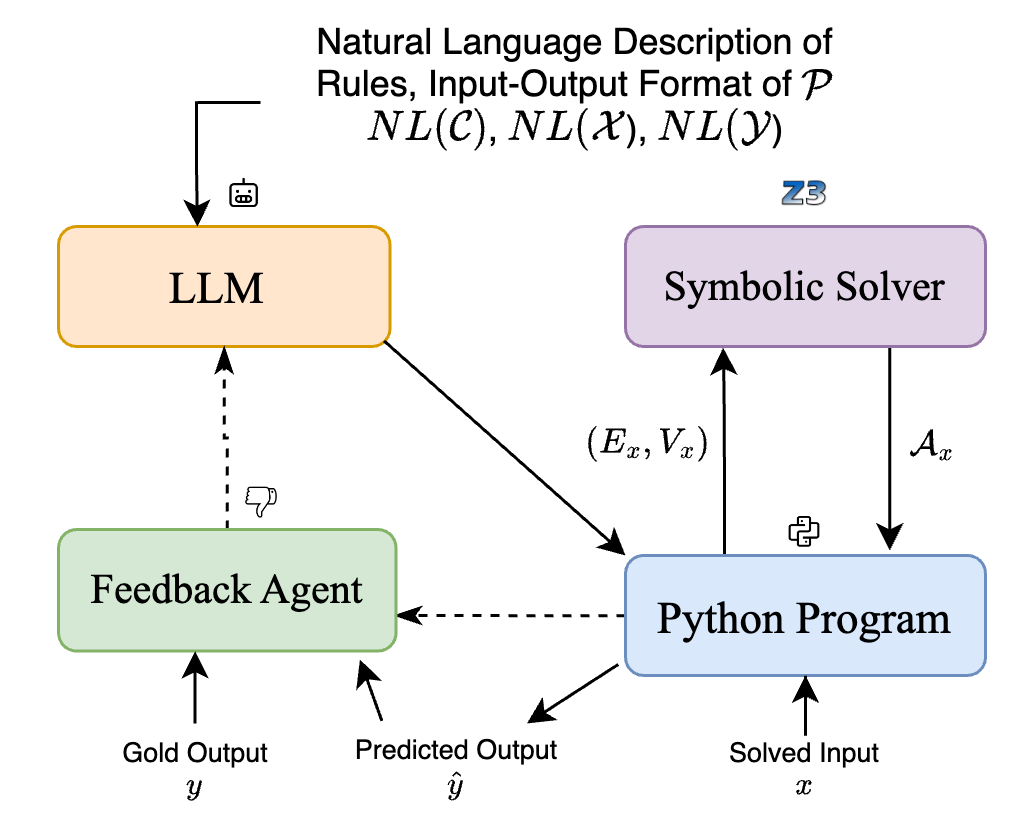

## System Architecture Diagram: Neuro-Symbolic Feedback Loop

### Overview

The image is a technical system architecture diagram illustrating a feedback-driven process that combines a Large Language Model (LLM), a symbolic solver, and a Python program. The system appears designed to translate natural language descriptions into executable code, solve constraints, and iteratively refine outputs using a feedback agent. The diagram uses colored boxes, directional arrows with labels, and icons to denote components and data flow.

### Components

The diagram is composed of four primary rectangular components, each with a distinct color and label, arranged in a 2x2 grid.

**1. Top-Left Component: LLM**

* **Label:** "LLM"

* **Color:** Light orange/peach fill with a darker orange border.

* **Position:** Top-left quadrant.

* **Inputs:**

    * A solid black arrow from the top, originating from the text block "Natural Language Description of Rules, Input-Output Format of 𝒫".

    * A dashed black arrow from below, originating from the "Feedback Agent".

* **Outputs:**

    * A solid black arrow pointing diagonally down-right to the "Python Program".

* **Icon:** A small robot head icon is placed near the top input arrow.

**2. Top-Right Component: Symbolic Solver**

* **Label:** "Symbolic Solver"

* **Color:** Light purple fill with a darker purple border.

* **Position:** Top-right quadrant.

* **Inputs:**

    * A solid black arrow from below, originating from the "Python Program", labeled with the mathematical notation `(Eₓ, Vₓ)`.

* **Outputs:**

    * A solid black arrow pointing down to the "Python Program", labeled with the mathematical notation `𝒜ₓ`.

* **Icon:** The text "Z3" (a well-known SMT solver) is placed above this component.

**3. Bottom-Left Component: Feedback Agent**

* **Label:** "Feedback Agent"

* **Color:** Light green fill with a darker green border.

* **Position:** Bottom-left quadrant.

* **Inputs:**

    * A solid black arrow from below, labeled "Gold Output" with the variable `y`.

    * A solid black arrow from the right, labeled "Predicted Output" with the variable `ŷ` (y-hat).

    * A dashed black arrow from the right, originating from the "Python Program".

* **Outputs:**

    * A dashed black arrow pointing up to the "LLM".

* **Icon:** A thumbs-down icon is placed near the output arrow to the LLM.

**4. Bottom-Right Component: Python Program**

* **Label:** "Python Program"

* **Color:** Light blue fill with a darker blue border.

* **Position:** Bottom-right quadrant.

* **Inputs:**

    * A solid black arrow from the top-left, originating from the "LLM".

    * A solid black arrow from above, originating from the "Symbolic Solver", labeled `𝒜ₓ`.

    * A solid black arrow from below, labeled "Solved Input" with the variable `x`.

* **Outputs:**

    * A solid black arrow pointing up to the "Symbolic Solver", labeled `(Eₓ, Vₓ)`.

    * A solid black arrow pointing down-left, labeled "Predicted Output" with the variable `ŷ`.

    * A dashed black arrow pointing left to the "Feedback Agent".

* **Icon:** A plus sign inside a square (resembling a code or execution icon) is placed near the output arrow to the Symbolic Solver.

**Top Text Block:**

* **Text:** "Natural Language Description of Rules, Input-Output Format of 𝒫"

* **Sub-text:** "NL(𝒞), NL(𝒳), NL(𝒴)"

* **Position:** Centered at the very top of the diagram. This serves as the primary input description for the system.
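The three natural-language inputs can be pictured as a small record that is turned into an LLM prompt. The following is a minimal sketch under that assumption; the names `ProblemSpec` and `build_prompt`, the field names, and the prompt wording are hypothetical and do not appear in the diagram.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ProblemSpec:
    """Natural-language description of a problem P (field names are hypothetical)."""
    rules: str          # NL(C): the rules or constraints
    input_format: str   # NL(X): how inputs are written
    output_format: str  # NL(Y): how outputs are written


def build_prompt(spec: ProblemSpec, feedback: Optional[str] = None) -> str:
    """Assemble the text sent to the LLM; feedback is appended on later rounds."""
    parts = [
        "Rules:\n" + spec.rules,
        "Input format:\n" + spec.input_format,
        "Output format:\n" + spec.output_format,
        "Write a Python program that encodes the rules as solver constraints.",
    ]
    if feedback:
        parts.append("Feedback on the previous attempt:\n" + feedback)
    return "\n\n".join(parts)


# Illustrative problem; the diagram does not name a concrete task.
spec = ProblemSpec(
    rules="Every row and column of the grid contains each digit exactly once.",
    input_format="An n x n grid with 0 marking empty cells.",
    output_format="The completed n x n grid.",
)
first_prompt = build_prompt(spec)
retry_prompt = build_prompt(spec, feedback="Row 2 repeats the digit 3.")
```

Keeping the feedback as an optional suffix means the first round and later rounds share one prompt template, which mirrors the solid arrow (description) and dashed arrow (feedback) both entering the LLM box.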

### Detailed Analysis

The diagram defines a precise data flow and interaction protocol between the four components:

1. **Initialization:** The process begins with a natural language description of rules and formats (`NL(𝒞), NL(𝒳), NL(𝒴)`) for a problem `𝒫`. This description is fed into the **LLM**.

2. **Code Generation & Execution:** The **LLM** reads the description and emits code. The solid arrow into the **Python Program** box suggests that the program itself is the LLM's output. The Python Program then takes a "Solved Input" `x`.

3. **Symbolic Reasoning:** The **Python Program** formulates a constraint problem, represented as `(Eₓ, Vₓ)` (plausibly a set of constraint expressions `Eₓ` over variables `Vₓ`, both specific to the input `x`), and sends it to the **Symbolic Solver** (Z3). The solver returns `𝒜ₓ`, most likely a satisfying assignment to those variables, to the Python Program.

4. **Output & Feedback:** The **Python Program** produces a "Predicted Output" `ŷ`. This prediction, along with the "Gold Output" `y` (the ground truth), is sent to the **Feedback Agent**, which compares `y` and `ŷ`. The diagram also draws an unlabeled dashed arrow from the Python Program to the Feedback Agent. What it carries is not stated; the program text or execution errors are plausible candidates.

5. **Iterative Refinement:** Based on the comparison, the **Feedback Agent** sends feedback (the dashed line marked with the thumbs-down icon) back to the **LLM**, presumably so that the LLM can revise the program on its next attempt. This closes the loop and allows iterative refinement.
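The five steps above can be sketched as a generate, test, and retry loop. This is an illustration under several assumptions rather than the system's actual implementation: the LLM is replaced by a stub, the solver call inside the generated program is omitted, and the `solve_instance` entry point, the feedback wording, and the round limit are all invented for the example.

```python
from typing import Callable, List, Optional, Tuple

Instance = Tuple[object, object]  # (solved input x, gold output y)


def run_program(source: str, x):
    """Execute generated source and call its `solve_instance(x)` entry point.

    The entry-point name is a convention invented for this sketch. Running
    `exec` on model output is unsafe outside a sandbox.
    """
    namespace: dict = {}
    exec(source, namespace)
    return namespace["solve_instance"](x)


def feedback_agent(source: str, instances: List[Instance]) -> Optional[str]:
    """Return None if every prediction matches the gold output, else a message."""
    for x, y in instances:
        try:
            y_hat = run_program(source, x)
        except Exception as exc:  # runtime errors are reported as feedback too
            return f"Input {x!r} raised {type(exc).__name__}: {exc}"
        if y_hat != y:
            return f"Input {x!r}: expected {y!r}, got {y_hat!r}"
    return None


def refine(llm: Callable[[str], str], task: str,
           instances: List[Instance], max_rounds: int = 3) -> Optional[str]:
    """Ask for a program, test it on solved instances, and retry with feedback."""
    prompt = task
    for _ in range(max_rounds):
        source = llm(prompt)
        feedback = feedback_agent(source, instances)
        if feedback is None:
            return source  # all predictions matched the gold outputs
        prompt = f"{task}\n\nPrevious program:\n{source}\n\nFeedback:\n{feedback}"
    return None  # no passing program within the round limit


# Stub LLM: the first answer is wrong, the second is correct.
_answers = iter([
    "def solve_instance(x):\n    return sorted(x, reverse=True)\n",
    "def solve_instance(x):\n    return sorted(x)\n",
])
prompts_seen: List[str] = []


def stub_llm(prompt: str) -> str:
    prompts_seen.append(prompt)
    return next(_answers)


program = refine(stub_llm, "Sort the list in ascending order.",
                 [([3, 1, 2], [1, 2, 3])])
```

In this run the first program fails on the solved instance, the mismatch is folded into the second prompt, and the second program is accepted. A real system would replace `stub_llm` with a model call and run the generated code in isolation.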

### Key Observations

* **Hybrid Architecture:** The system explicitly combines statistical AI (LLM) with symbolic AI (Z3 Solver), mediated by deterministic code (Python Program).

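* **Two Kinds of Flow:** Solid arrows carry the primary execution data flow, including the round trip between the Python Program and the Symbolic Solver. Dashed arrows form a single feedback loop (Python Program to Feedback Agent to LLM) used for correction.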

* **Clear Role Separation:** Each component has a distinct, non-overlapping role: natural language understanding (LLM), formal constraint solving (Symbolic Solver), imperative execution (Python Program), and evaluation (Feedback Agent).

* **Mathematical Formalism:** The use of notations like `NL(𝒞)`, `(Eₓ, Vₓ)`, and `𝒜ₓ` indicates the system is grounded in formal mathematical or logical representations.
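To make the `(Eₓ, Vₓ)` to `𝒜ₓ` exchange concrete, here is a toy sketch that reads `Vₓ` as variables with finite domains and `Eₓ` as Boolean constraints over them. That reading is an assumption, and the brute-force `solve` function is a stand-in written for this example; it is not Z3 and does not use Z3's API.

```python
from itertools import product
from typing import Callable, Dict, Optional, Sequence

Assignment = Dict[str, int]                 # A_x: variable name -> value
Constraint = Callable[[Assignment], bool]   # one element of E_x


def solve(constraints: Sequence[Constraint],
          domains: Dict[str, Sequence[int]]) -> Optional[Assignment]:
    """Brute-force stand-in for the symbolic solver (not Z3).

    `domains` plays the role of V_x (variables with finite ranges) and
    `constraints` the role of E_x. Returns the first satisfying assignment
    A_x, or None if no assignment satisfies every constraint.
    """
    names = list(domains)
    for values in product(*(domains[n] for n in names)):
        candidate = dict(zip(names, values))
        if all(check(candidate) for check in constraints):
            return candidate
    return None


# Toy instance: two digits that sum to 5, with a < b and a at least 2.
V_x = {"a": range(0, 6), "b": range(0, 6)}
E_x = [
    lambda s: s["a"] + s["b"] == 5,
    lambda s: s["a"] < s["b"],
    lambda s: s["a"] >= 2,
]
A_x = solve(E_x, V_x)  # {'a': 2, 'b': 3}
```

An SMT solver such as Z3 plays the same role without enumerating every candidate, and it also handles unbounded integers and richer theories, which is why the enumeration here should be read only as a picture of the interface.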

### Interpretation

This diagram represents a **neuro-symbolic AI system designed for robust, verifiable, and correctable code generation or problem-solving**. The core innovation is the integration loop:

* **The LLM** acts as a "translator" from ambiguous human language to a more structured representation, but its outputs are not trusted directly.

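* **The Symbolic Solver (Z3)** provides a "grounding" in formal logic: any assignment it returns satisfies the encoded constraints exactly, a guarantee that pure LLMs cannot give. The guarantee covers only the constraints as encoded, so a faulty translation by the LLM still yields a wrong answer, which is what the feedback loop is there to catch.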

* **The Python Program** serves as the "orchestrator" and "interface," converting between the different representations (LLM output to solver input, solver output to final prediction).

* **The Feedback Agent** enables **self-correction**. By comparing the system's prediction (`ŷ`) to the known correct answer (`y`), it can identify failures and instruct the LLM to adjust its approach, potentially improving performance over multiple iterations or on similar future tasks.

The system's goal is likely to achieve higher reliability and accuracy than an LLM alone, especially for tasks requiring strict logical consistency, mathematical reasoning, or adherence to formal specifications (e.g., program synthesis, theorem proving, constraint satisfaction problems). Z3 is a general-purpose SMT solver widely used in software verification, program analysis, and scheduling, so the architecture could plausibly target such domains, although the diagram itself does not name an application. The architecture acknowledges the strengths and weaknesses of each paradigm and combines them so that each covers the other's gaps.
