TECHNICAL ASSET FINGERPRINT

98f5d78f850ff12a7e8c49ad

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Question Answering Diagram: Wigan Athletic F.C. Sponsorship

### Overview

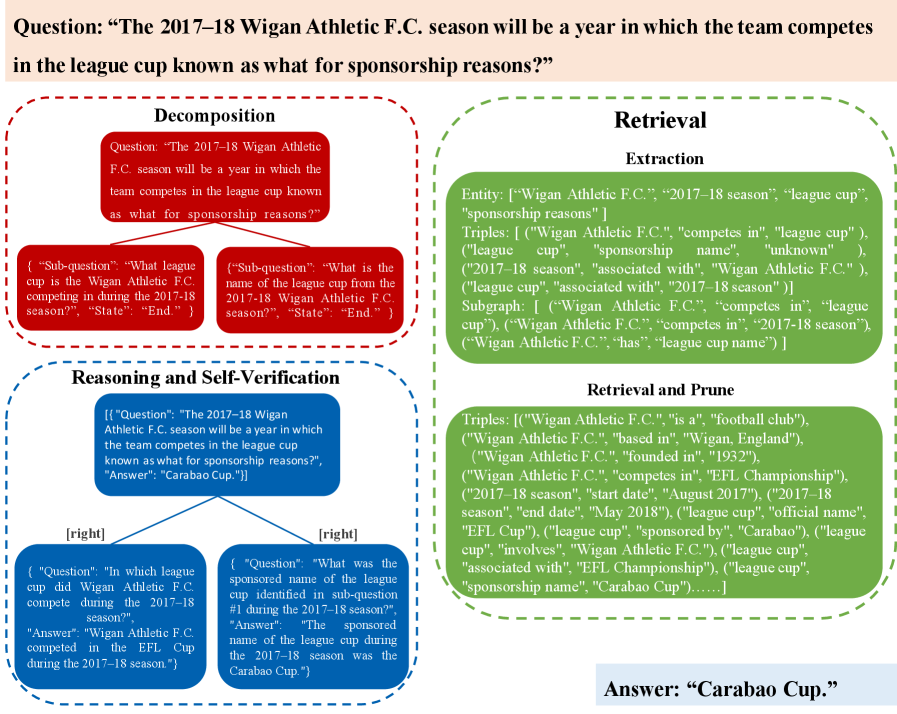

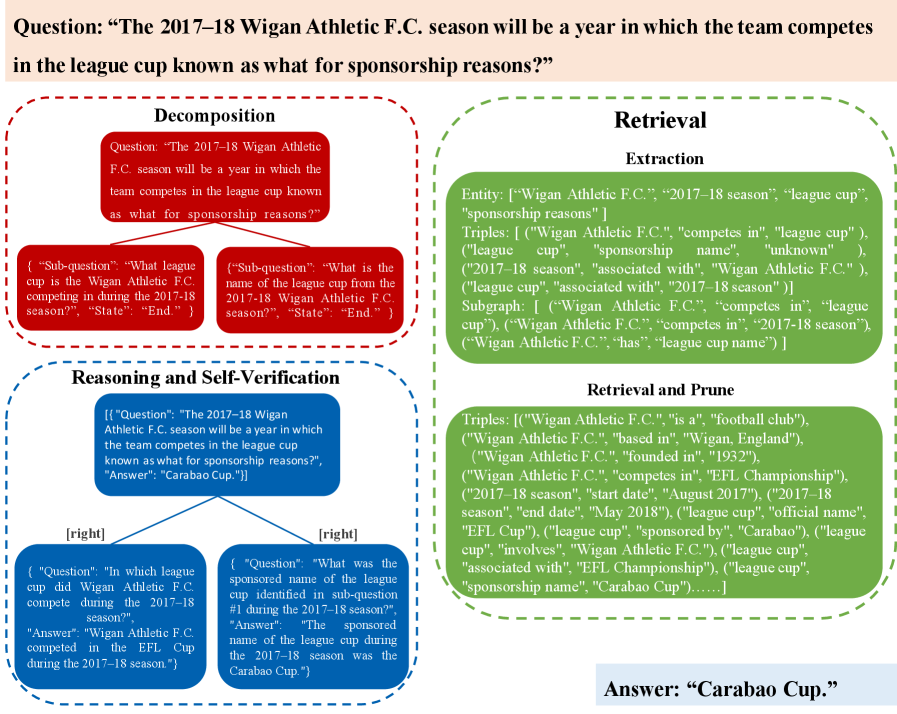

The image presents a diagram illustrating a question-answering process related to the sponsorship of the league cup in which Wigan Athletic F.C. competed during the 2017-18 season. The diagram is divided into four sections: Decomposition, Retrieval, Reasoning and Self-Verification, and Answer. Each section outlines a step in the process of answering the question: "The 2017-18 Wigan Athletic F.C. season will be a year in which the team competes in the league cup known as what for sponsorship reasons?".

### Components/Axes

* **Overall Question (Top):** "The 2017-18 Wigan Athletic F.C. season will be a year in which the team competes in the league cup known as what for sponsorship reasons?"

* **Decomposition (Top-Left, Red Border):**

    * Contains the original question.

    * Two sub-questions are derived from the original question:

        * "What league cup is the Wigan Athletic F.C. competing in during the 2017-18 season?"

        * "What is the name of the league cup from the 2017-18 Wigan Athletic F.C. season?"

    * Both sub-questions have a "State": "End." attribute.

* **Retrieval (Top-Right, Green Border):**

    * **Extraction:**

        * Entity: \["Wigan Athletic F.C.", "2017-18 season", "league cup", "sponsorship reasons"]

        * Triples: \[("Wigan Athletic F.C.", "competes in", "league cup"), ("league cup", "sponsorship name", "unknown"), ("2017-18 season", "associated with", "Wigan Athletic F.C."), ("league cup", "associated with", "2017-18 season")]

        * Subgraph: \[("Wigan Athletic F.C.", "competes in", "league cup"), ("Wigan Athletic F.C.", "competes in", "2017-18 season"), ("Wigan Athletic F.C.", "has", "league cup name")]

    * **Retrieval and Prune:**

        * Triples: \[("Wigan Athletic F.C.", "is a", "football club"), ("Wigan Athletic F.C.", "based in", "Wigan, England"), ("Wigan Athletic F.C.", "founded in", "1932"), ("Wigan Athletic F.C.", "competes in", "EFL Championship"), ("2017-18 season", "start date", "August 2017"), ("2017-18 season", "end date", "May 2018"), ("league cup", "official name", "EFL Cup"), ("league cup", "sponsored by", "Carabao"), ("league cup", "involves", "Wigan Athletic F.C."), ("league cup", "associated with", "EFL Championship"), ("league cup", "sponsorship name", "Carabao Cup")......]

* **Reasoning and Self-Verification (Bottom-Left, Blue Border):**

    * Contains the original question and the final answer: "Carabao Cup."

    * Two branches representing reasoning steps:

        * Left Branch:

            * Question: "In which league cup did Wigan Athletic F.C. compete during the 2017-18 season?"

            * Answer: "Wigan Athletic F.C. competed in the EFL Cup during the 2017-18 season."

        * Right Branch:

            * Question: "What was the sponsored name of the league cup identified in sub-question #1 during the 2017-18 season?"

            * Answer: "The sponsored name of the league cup during the 2017-18 season was the Carabao Cup."

* **Answer (Bottom-Right):** "Carabao Cup."

### Detailed Analysis

* **Decomposition:** The original question is broken down into two sub-questions to simplify the retrieval and reasoning process.

* **Retrieval:** This section extracts relevant information from a knowledge base or database. It includes entities, triples (subject-predicate-object relationships), and a subgraph representing the relationships between entities. The "Retrieval and Prune" section refines the extracted information.

* **Reasoning and Self-Verification:** This section uses the answers to the sub-questions to derive the final answer. The diagram shows a tree-like structure where the original question is at the root, and the sub-questions and their answers form the branches.

* **Answer:** The final answer to the original question is "Carabao Cup."
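
The "Retrieval and Prune" refinement described above can be sketched in a few lines. The diagram does not state the pruning criterion, so the keyword-overlap rule below is purely an assumption for illustration, and `prune` and `RETRIEVED` are hypothetical names. The triples are a subset of those shown in the diagram.

```python
Triple = tuple[str, str, str]

# A subset of the retrieved triples listed in the diagram.
RETRIEVED: list[Triple] = [
    ("Wigan Athletic F.C.", "is a", "football club"),
    ("Wigan Athletic F.C.", "founded in", "1932"),
    ("league cup", "official name", "EFL Cup"),
    ("league cup", "sponsored by", "Carabao"),
    ("league cup", "sponsorship name", "Carabao Cup"),
]


def prune(triples: list[Triple], keywords: set[str]) -> list[Triple]:
    """Keep triples whose predicate shares at least one word with `keywords`.

    Assumption: keyword overlap stands in for whatever relevance
    scoring the real system uses, which the diagram does not specify.
    """
    kept = []
    for subject, predicate, obj in triples:
        if any(word in keywords for word in predicate.lower().split()):
            kept.append((subject, predicate, obj))
    return kept


pruned = prune(RETRIEVED, {"name", "sponsorship", "sponsored"})
for triple in pruned:
    print(triple)
```

With these keywords, only the three naming and sponsorship triples survive; the club's founding year and type are dropped.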

### Key Observations

* The diagram illustrates a structured approach to question answering, involving decomposition, information retrieval, and reasoning.

* The use of triples and subgraphs in the Retrieval section indicates a knowledge graph-based approach.

* The Reasoning and Self-Verification section demonstrates how the answers to sub-questions are combined to arrive at the final answer.

### Interpretation

The diagram demonstrates a question-answering system's process for determining the sponsorship name of a league cup. The system breaks down the complex question into smaller, more manageable sub-questions. It then retrieves relevant information from a knowledge base, prunes irrelevant data, and uses the retrieved information to answer the sub-questions. Finally, it combines the answers to the sub-questions to arrive at the final answer. This approach highlights the importance of structured knowledge representation and reasoning in question-answering systems. The system correctly identifies "Carabao Cup" as the answer, demonstrating its ability to process and understand complex queries related to sports sponsorships.
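
As a rough illustration of the decompose, retrieve, and combine flow described here, the sketch below answers each sub-question with a triple lookup and takes the last sub-answer as the final answer. The `SubQuestion` class, the `predicate` field, and the `lookup` and `answer` helpers are hypothetical; the diagram does not show how sub-questions are mapped to triples.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SubQuestion:
    text: str
    predicate: str  # hypothetical: the predicate assumed to answer this sub-question


# Two of the retrieved triples shown in the diagram.
TRIPLES = [
    ("league cup", "official name", "EFL Cup"),
    ("league cup", "sponsorship name", "Carabao Cup"),
]


def lookup(subject: str, predicate: str) -> Optional[str]:
    """Return the object of the first matching triple, or None."""
    for s, p, o in TRIPLES:
        if s == subject and p == predicate:
            return o
    return None


def answer(sub_questions: list[SubQuestion]) -> tuple[Optional[str], list[Optional[str]]]:
    """Answer each sub-question in order; the last sub-answer is the final answer."""
    sub_answers = [lookup("league cup", sq.predicate) for sq in sub_questions]
    return sub_answers[-1], sub_answers


subs = [
    SubQuestion("In which league cup did Wigan Athletic F.C. compete during the 2017-18 season?", "official name"),
    SubQuestion("What was the sponsored name of the league cup identified in sub-question #1?", "sponsorship name"),
]
final, steps = answer(subs)
print(steps, "->", final)
```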

EXPERT: gemma-3-27b-it-free VERSION 2

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: Question Decomposition for Knowledge Retrieval

### Overview

The image presents a diagram illustrating a question decomposition and retrieval process, likely within a knowledge-based system or a question-answering application. The diagram visually breaks down a complex question into sub-questions, performs information extraction, and then uses reasoning and pruning to arrive at an answer. The overall structure is a flow diagram with four main sections: Decomposition, Retrieval, Reasoning & Self-Verification, and Retrieval & Prune.

### Components/Axes

The diagram is organized into four distinct sections, each visually separated by dashed-line boxes and labeled with a title. Each section contains text blocks representing steps or results of the process. Arrows indicate the flow of information between stages. The diagram also includes a title at the top: "Question: “The 2017-18 Wigan Athletic F.C. season will be a year in which the team competes in the league cup known as what for sponsorship reasons?”". A copyright notice is present at the bottom right: "© Nanjing, CuptAI".

### Detailed Analysis

**1. Decomposition:**

* **Question:** “The 2017-18 Wigan Athletic F.C. season will be a year in which the team competes in the league cup known as what for sponsorship reasons?”

* **Sub-question 1:** “What league cup is the Wigan Athletic F.C. competing in during the 2017-18 season?”, “State: “End””.

* **Sub-question 2:** “What is the name of the league cup from the 2017-18 Wigan Athletic F.C. season?”, “State: “End””.

**2. Retrieval:**

* **Extraction:**

* Entity: ["Wigan Athletic F.C.", "2017-18 season", "league cup", "sponsorship reasons"]

* Triples: ["Wigan Athletic F.C.", "competes in", "league cup"], ["league cup", "sponsorship name", "unknown"], ["2017-18 season", "associated with", "Wigan Athletic F.C."], ["league cup", "associated with", "2017-18 season"]

* Subgraph: ["Wigan Athletic F.C.", "competes in", "league cup"], ["Wigan Athletic F.C.", "competes in", "2017-18 season"], ["Wigan Athletic F.C.", "has", "league cup name"]

**3. Reasoning and Self-Verification:**

* **Question:** “The 2017-18 Wigan Athletic F.C. season will be a year in which the team competes in the league cup known as what for sponsorship reasons?”

* **Answer:** “Carabao Cup.”

* **[right]** (Sub-question): “In which league cup did Wigan Athletic F.C. compete during the 2017-18 season?”. Answer: “Wigan Athletic F.C. competed in the EFL Cup during the 2017-18 season.”

* **[right]** (Question): “What was the sponsored name of the league cup identified in sub-question one during the 2017-18 season?”. Answer: “The league cup during the 2017-18 season was sponsored with the name ‘Carabao Cup’”.

**4. Retrieval and Prune:**

* Triples: ["Wigan Athletic F.C.", "is a", "football club"], ["Wigan Athletic F.C.", "based in", "Wigan, England"], ["Wigan Athletic F.C.", "founded in", "1932"], ["Wigan Athletic F.C.", "competes in", "EFL Championship"], ["2017-18 season", "start date", "August 2017"], ["2017-18 season", "end date", "May 2018"], ["league cup", "official name", "EFL Cup"], ["league cup", "sponsored by", "Carabao"], ["league cup", "involves", "Wigan Athletic F.C."], ["league cup", "associated with", "EFL Championship"], ["league cup", "sponsorship name", "Carabao Cup"].

### Key Observations

The diagram demonstrates a multi-stage process for answering a complex question. The decomposition step breaks down the question into simpler, manageable sub-questions. The retrieval step extracts relevant entities and relationships. The reasoning step uses the extracted information to derive an answer, and the final retrieval and prune step refines the knowledge base. The diagram highlights the importance of both information extraction and logical reasoning in question answering.

### Interpretation

This diagram illustrates a typical workflow in a knowledge-based question answering system. The decomposition phase is crucial for handling complex queries that require multiple pieces of information. The extraction phase relies on identifying key entities and relationships within a knowledge graph or text corpus. The reasoning phase then leverages these extracted elements to infer the answer. The final pruning step ensures the answer is concise and relevant. The use of "Triples" suggests a knowledge graph representation, where facts are stored as subject-predicate-object relationships. The diagram suggests a system capable of not only retrieving facts but also performing logical inference to answer questions that require combining multiple pieces of information. The copyright notice indicates this is likely a research project or a component of a larger system developed by CuptAI. The diagram is a visual representation of a semantic reasoning process.
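
To make the subject-predicate-object representation concrete, here is a minimal sketch of an in-memory triple index, assuming a plain dictionary keyed by `(subject, predicate)`. The `build_index` helper is a hypothetical name, and a real knowledge graph store would look quite different; the triples are taken from the diagram.

```python
from collections import defaultdict

Triple = tuple[str, str, str]


def build_index(triples: list[Triple]) -> dict[tuple[str, str], list[str]]:
    """Group objects by (subject, predicate) so facts can be looked up directly."""
    index: dict[tuple[str, str], list[str]] = defaultdict(list)
    for subject, predicate, obj in triples:
        index[(subject, predicate)].append(obj)
    return dict(index)


triples = [
    ("Wigan Athletic F.C.", "competes in", "EFL Championship"),
    ("league cup", "official name", "EFL Cup"),
    ("league cup", "sponsored by", "Carabao"),
    ("league cup", "sponsorship name", "Carabao Cup"),
]

index = build_index(triples)
official = index.get(("league cup", "official name"), [])
sponsored = index.get(("league cup", "sponsorship name"), [])
print(official, sponsored)
```

Answering the example question then amounts to two lookups on the same subject, one per sub-question.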

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Question Answering Process Flowchart

### Overview

The image is a technical flowchart diagram illustrating a multi-step natural language question answering (QA) process. It visually decomposes a complex question about a football team's league cup name into sub-questions, retrieves relevant information from a knowledge base, performs reasoning and self-verification, and arrives at a final answer. The diagram uses color-coded sections and directional arrows to show the flow of information and logic.

### Components/Axes

The diagram is structured into five main sections, each with a distinct border or fill color and label:

1. **Main Question (Top, Beige Box):** Contains the initial query.

2. **Decomposition (Left, Red Dashed Border):** Breaks the main question into simpler sub-questions.

3. **Retrieval (Right, Green Dashed Border):** Shows the extraction and pruning of relevant knowledge graph information.

4. **Reasoning and Self-Verification (Bottom Left, Blue Dashed Border):** Demonstrates the logical steps to answer the sub-questions and verify the main answer.

5. **Answer (Bottom Right, Beige Box):** Presents the final output.

### Detailed Analysis / Content Details

**1. Main Question (Top Center):**

* **Text:** `Question: "The 2017–18 Wigan Athletic F.C. season will be a year in which the team competes in the league cup known as what for sponsorship reasons?"`

**2. Decomposition Section (Left):**

* **Central Red Box:** Contains the original question.

* **Two Sub-question Red Boxes (below central box):**

* Left Sub-question: `{"Sub-question": "What league cup is the Wigan Athletic F.C. competing in during the 2017-18 season?", "State": "End."}`

* Right Sub-question: `{"Sub-question": "What is the name of the league cup from the 2017-18 Wigan Athletic F.C. season?", "State": "End."}`

**3. Retrieval Section (Right):**

* **Extraction Sub-section (Top Green Box):**

* **Entity:** `["Wigan Athletic F.C.", "2017–18 season", "league cup", "sponsorship reasons"]`

* **Triples:** `( "Wigan Athletic F.C.", "competes in", "league cup" ), ( "league cup", "sponsorship name", "unknown" ), ( "2017–18 season", "associated with", "Wigan Athletic F.C." ), ( "league cup", "associated with", "2017–18 season" )`

* **Subgraph:** `[ ("Wigan Athletic F.C.", "competes in", "league cup"), ("Wigan Athletic F.C.", "competes in", "2017-18 season"), ("Wigan Athletic F.C.", "has", "league cup name") ]`

* **Retrieval and Prune Sub-section (Bottom Green Box):**

* **Triples:** `( "Wigan Athletic F.C.", "is a", "football club" ), ( "Wigan Athletic F.C.", "based in", "Wigan, England" ), ( "Wigan Athletic F.C.", "founded in", "1932" ), ( "Wigan Athletic F.C.", "competes in", "EFL Championship" ), ( "2017–18 season", "start date", "August 2017" ), ( "2017–18 season", "end date", "May 2018" ), ( "league cup", "official name", "EFL Cup" ), ( "league cup", "sponsored by", "Carabao" ), ( "league cup", "involves", "Wigan Athletic F.C." ), ( "league cup", "associated with", "EFL Championship" ), ( "league cup", "sponsorship name", "Carabao Cup" )......]`

**4. Reasoning and Self-Verification Section (Bottom Left):**

* **Top Blue Box (Main Reasoning):**

* `({"Question": "The 2017–18 Wigan Athletic F.C. season will be a year in which the team competes in the league cup known as what for sponsorship reasons?", "Answer": "Carabao Cup."})`

* **Two Supporting Blue Boxes (below, connected by lines labeled "[right]"):**

* Left Box: `{ "Question": "In which league cup did Wigan Athletic F.C. compete during the 2017-18 season?", "Answer": "Wigan Athletic F.C. competed in the EFL Cup during the 2017-18 season." }`

* Right Box: `{ "Question": "What was the sponsored name of the league cup identified in sub-question #1 during the 2017-18 season?", "Answer": "The sponsored name of the league cup during the 2017-18 season was the Carabao Cup." }`

**5. Final Answer (Bottom Right):**

* **Text:** `Answer: "Carabao Cup."`

### Key Observations

* **Process Flow:** The diagram clearly shows a sequential pipeline: Question → Decomposition → Retrieval → Reasoning → Answer.

* **Knowledge Representation:** The "Retrieval" section explicitly uses a knowledge graph format with entities and (subject, predicate, object) triples.

* **Self-Verification:** The reasoning step includes verifying the answer against the decomposed sub-questions, as indicated by the "[right]" labels on the connecting lines.

* **Information Hierarchy:** The initial retrieval ("Extraction") yields incomplete information (e.g., `"sponsorship name", "unknown"`), while the pruned retrieval contains the definitive fact (`"sponsorship name", "Carabao Cup"`).
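
The move from an `"unknown"` placeholder to a definitive fact can be illustrated as follows. This is a sketch under the assumption that extracted and retrieved triples are matched on identical subject and predicate strings; `resolve_unknowns` is a hypothetical helper, not something named in the diagram.

```python
Triple = tuple[str, str, str]


def resolve_unknowns(extracted: list[Triple], retrieved: list[Triple]) -> list[Triple]:
    """Replace "unknown" objects with the retrieved object for the same (subject, predicate).

    Triples without a retrieved match keep their placeholder.
    """
    facts = {(s, p): o for s, p, o in retrieved}
    resolved = []
    for s, p, o in extracted:
        if o == "unknown":
            o = facts.get((s, p), o)
        resolved.append((s, p, o))
    return resolved


extracted = [
    ("Wigan Athletic F.C.", "competes in", "league cup"),
    ("league cup", "sponsorship name", "unknown"),
]
retrieved = [
    ("league cup", "official name", "EFL Cup"),
    ("league cup", "sponsorship name", "Carabao Cup"),
]

resolved = resolve_unknowns(extracted, retrieved)
print(resolved)
```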

### Interpretation

This diagram is a schematic representation of a neuro-symbolic or hybrid AI question-answering system. It demonstrates how a complex, multi-fact question is handled not as a single black-box operation, but through a structured, interpretable process.

* **What it demonstrates:** The system first parses the question into atomic, answerable parts (Decomposition). It then queries a structured knowledge base (like a knowledge graph) to gather relevant facts (Retrieval). The "Retrieval and Prune" step suggests a filtering or ranking mechanism to select the most pertinent information. Finally, it uses logical reasoning over the retrieved facts to answer the sub-questions and synthesize the final answer, with a built-in verification step.

* **Relationships:** The Decomposition guides the Retrieval. The outputs of Retrieval (the triples) are the raw materials for the Reasoning module. The Reasoning module's success is validated by its ability to correctly answer the sub-questions derived from the original Decomposition.

* **Notable Pattern:** The process explicitly handles uncertainty. The initial extraction identifies "sponsorship name" as "unknown," but the subsequent retrieval and pruning step resolves this to "Carabao Cup," showing how the system iteratively refines its information gathering.

* **Underlying Mechanism:** The use of triples and subgraphs points to a symbolic AI component (knowledge graphs, logical inference) working in tandem with a natural language understanding component (for parsing and question decomposition). This is a classic architecture for achieving high accuracy and explainability in factual QA tasks.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Flowchart: Question Answering Process for Sponsorship Identification

### Overview

The flowchart illustrates a structured process to answer the question: *"The 2017–18 Wigan Athletic F.C. season will be a year in which the team competes in the league cup known as what for sponsorship reasons?"* It breaks the problem into decomposition, retrieval, and reasoning/self-verification steps, using color-coded blocks (red, green, blue) to represent each phase.

### Components/Axes

- **Decomposition (Red Block)**:

- **Sub-question 1**: *"What league cup is the Wigan Athletic F.C. competing in during the 2017-18 season?"*

- **Sub-question 2**: *"What is the name of the league cup from the 2017-18 Wigan Athletic F.C. season?"*

- **State**: *"End."*

- **Retrieval (Green Block)**:

- **Entity Extraction**:

- `"Wigan Athletic F.C."`, `"2017–18 season"`, `"league cup"`, `"sponsorship reasons"`.

- **Triple Extraction**:

- Triples like `("Wigan Athletic F.C.", "competes in", "league cup")`, `("2017–18 season", "associated with", "Wigan Athletic F.C.")`, and `("league cup", "sponsorship name", "unknown")`.

- **Subgraph**:

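- Relationships such as `("Wigan Athletic F.C.", "competes in", "league cup")` and `("Wigan Athletic F.C.", "has", "league cup name")`. The resolved triple `("league cup", "sponsorship name", "Carabao Cup")` appears among the Retrieval and Prune triples.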

- **Reasoning and Self-Verification (Blue Block)**:

- **Verification Steps**:

1. *"In which league cup did Wigan Athletic F.C. compete during the 2017–18 season?"* → Answer: `"EFL Cup"`.

2. *"What was the sponsored name of the league cup identified in sub-question #1 during the 2017–18 season?"* → Answer: `"Carabao Cup"`.

### Content Details

- **Decomposition**:

- The question is split into two sub-questions to isolate the league cup name and its sponsorship name.

- **Retrieval**:

- Entities and triples are extracted from a knowledge graph, linking the team, season, league cup, and sponsorship.

- The retrieved triples identify the league cup as the `"EFL Cup"` (official name), with `"Carabao Cup"` as its sponsorship name.

- **Reasoning**:

- Self-verification cross-checks answers using retrieved data, ensuring consistency.

### Key Observations

- The flowchart emphasizes **modular problem-solving**, breaking the question into smaller, verifiable components.

- The answer `"Carabao Cup"` is derived through iterative validation of retrieved triples.

- The `"sponsorship name"` triple initially marked as `"unknown"` is resolved to `"Carabao Cup"` in the Retrieval and Prune step.

### Interpretation

The flowchart demonstrates a **knowledge graph-based QA system** where:

1. **Decomposition** reduces ambiguity by isolating sub-problems.

2. **Retrieval** leverages structured data (entities/triples) to populate knowledge gaps.

3. **Self-verification** ensures answers align with retrieved facts, avoiding hallucinations.

The process highlights how sponsorship names (e.g., `"Carabao Cup"`) are dynamically linked to league cups via temporal and contextual relationships in the knowledge graph. The use of color coding aids in tracing the flow of logic from question decomposition to final answer validation.
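
One simple way to picture the self-verification step is a check that the candidate answer appears as the object of some retrieved triple. The diagram does not describe how verification is actually performed, so the exact-match rule and the `is_supported` helper below are assumptions made for illustration.

```python
Triple = tuple[str, str, str]

# Two of the retrieved triples shown in the diagram.
RETRIEVED: list[Triple] = [
    ("league cup", "official name", "EFL Cup"),
    ("league cup", "sponsorship name", "Carabao Cup"),
]


def is_supported(answer: str, triples: list[Triple]) -> bool:
    """Return True if the answer matches the object of any triple.

    Matching ignores case, surrounding whitespace, and a trailing period.
    """
    normalized = answer.strip().rstrip(".").lower()
    return any(normalized == obj.lower() for _, _, obj in triples)


print(is_supported("Carabao Cup.", RETRIEVED))
print(is_supported("Checkatrade Trophy", RETRIEVED))
```

An answer with no supporting triple would be flagged rather than returned, which is one way such a system could guard against unsupported output.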
