
## Chart: Gradient Size vs. Epochs with Variance

### Overview

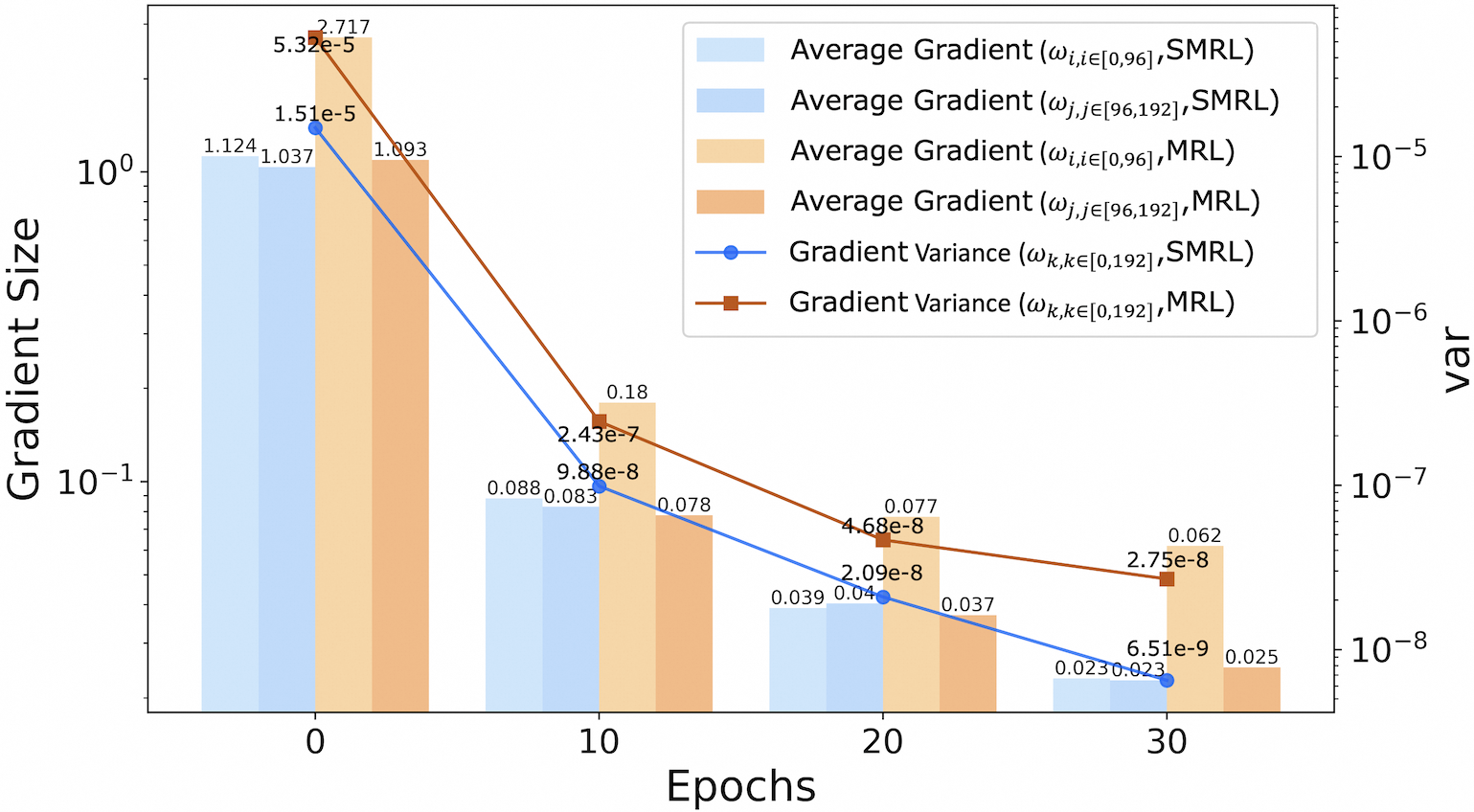

The image presents a chart of gradient size versus training epochs. It shows four lines for the average gradient of two parameter groups under two settings (SMRL and MRL), plus two lines for the gradient variance over the combined parameter range under each setting. The y-axis (gradient size) uses a logarithmic scale and the x-axis (epochs) a linear scale. A background heatmap also encodes the variance.

### Components/Axes

* **X-axis:** Epochs (linear scale, ranging from approximately -2 to 35)

* **Y-axis:** Gradient Size (logarithmic scale, ranging from approximately 1e-8 to 1e+0)

* **Legend:**

  * Average Gradient (ω<sub>i,j∈[0, 96]</sub>, SMRL) - Light Blue

  * Average Gradient (ω<sub>i,j∈[96, 192]</sub>, SMRL) - Orange

  * Average Gradient (ω<sub>i,j∈[0, 96]</sub>, MRL) - Yellow

  * Average Gradient (ω<sub>i,j∈[96, 192]</sub>, MRL) - Red

  * Gradient Variance (ω<sub>k, k∈[0, 192]</sub>, SMRL) - Blue

  * Gradient Variance (ω<sub>k, k∈[0, 192]</sub>, MRL) - Brown

* **Heatmap:** Background color representing variance, with a colorbar on the right indicating the variance scale (ranging from approximately 1e-8 to 1e-5).
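The chart does not say how the plotted quantities are computed. One plausible reading, assumed here purely for illustration, is that ω is a weight matrix whose columns index embedding dimensions, the "average gradient" for a range is the mean absolute gradient over the columns in that range, and the "gradient variance" is the population variance of the raw gradients over all columns in [0, 192]. The closed ranges [0, 96] and [96, 192] are treated as half-open slices of 96 columns each. The function names and the toy gradient matrix are hypothetical.

```python
from statistics import fmean, pvariance

def average_gradient(grad, lo, hi):
    """Mean absolute gradient over columns lo..hi-1 of a 2-D gradient (list of rows).
    Assumed definition: 'average gradient' = mean |g| over that column slice."""
    values = [abs(g) for row in grad for g in row[lo:hi]]
    return fmean(values)

def gradient_variance(grad, lo, hi):
    """Population variance of the raw gradients over columns lo..hi-1 (assumed definition)."""
    values = [g for row in grad for g in row[lo:hi]]
    return pvariance(values)

# Hypothetical 2 x 192 gradient matrix: the first 96 columns get large
# gradients, the last 96 get small ones. Not data from the chart.
grad = [[0.5 if j < 96 else 0.01 for j in range(192)] for _ in range(2)]

print(average_gradient(grad, 0, 96))     # group "[0, 96]"
print(average_gradient(grad, 96, 192))   # group "[96, 192]"
print(gradient_variance(grad, 0, 192))   # combined range "[0, 192]"
```

Averaging absolute values keeps positive and negative gradients from cancelling, which is one reason a mean |g| is a common summary; if the original work used a norm or a signed mean instead, the plotted values would differ.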

### Detailed Analysis

The chart displays six series: four average-gradient curves and two gradient-variance curves.

**Average Gradient Lines:**

* **Light Blue (ω<sub>i,j∈[0, 96]</sub>, SMRL):** Starts at approximately 1.124 at the first plotted epoch and decreases rapidly to approximately 0.025 at the last.

* **Orange (ω<sub>i,j∈[96, 192]</sub>, SMRL):** Starts at approximately 1.993 and decreases to approximately 0.023 at the last plotted epoch.

* **Yellow (ω<sub>i,j∈[0, 96]</sub>, MRL):** Starts at approximately 5.32e-5 and decreases to approximately 6.51e-9 at the last plotted epoch.

* **Red (ω<sub>i,j∈[96, 192]</sub>, MRL):** Starts at approximately 1.51e-5 and decreases to approximately 6.15e-9 at the last plotted epoch.

**Gradient Variance Lines:**

* **Blue (ω<sub>k, k∈[0, 192]</sub>, SMRL):** Starts at approximately 2.717 at the first plotted epoch and decreases to approximately 0.062 at the last.

* **Brown (ω<sub>k, k∈[0, 192]</sub>, MRL):** Starts at approximately 1.037 and decreases to approximately 0.025 at the last plotted epoch.

**Specific Data Points (Approximate):**

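| Epoch | Light Blue (avg, [0, 96], SMRL) | Orange (avg, [96, 192], SMRL) | Yellow (avg, [0, 96], MRL) | Red (avg, [96, 192], MRL) | Blue (var, [0, 192], SMRL) | Brown (var, [0, 192], MRL) |
|---|---|---|---|---|---|---|
| 0 | ~1.124 | ~1.993 | ~5.32e-5 | ~1.51e-5 | ~2.717 | ~1.037 |
| 10 | ~0.088 | ~0.18 | ~2.43e-7 | ~0.078 | ~0.077 | ~0.039 |
| 20 | ~0.039 | ~0.04 | ~2.09e-8 | ~0.037 | ~4.68e-8 | ~0.03 |
| 30 | ~0.025 | ~0.023 | ~6.51e-9 | ~6.15e-9 | ~0.062 | ~0.025 |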

### Key Observations

* All lines exhibit a decreasing trend, indicating that both average gradient size and gradient variance decrease as the number of epochs increases.

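* The SMRL average-gradient lines (light blue, orange) and the two variance lines (blue, brown) start and end at similar magnitudes, roughly between 0.02 and 3, whereas the MRL average-gradient lines (yellow, red) start and end several orders of magnitude lower, roughly between 1e-9 and 1e-4.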

* The SMRL average-gradient lines (light blue, orange) start about four to five orders of magnitude higher than the corresponding MRL lines (yellow, red): roughly 1.1 to 2.0 versus roughly 1.5e-5 to 5.3e-5.

* The heatmap shows a gradient of variance, with higher variance values (warmer colors) at the beginning of training and lower variance values (cooler colors) as training progresses.

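* The variance lines fall by roughly a factor of 40 over training. That is about the same as the light-blue SMRL line (about 45x), less than the orange SMRL line (about 87x), and far less than the MRL average-gradient lines (roughly 2,500x to 8,000x).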
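The decrease factors behind these observations can be checked with a little arithmetic. The sketch below uses only the approximate start and end readings listed for each series in the Detailed Analysis. These values were eyeballed from the chart and were not logged during training. The dictionary keys and the helper `fold_decrease` are illustrative names.

```python
import math

# Approximate (start, end) readings transcribed from the series descriptions.
# These are chart readings, not logged training data.
readings = {
    "avg [0, 96], SMRL": (1.124, 0.025),
    "avg [96, 192], SMRL": (1.993, 0.023),
    "avg [0, 96], MRL": (5.32e-5, 6.51e-9),
    "avg [96, 192], MRL": (1.51e-5, 6.15e-9),
    "var [0, 192], SMRL": (2.717, 0.062),
    "var [0, 192], MRL": (1.037, 0.025),
}

def fold_decrease(start, end):
    """How many times smaller the end value is than the start value."""
    return start / end

for name, (start, end) in readings.items():
    fold = fold_decrease(start, end)
    # log10 of the fold = number of decades the curve drops on the log-scaled y-axis
    print(f"{name}: {fold:.0f}x smaller, {math.log10(fold):.1f} decades")

# Gap between SMRL and MRL average gradients for the [0, 96] group, in decades
start_gap = math.log10(readings["avg [0, 96], SMRL"][0] / readings["avg [0, 96], MRL"][0])
end_gap = math.log10(readings["avg [0, 96], SMRL"][1] / readings["avg [0, 96], MRL"][1])
print(f"SMRL/MRL gap: {start_gap:.1f} decades at start, {end_gap:.1f} at end")
```

Working in decades (log10 of the ratio) matches how the curves appear on the logarithmic y-axis. Under these readings, the SMRL/MRL gap for the [0, 96] group widens from about 4.3 to about 6.6 decades over training.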

### Interpretation

The chart shows behavior typical of gradient-based training. As epochs increase, gradient magnitudes shrink, which is consistent with the model approaching a minimum (or at least a flat region) of the loss function. The falling gradient variance suggests that updates become more consistent, meaning training becomes more stable.
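A toy problem shows why shrinking gradients accompany convergence. The sketch below runs plain gradient descent on the one-dimensional quadratic f(w) = 0.5·a·w², chosen only for illustration. It is not the model or optimizer behind the chart. Each step multiplies w, and therefore the gradient a·w, by (1 − lr·a). For 0 < lr·a < 2, the gradient magnitude therefore decays geometrically, which appears as a straight downward line on a logarithmic y-axis.

```python
import math

def gradient_descent_quadratic(a, w0, lr, steps):
    """Plain gradient descent on f(w) = 0.5 * a * w**2.
    Returns |f'(w)| recorded before each update."""
    w = w0
    grad_sizes = []
    for _ in range(steps):
        grad = a * w                # f'(w) = a * w
        grad_sizes.append(abs(grad))
        w -= lr * grad              # w <- (1 - lr * a) * w
    return grad_sizes

sizes = gradient_descent_quadratic(a=2.0, w0=1.0, lr=0.1, steps=6)

# Each gradient is (1 - 0.1 * 2.0) = 0.8 times the previous one,
# so log10(size) drops by the constant amount log10(0.8) per step.
for step, g in enumerate(sizes):
    print(step, round(g, 4), round(math.log10(g), 3))
```

Real training curves usually bend rather than fall in a straight line, as the chart's early steep drop and later flattening show. In those curves, the per-epoch shrink factor changes over time instead of staying fixed as it does on this quadratic.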

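SMRL and MRL presumably denote two different training methods or configurations applied to the same parameter groups. Under SMRL, the average gradients for both dimension ranges are several orders of magnitude larger than under MRL throughout training. For a comparable learning rate and optimizer, this implies much larger parameter updates. The chart alone does not show whether this leads to faster convergence or better final performance.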

The background heatmap encodes the same variance information as color, warmer early in training and cooler later, offering an at-a-glance check of training stability without reading individual curves.
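A variance like the one in the blue and brown curves and the heatmap must be accumulated from many gradient values. One standard single-pass method is Welford's online algorithm, sketched below. Nothing in the chart indicates how its variance was actually computed. The class name and the sample values are hypothetical.

```python
from statistics import pvariance

class RunningVariance:
    """Welford's online algorithm: running mean and population variance of a stream."""

    def __init__(self):
        self.n = 0
        self.mean = 0.0
        self.m2 = 0.0  # sum of squared deviations from the running mean

    def update(self, x):
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self.m2 += delta * (x - self.mean)  # uses the updated mean

    def variance(self):
        return self.m2 / self.n if self.n else 0.0

# Hypothetical gradient values seen during one epoch (not from the chart).
samples = [0.12, -0.05, 0.30, 0.08, -0.10]
rv = RunningVariance()
for g in samples:
    rv.update(g)

# The streaming result matches the two-pass population variance.
print(rv.variance(), pvariance(samples))
```

The streaming form avoids storing every gradient value, which matters when variance is tracked per epoch over many parameters.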

Overall, training appears to progress as expected. The gap between SMRL and MRL warrants further investigation to understand how each configuration affects final model performance. Because the y-axis is logarithmic, the steep early drop followed by flattening means the gradients shrink by a large factor in the first epochs and by progressively smaller factors later, as the model approaches convergence.