## Line Chart: Model Accuracy on Math Problems

### Overview

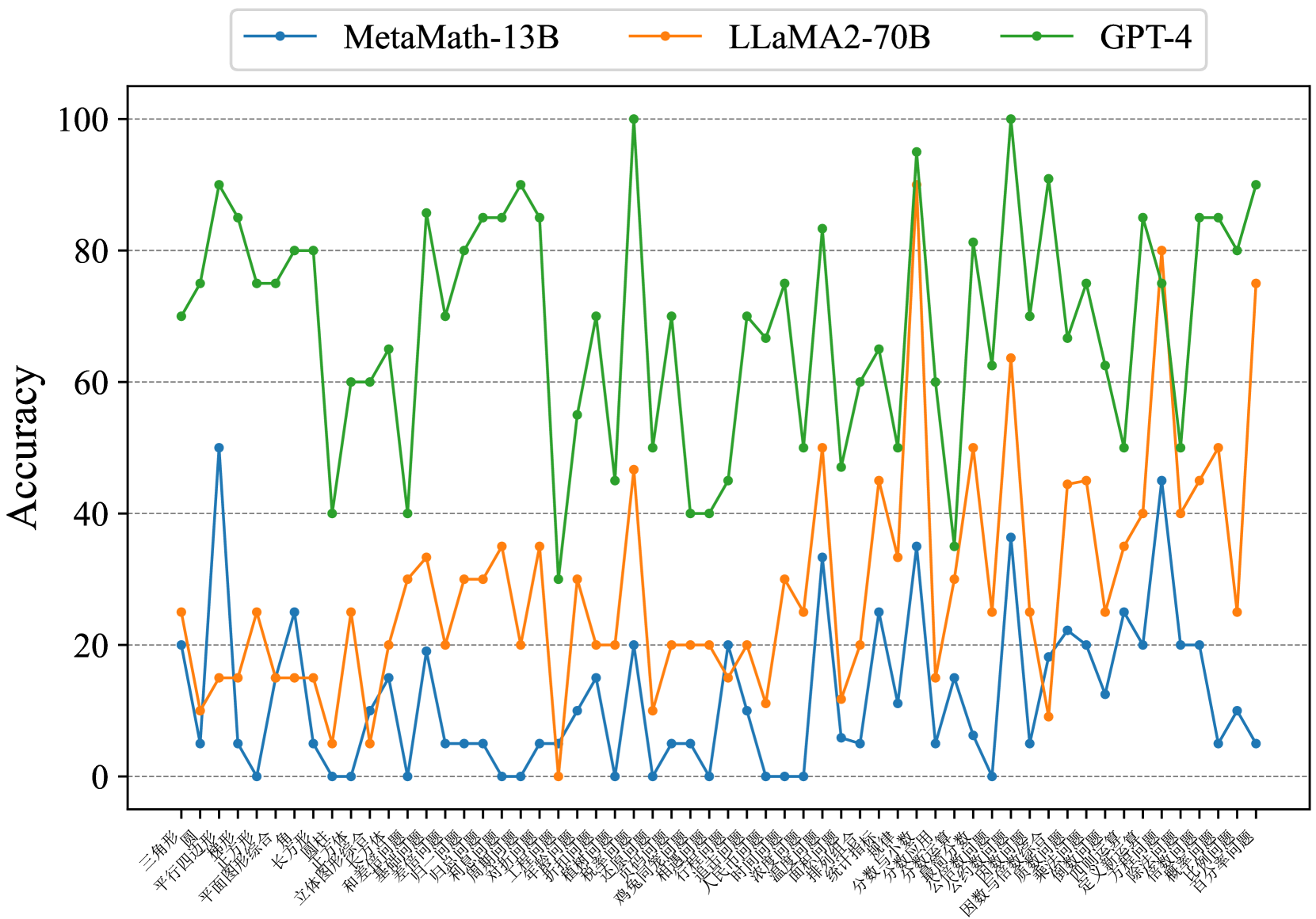

The image is a line chart comparing the accuracy of three language models (MetaMath-13B, LLaMA2-70B, and GPT-4) across 29 categories of math problems. The x-axis lists the problem categories, and the y-axis shows accuracy on a scale from 0 to 100.

### Components/Axes

* **Title:** None shown on the chart; inferred as "Model Accuracy on Math Problems"

* **X-axis:** Math Problem Types (in Chinese)

* **Y-axis:** Accuracy (ranging from 0 to 100, with gridlines at intervals of 20)

* **Legend:** Located at the top of the chart.
  * MetaMath-13B (blue)
  * LLaMA2-70B (orange)
  * GPT-4 (green)

### Detailed Analysis

**X-Axis Labels (Math Problem Types - Chinese with English Translation):**

The x-axis labels are in Chinese. Here are the labels and their approximate English translations:

1. 三角形 (sān jiǎo xíng): Triangle

2. 平行四边形 (píng xíng sì biān xíng): Parallelogram

3. 平面图形综合 (píng miàn tú xíng zōng hé): Plane figure synthesis/combination

4. 梯形 (tī xíng): Trapezoid

5. 长方形 (cháng fāng xíng): Rectangle

6. 正方形 (zhèng fāng xíng): Square

7. 立体图形 (lì tǐ tú xíng): Solid figure

8. 和差倍问题 (hé chā bèi wèn tí): Sum-difference-multiple problem

9. 基础几何问题 (jī chǔ jǐ hé wèn tí): Basic geometry problem

10. 归一问题 (guī yī wèn tí): Reduction to one problem (unitary-method problems: first find the value of a single unit)

11. 归总问题 (guī zǒng wèn tí): Summation problem (first find the total quantity, then redistribute it)

12. 周长问题 (zhōu cháng wèn tí): Perimeter problem

13. 面积问题 (miàn jī wèn tí): Area problem

14. 工程问题 (gōng chéng wèn tí): Work problem

15. 折扣问题 (zhé kòu wèn tí): Discount problem

16. 植树问题 (zhí shù wèn tí): Tree planting problem

17. 还原问题 (huán yuán wèn tí): Restoration problem (working backward from a final result to the starting value)

18. 盈亏问题 (yíng kuī wèn tí): Profit and loss problem (in Chinese primary-school math, a surplus-and-shortage distribution problem)

19. 鸡兔同笼 (jī tù tóng lóng): Chicken and rabbit in the same cage (a classic puzzle: find the numbers of chickens and rabbits from their total heads and legs)

20. 相遇问题 (xiāng yù wèn tí): Meeting problem

21. 行程问题 (xíng chéng wèn tí): Travel problem

22. 浓度问题 (nóng dù wèn tí): Concentration problem

23. 简单平均数 (jiǎn dān píng jūn shù): Simple average

24. 定义新运算 (dìng yì xīn yùn suàn): Define new operation (problems that define a custom operator and ask for its value)

25. 整数计算 (zhěng shù jì suàn): Integer calculation

26. 除法计算 (chú fǎ jì suàn): Division calculation

27. 比例问题 (bǐ lì wèn tí): Ratio problem

28. 概率问题 (gài lǜ wèn tí): Probability problem

29. 百分数问题 (bǎi fēn shù wèn tí): Percentage problem

**Data Series Analysis:**

* **MetaMath-13B (blue):** Generally low accuracy across all problem types, mostly below 40. Its one clear spike is at "Plane figure synthesis/combination," at approximately 50. Many data points are at or near 0.
  * Triangle: ~10
  * Parallelogram: ~5
  * Plane figure synthesis/combination: ~50
  * Trapezoid: ~25
  * Rectangle: ~25
  * Square: ~20
  * Solid figure: ~5
  * Sum-difference-multiple problem: ~0
  * Basic geometry problem: ~20
  * Reduction to one problem: ~5
  * Summation problem: ~5
  * Perimeter problem: ~5
  * Area problem: ~0
  * Work problem: ~0
  * Discount problem: ~20
  * Tree planting problem: ~20
  * Restoration problem: ~20
  * Profit and loss problem: ~5
  * Chicken and rabbit in the same cage: ~35
  * Meeting problem: ~20
  * Travel problem: ~5
  * Concentration problem: ~35
  * Simple average: ~20
  * Define new operation: ~10
  * Integer calculation: ~40
  * Division calculation: ~20
  * Ratio problem: ~20
  * Probability problem: ~20
  * Percentage problem: ~10

* **LLaMA2-70B (orange):** Generally higher accuracy than MetaMath-13B, though not in every category (MetaMath-13B is higher on Plane figure synthesis/combination, Rectangle, Chicken and rabbit in the same cage, and Integer calculation), and lower than GPT-4 in every category. Accuracy fluctuates between approximately 10 and 50.
  * Triangle: ~10
  * Parallelogram: ~10
  * Plane figure synthesis/combination: ~15
  * Trapezoid: ~25
  * Rectangle: ~15
  * Square: ~25
  * Solid figure: ~35
  * Sum-difference-multiple problem: ~30
  * Basic geometry problem: ~20
  * Reduction to one problem: ~35
  * Summation problem: ~20
  * Perimeter problem: ~20
  * Area problem: ~30
  * Work problem: ~30
  * Discount problem: ~35
  * Tree planting problem: ~20
  * Restoration problem: ~20
  * Profit and loss problem: ~25
  * Chicken and rabbit in the same cage: ~20
  * Meeting problem: ~30
  * Travel problem: ~40
  * Concentration problem: ~35
  * Simple average: ~45
  * Define new operation: ~50
  * Integer calculation: ~30
  * Division calculation: ~25
  * Ratio problem: ~45
  * Probability problem: ~50
  * Percentage problem: ~30

* **GPT-4 (green):** Substantially higher accuracy than both MetaMath-13B and LLaMA2-70B. Accuracy ranges from approximately 40 to 100.
  * Triangle: ~70
  * Parallelogram: ~90
  * Plane figure synthesis/combination: ~80
  * Trapezoid: ~80
  * Rectangle: ~60
  * Square: ~65
  * Solid figure: ~80
  * Sum-difference-multiple problem: ~90
  * Basic geometry problem: ~90
  * Reduction to one problem: ~50
  * Summation problem: ~40
  * Perimeter problem: ~75
  * Area problem: ~50
  * Work problem: ~60
  * Discount problem: ~85
  * Tree planting problem: ~70
  * Restoration problem: ~40
  * Profit and loss problem: ~65
  * Chicken and rabbit in the same cage: ~85
  * Meeting problem: ~100
  * Travel problem: ~70
  * Concentration problem: ~90
  * Simple average: ~70
  * Define new operation: ~95
  * Integer calculation: ~70
  * Division calculation: ~50
  * Ratio problem: ~80
  * Probability problem: ~85
  * Percentage problem: ~90
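The per-model summaries above can be checked against the approximate readings listed. The following is a minimal Python sketch, assuming the "~" estimates are taken at face value (they are visual readings from the chart, not published scores); the names `CATEGORIES`, `ACCURACY`, and `summarize` are illustrative and do not come from the source.

```python
# Approximate accuracies read visually from the chart (the "~" estimates above).
# CATEGORIES, ACCURACY, and summarize are hypothetical names for illustration.
CATEGORIES = [
    "Triangle", "Parallelogram", "Plane figure synthesis/combination", "Trapezoid",
    "Rectangle", "Square", "Solid figure", "Sum-difference-multiple problem",
    "Basic geometry problem", "Reduction to one problem", "Summation problem",
    "Perimeter problem", "Area problem", "Work problem", "Discount problem",
    "Tree planting problem", "Restoration problem", "Profit and loss problem",
    "Chicken and rabbit in the same cage", "Meeting problem", "Travel problem",
    "Concentration problem", "Simple average", "Define new operation",
    "Integer calculation", "Division calculation", "Ratio problem",
    "Probability problem", "Percentage problem",
]

# One list per model, in the same order as CATEGORIES.
ACCURACY = {
    "MetaMath-13B": [10, 5, 50, 25, 25, 20, 5, 0, 20, 5, 5, 5, 0, 0, 20,
                     20, 20, 5, 35, 20, 5, 35, 20, 10, 40, 20, 20, 20, 10],
    "LLaMA2-70B": [10, 10, 15, 25, 15, 25, 35, 30, 20, 35, 20, 20, 30, 30, 35,
                   20, 20, 25, 20, 30, 40, 35, 45, 50, 30, 25, 45, 50, 30],
    "GPT-4": [70, 90, 80, 80, 60, 65, 80, 90, 90, 50, 40, 75, 50, 60, 85,
              70, 40, 65, 85, 100, 70, 90, 70, 95, 70, 50, 80, 85, 90],
}


def summarize(values, near_zero=5):
    """Return (min, max, mean, number of values at or below `near_zero`)."""
    low_count = sum(1 for v in values if v <= near_zero)
    return min(values), max(values), sum(values) / len(values), low_count


for model, values in ACCURACY.items():
    assert len(values) == len(CATEGORIES)  # one estimate per x-axis category
    lo, hi, avg, low_count = summarize(values)
    peak = CATEGORIES[values.index(hi)]  # first category that reaches the maximum
    print(f"{model}: min={lo}, max={hi} ({peak}), mean={avg:.1f}, at or below 5: {low_count}")
```

With these estimates, the means come out to roughly 16.4 (MetaMath-13B), 28.3 (LLaMA2-70B), and 73.3 (GPT-4), and MetaMath-13B is the only model with readings at or below 5 (10 of 29 categories).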

### Key Observations

* GPT-4 outperforms MetaMath-13B and LLaMA2-70B in every problem type shown.

* MetaMath-13B has very low accuracy overall, at or below about 5 in roughly a third of the problem types (10 of 29).

* LLaMA2-70B shows moderate accuracy (about 10 to 50) but remains well below GPT-4.

* Accuracy varies widely across problem types for all three models; for example, GPT-4 ranges from about 40 (Summation problem, Restoration problem) to about 100 (Meeting problem).
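The cross-model observations above can be verified category by category. The following is a minimal Python sketch, again assuming the chart's approximate readings are used as-is; `gpt4_margins` and `head_to_head` are hypothetical helpers. It computes GPT-4's lead over the better of the other two models in each category, and counts where LLaMA2-70B is above, level with, or below MetaMath-13B.

```python
# Approximate accuracies read visually from the chart; all names are illustrative.
CATEGORIES = [
    "Triangle", "Parallelogram", "Plane figure synthesis/combination", "Trapezoid",
    "Rectangle", "Square", "Solid figure", "Sum-difference-multiple problem",
    "Basic geometry problem", "Reduction to one problem", "Summation problem",
    "Perimeter problem", "Area problem", "Work problem", "Discount problem",
    "Tree planting problem", "Restoration problem", "Profit and loss problem",
    "Chicken and rabbit in the same cage", "Meeting problem", "Travel problem",
    "Concentration problem", "Simple average", "Define new operation",
    "Integer calculation", "Division calculation", "Ratio problem",
    "Probability problem", "Percentage problem",
]

ACCURACY = {
    "MetaMath-13B": [10, 5, 50, 25, 25, 20, 5, 0, 20, 5, 5, 5, 0, 0, 20,
                     20, 20, 5, 35, 20, 5, 35, 20, 10, 40, 20, 20, 20, 10],
    "LLaMA2-70B": [10, 10, 15, 25, 15, 25, 35, 30, 20, 35, 20, 20, 30, 30, 35,
                   20, 20, 25, 20, 30, 40, 35, 45, 50, 30, 25, 45, 50, 30],
    "GPT-4": [70, 90, 80, 80, 60, 65, 80, 90, 90, 50, 40, 75, 50, 60, 85,
              70, 40, 65, 85, 100, 70, 90, 70, 95, 70, 50, 80, 85, 90],
}


def gpt4_margins(acc):
    """Per category: GPT-4 accuracy minus the better of the other two models."""
    others = [values for model, values in acc.items() if model != "GPT-4"]
    return [gpt4 - max(rest) for gpt4, *rest in zip(acc["GPT-4"], *others)]


def head_to_head(a, b):
    """Count categories where series a is higher than, equal to, or lower than b."""
    pairs = list(zip(a, b))
    return (sum(x > y for x, y in pairs),
            sum(x == y for x, y in pairs),
            sum(x < y for x, y in pairs))


margins = gpt4_margins(ACCURACY)
smallest = min(margins)
print("GPT-4 ahead in every category:", all(m > 0 for m in margins))
print("Smallest GPT-4 margin:", smallest, "in", CATEGORIES[margins.index(smallest)])
higher, tied, lower = head_to_head(ACCURACY["LLaMA2-70B"], ACCURACY["MetaMath-13B"])
print(f"LLaMA2-70B vs MetaMath-13B: {higher} higher, {tied} tied, {lower} lower")
```

Under these estimates, GPT-4 leads in all 29 categories, with its narrowest lead (about 15 points) on "Reduction to one problem". LLaMA2-70B is higher than MetaMath-13B in 19 categories, tied in 6, and lower in 4.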

### Interpretation

The data suggests that GPT-4 is substantially better at solving these types of math problems than MetaMath-13B and LLaMA2-70B. The uneven accuracy across problem types suggests that each model has different strengths and weaknesses. MetaMath-13B appears to struggle with most of these problems. Overall, the chart highlights a large performance gap between these language models on mathematical reasoning tasks.