## Diagram: Latent Analysis and Classifier Training for Jailbreak Mitigation

### Overview

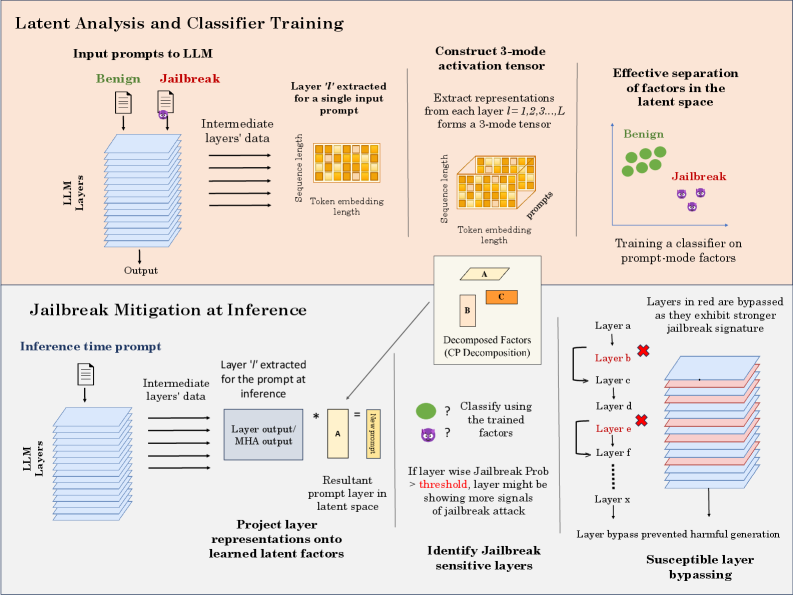

The diagram illustrates a two-phase process for detecting and mitigating jailbreak attacks in large language models (LLMs). It combines latent space analysis, classifier training, and inference-time mitigation strategies to identify and bypass harmful prompt-mode factors.

---

### Components/Axes

1. **Top Section: Latent Analysis and Classifier Training**

- **Input Prompts to LLM**:

- Two input types: *Benign* (green) and *Jailbreak* (red) prompts.

- Arrows show data flow through *LLM Layers* to an *Output*.

- **Intermediate Layers' Data**:

- Extracted from a specific layer (`Layer 'l'`) for a single input prompt.

- Visualized as a 2D grid with dimensions: *Sequence length* (rows) and *Token embedding length* (columns).

- **3-Mode Activation Tensor**:

- Constructed by extracting representations from all layers (`l=1,2,3,...,L`).

- Forms a 3D tensor with dimensions: *Sequence length*, *Token embedding length*, and *Layer depth*.

- **Effective Separation of Factors**:

- Visualized as clusters in latent space: *Benign* (green dots) and *Jailbreak* (purple dots).

- A classifier is trained on *prompt-mode factors* to distinguish these clusters.

2. **Bottom Section: Jailbreak Mitigation at Inference**

- **Inference-Time Prompt**:

- Input prompt processed through *LLM Layers*.

- Intermediate data extracted from `Layer 'l'` and multiplied by matrix **A** to form a *New Prompt Layer*.

- **Decomposed Factors (CP Decomposition)**:

- Visualized as a 3D tensor decomposed into factors **A**, **B**, and **C**.

- **Project Layer Representations**:

- Outputs are projected onto *learned latent factors* for classification.

- **Identify Jailbreak-Sensitive Layers**:

- If `Jailbreak Prob > threshold`, the layer is flagged as sensitive.

- **Susceptible Layer Bypassing**:

- Layers marked in red (e.g., `Layer b`, `Layer e`) are bypassed during inference to prevent harmful outputs.

---

### Detailed Analysis

- **Latent Space Separation**:

- Benign and jailbreak prompts are separated in latent space, enabling classifier training on prompt-mode factors.

- **3-Mode Tensor Construction**:

- Captures multi-dimensional interactions between sequence position, token embeddings, and layer depth.

- **Inference Mitigation**:

- Matrix **A** transforms intermediate layer outputs into a new prompt layer, which is analyzed for jailbreak signals.

- Layers with high jailbreak probability are bypassed to avoid harmful outputs.

---

### Key Observations

1. **Layer Sensitivity**:

- Certain layers (marked in red) exhibit stronger jailbreak signatures and are bypassed during inference.

2. **Classifier Training**:

- The classifier uses prompt-mode factors derived from the 3-mode tensor to distinguish benign from jailbreak inputs.

3. **Decomposition**:

- CP decomposition breaks down the activation tensor into interpretable factors (**A**, **B**, **C**), aiding in mitigation.

---

### Interpretation

This diagram demonstrates a defense mechanism against jailbreak attacks by leveraging latent space analysis. During training, the model learns to separate benign and malicious prompts in latent space. At inference, it dynamically identifies sensitive layers and bypasses them to prevent harmful outputs. The use of CP decomposition suggests an emphasis on interpretability, allowing the system to isolate and neutralize jailbreak signals effectively. The red-marked layers likely represent critical points where jailbreak attempts manifest, making them prime candidates for bypassing.