
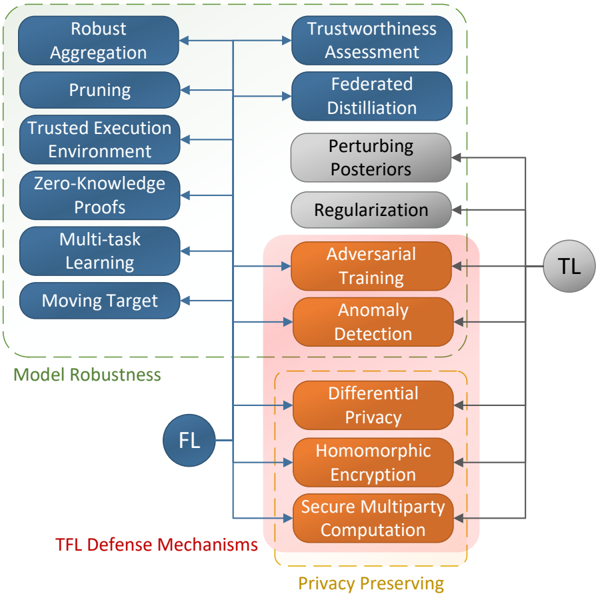

## Diagram: TFL Defense Mechanisms

### Overview

The diagram illustrates defense mechanisms for Trusted Federated Learning (TFL). It groups them into two areas, Model Robustness and Privacy Preserving, and links individual techniques to show how they relate. Each mechanism is drawn as a rounded rectangle, and arrows indicate dependencies or influences between mechanisms. Two circular labels, "TL" and "FL", sit on the right side of the diagram.

### Components/Axes

The diagram is organized into two categories, each enclosed in its own dashed rectangle:

* **Model Robustness:** the upper portion of the diagram.

* **Privacy Preserving:** the lower portion of the diagram.

The individual defense mechanisms are:

* Robust Aggregation

* Pruning

* Trusted Execution Environment

* Zero-Knowledge Proofs

* Multi-task Learning

* Moving Target

* Trustworthiness Assessment

* Federated Distillation

* Perturbing Posteriors

* Regularization

* Adversarial Training

* Anomaly Detection

* Differential Privacy

* Homomorphic Encryption

* Secure Multiparty Computation

The labels "TL" and "FL" are positioned on the right side of the diagram.

### Detailed Analysis

The diagram shows a flow of influence between the defense mechanisms.

**Model Robustness:**

* Robust Aggregation is connected to Trustworthiness Assessment.

* Pruning is connected to Federated Distillation.

* Trusted Execution Environment is connected to Perturbing Posteriors.

* Zero-Knowledge Proofs is connected to Regularization.

* Multi-task Learning is connected to Adversarial Training.

* Moving Target is connected to Anomaly Detection.

**Privacy Preserving:**

* Adversarial Training is connected to Differential Privacy.

* Anomaly Detection is connected to Homomorphic Encryption.

* Differential Privacy is connected to Secure Multiparty Computation.

The "TL" label is connected to Adversarial Training, Anomaly Detection, Differential Privacy, Homomorphic Encryption, and Secure Multiparty Computation.

The "FL" label is connected to Differential Privacy, Homomorphic Encryption, and Secure Multiparty Computation.
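To make these connections easier to query, they can be encoded as a small directed graph. The Python sketch below is illustrative only and rests on two assumptions that the diagram description does not state outright: each "X is connected to Y" statement is read as an arrow X → Y, and each "TL"/"FL" connection is read as an arrow from the mechanism to the label. The names `EDGES`, `LABEL_LINKS`, `build_graph`, `reaches`, and `linked_to` are hypothetical.

```python
from collections import deque

# Directed edges transcribed from the "connected to" statements.
# Assumption: "X is connected to Y" is read as an arrow X -> Y.
EDGES = [
    # Model Robustness connections
    ("Robust Aggregation", "Trustworthiness Assessment"),
    ("Pruning", "Federated Distillation"),
    ("Trusted Execution Environment", "Perturbing Posteriors"),
    ("Zero-Knowledge Proofs", "Regularization"),
    ("Multi-task Learning", "Adversarial Training"),
    ("Moving Target", "Anomaly Detection"),
    # Privacy Preserving connections
    ("Adversarial Training", "Differential Privacy"),
    ("Anomaly Detection", "Homomorphic Encryption"),
    ("Differential Privacy", "Secure Multiparty Computation"),
]

# Assumption: each label connection is read as an arrow mechanism -> label.
LABEL_LINKS = {
    "TL": ["Adversarial Training", "Anomaly Detection", "Differential Privacy",
           "Homomorphic Encryption", "Secure Multiparty Computation"],
    "FL": ["Differential Privacy", "Homomorphic Encryption",
           "Secure Multiparty Computation"],
}


def build_graph():
    """Return an adjacency map: node -> set of nodes it points to."""
    graph = {}
    for src, dst in EDGES:
        graph.setdefault(src, set()).add(dst)
        graph.setdefault(dst, set())
    for label, sources in LABEL_LINKS.items():
        graph.setdefault(label, set())
        for src in sources:
            graph.setdefault(src, set()).add(label)
    return graph


def reaches(graph, start, target):
    """Breadth-first search: is `target` reachable from `start`?"""
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if node == target:
            return True
        for nxt in graph[node]:
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return False


def linked_to(graph, label):
    """Sorted list of mechanisms (not labels) from which `label` is reachable."""
    return sorted(n for n in graph
                  if n not in LABEL_LINKS and reaches(graph, n, label))


if __name__ == "__main__":
    g = build_graph()
    for label in LABEL_LINKS:
        print(label, linked_to(g, label))
```

Under these reading assumptions, the same seven of the fifteen mechanisms reach both "TL" and "FL": the two chains starting at Multi-task Learning and Moving Target, plus the Privacy Preserving mechanisms they lead into. The remaining four chains (Robust Aggregation, Pruning, Trusted Execution Environment, and Zero-Knowledge Proofs, with their targets) end without reaching either label.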

### Key Observations

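The diagram emphasizes that the defense mechanisms are interconnected rather than independent. The "TL" and "FL" labels appear to mark the areas or goals that the mechanisms support. Adversarial Training and Anomaly Detection act as bridges between the two categories: each is the endpoint of a Model Robustness connection and the starting point of a Privacy Preserving connection, which suggests that Model Robustness techniques feed into Privacy Preserving techniques. According to the listed connections, however, only the chains starting at Multi-task Learning and Moving Target ultimately reach "TL" and "FL"; the other chains, such as Robust Aggregation → Trustworthiness Assessment, have no listed link to either label.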

### Interpretation

This diagram illustrates a layered approach to securing Trusted Federated Learning systems. The "Model Robustness" section focuses on protecting the model itself from attacks or manipulation, while the "Privacy Preserving" section focuses on protecting the data used to train it. The connections between the sections suggest that the two goals are complementary, with robustness techniques supporting privacy-preserving ones.

The diagram does not expand the labels "TL" and "FL". They plausibly stand for "Trusted Learning" and "Federated Learning", respectively, which would indicate that the mechanisms aim to enhance both the trustworthiness and the privacy of the learning process. Read this way, the layout forms a hierarchy in which Model Robustness techniques contribute to Privacy Preserving techniques, and both serve the broader goals marked by "TL" and "FL".

The diagram provides no quantitative data; it is a conceptual framework for how the defense mechanisms relate to one another. It implies that a comprehensive security strategy for TFL combines techniques from both categories, so that linked mechanisms reinforce each other rather than being deployed in isolation.