
EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


## Diagram: Causal Reasoning Framework

### Overview

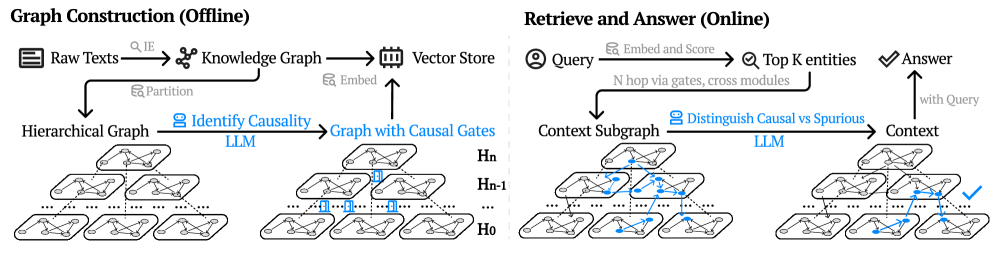

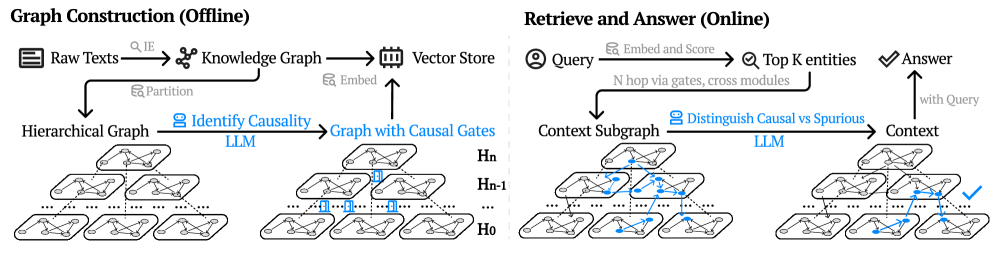

The image presents a diagram illustrating a causal reasoning framework, divided into two main phases: "Graph Construction (Offline)" and "Retrieve and Answer (Online)". The diagram outlines the process of building a knowledge graph from raw texts, identifying causal relationships, and then using this graph to answer queries by distinguishing between causal and spurious connections.

### Components/Axes

**Left Side: Graph Construction (Offline)**

* **Raw Texts:** Represented by an icon of stacked documents.

* **IE (Information Extraction):** A gray label with an arrow pointing from "Raw Texts" to "Knowledge Graph".

* **Knowledge Graph:** Represented by a network icon.

* **Vector Store:** Represented by a database icon.

* **Embed:** A gray label with an arrow pointing from "Knowledge Graph" to "Vector Store".

* **Partition:** A gray label with an arrow pointing from "Raw Texts" to "Hierarchical Graph".

* **Hierarchical Graph:** A multi-layered graph structure.

* **Identify Causality:** A blue label with a person icon, pointing from "Hierarchical Graph" to "Graph with Causal Gates". Labeled "LLM" in blue below.

* **Graph with Causal Gates:** A multi-layered graph structure with some highlighted (blue) connections.

* **Embed:** An arrow pointing from "Graph with Causal Gates" to "Vector Store".

* **Hn, Hn-1, H0:** Labels indicating different layers of the hierarchical graphs.

**Right Side: Retrieve and Answer (Online)**

* **Query:** Represented by a person icon.

* **Embed and Score:** A gray label with an arrow pointing from "Query" to "Top K entities".

* **Top K entities:** Represented by a magnifying glass icon.

* **Answer:** Represented by a checkmark icon.

* **N hop via gates, cross modules:** A black curved arrow pointing from "Query" to "Context Subgraph".

* **Context Subgraph:** A multi-layered graph structure with some highlighted (blue) connections.

* **Distinguish Causal vs Spurious:** A blue label with a robot icon, pointing from "Context Subgraph" to "Context". Labeled "LLM" in blue below.

* **Context:** A multi-layered graph structure with a highlighted (blue) path leading to the "Answer".

* **with Query:** An arrow pointing from "Context" to "Answer".

### Detailed Analysis

**Graph Construction (Offline):**

1. **Raw Texts** are processed using **Information Extraction (IE)** to create a **Knowledge Graph**.

2. The **Knowledge Graph** is embedded into a **Vector Store**.

3. The **Raw Texts** are also partitioned into a **Hierarchical Graph**.

4. **Causality** is identified within the **Hierarchical Graph** using an **LLM (Large Language Model)**, resulting in a **Graph with Causal Gates**.

5. The **Graph with Causal Gates** is embedded into the **Vector Store**.
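The offline steps above can be sketched in a few lines. This is a minimal illustration, not the framework's implementation: the triple format, the function names, and `keyword_judge` (a stand-in for the LLM that "Identify Causality" refers to) are all assumptions, and the sketch marks gates on flat triples rather than on the hierarchical graph.

```python
from collections import defaultdict
from typing import Callable, Dict, List, Set, Tuple

Triple = Tuple[str, str, str]  # (head entity, relation, tail entity)


def build_knowledge_graph(triples: List[Triple]) -> Dict[str, List[Tuple[str, str]]]:
    """Adjacency list keyed by head entity; stands in for the output of IE."""
    graph: Dict[str, List[Tuple[str, str]]] = defaultdict(list)
    for head, relation, tail in triples:
        graph[head].append((relation, tail))
    return dict(graph)


def identify_causal_gates(
    triples: List[Triple], is_causal: Callable[[Triple], bool]
) -> Set[Triple]:
    """Keep only the edges that the judge marks as causal."""
    return {t for t in triples if is_causal(t)}


def keyword_judge(triple: Triple) -> bool:
    # Hypothetical stand-in for the LLM call; a real system would prompt a model.
    return triple[1] in {"causes", "leads_to"}


# Toy triples, invented for illustration only.
triples = [
    ("smoking", "causes", "lung_cancer"),
    ("smoking", "correlates_with", "coffee_drinking"),
    ("lung_cancer", "leads_to", "chest_pain"),
]

kg = build_knowledge_graph(triples)
gates = identify_causal_gates(triples, keyword_judge)
```

Passing the judge in as a callable keeps the graph code independent of whichever model performs the causal labelling.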

**Retrieve and Answer (Online):**

1. A **Query** is embedded and scored to identify **Top K entities**.

2. A **Context Subgraph** is extracted from the **Knowledge Graph** based on the **Query**.

3. **Causal** vs. **Spurious** connections are distinguished within the **Context Subgraph** using an **LLM**, resulting in a refined **Context**.

4. The **Context** is used to generate an **Answer** to the **Query**.
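The "Embed and Score" step in item 1 can be illustrated with a plain cosine-similarity ranking. The diagram does not say which embedding model or similarity measure is used, so the hand-written vectors, the choice of cosine similarity, and the names `cosine` and `top_k_entities` are assumptions made for this sketch.

```python
import math
from typing import Dict, List, Tuple


def cosine(a: List[float], b: List[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_k_entities(
    query_vec: List[float], store: Dict[str, List[float]], k: int
) -> List[Tuple[str, float]]:
    """Score every stored entity against the query and keep the k best."""
    scored = [(name, cosine(query_vec, vec)) for name, vec in store.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Toy vector store with made-up 3-dimensional embeddings.
vector_store = {
    "smoking": [1.0, 0.0, 0.0],
    "lung_cancer": [0.8, 0.6, 0.0],
    "coffee_drinking": [0.0, 0.0, 1.0],
}
query_vec = [1.0, 0.1, 0.0]

top = top_k_entities(query_vec, vector_store, k=2)
print([name for name, _ in top])
```

A production vector store would typically use an approximate nearest-neighbour index instead of scoring every entity.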

### Key Observations

* The diagram highlights the use of Large Language Models (LLMs) in both the offline graph construction and online query answering phases.

* The hierarchical graph structure is used in both phases, suggesting a multi-level representation of knowledge and context.

* The distinction between causal and spurious connections is a key aspect of the framework: it determines which connections are kept in the context used to generate the answer.

### Interpretation

The diagram illustrates a framework for causal reasoning that combines knowledge graphs with LLMs. The offline phase builds a structured representation of knowledge; the online phase retrieves relevant information and reasons over it to answer queries. LLMs appear at two points: identifying causality offline and separating causal from spurious connections online, so that only the connections judged causal reach the answer step. The hierarchical graph structure provides a multi-level representation of knowledge, which allows reasoning at different levels of granularity.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


## Diagram: Graph Construction and Retrieval/Answer Pipeline

### Overview

The image depicts a diagram illustrating a two-stage pipeline: "Graph Construction (Offline)" and "Retrieve and Answer (Online)". The left side shows the process of building a knowledge graph from raw text, while the right side demonstrates how to query this graph to obtain an answer. Both sides involve Large Language Models (LLMs) and utilize hierarchical graph structures.

### Components/Axes

The diagram is divided into two main sections, labeled "Graph Construction (Offline)" and "Retrieve and Answer (Online)". Within each section, several components are connected by arrows indicating the flow of information. Key components include: Raw Texts, Knowledge Graph, Vector Store, Hierarchical Graph, LLM, Graph with Causal Gates, Context Subgraph, Query, Top K entities, Answer, and Context. The hierarchical graph layers are labeled H₀, Hₙ₋₁, and Hₙ.

### Detailed Analysis or Content Details

**Graph Construction (Offline):**

1. **Raw Texts** are processed with an "IE" (Information Extraction) step to create a **Knowledge Graph**.

2. The Knowledge Graph is then embedded and stored in a **Vector Store**.

3. The Raw Texts are also partitioned to form a **Hierarchical Graph**.

4. An **LLM** is used to "Identify Causality" within the Hierarchical Graph, resulting in a **Graph with Causal Gates**.

5. An "Embed" arrow also links the Graph with Causal Gates to the Vector Store.

**Retrieve and Answer (Online):**

1. A **Query** is embedded and scored, leading to the identification of **Top K entities**.

2. These entities are processed through "N hop via gates, cross modules" to generate a **Context Subgraph**.

3. An **LLM** is used to "Distinguish Causal vs Spurious" relationships within the Context Subgraph.

4. This process generates **Context**, which is then used to provide an **Answer** to the original query. A checkmark indicates a successful answer.

The hierarchical graphs (H₀, Hₙ₋₁, Hₙ) visually represent layers of abstraction. H₀ appears to be the lowest level, with the most detailed connections, while Hₙ represents the highest level of abstraction. The graphs are composed of nodes (circles) and edges (lines connecting the nodes). Some edges are highlighted in blue, potentially indicating causal relationships.
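One way to picture the relationship between adjacent layers is to lift edges from a detailed layer to a coarser one. The diagram does not specify how partitioning is done, so the hand-assigned `module_of` mapping and the `coarsen` function below are assumptions for illustration only.

```python
from typing import Dict, Set, Tuple

Edge = Tuple[str, str]  # directed edge (source, target)


def coarsen(edges: Set[Edge], module_of: Dict[str, str]) -> Set[Edge]:
    """Lift edges one layer up.

    Two modules are connected in the coarser layer if any of their member
    nodes are connected in the finer layer. Edges inside a single module
    disappear at the coarser level.
    """
    lifted: Set[Edge] = set()
    for source, target in edges:
        m_source, m_target = module_of[source], module_of[target]
        if m_source != m_target:
            lifted.add((m_source, m_target))
    return lifted


# Toy lowest layer and a hand-written partition into three modules.
h0 = {("a", "b"), ("b", "c"), ("c", "d"), ("d", "e")}
module_of = {"a": "M1", "b": "M1", "c": "M2", "d": "M2", "e": "M3"}

h1 = coarsen(h0, module_of)
print(sorted(h1))
```

Applying `coarsen` repeatedly with successive partitions would yield a stack of progressively more abstract layers.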

### Key Observations

* The pipeline is clearly divided into offline and online stages, suggesting a pre-processing step (graph construction) followed by a real-time query/answer process.

* LLMs are used in both stages, highlighting their importance in both knowledge extraction and reasoning.

* The use of hierarchical graphs and causal gates suggests an attempt to model complex relationships and avoid spurious correlations.

* The "Vector Store" component indicates the use of vector embeddings for efficient similarity search.

* The checkmark on the right side indicates a successful answer retrieval.

### Interpretation

This diagram illustrates a sophisticated approach to knowledge representation and question answering. The offline graph construction phase aims to create a structured knowledge base that captures causal relationships. The online retrieval phase leverages this knowledge base to answer queries efficiently and accurately. The use of LLMs suggests that the system is capable of understanding natural language and performing complex reasoning. The hierarchical graph structure allows for different levels of abstraction, potentially enabling the system to answer both broad and specific questions. The inclusion of causal gates suggests an attempt to mitigate the problem of spurious correlations, which is a common challenge in knowledge graph-based systems. The overall architecture suggests a system designed for robust and reliable question answering, particularly in domains where causal reasoning is important. The diagram does not provide any quantitative data, but rather focuses on the conceptual flow of information. It is a high-level overview of a complex system, and further details would be needed to fully understand its implementation and performance.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## System Architecture Diagram: Two-Phase Knowledge Graph Processing for Question Answering

### Overview

The image is a technical system architecture diagram illustrating a two-phase process for building and utilizing a knowledge graph to answer queries. The system is divided into an **offline "Graph Construction"** phase and an **online "Retrieve and Answer"** phase, separated by a vertical dashed line. The diagram uses icons, text labels, and flow arrows to depict data flow and processing steps, with a focus on integrating causal reasoning via Large Language Models (LLMs).

### Components/Axes

The diagram is segmented into two primary regions:

**1. Left Region: Graph Construction (Offline)**

* **Header Label:** "Graph Construction (Offline)"

* **Process Flow (Top Path):**

    * Icon: Document stack. Label: "Raw Texts"

    * Arrow with icon (magnifying glass) and label: "IE" (Information Extraction)

    * Icon: Network graph. Label: "Knowledge Graph"

    * Arrow with icon (cube) and label: "Embed"

    * Icon: Database/server rack. Label: "Vector Store"

* **Process Flow (Bottom Path):**

    * Arrow from "Raw Texts" with icon (split arrow) and label: "Partition"

    * Label: "Hierarchical Graph"

    * Arrow with icon (link) and label: "Identify Causality"

    * Text below arrow: "LLM"

    * Arrow points to label: "Graph with Causal Gates"

* **Visual Elements:**

    * Two sets of stacked, layered graph illustrations.

    * Left set: Labeled "Hierarchical Graph". Shows three layers of graphs (top, middle, bottom) with nodes and edges. No special highlighting.

    * Right set: Labeled "Graph with Causal Gates". Shows three corresponding layers. Specific edges and nodes are highlighted in **blue**, indicating the "causal gates" identified by the LLM.

    * Layer labels on the right side: "Hn" (top), "Hn-1" (middle), "H0" (bottom).

**2. Right Region: Retrieve and Answer (Online)**

* **Header Label:** "Retrieve and Answer (Online)"

* **Process Flow:**

    * Icon: Person/User. Label: "Query"

    * Arrow with icon (magnifying glass) and label: "Embed and Score"

    * Icon: Checkmark in circle. Label: "Top K entities"

    * Arrow with label: "N hop via gates, cross modules"

    * Label: "Context Subgraph"

    * Arrow with icon (link) and label: "Distinguish Causal vs Spurious"

    * Text below arrow: "LLM"

    * Arrow points to label: "Context"

    * Final arrow with label: "with Query" pointing to icon (checkmark) and label: "Answer"

* **Visual Elements:**

    * Two sets of stacked, layered graph illustrations, mirroring the offline phase.

    * Left set: Labeled "Context Subgraph". Shows a subset of the hierarchical graph. Some nodes/edges are highlighted in **blue**, representing the retrieved subgraph.

    * Right set: Labeled "Context". Shows the same subgraph structure, but now a specific path or set of elements is marked with a **blue checkmark**, indicating the causal context selected for the answer.

### Detailed Analysis

The diagram details a pipeline that transforms raw text into a structured, causally-aware knowledge representation for efficient question answering.

**Offline Phase (Graph Construction):**

1. **Dual-Path Processing:** Raw texts are processed in two parallel streams.

* **Stream 1 (Direct KG):** Texts undergo Information Extraction (IE) to build a standard Knowledge Graph, which is then embedded into a Vector Store for similarity search.

* **Stream 2 (Hierarchical & Causal):** Texts are partitioned and organized into a Hierarchical Graph (layers Hn to H0). An LLM analyzes this graph to "Identify Causality," resulting in a "Graph with Causal Gates." The blue highlights in the right-hand graph illustration show these gates—specific connections deemed causally significant.

2. **Output:** The outputs are a Vector Store (for retrieval) and a Causal Graph (for reasoning).

**Online Phase (Retrieve and Answer):**

1. **Query Processing:** A user query is embedded and scored against the Vector Store to retrieve the "Top K entities."

2. **Subgraph Retrieval:** Starting from these entities, the system traverses "N hops" through the graph, guided by the "gates" (causal connections) established offline, to assemble a "Context Subgraph."

3. **Causal Filtering:** An LLM processes this subgraph to "Distinguish Causal vs Spurious" relationships, filtering it down to the most relevant "Context."

4. **Answer Generation:** The final causal context, combined with the original query, is used to generate the "Answer."
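The "N hop via gates" step in item 2 resembles a depth-limited breadth-first search restricted to gate edges. The sketch below assumes undirected gate edges and a single flat layer, and it does not model the "cross modules" part of the label; `n_hop_subgraph` and the toy edges are hypothetical.

```python
from collections import deque
from typing import Dict, List, Set, Tuple

Edge = Tuple[str, str]


def n_hop_subgraph(seeds: List[str], gate_edges: Set[Edge], n_hops: int) -> Set[Edge]:
    """Return the gate edges induced by nodes within n_hops of any seed."""
    # Build an undirected neighbour map from the gate edges only.
    neighbors: Dict[str, List[str]] = {}
    for u, v in gate_edges:
        neighbors.setdefault(u, []).append(v)
        neighbors.setdefault(v, []).append(u)

    # Breadth-first search that stops expanding at depth n_hops.
    depth: Dict[str, int] = {seed: 0 for seed in seeds}
    queue = deque(seeds)
    while queue:
        node = queue.popleft()
        if depth[node] == n_hops:
            continue
        for nxt in neighbors.get(node, []):
            if nxt not in depth:
                depth[nxt] = depth[node] + 1
                queue.append(nxt)

    # Keep every gate edge whose endpoints were both reached.
    return {(u, v) for u, v in gate_edges if u in depth and v in depth}


# Toy chain a - b - c - d; with two hops from "a", node "d" is out of range.
gate_edges = {("a", "b"), ("b", "c"), ("c", "d")}
context_subgraph = n_hop_subgraph(["a"], gate_edges, n_hops=2)
print(sorted(context_subgraph))
```

Because only gate edges enter the neighbour map, connections not marked as gates never expand the search, which is one reading of how gates could limit the search space.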

### Key Observations

* **Central Role of LLMs:** LLMs are explicitly called out for two critical reasoning tasks: identifying causal relationships in the offline phase and distinguishing causal from spurious links in the online phase.

* **Hierarchical Structure:** The use of a hierarchical graph (Hn...H0) suggests the knowledge is organized at multiple levels of abstraction or granularity.

* **Causal Gates as a Core Mechanism:** The "causal gates" (highlighted in blue) are the key innovation. They act as filters or guides during the online retrieval ("N hop via gates") to focus the search on causally relevant paths, improving efficiency and answer quality.

* **Visual Consistency:** The blue highlighting is used consistently across both phases to denote causally significant elements, creating a clear visual link between the offline analysis and online application.

### Interpretation

This diagram presents a sophisticated architecture for **causality-aware knowledge graph question answering**. The core problem it solves is the retrieval of not just any relevant information, but *causally pertinent* information from a large knowledge base.

* **How it Works:** The system pre-computes causal relationships (offline) to create a "map" of meaningful connections. When a query arrives (online), it doesn't just search broadly; it follows this pre-defined causal map to quickly home in on the context that likely contains the answer, ignoring spurious correlations.

* **Why it Matters:** This approach addresses key limitations of standard retrieval-augmented generation (RAG). By focusing on causal links, it aims to:

    1. **Improve Accuracy:** Retrieve more relevant context, reducing hallucinations.

    2. **Increase Efficiency:** Limit the search space via gates, reducing computational cost.

    3. **Enhance Explainability:** The causal path from query to answer is more traceable.

* **Underlying Assumption:** The architecture assumes that causality is a powerful heuristic for relevance in question answering. The LLM's role is to encode this causal understanding into the graph structure itself, which then guides the retrieval process deterministically. The separation into offline and online phases is a practical design to handle the computational cost of causal analysis.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Flowchart Diagram: Knowledge Graph Construction and Query Processing Pipeline

### Overview

The diagram illustrates a two-phase system for knowledge graph construction and query processing. The left side depicts an offline "Graph Construction" pipeline, while the right side shows an online "Retrieve and Answer" workflow. Both phases involve hierarchical graph structures, causal reasoning, and large language model (LLM) integration.

### Components/Axes

**Left Section (Graph Construction - Offline):**

1. **Input**: Raw Texts → Knowledge Graph (via IE) → Vector Store

2. **Processing Steps**:

    - Partition → Hierarchical Graph

    - Embed → Identify Causality (LLM) → Graph with Causal Gates

3. **Output**: Multi-layered hierarchical graph (H₀ to Hₙ) with causal gates

**Right Section (Retrieve and Answer - Online):**

1. **Input**: Query → Embed and Score

2. **Processing Steps**:

    - Top K entities → N-hop via gates, cross modules

    - Distinguish Causal vs Spurious (LLM) → Context

3. **Output**: Answer with Query

**Visual Elements**:

- **Colors**:

    - Blue: LLM components (Identify Causality, Distinguish Causal vs Spurious)

    - Green: Causal gates

- **Arrows**: Indicate data flow direction

- **Hierarchical Structure**: Stacked graph layers (H₀ to Hₙ) in both sections

### Detailed Analysis

**Offline Phase**:

1. Raw texts are processed through Information Extraction (IE) to create a Knowledge Graph.

2. The Knowledge Graph is embedded into a Vector Store.

3. The raw texts are partitioned to construct a Hierarchical Graph, with layers labeled H₀ to Hₙ.

4. Causal relationships are identified using LLM, resulting in a Graph with Causal Gates.

**Online Phase**:

1. Queries are embedded and scored against the vector store.

2. The Top K relevant entities are retrieved, then expanded by N-hop traversal through causal gates and cross-module connections.

3. LLM distinguishes between causal and spurious relationships to refine context.

4. Final answer is generated by combining query context with retrieved information.
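Steps 3 and 4 can be sketched as a filter followed by prompt assembly. The prompt layout, the function names, and `toy_judge` (a placeholder for the LLM that distinguishes causal from spurious relationships) are assumptions; the diagram only shows that the filtered context is combined "with Query" to produce the answer.

```python
from typing import Callable, List, Tuple

Triple = Tuple[str, str, str]  # (head entity, relation, tail entity)


def filter_context(
    query: str, subgraph: List[Triple], judge: Callable[[str, Triple], bool]
) -> List[Triple]:
    """Keep the triples that the judge considers causal for this query."""
    return [t for t in subgraph if judge(query, t)]


def build_prompt(query: str, context: List[Triple]) -> str:
    """Serialize the retained triples as bullet facts and append the query."""
    facts = "\n".join(
        f"- {head} {relation.replace('_', ' ')} {tail}"
        for head, relation, tail in context
    )
    return f"Context:\n{facts}\n\nQuestion: {query}\nAnswer:"


def toy_judge(query: str, triple: Triple) -> bool:
    # Hypothetical stand-in for the LLM; it ignores the query and simply
    # treats correlation edges as spurious.
    return triple[1] != "correlates_with"


# Toy context subgraph, invented for illustration only.
subgraph = [
    ("smoking", "causes", "lung_cancer"),
    ("smoking", "correlates_with", "coffee_drinking"),
]
query = "Why might a smoker develop lung cancer?"

context = filter_context(query, subgraph, toy_judge)
prompt = build_prompt(query, context)
print(prompt)
```

The resulting `prompt` string would then be sent to whichever answer model the system uses.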

### Key Observations

1. **Modular Design**: The system separates offline graph construction from online query processing.

2. **Causal Reasoning**: Explicit emphasis on identifying and leveraging causal relationships throughout the pipeline.

3. **LLM Integration**: Used at two critical points - causality identification and spurious relationship filtering.

4. **Hierarchical Navigation**: Query processing involves multi-layered graph traversal (N-hop) through causal gates.

5. **Contextualization**: Final answer generation combines retrieved entities with context-aware reasoning.

### Interpretation

This architecture demonstrates a sophisticated approach to knowledge graph utilization:

- The offline phase focuses on building a causally-aware graph structure optimized for efficient querying.

- The online phase leverages this preprocessed structure to enable context-aware question answering.

- The use of LLM for both causality identification and spurious relationship filtering suggests an attempt to mitigate hallucination risks in knowledge graph applications.

- The hierarchical graph structure with causal gates implies a design for handling complex, multi-relational data while maintaining query efficiency.

The system appears to balance computational intensity between offline preprocessing and online query handling, potentially optimizing for real-time performance while maintaining high-quality causal reasoning capabilities.
