## Text Extraction: Prompt Engineering Examples

### Overview

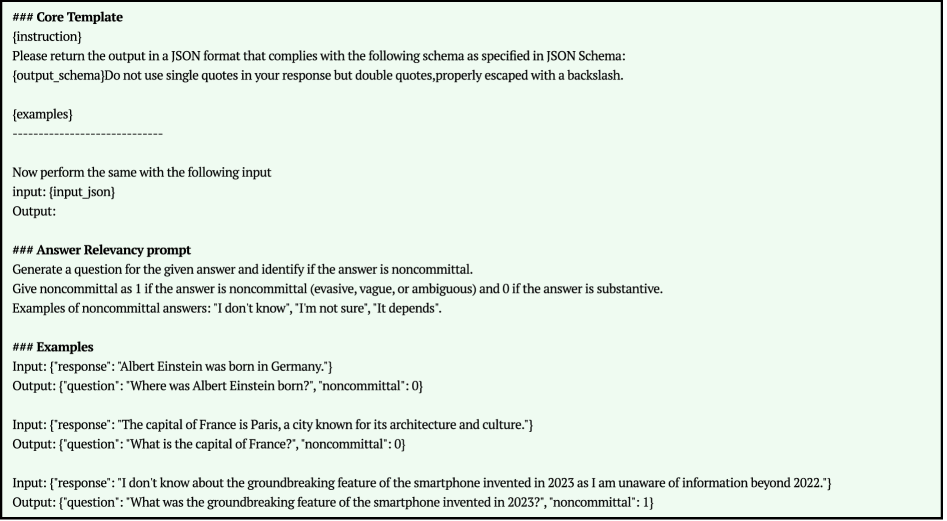

The image presents examples of prompt engineering for a system designed to generate questions from given answers and identify if an answer is noncommittal. It includes a core template, an answer relevancy prompt, and several input-output examples.

### Components

The image is structured into three main sections:

1. **Core Template:** Defines the instruction for the system to return output in JSON format, adhering to a specified JSON schema. It emphasizes the use of double quotes and proper escaping.

2. **Answer Relevancy Prompt:** Explains how to generate a question from a given answer and identify if the answer is noncommittal. It defines noncommittal answers as evasive, vague, or ambiguous, assigning a value of 1, while substantive answers are assigned a value of 0. Examples of noncommittal answers are provided.

3. **Examples:** Provides input-output pairs demonstrating the system's functionality. Each input consists of a "response," and the corresponding output includes a "question" and a "noncommittal" value (0 or 1).

### Content Details

**Core Template:**

* `{instruction}`: Placeholder for instructions.

* "Please return the output in a JSON format that complies with the following schema as specified in JSON Schema: {output_schema} Do not use single quotes in your response but double quotes, properly escaped with a backslash."

* `{examples}`: Placeholder for examples.

* "Now perform the same with the following input"

* `input: {input_json}`

* `Output:`
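To make the placeholder structure concrete, here is a minimal sketch of how the core template might be filled in. The constant `CORE_TEMPLATE`, the function `build_prompt`, and the line breaks between the template parts are assumptions for illustration; the image only shows the order of the parts, not how any particular library assembles them.

```python
import json

# Hypothetical layout: the order of the parts follows the core template
# described above; the exact whitespace in the original is an assumption.
CORE_TEMPLATE = (
    "{instruction}\n"
    "Please return the output in a JSON format that complies with the "
    "following schema as specified in JSON Schema: {output_schema}\n"
    "Do not use single quotes in your response but double quotes, "
    "properly escaped with a backslash.\n"
    "{examples}\n"
    "Now perform the same with the following input\n"
    "input: {input_json}\n"
    "Output:"
)


def build_prompt(instruction: str, output_schema: dict,
                 examples: str, input_data: dict) -> str:
    """Fill the four placeholders of the core template (illustrative helper)."""
    return CORE_TEMPLATE.format(
        instruction=instruction,
        # json.dumps always emits double-quoted strings, matching the
        # template's own requirement on quoting.
        output_schema=json.dumps(output_schema),
        examples=examples,
        input_json=json.dumps(input_data),
    )
```

Serializing the schema and the input with `json.dumps` keeps both in the same double-quoted form that the template asks the model to produce.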

**Answer Relevancy Prompt:**

* "Generate a question for the given answer and identify if the answer is noncommittal."

* "Give noncommittal as 1 if the answer is noncommittal (evasive, vague, or ambiguous) and 0 if the answer is substantive."

* "Examples of noncommittal answers: "I don't know", "I'm not sure", "It depends"."
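The output described by this prompt has two fields, a generated question and a 0/1 noncommittal flag. A minimal sketch of checking a model reply against that shape could look like the following; the function name `parse_relevancy_output` and the specific validation rules are assumptions, not something shown in the image.

```python
import json


def parse_relevancy_output(raw: str) -> dict:
    """Parse a model reply and check the two fields described above.

    Illustrative helper: raises ValueError on malformed replies
    (json.JSONDecodeError is itself a subclass of ValueError).
    """
    data = json.loads(raw)
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")

    question = data.get("question")
    noncommittal = data.get("noncommittal")

    if not isinstance(question, str) or not question.strip():
        raise ValueError("'question' must be a non-empty string")
    # type(...) is int rejects booleans and floats, which would otherwise
    # compare equal to 0 or 1.
    if type(noncommittal) is not int or noncommittal not in (0, 1):
        raise ValueError("'noncommittal' must be the integer 0 or 1")

    return {"question": question, "noncommittal": noncommittal}
```

Rejecting anything other than the integers 0 and 1 keeps downstream code from silently treating values such as `true` or `"1"` as valid flags.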

**Examples:**

* **Example 1:**

  * Input: `{"response": "Albert Einstein was born in Germany."}`

  * Output: `{"question": "Where was Albert Einstein born?", "noncommittal": 0}`

* **Example 2:**

  * Input: `{"response": "The capital of France is Paris, a city known for its architecture and culture."}`

  * Output: `{"question": "What is the capital of France?", "noncommittal": 0}`

* **Example 3:**

  * Input: `{"response": "I don't know about the groundbreaking feature of the smartphone invented in 2023 as I am unaware of information beyond 2022."}`

  * Output: `{"question": "What was the groundbreaking feature of the smartphone invented in 2023?", "noncommittal": 1}`
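The three input-output pairs above are the kind of content that would fill the `{examples}` placeholder. The sketch below renders them as a text block; the `Example N` / `input:` / `output:` labels and the helper name `render_examples` are assumptions, since the image does not show how the pairs are laid out inside the final prompt.

```python
import json

# The three pairs transcribed from the image.
EXAMPLES = [
    (
        {"response": "Albert Einstein was born in Germany."},
        {"question": "Where was Albert Einstein born?", "noncommittal": 0},
    ),
    (
        {"response": "The capital of France is Paris, a city known for "
                     "its architecture and culture."},
        {"question": "What is the capital of France?", "noncommittal": 0},
    ),
    (
        {"response": "I don't know about the groundbreaking feature of the "
                     "smartphone invented in 2023 as I am unaware of "
                     "information beyond 2022."},
        {"question": "What was the groundbreaking feature of the smartphone "
                     "invented in 2023?", "noncommittal": 1},
    ),
]


def render_examples(pairs) -> str:
    """Render (input, output) pairs as a few-shot block (hypothetical layout)."""
    blocks = []
    for i, (inp, out) in enumerate(pairs, start=1):
        blocks.append(
            f"Example {i}\n"
            f"input: {json.dumps(inp)}\n"
            f"output: {json.dumps(out)}"
        )
    return "\n\n".join(blocks)
```

The returned string can be passed as the `{examples}` value when the core template is filled in.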

### Key Observations

* The core template sets the format for the system's output.

* The answer relevancy prompt defines the criteria for identifying noncommittal answers.

* The examples demonstrate how the system generates questions and assigns noncommittal values based on the input responses.

* Substantive answers (e.g., "Albert Einstein was born in Germany.") are labeled with a noncommittal value of 0 in the examples.

* Noncommittal answers (e.g., "I don't know...") are labeled with a noncommittal value of 1 in the examples.

### Interpretation

The image illustrates a prompt engineering approach for a system that generates a question from a given answer and flags whether that answer is noncommittal. Distinguishing substantive from noncommittal responses could be useful in applications such as question answering, information retrieval, and dialogue systems. The examples act as demonstrations of the expected input and output format and of the intended labels; they show what the system is asked to produce, not a measurement of how accurately it does so.