EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

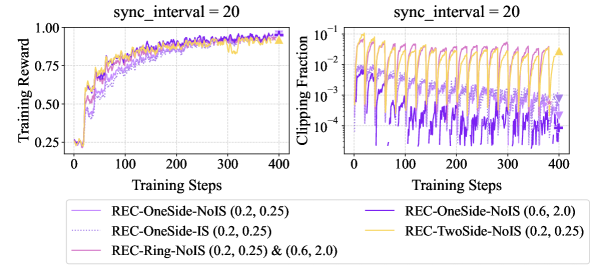

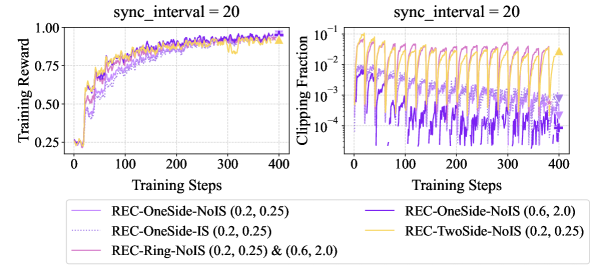

## Side-by-Side Line Charts: Training Reward and Clipping Fraction with sync_interval = 20

### Overview

The image displays two line charts side by side. Each has its own x-axis, labeled "Training Steps" and spanning the same range, and the two share a single legend. The left chart plots "Training Reward" against training steps; the right chart plots "Clipping Fraction" on a logarithmic scale against the same steps. Both charts are titled "sync_interval = 20". The legend at the bottom identifies six experimental conditions, distinguished by color and line style.

### Components/Axes

* **Chart Titles:** Both subplots are titled "sync_interval = 20".
* **Left Chart (Training Reward):**
    * **Y-axis Label:** "Training Reward"
    * **Y-axis Scale:** Linear, ranging from 0.25 to 1.00, with major ticks at 0.25, 0.50, 0.75, and 1.00.
    * **X-axis Label:** "Training Steps"
    * **X-axis Scale:** Linear, ranging from 0 to 400, with major ticks at 0, 100, 200, 300, and 400.
* **Right Chart (Clipping Fraction):**
    * **Y-axis Label:** "Clipping Fraction"
    * **Y-axis Scale:** Logarithmic (base 10), ranging from 10⁻⁴ to 10⁻¹, with major ticks at 10⁻⁴, 10⁻³, 10⁻², and 10⁻¹.
    * **X-axis Label:** "Training Steps"
    * **X-axis Scale:** Linear, identical to the left chart (0 to 400).
* **Legend:** Positioned at the bottom center of the figure, spanning both charts. It contains six entries:
    1. `REC-OneSide-NoIS (0.2, 0.25)` - Solid purple line.
    2. `REC-OneSide-IS (0.2, 0.25)` - Dotted purple line.
    3. `REC-Ring-NoIS (0.2, 0.25) & (0.6, 2.0)` - Solid pink/magenta line.
    4. `REC-OneSide-NoIS (0.6, 2.0)` - Solid dark purple/indigo line.
    5. `REC-TwoSide-NoIS (0.2, 0.25)` - Solid orange/yellow line.
    6. `REC-TwoSide-NoIS (0.6, 2.0)` - Dotted orange/yellow line.

### Detailed Analysis

**Left Chart - Training Reward:**

* **Trend Verification:** All six data series show a clear upward trend, starting from a low reward (between ~0.25 and ~0.50) at step 0 and converging towards a high reward (between ~0.95 and ~1.00) by step 400. The rate of increase is steepest in the first ~100 steps.
* **Data Series & Approximate Values:**
    * `REC-OneSide-NoIS (0.2, 0.25)` (Solid Purple): Starts ~0.35, rises steadily, ends ~0.98.
    * `REC-OneSide-IS (0.2, 0.25)` (Dotted Purple): Follows a nearly identical path to its solid counterpart, ending ~0.98.
    * `REC-Ring-NoIS (0.2, 0.25) & (0.6, 2.0)` (Solid Pink): Starts slightly higher (~0.45), rises quickly, and maintains a slight lead for most of the training, ending ~0.99.
    * `REC-OneSide-NoIS (0.6, 2.0)` (Solid Dark Purple): Starts the lowest (~0.25), rises more slowly initially but catches up, ending ~0.97.
    * `REC-TwoSide-NoIS (0.2, 0.25)` (Solid Orange): Starts ~0.40, rises quickly, and is among the top performers, ending ~0.99.
    * `REC-TwoSide-NoIS (0.6, 2.0)` (Dotted Orange): Follows a very similar path to its solid counterpart, ending ~0.99.
* **Convergence:** By step 400, all lines are tightly clustered between approximately 0.95 and 1.00, indicating similar final performance in terms of training reward.
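As a quick check of the convergence claim, the approximate final rewards listed above can be compared directly. This is only a sketch: the numbers are the eyeballed values from this description, not exact data from the figure, and the variable names are illustrative.

```python
# Approximate final rewards at step 400, as stated in the series list above.
# These are visual estimates from the description, not measured values.
final_reward = {
    "REC-OneSide-NoIS (0.2, 0.25)": 0.98,
    "REC-OneSide-IS (0.2, 0.25)": 0.98,
    "REC-Ring-NoIS (0.2, 0.25) & (0.6, 2.0)": 0.99,
    "REC-OneSide-NoIS (0.6, 2.0)": 0.97,
    "REC-TwoSide-NoIS (0.2, 0.25)": 0.99,
    "REC-TwoSide-NoIS (0.6, 2.0)": 0.99,
}

# Spread between the best and worst final reward, and the average across series.
spread = max(final_reward.values()) - min(final_reward.values())
mean_reward = sum(final_reward.values()) / len(final_reward)

print(f"spread = {spread:.2f}, mean = {mean_reward:.3f}")
```

With these estimates the spread across all six series is about 0.02, which is small compared with the ~0.5 to ~0.7 gain each series makes over training.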

**Right Chart - Clipping Fraction:**

* **Trend Verification:** All series exhibit a distinct, regular oscillatory pattern (sawtooth wave) throughout training. The amplitude and baseline of these oscillations differ between series.
* **Data Series & Approximate Values:**
    * `REC-OneSide-NoIS (0.2, 0.25)` (Solid Purple): Oscillates with a baseline around 10⁻³ and peaks near 10⁻².
    * `REC-OneSide-IS (0.2, 0.25)` (Dotted Purple): Oscillates with a lower baseline (between 10⁻⁴ and 10⁻³) and lower peaks (around 10⁻³).
    * `REC-Ring-NoIS (0.2, 0.25) & (0.6, 2.0)` (Solid Pink): Shows the highest oscillations, with a baseline around 10⁻² and peaks reaching close to 10⁻¹.
    * `REC-OneSide-NoIS (0.6, 2.0)` (Solid Dark Purple): Has the lowest overall values, oscillating between 10⁻⁴ and 10⁻³.
    * `REC-TwoSide-NoIS (0.2, 0.25)` (Solid Orange): Oscillates with a baseline around 10⁻² and peaks near 5 × 10⁻².
    * `REC-TwoSide-NoIS (0.6, 2.0)` (Dotted Orange): Follows a similar pattern to its solid counterpart but with slightly lower peaks.
* **Pattern:** The oscillations are synchronized across all series, with peaks and troughs occurring at the same training steps (approximately every 20 steps, consistent with `sync_interval = 20`).
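One way to make the "approximately every 20 steps" reading checkable is to estimate the period from the spacing between peaks. The sketch below runs on a synthetic sawtooth, not on the figure's data, which is not available here. The helper names `peak_steps` and `estimate_period` are hypothetical, and the assumption that the curve climbs within each window and drops at each multiple of `sync_interval` is an illustration only.

```python
from statistics import median

def peak_steps(values):
    """Return the indices that are strict local maxima of the series."""
    return [
        i for i in range(1, len(values) - 1)
        if values[i - 1] < values[i] > values[i + 1]
    ]

def estimate_period(values):
    """Estimate the oscillation period as the median gap between peaks."""
    peaks = peak_steps(values)
    gaps = [b - a for a, b in zip(peaks, peaks[1:])]
    return median(gaps) if gaps else None

sync_interval = 20

# Synthetic placeholder series (NOT the figure's data): the value climbs
# within each window and drops back at every multiple of sync_interval.
synthetic = [1e-3 * (1 + step % sync_interval) for step in range(400)]

period = estimate_period(synthetic)
print(period)
```

Applied to the real logged clipping fractions, a median peak gap close to 20 would support the link to `sync_interval`. A noisy series would likely need smoothing before peak detection.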

### Key Observations

1. **Performance Convergence:** Despite different starting points and clipping behaviors, all methods achieve nearly identical high training rewards (~0.95-1.00) by the end of 400 steps.

2. **Clipping Fraction Hierarchy:** Based on the approximate baselines and peaks above, the clipping fraction magnitudes order as: `REC-Ring-NoIS` > `REC-TwoSide-NoIS` > `REC-OneSide-NoIS (0.2, 0.25)` > `REC-OneSide-IS (0.2, 0.25)` > `REC-OneSide-NoIS (0.6, 2.0)`.

3. **Impact of IS (presumably importance sampling):** The `REC-OneSide-IS` variant (dotted purple) shows clipping fractions roughly an order of magnitude lower than its `NoIS` counterpart (solid purple), with peaks near 10⁻³ rather than 10⁻², while achieving a similar final reward.

4. **Impact of Parameters (0.2, 0.25) vs (0.6, 2.0):** For the `OneSide-NoIS` method, the (0.6, 2.0) parameter set (dark purple) results in lower initial reward, slower initial learning, and lower clipping fractions compared to the (0.2, 0.25) set (purple).

5. **Synchronized Oscillations:** The clipping fraction oscillations line up across all methods with a period of roughly 20 steps, which is consistent with the `sync_interval = 20` setting driving the training dynamics at a fixed frequency.

### Interpretation

This data suggests an experiment comparing variants of a method labeled "REC" (likely a reinforcement learning or optimization algorithm; the chart does not expand the name) under a fixed synchronization interval. The primary finding is that **all tested variants are effective at maximizing the training reward**, converging to similar high performance. The key differences lie in their *training dynamics*, specifically in the clipping fraction, i.e., how often clipping is triggered. The chart labels do not indicate whether the clipping applies to gradients or to some other quantity.

The `REC-Ring-NoIS` method operates with the highest clipping fractions, suggesting that its updates hit the clipping bounds most often. In contrast, `REC-OneSide-NoIS (0.6, 2.0)` has the smallest clipping fractions, suggesting that its updates rarely reach the bounds. `REC-OneSide-IS (0.2, 0.25)` falls between the two `OneSide-NoIS` settings, which suggests that importance sampling may help stabilize updates and reduce how often clipping is triggered.
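To make "clipping fraction" concrete, here is a minimal sketch under a loudly stated assumption: that each numeric pair is a lower and upper clipping width `(eps_low, eps_high)` around 1, applied to some per-sample ratio. The figure does not define the pairs, so this reading, the function name `clip_fraction`, and the sample ratios are all hypothetical.

```python
def clip_fraction(ratios, eps_low, eps_high):
    """Fraction of ratios that fall outside [1 - eps_low, 1 + eps_high].

    ASSUMPTION: the numeric pair in each legend entry is (eps_low, eps_high).
    The figure itself does not state this.
    """
    lower, upper = 1.0 - eps_low, 1.0 + eps_high
    clipped = sum(1 for r in ratios if r < lower or r > upper)
    return clipped / len(ratios)

# Hypothetical per-sample ratios, chosen only to illustrate the computation.
ratios = [0.5, 0.85, 0.95, 1.0, 1.1, 1.3, 1.8, 3.5]

narrow = clip_fraction(ratios, 0.2, 0.25)  # bounds [0.8, 1.25]
wide = clip_fraction(ratios, 0.6, 2.0)     # bounds [0.4, 3.0]

print(narrow, wide)
```

Under this reading, wider bounds clip fewer samples, which would be consistent with `REC-OneSide-NoIS (0.6, 2.0)` showing lower clipping fractions than `REC-OneSide-NoIS (0.2, 0.25)`. It does not explain the differences between the OneSide, TwoSide, and Ring variants, which depend on definitions the figure does not give.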

The synchronized sawtooth pattern in clipping fraction is most plausibly tied to the `sync_interval = 20` parameter: a periodic reset or synchronization event would let the clipping fraction build up between events and drop after each one, producing a regular cycle. The fact that reward converges similarly even though clipping fractions span roughly three orders of magnitude (about 10⁻⁴ to 10⁻¹) implies the task may be robust to a range of update magnitudes, or that the clipping mechanism is effectively normalizing the learning process across all variants. The experiment highlights how algorithmic choices (OneSide vs. TwoSide vs. Ring, with or without IS) and hyperparameters (the numeric pairs) primarily affect the *path* (clipping behavior) rather than the *destination* (final reward) in this specific setting.
