## Flowchart: Federated Learning System Architecture

### Overview

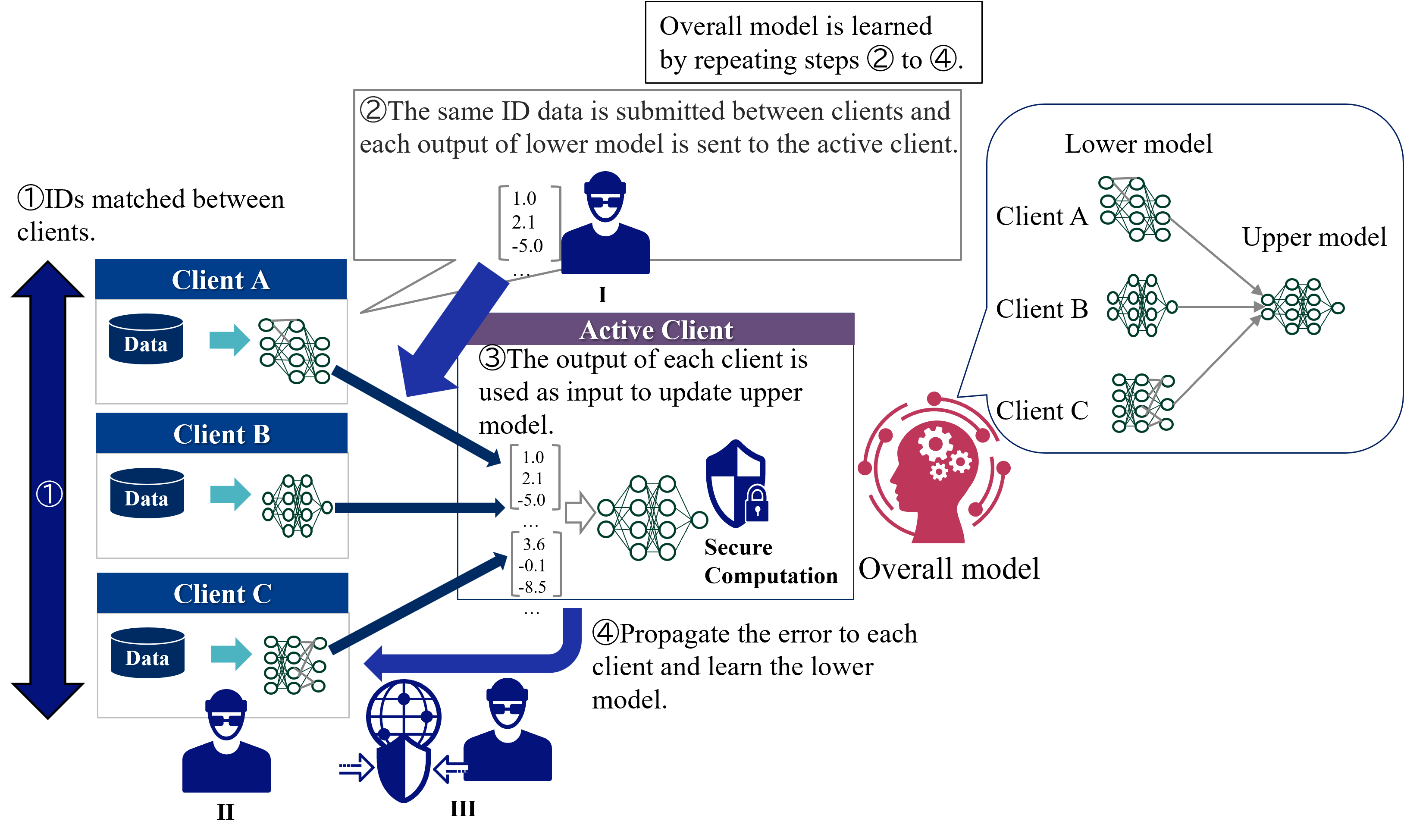

The diagram illustrates a federated learning system in which three clients (A, B, and C) collaboratively train a shared model while keeping their data private. The process has four numbered steps: ID matching, data submission, secure computation, and error propagation.

### Components

1. **Clients**:

   - Client A, Client B, Client C (each with private data and local lower models)

   - Active Client (highlighted in purple, receiving outputs from other clients)

2. **Models**:

   - Lower models (client-specific neural networks)

   - Upper model (global model updated by aggregated client outputs)

3. **Process Flow**:

   - Step ①: ID matching between clients

   - Step ②: Data submission between clients

   - Step ③: Secure computation using client outputs

   - Step ④: Error propagation for model refinement
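Step ① can be illustrated with a small sketch. The diagram does not say which matching protocol is used, so everything below is an assumption: the helper names `blind_ids` and `match_ids`, the shared salt, and the sample IDs are all hypothetical. Salted hashing is used only because it is easy to read; it is not a secure private set intersection protocol when IDs are guessable, and a real system would likely use a dedicated PSI scheme instead.

```python
import hashlib

def blind_ids(ids, salt):
    """Map each salted SHA-256 digest back to its raw ID (hypothetical helper)."""
    return {hashlib.sha256((salt + i).encode()).hexdigest(): i for i in ids}

def match_ids(local_ids, remote_digests, salt):
    """Return the local IDs whose digests also appear in the remote digest set."""
    local = blind_ids(local_ids, salt)
    return sorted(raw for digest, raw in local.items() if digest in remote_digests)

# Illustrative IDs, not taken from the diagram.
SALT = "shared-secret-salt"  # assumed to be agreed on by all clients beforehand
ids_a = ["u1", "u2", "u3", "u5"]
ids_b = ["u2", "u3", "u4", "u5"]
ids_c = ["u3", "u5", "u6"]

# Clients B and C expose only digests; raw IDs never leave the client.
digests_b = set(blind_ids(ids_b, SALT))
digests_c = set(blind_ids(ids_c, SALT))

# Client A keeps the IDs that all three clients have in common.
common = match_ids(ids_a, digests_b & digests_c, SALT)
print(common)  # ['u3', 'u5']
```

Only the records in `common` would take part in the later steps, so that every client's lower model processes the same set of entities.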

### Detailed Analysis

- **Client Data Flow**:

  - Each client (A/B/C) processes local data through its lower model

  - Outputs (numerical values like 1.0, 2.1, -5.0) are sent to the active client

  - Example outputs shown: Client A → [1.0], Client B → [2.1, -5.0], Client C → [3.6, -0.1, -8.5]

- **Secure Computation**:

  - Shield icon with lock symbol indicates encrypted data processing

  - Outputs are combined securely to update the upper model

- **Model Update Mechanism**:

  - Upper model receives aggregated client outputs

  - Error propagation (Step ④) sends feedback to individual clients

  - Lower models are refined iteratively through this feedback loop
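One round of Steps ②–④ can be sketched as follows. This is a minimal illustration under several assumptions that the diagram does not confirm: the lower models and the upper model are plain linear maps, client outputs are combined by concatenation, the loss is squared error, and the secure-computation layer is omitted so that the data flow stays visible. The `Client` class, `train_round` function, weights, features, and target are all hypothetical. The output sizes (1, 2, and 3 values) mirror the example outputs in the diagram.

```python
def matvec(W, x):
    """Multiply matrix W (list of rows) by vector x."""
    return [sum(w * v for w, v in zip(row, x)) for row in W]

class Client:
    """Holds private features x and a linear lower model W (assumed form)."""
    def __init__(self, W, x):
        self.W, self.x = W, x

    def forward(self):
        # Only this output leaves the client, never self.x.
        return matvec(self.W, self.x)

    def backward(self, grad_out, lr):
        # Gradient of the loss w.r.t. W[i][j] is grad_out[i] * x[j].
        for i, g in enumerate(grad_out):
            for j, xj in enumerate(self.x):
                self.W[i][j] -= lr * g * xj

def train_round(clients, upper, target, lr=0.05):
    outputs = [c.forward() for c in clients]       # Step 2: submit outputs
    h = [v for out in outputs for v in out]        # active client concatenates
    pred = sum(u * v for u, v in zip(upper, h))    # Step 3: upper model (unsecured here)
    err = pred - target
    grad_h = [err * u for u in upper]              # computed before upper is updated
    for k, v in enumerate(h):
        upper[k] -= lr * err * v                   # update the upper model
    start = 0
    for c, out in zip(clients, outputs):           # Step 4: error propagation
        c.backward(grad_h[start:start + len(out)], lr)
        start += len(out)
    return 0.5 * err ** 2

clients = [
    Client([[0.5, -0.2]], [1.0, 2.0]),                # A: 1 output
    Client([[0.1, 0.3], [-0.4, 0.2]], [0.5, -1.0]),   # B: 2 outputs
    Client([[0.2], [0.1], [-0.3]], [2.0]),            # C: 3 outputs
]
upper = [0.1] * 6  # one weight per concatenated output value
losses = [train_round(clients, upper, target=1.0) for _ in range(20)]
print(losses[0], losses[-1])
```

Each client receives only the slice of the gradient that corresponds to its own outputs, which is what lets the lower models improve without any client seeing another client's features.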

### Key Observations

1. **Cyclical Process**: Steps ②-④ form a closed loop for continuous model improvement

2. **Privacy Preservation**: Data remains localized (Step ① ensures ID matching without raw data sharing)

3. **Hierarchical Structure**: Two-tier model architecture (lower models → upper model)

4. **Active Client Role**: Central node coordinating updates from multiple clients

### Interpretation

This architecture demonstrates a privacy-preserving machine learning framework where:

- Clients maintain data sovereignty while contributing to global model training

- Secure computation enables collaborative learning without data exposure

- Iterative error propagation creates a feedback loop for model refinement

- The active client acts as a coordinator, aggregating updates while preserving individual client privacy
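The diagram does not identify the protocol behind the secure computation step. As one possible building block, the sketch below shows additive secret sharing, where a value is split into random shares that reveal nothing individually but sum back to the original. The choice of technique, the modulus `PRIME`, the fixed-point `SCALE`, and all function names are assumptions made for illustration, not details taken from the source.

```python
import secrets

PRIME = 2**61 - 1  # modulus for share arithmetic (assumed)
SCALE = 10**6      # fixed-point scale for real-valued outputs (assumed)

def encode(x):
    """Convert a float to a fixed-point residue modulo PRIME."""
    return round(x * SCALE) % PRIME

def decode(v):
    """Convert a residue back to a float, treating large residues as negative."""
    if v > PRIME // 2:
        v -= PRIME
    return v / SCALE

def share(value, n):
    """Split value into n additive shares that sum to encode(value) mod PRIME."""
    shares = [secrets.randbelow(PRIME) for _ in range(n - 1)]
    shares.append((encode(value) - sum(shares)) % PRIME)
    return shares

def reconstruct(shares):
    """Recombine shares into the original float."""
    return decode(sum(shares) % PRIME)

# Two client outputs from the diagram, each split into three shares.
shares_a = share(1.0, 3)
shares_b = share(2.1, 3)

# Each share holder adds the shares it received; no holder sees 1.0 or 2.1.
summed = [(sa + sb) % PRIME for sa, sb in zip(shares_a, shares_b)]
total = reconstruct(summed)
print(total)
```

Only the combined result is ever reconstructed, which matches the idea that outputs are processed without being exposed individually.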

The system's strength lies in its balance between collaborative learning and data security, with the active client also serving as the computational hub. The example outputs are real-valued vectors of different lengths (one, two, and three values for Clients A, B, and C), which suggests a regression-type task in which each client contributes a partial result that is combined into a single model output.