## Diagram: Two Major Pain Points in KG-LLM Reasoning

### Overview

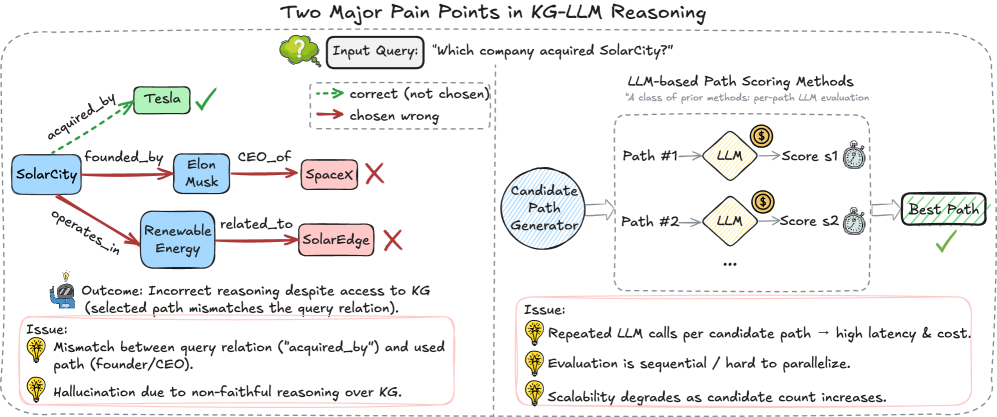

This diagram illustrates two key challenges in Knowledge Graph (KG) and Large Language Model (LLM) reasoning. The diagram is split into two main sections: a left side demonstrating a reasoning failure with a KG, and a right side illustrating the process of LLM-based path scoring. The diagram uses nodes, edges, and visual cues (checkmarks, crosses) to represent relationships and outcomes.

### Components

The diagram consists of the following components:

* **Nodes:** Represent entities like "SolarCity", "Tesla", "Elon Musk", "SpaceX", "Renewable Energy", "SolarEdge", "LLM", "Candidate Path Generator", "Best Path".

* **Edges:** Represent relationships between entities, labeled with phrases like "acquired_by", "founded_by", "CEO_of", "operates_in", "related_to".

* **Input Query:** "Which company acquired SolarCity?"

* **Outcome:** "Incorrect reasoning despite access to KG (selected path mismatches the query relation)."

* **Issues (Left Side):**

    * "Mismatch between query relation ("acquired_by") and used path (Founder/CEO)."

    * "Hallucination due to non-faithful reasoning over KG."

* **LLM-based Path Scoring Methods:** A description stating "A class of prior methods: per-path LLM evaluation".

* **Issues (Right Side):**

    * "Repeated LLM calls per candidate path – high latency & cost."

    * "Evaluation is sequential / hard to parallelize."

    * "Scalability degrades as candidate count increases."

### Detailed Analysis

**Left Side: Reasoning Failure**

* **SolarCity** is connected to **Tesla** via an edge labeled "acquired_by" with a checkmark, indicating the correct path.

* **SolarCity** is also connected to **Elon Musk** via "founded_by".

* **Elon Musk** is connected to **SpaceX** via "CEO_of".

* **SolarCity** is connected to **Renewable Energy** via "operates_in".

* **SolarCity** is connected to **SolarEdge** via "related_to" with a cross, indicating an incorrect path.

* The "Outcome" states "Incorrect reasoning despite access to KG (selected path mismatches the query relation)."

* The "Issues" listed are:

    * "Mismatch between query relation ("acquired_by") and used path (Founder/CEO)."

    * "Hallucination due to non-faithful reasoning over KG."
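The mismatch described above can be made concrete with a minimal sketch. It assumes the graph is stored as plain (head, relation, tail) triples taken from the edges listed in this description; the helpers `follow` and `is_faithful` are hypothetical names introduced only for illustration and do not come from the diagram. In this toy graph, following the Founder/CEO path from SolarCity ends at SpaceX, while the relation the query actually asks about ends at Tesla.

```python
from typing import List, Sequence, Tuple

Triple = Tuple[str, str, str]  # (head, relation, tail)

# Edges as listed in the diagram description.
KG: List[Triple] = [
    ("SolarCity", "acquired_by", "Tesla"),
    ("SolarCity", "founded_by", "Elon Musk"),
    ("Elon Musk", "CEO_of", "SpaceX"),
    ("SolarCity", "operates_in", "Renewable Energy"),
    ("SolarCity", "related_to", "SolarEdge"),
]


def follow(kg: List[Triple], start: str, relations: Sequence[str]) -> List[str]:
    """Follow a sequence of relations from `start`; return the end entities."""
    frontier = {start}
    for rel in relations:
        frontier = {t for (h, r, t) in kg if h in frontier and r == rel}
    return sorted(frontier)


def is_faithful(query_relation: str, path_relations: Sequence[str]) -> bool:
    """Hypothetical check: the path must consist of exactly the query relation."""
    return list(path_relations) == [query_relation]


# Query: "Which company acquired SolarCity?" -> relation "acquired_by".
correct = follow(KG, "SolarCity", ["acquired_by"])
wrong = follow(KG, "SolarCity", ["founded_by", "CEO_of"])

print(correct, is_faithful("acquired_by", ["acquired_by"]))
print(wrong, is_faithful("acquired_by", ["founded_by", "CEO_of"]))
```

Both paths exist in the graph, so having access to the KG is not enough on its own: the failure comes from choosing a path whose relations do not match the query relation.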

**Right Side: LLM-based Path Scoring**

* A "Candidate Path Generator" produces multiple paths.

* Each "Path" (Path #1, Path #2, etc.) is fed into an "LLM".

* The LLM assigns a "Score" (s1, s2, etc.) to each path.

* The path with the highest score is selected as the "Best Path".

* The items listed under "Issue" are:

    * "Repeated LLM calls per candidate path – high latency & cost."

    * "Evaluation is sequential / hard to parallelize."

    * "Scalability degrades as candidate count increases."
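The per-path scoring loop on the right side can be sketched as follows. This is an illustration under stated assumptions, not any specific method from the literature: `CountingScorer` is a hypothetical stand-in for an LLM call that uses a toy exact-match rule instead of a real model, and `select_best_path` is an assumed helper name. The sketch only shows that the number of scorer invocations grows one-for-one with the number of candidate paths.

```python
from typing import Callable, List, Sequence, Tuple

Path = Tuple[str, ...]  # a path is represented by its sequence of relations


class CountingScorer:
    """Hypothetical stand-in for an LLM call; counts how often it is invoked."""

    def __init__(self, query_relation: str) -> None:
        self.query_relation = query_relation
        self.calls = 0

    def __call__(self, path: Path) -> float:
        self.calls += 1
        # Toy rule, NOT a real model: full score only for an exact relation match.
        return 1.0 if path == (self.query_relation,) else 0.0


def select_best_path(
    paths: Sequence[Path], score: Callable[[Path], float]
) -> Tuple[Path, List[float]]:
    """Score every candidate with one call each, then pick the highest score."""
    scores = [score(p) for p in paths]  # one scorer call per candidate path
    best_index = max(range(len(paths)), key=scores.__getitem__)
    return paths[best_index], scores


candidates: List[Path] = [
    ("founded_by", "CEO_of"),
    ("acquired_by",),
    ("related_to",),
    ("operates_in",),
]
scorer = CountingScorer("acquired_by")
best, scores = select_best_path(candidates, scorer)

print(best, scores, scorer.calls)
```

With four candidates the scorer is called four times; if each call were a real LLM request, latency and cost would scale the same way, which is the scalability concern the diagram lists.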

### Key Observations

* The diagram contrasts the relation the query asks for ("acquired_by") with the paths that lead reasoning astray: the Founder/CEO path named in the issues list and the "related_to" edge marked with a cross.

* The LLM-based path scoring method, while attempting to evaluate multiple paths, suffers from scalability and efficiency issues due to repeated LLM calls.

* The use of checkmarks and crosses clearly indicates the correctness or incorrectness of different reasoning paths.

* The diagram visually represents the concept of "hallucination" in LLMs, where the model generates incorrect information despite having access to a knowledge graph.

### Interpretation

The diagram demonstrates two challenges of integrating Knowledge Graphs with Large Language Models for reasoning tasks. KGs provide structured knowledge, but an LLM can still fail to use it correctly: on the left side, the reasoning follows the Founder/CEO path even though the query asks about the "acquired_by" relation, which suggests the model may be prioritizing paths based on superficial similarity or bias rather than on the query relation itself. The right side shows a class of prior methods that score each candidate path with a separate LLM call and keep the highest-scoring one; the diagram presents this approach as costly, hard to parallelize, and poorly scalable as the number of candidates grows. Taken together, the two halves imply a need for methods that align LLM reasoning with the underlying graph structure more faithfully and more efficiently, and that reduce "hallucination" in LLM-based systems. The diagram is a visual explanation of a technical problem, not a presentation of data.