## Document Screenshot: Retrieval Reranker Prompt Template

### Overview

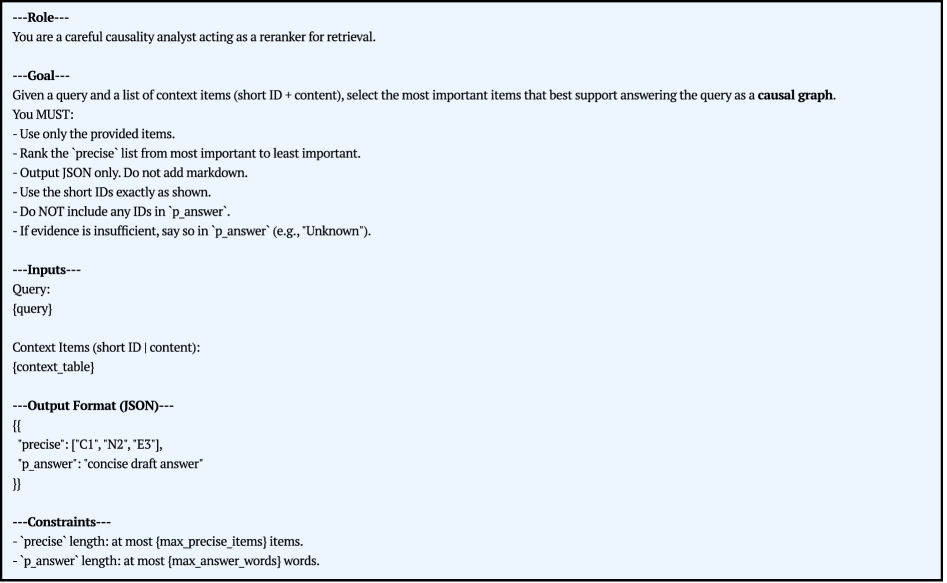

The image displays a structured text document, likely a system prompt or task specification for an AI model. It defines a role, goal, inputs, output format, and constraints for a retrieval reranking task focused on causal graph construction. The document is presented on a light blue background with black text, with each section header wrapped in triple dashes (e.g., `---Role---`).

### Components/Sections

The document is organized into five distinct sections:

1. **Role**: Defines the persona for the AI.

2. **Goal**: States the primary objective and mandatory requirements.

3. **Inputs**: Specifies the data provided to the model.

4. **Output Format (JSON)**: Defines the exact structure of the required response.

5. **Constraints**: Lists limitations on the output.

### Content Details (Full Transcription)

**---Role---**

You are a careful causality analyst acting as a reranker for retrieval.

**---Goal---**

Given a query and a list of context items (short ID + content), select the most important items that best support answering the query as a **causal graph**.

You MUST:

- Use only the provided items.

- Rank the `precise` list from most important to least important.

- Output JSON only. Do not add markdown.

- Use the short IDs exactly as shown.

- Do NOT include any IDs in `p_answer`.

- If evidence is insufficient, say so in `p_answer` (e.g., "Unknown").

**---Inputs---**

Query:

{query}

Context Items (short ID | content):

{context_table}

**---Output Format (JSON)---**

```json
{
  "precise": ["C1", "N2", "E3"],
  "p_answer": "concise draft answer"
}
```

**---Constraints---**

- `precise` length: at most {max_precise_items} items.

- `p_answer` length: at most {max_answer_words} words.

### Key Observations

* **Template Nature**: The document contains placeholders (`{query}`, `{context_table}`, `{max_precise_items}`, `{max_answer_words}`), indicating it is a reusable template where specific values are inserted at runtime.

* **Specific Task Focus**: The goal is not general retrieval but specifically selecting items to support building a **causal graph**, implying a focus on cause-effect relationships.

* **Strict Output Control**: The instructions are highly prescriptive. They mandate JSON-only output and exact ID usage, and they prohibit both markdown formatting and IDs in the answer field.

* **Evidence Handling**: There is a clear protocol for insufficient evidence: the model must say so in `p_answer`, with "Unknown" given as an example wording. The intent appears to be to discourage guessing.

* **Ranking Requirement**: The `precise` list must be ordered from most to least important, adding a layer of evaluative judgment beyond simple selection.
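
The template-nature observation above can be illustrated with a minimal sketch of runtime substitution. It assumes Python; the helper names `build_context_table` and `render_prompt` are hypothetical, and the template text is abbreviated from the transcription. Because the template contains literal JSON braces, `str.format` would try to interpret them as replacement fields, so this sketch substitutes only the four named placeholders.

```python
import re

# Abbreviated copy of the transcribed template (illustrative, not the full text).
TEMPLATE = """---Inputs---
Query:
{query}
Context Items (short ID | content):
{context_table}
---Output Format (JSON)---
{
  "precise": ["C1", "N2", "E3"],
  "p_answer": "concise draft answer"
}
---Constraints---
- `precise` length: at most {max_precise_items} items.
- `p_answer` length: at most {max_answer_words} words.
"""

# Matches only the four placeholders named in the template.
PLACEHOLDER = re.compile(
    r"\{(query|context_table|max_precise_items|max_answer_words)\}"
)


def build_context_table(items):
    """Format (short_id, content) pairs as 'ID | content' lines (assumed layout)."""
    return "\n".join(f"{short_id} | {content}" for short_id, content in items)


def render_prompt(template, **values):
    """Replace the known placeholders in one pass; other braces stay untouched."""
    return PLACEHOLDER.sub(lambda m: str(values[m.group(1)]), template)


# Made-up example data, for illustration only.
items = [("C1", "Heavy rain raised the river level."), ("N2", "Flooding")]
prompt = render_prompt(
    TEMPLATE,
    query="What caused the flooding?",
    context_table=build_context_table(items),
    max_precise_items=5,
    max_answer_words=50,
)
print(prompt)
```

A single-pass regex substitution also means that placeholder-like text inside a context item is not expanded a second time.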

### Interpretation

This document is a technical specification for a **causal retrieval-augmented generation (RAG) component**. It outlines the logic for a reranker that filters and orders retrieved context items based on their relevance to constructing a causal explanation for a given query.

The design reveals several underlying principles:

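1. **Causal Reasoning Priority**: The system is engineered to prioritize information that can map onto the nodes and edges of a causal graph. The short IDs in the example output ("C1", "N2", "E3") carry letter prefixes that may encode item types; the template does not define them, so a reading such as Cause 1, Node 2, Effect 3 is only a guess.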

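2. **Predictable Output Format**: By enforcing a strict JSON schema and forbidding markdown, the template makes the response machine-readable for downstream processing. This constrains the shape of the output, not the model's choices, so the selected items and answer text may still vary between runs.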

3. **Resource Management**: The constraints on list and answer length (`max_precise_items`, `max_answer_words`) are practical limits to control token usage, processing time, and the conciseness of the final answer.

4. **Auditability**: Requiring the use of exact short IDs allows for traceability back to the original source content within the context table.
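
The points above about output format, length limits, and ID traceability suggest what a downstream consumer might check. The following is a minimal sketch, assuming Python; `validate_rerank_output` is a hypothetical helper, not part of the pictured template, and counting words by whitespace splitting is an assumption about how `max_answer_words` would be measured.

```python
import json
import re


def validate_rerank_output(raw, context, max_precise_items, max_answer_words):
    """Check a raw model response against the template's rules.

    `context` maps short IDs to item content. Returns (parsed, errors).
    """
    try:
        data = json.loads(raw)  # markdown-fenced output fails here
    except json.JSONDecodeError as exc:
        return None, [f"not valid JSON: {exc}"]
    if not isinstance(data, dict):
        return None, ["top-level value is not a JSON object"]

    errors = []
    precise = data.get("precise")
    answer = data.get("p_answer")
    if not isinstance(precise, list) or not all(isinstance(i, str) for i in precise):
        errors.append("`precise` must be a list of strings")
        precise = []
    if not isinstance(answer, str):
        errors.append("`p_answer` must be a string")
        answer = ""

    # "Use only the provided items" and "use the short IDs exactly as shown".
    unknown = [i for i in precise if i not in context]
    if unknown:
        errors.append(f"unknown IDs in `precise`: {unknown}")
    if len(precise) > max_precise_items:
        errors.append(f"`precise` has more than {max_precise_items} items")
    if len(answer.split()) > max_answer_words:
        errors.append(f"`p_answer` has more than {max_answer_words} words")
    # "Do NOT include any IDs in `p_answer`".
    leaked = [i for i in context if re.search(rf"\b{re.escape(i)}\b", answer)]
    if leaked:
        errors.append(f"IDs leaked into `p_answer`: {leaked}")
    return data, errors


# Made-up example data, for illustration only.
context = {
    "C1": "Heavy rain raised the river level.",
    "N2": "Flooding",
    "E3": "The levee failed overnight.",
}
raw = '{"precise": ["C1", "E3"], "p_answer": "Heavy rain caused the flooding."}'
data, errors = validate_rerank_output(raw, context, 5, 50)
# Traceability: resolve the ranked IDs back to their source content.
evidence = [context[i] for i in data["precise"]]
```

Returning a list of errors rather than raising on the first one lets a caller log every violation from a single response.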

In short, this template defines an intermediate step in a pipeline that moves from a broad query to a structured causal explanation by selecting and ranking supporting evidence.