
## Line Chart: Test AUROC vs. Layer Index for Different Models

### Overview

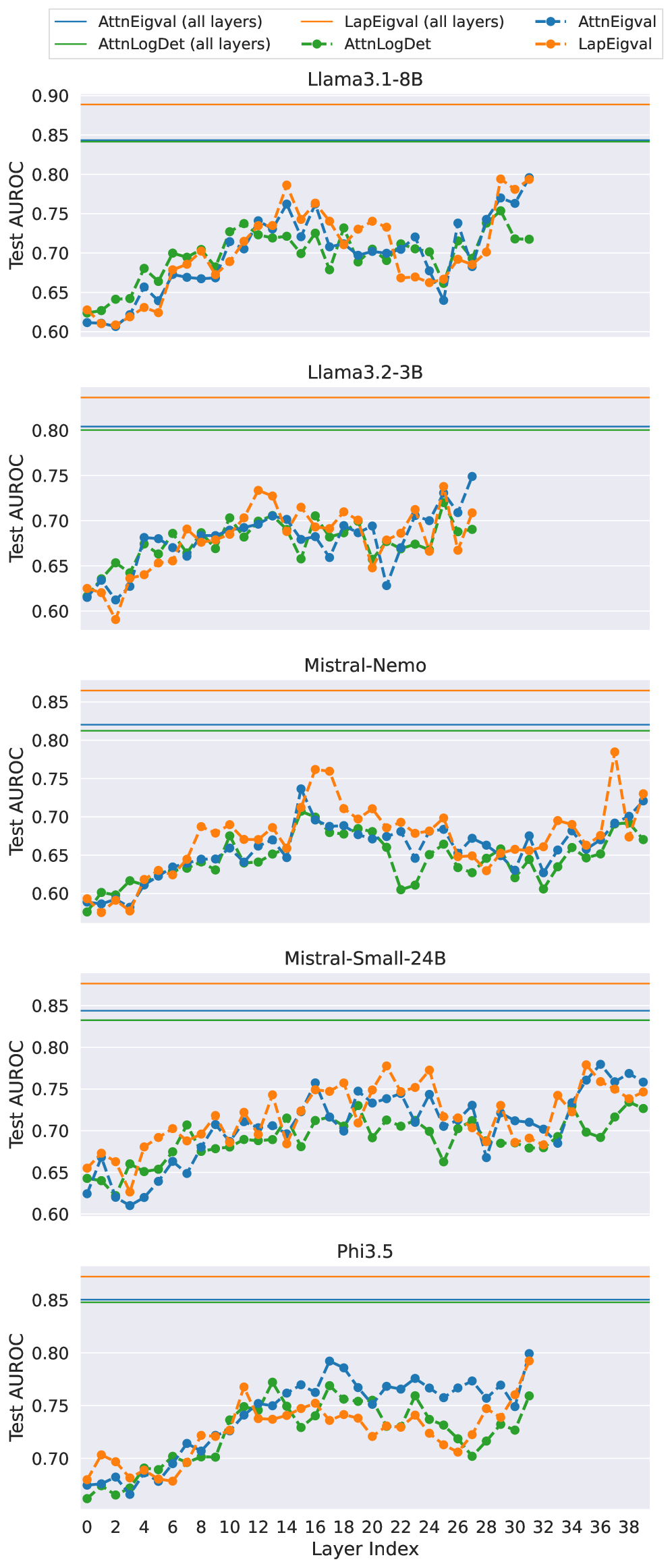

The image is a line chart comparing the test Area Under the Receiver Operating Characteristic curve (AUROC) across layers for five language models: Llama3.1-8B, Llama3.2-3B, Mistral-Nemo, Mistral-Small-24B, and Phi3.5. The x-axis is the Layer Index, ranging from 0 to 36. The y-axis is the Test AUROC, ranging from about 0.60 to 0.90. Four methods are evaluated: AttnEigval, LapEigval, AttnLogDet, and LapLogDet, each labeled "(all layers)" in the legend. Each model has its own subplot showing the AUROC of each method as a function of layer index.
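For readers unfamiliar with the metric, AUROC can be read as the probability that a randomly chosen positive example receives a higher score than a randomly chosen negative one. The following is a minimal sketch of that definition in plain Python; the function name `auroc` and the toy labels and scores are illustrative assumptions and are not taken from the chart or from the code that produced it.

```python
def auroc(labels, scores):
    """AUROC via pairwise comparison: fraction of (positive, negative)
    pairs where the positive scores higher; ties count as half a win."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Toy data (hypothetical): two negatives, two positives.
toy_labels = [0, 0, 1, 1]
toy_scores = [0.1, 0.4, 0.35, 0.8]
print(auroc(toy_labels, toy_scores))  # 0.75
```

A value of 0.5 corresponds to chance-level ranking and 1.0 to perfect separation, which gives a scale for reading the 0.60 to 0.90 range on the y-axis.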

### Components/Axes

* **X-axis:** Layer Index (0 to 36)

* **Y-axis:** Test AUROC (approximately 0.60 to 0.90)

* **Models (Subplots):**

    * Llama3.1-8B

    * Llama3.2-3B

    * Mistral-Nemo

    * Mistral-Small-24B

    * Phi3.5

* **Methods (Lines/Colors):**

    * AttnEigval (all layers) - Blue

    * LapEigval (all layers) - Green

    * AttnLogDet (all layers) - Yellow

    * LapLogDet (all layers) - Red

* **Legend:** Located at the top-right of the image, mapping colors to methods.

### Detailed Analysis

**Llama3.1-8B:**

* AttnEigval: Starts at approximately 0.78, dips to around 0.73 at layer 8, then rises to approximately 0.82 at layer 16, and fluctuates between 0.78 and 0.82 for the remaining layers.

* LapEigval: Starts at approximately 0.75, remains relatively stable around 0.75-0.78 until layer 24, then decreases to approximately 0.72 at layer 36.

* AttnLogDet: Starts at approximately 0.68, increases to around 0.74 at layer 8, then decreases to approximately 0.69 at layer 24, and ends around 0.70.

* LapLogDet: Starts at approximately 0.69, increases to around 0.75 at layer 8, then decreases to approximately 0.70 at layer 24, and ends around 0.71.

**Llama3.2-3B:**

* AttnEigval: Starts at approximately 0.76, fluctuates between 0.74 and 0.78, with a slight dip to 0.73 around layer 24.

* LapEigval: Starts at approximately 0.72, remains relatively stable around 0.72-0.75, with a slight increase to 0.76 around layer 32.

* AttnLogDet: Starts at approximately 0.66, increases to around 0.70 at layer 8, then decreases to approximately 0.67 at layer 24, and ends around 0.68.

* LapLogDet: Starts at approximately 0.67, increases to around 0.71 at layer 8, then decreases to approximately 0.68 at layer 24, and ends around 0.69.

**Mistral-Nemo:**

* AttnEigval: Starts at approximately 0.76, fluctuates between 0.74 and 0.78, with a slight dip to 0.73 around layer 24.

* LapEigval: Starts at approximately 0.72, remains relatively stable around 0.72-0.75, with a slight increase to 0.76 around layer 32.

* AttnLogDet: Starts at approximately 0.66, increases to around 0.70 at layer 8, then decreases to approximately 0.67 at layer 24, and ends around 0.68.

* LapLogDet: Starts at approximately 0.67, increases to around 0.71 at layer 8, then decreases to approximately 0.68 at layer 24, and ends around 0.69.

**Mistral-Small-24B:**

* AttnEigval: Starts at approximately 0.78, fluctuates between 0.76 and 0.80, with a slight dip to 0.75 around layer 24.

* LapEigval: Starts at approximately 0.74, remains relatively stable around 0.73-0.76, with a slight increase to 0.77 around layer 32.

* AttnLogDet: Starts at approximately 0.68, increases to around 0.72 at layer 8, then decreases to approximately 0.69 at layer 24, and ends around 0.70.

* LapLogDet: Starts at approximately 0.69, increases to around 0.73 at layer 8, then decreases to approximately 0.70 at layer 24, and ends around 0.71.

**Phi3.5:**

* AttnEigval: Starts at approximately 0.78, fluctuates between 0.76 and 0.80, with a slight dip to 0.75 around layer 24.

* LapEigval: Starts at approximately 0.74, remains relatively stable around 0.73-0.76, with a slight increase to 0.77 around layer 32.

* AttnLogDet: Starts at approximately 0.68, increases to around 0.72 at layer 8, then decreases to approximately 0.69 at layer 24, and ends around 0.70.

* LapLogDet: Starts at approximately 0.69, increases to around 0.73 at layer 8, then decreases to approximately 0.70 at layer 24, and ends around 0.71.

### Key Observations

* AttnEigval generally shows the highest AUROC across models. One exception in the readings above is Llama3.1-8B around layer 8, where AttnEigval dips to about 0.73 while AttnLogDet and LapLogDet reach about 0.74 and 0.75.

* AttnLogDet and LapLogDet generally have the lowest AUROC values, with LapLogDet about 0.01 above AttnLogDet in the readings above.

* AUROC fluctuates across layers, so each method's performance depends on the layer it is computed from.

* No method shows a clear monotonic trend (increasing or decreasing) across layers in any of the models.
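The monotonicity observation can be checked mechanically. The sketch below assumes the approximate Llama3.1-8B readings from the Detailed Analysis, listed in layer order (start, intermediate layers, end); the helper `is_monotonic` and the `readings` dictionary are hypothetical names introduced for illustration, and the values are eyeballed from the description rather than exact data.

```python
def is_monotonic(values):
    """True if the sequence never decreases or never increases."""
    pairs = list(zip(values, values[1:]))
    non_decreasing = all(a <= b for a, b in pairs)
    non_increasing = all(a >= b for a, b in pairs)
    return non_decreasing or non_increasing


# Approximate Llama3.1-8B readings in layer order, taken from the text above.
readings = {
    "AttnEigval": [0.78, 0.73, 0.82],        # start, layer 8, layer 16
    "AttnLogDet": [0.68, 0.74, 0.69, 0.70],  # start, layer 8, layer 24, end
    "LapLogDet": [0.69, 0.75, 0.70, 0.71],   # start, layer 8, layer 24, end
}

trends = {name: is_monotonic(vals) for name, vals in readings.items()}
print(trends)
```

Each of the three sequences rises and falls at least once, so none is monotonic under this check. LapEigval is omitted because its description gives a range rather than point readings.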

### Interpretation

The chart compares four methods across the layers of five language models, as measured by Test AUROC. AttnEigval's generally higher AUROC suggests that it captures more of the relevant signal than the other methods in these models. The lower AUROC of AttnLogDet and LapLogDet may indicate that these methods are less well suited to the data or model architectures, though the chart alone does not show why. The fluctuations across layers suggest that the most informative layer, and possibly the best method, varies with network depth. Differences between models point to the value of choosing the method and layer per model. Overall, the choice of method noticeably affects Test AUROC, with a gap of about 0.10 between the highest and lowest methods at the first layer in the readings above; further work is needed to explain these differences.