TECHNICAL ASSET FINGERPRINT

a81251c212e2153f160fb06f


EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


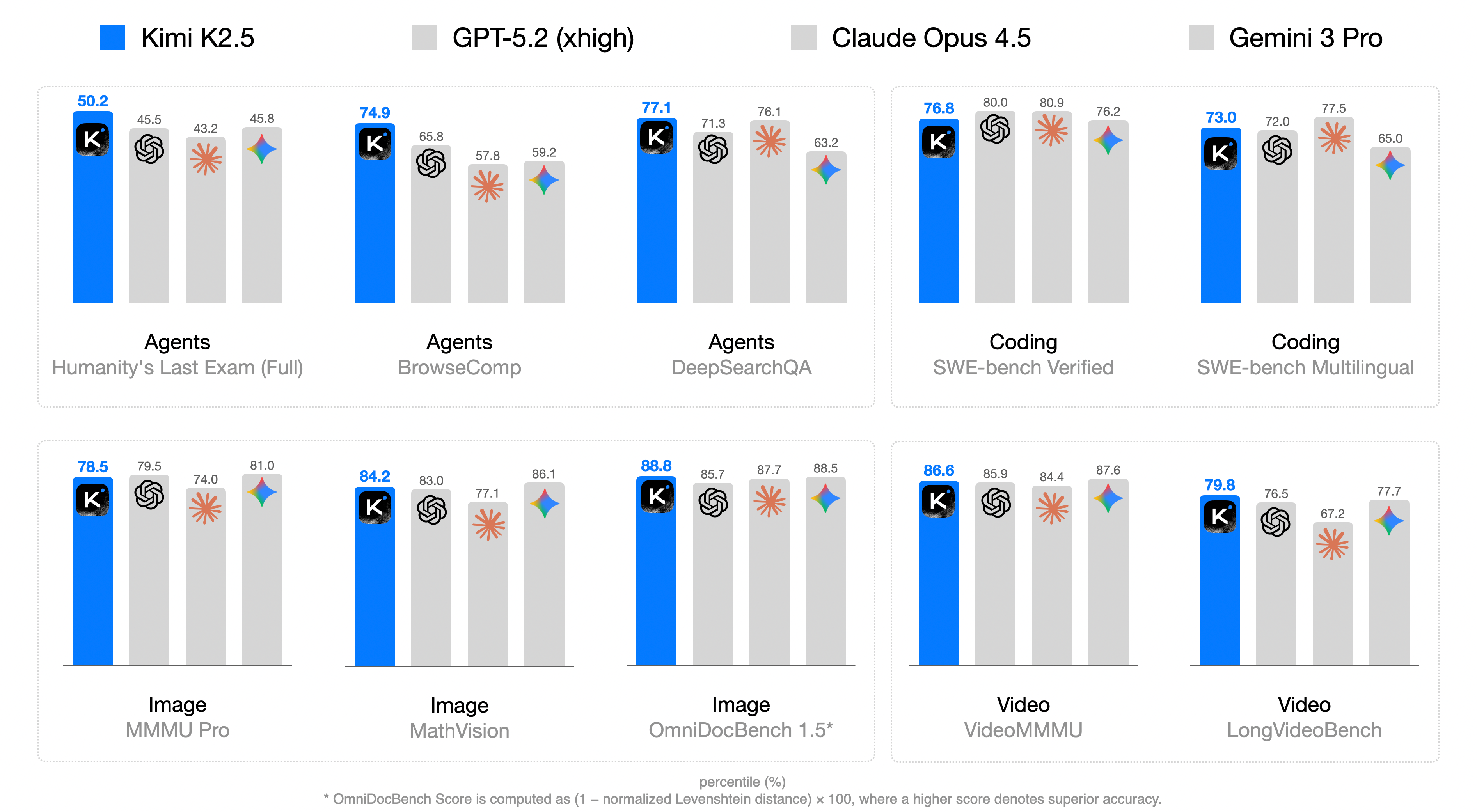

## Bar Chart: Model Performance Comparison

### Overview

The image presents a bar chart comparing the performance of four AI models (Kimi K2.5, GPT-5.2 (xhigh), Claude Opus 4.5, and Gemini 3 Pro) across ten benchmarks grouped into four categories: Agents, Coding, Image, and Video. Each bar is labeled with the model's score (in percent) on that benchmark.

### Components/Axes

* **Title:** Model Performance Comparison (inferred)

* **X-Axis:** Categorical, representing different tasks and benchmarks:
  * Agents: Humanity's Last Exam (Full), BrowseComp, DeepSearchQA
  * Coding: SWE-bench Verified, SWE-bench Multilingual
  * Image: MMMU Pro, MathVision, OmniDocBench 1.5*
  * Video: VideoMMMU, LongVideoBench

* **Y-Axis:** Percentile (%), a numerical scale from approximately 0 to 100 (inferred).

* **Legend:** Located at the top of the chart.
  * Blue: Kimi K2.5
  * Light Gray: GPT-5.2 (xhigh)
  * Dark Gray: Claude Opus 4.5
  * Lighter Gray: Gemini 3 Pro

* **Bars:** Represent each model's score on each benchmark; the height of the bar corresponds to the score.

* **Icons:** Each model has an associated icon above its bar.
  * Kimi K2.5: "K" in a rounded square
  * GPT-5.2 (xhigh): A swirling shape
  * Claude Opus 4.5: An asterisk-like shape
  * Gemini 3 Pro: A multi-colored diamond shape

### Detailed Analysis

**Agents**

* **Humanity's Last Exam (Full):**
  * Kimi K2.5 (Blue): 50.2
  * GPT-5.2 (xhigh) (Light Gray): 45.5
  * Claude Opus 4.5 (Dark Gray): 43.2
  * Gemini 3 Pro (Lighter Gray): 45.8

* **BrowseComp:**
  * Kimi K2.5 (Blue): 74.9
  * GPT-5.2 (xhigh) (Light Gray): 65.8
  * Claude Opus 4.5 (Dark Gray): 57.8
  * Gemini 3 Pro (Lighter Gray): 59.2

* **DeepSearchQA:**
  * Kimi K2.5 (Blue): 77.1
  * GPT-5.2 (xhigh) (Light Gray): 71.3
  * Claude Opus 4.5 (Dark Gray): 76.1
  * Gemini 3 Pro (Lighter Gray): 63.2

**Coding**

* **SWE-bench Verified:**
  * Kimi K2.5 (Blue): 76.8
  * GPT-5.2 (xhigh) (Light Gray): 80.0
  * Claude Opus 4.5 (Dark Gray): 80.9
  * Gemini 3 Pro (Lighter Gray): 76.2

* **SWE-bench Multilingual:**
  * Kimi K2.5 (Blue): 73.0
  * GPT-5.2 (xhigh) (Light Gray): 72.0
  * Claude Opus 4.5 (Dark Gray): 77.5
  * Gemini 3 Pro (Lighter Gray): 65.0

**Image**

* **MMMU Pro:**
  * Kimi K2.5 (Blue): 78.5
  * GPT-5.2 (xhigh) (Light Gray): 79.5
  * Claude Opus 4.5 (Dark Gray): 74.0
  * Gemini 3 Pro (Lighter Gray): 81.0

* **MathVision:**
  * Kimi K2.5 (Blue): 84.2
  * GPT-5.2 (xhigh) (Light Gray): 83.0
  * Claude Opus 4.5 (Dark Gray): 77.1
  * Gemini 3 Pro (Lighter Gray): 86.1

* **OmniDocBench 1.5\*:**
  * Kimi K2.5 (Blue): 88.8
  * GPT-5.2 (xhigh) (Light Gray): 85.7
  * Claude Opus 4.5 (Dark Gray): 87.7
  * Gemini 3 Pro (Lighter Gray): 88.5

**Video**

* **VideoMMMU:**
  * Kimi K2.5 (Blue): 86.6
  * GPT-5.2 (xhigh) (Light Gray): 85.9
  * Claude Opus 4.5 (Dark Gray): 84.4
  * Gemini 3 Pro (Lighter Gray): 87.6

* **LongVideoBench:**
  * Kimi K2.5 (Blue): 79.8
  * GPT-5.2 (xhigh) (Light Gray): 76.5
  * Claude Opus 4.5 (Dark Gray): 67.2
  * Gemini 3 Pro (Lighter Gray): 77.7

### Key Observations

* Kimi K2.5 (Blue) has the highest score on five of the ten benchmarks (all three Agents tasks, OmniDocBench 1.5, and LongVideoBench) and never ranks below third.

* GPT-5.2 (xhigh) (Light Gray) does not lead any benchmark, though it beats Kimi K2.5 on SWE-bench Verified (80.0 vs. 76.8) and MMMU Pro (79.5 vs. 78.5).

* Claude Opus 4.5 (Dark Gray) swings from first to last: it leads both SWE-bench tasks (80.9 and 77.5) but has the lowest score on six of the ten benchmarks, including LongVideoBench (67.2).

* Gemini 3 Pro (Lighter Gray) leads MMMU Pro (81.0), MathVision (86.1), and VideoMMMU (87.6) but has the lowest score on DeepSearchQA (63.2) and on both SWE-bench tasks.

* The gap between the highest and lowest score varies widely by benchmark, from 3.1 points on OmniDocBench 1.5 to 17.1 points on BrowseComp.
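To make the spread observation concrete, the following sketch recomputes the gap between the highest and lowest labeled score on each benchmark. It is a minimal Python illustration only. The `SCORES` table is transcribed from the bar labels above, and the helper name `spreads` is hypothetical, not part of any published evaluation code.

```python
# Labeled bar values, in legend order:
# Kimi K2.5, GPT-5.2 (xhigh), Claude Opus 4.5, Gemini 3 Pro.
SCORES = {
    "Humanity's Last Exam (Full)": (50.2, 45.5, 43.2, 45.8),
    "BrowseComp": (74.9, 65.8, 57.8, 59.2),
    "DeepSearchQA": (77.1, 71.3, 76.1, 63.2),
    "SWE-bench Verified": (76.8, 80.0, 80.9, 76.2),
    "SWE-bench Multilingual": (73.0, 72.0, 77.5, 65.0),
    "MMMU Pro": (78.5, 79.5, 74.0, 81.0),
    "MathVision": (84.2, 83.0, 77.1, 86.1),
    "OmniDocBench 1.5": (88.8, 85.7, 87.7, 88.5),
    "VideoMMMU": (86.6, 85.9, 84.4, 87.6),
    "LongVideoBench": (79.8, 76.5, 67.2, 77.7),
}


def spreads(scores):
    """Return (benchmark, max - min) pairs, sorted from narrowest to widest gap."""
    gaps = [(name, round(max(vals) - min(vals), 1)) for name, vals in scores.items()]
    # sorted() is stable, so benchmarks with equal gaps keep their chart order.
    return sorted(gaps, key=lambda item: item[1])


for name, gap in spreads(SCORES):
    print(f"{name:<28} {gap:4.1f}")
```

Sorting by gap puts OmniDocBench 1.5 (3.1 points) first and BrowseComp (17.1 points) last. The rounding to one decimal place only removes floating-point noise from the subtraction.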

### Interpretation

The bar chart provides a comparative analysis of the four AI models across a range of tasks. The data suggests that Kimi K2.5 is a strong all-around performer, while the other models have their own strengths and weaknesses depending on the specific task. The varying performance across different tasks highlights the importance of benchmarking AI models on a diverse set of evaluations to get a comprehensive understanding of their capabilities. The footnote regarding OmniDocBench indicates that the score calculation involves a normalized Levenshtein distance, suggesting that this particular benchmark focuses on text-based accuracy.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


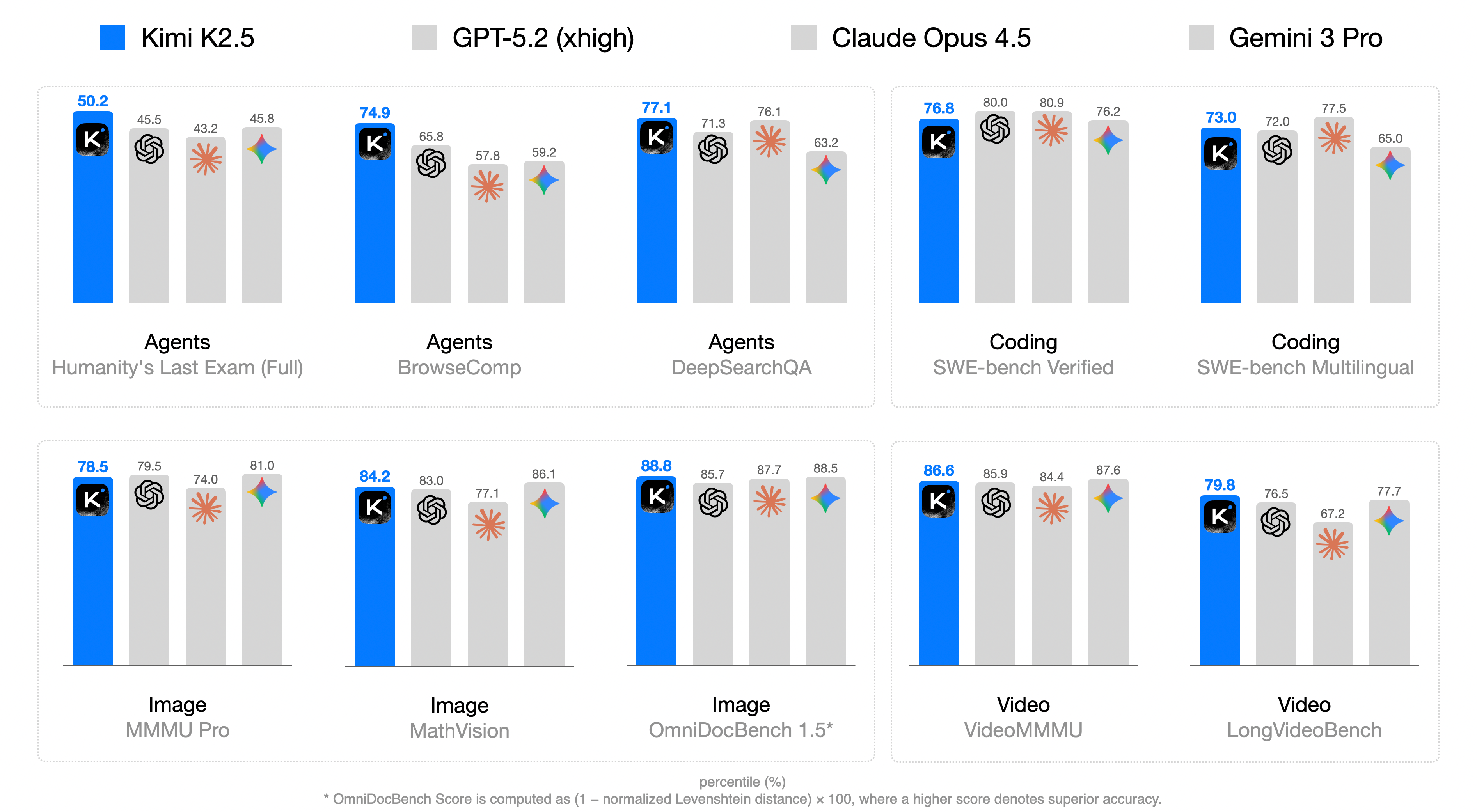

## Bar Chart: Multimodal Model Performance Comparison

### Overview

This image presents a grouped bar chart comparing the performance of four large multimodal models (Kimi K2.5, GPT-5.2 (xhigh), Claude Opus 4.5, and Gemini 3 Pro) across ten benchmark tasks. Scores are shown in percent. Each benchmark has a group of four bars, one per model. Each model's logo appears above its bar, and its score is labeled on top.

### Components/Axes

* **X-axis:** Represents the ten benchmark tasks, grouped by category:
  1. Agents: Humanity's Last Exam (Full)
  2. Agents: BrowseComp
  3. Agents: DeepSearchQA
  4. Coding: SWE-bench Verified
  5. Coding: SWE-bench Multilingual
  6. Image: MMMU Pro
  7. Image: MathVision
  8. Image: OmniDocBench 1.5*
  9. Video: VideoMMMU
  10. Video: LongVideoBench

* **Y-axis:** Score (%), where higher is better. The values labeled on the bars range from 43.2 to 88.8.

* **Models:** Four models are compared: Kimi K2.5, GPT-5.2 (xhigh), Claude Opus 4.5, and Gemini 3 Pro. Bar colors:
  * Kimi K2.5: Blue
  * GPT-5.2 (xhigh), Claude Opus 4.5, and Gemini 3 Pro: shades of gray

* **Icons:** Each bar carries its model's logo above it:
  * Kimi K2.5: a "K" icon
  * GPT-5.2 (xhigh): a spiral-shaped logo
  * Claude Opus 4.5: a star-like logo
  * Gemini 3 Pro: a star- or diamond-shaped logo

* **Footer Text:** "* OmniDocBench Score is computed as (1 - normalized Levenshtein distance) × 100, where a higher score denotes superior accuracy."
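The footnote's formula can be inverted to read the chart values as edit distances. This worked example rests on one assumption: that each plotted score applies the formula directly to a single normalized Levenshtein distance $d$. The footnote does not say how the benchmark aggregates over documents.

$$
S = (1 - d) \times 100 \quad\Longleftrightarrow\quad d = 1 - \frac{S}{100}
$$

Applied to the chart values, Kimi K2.5's 88.8 corresponds to $d = 0.112$ and GPT-5.2 (xhigh)'s 85.7 to $d = 0.143$. The 3.1-point gap between them is therefore about 0.031 in normalized edit distance.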

### Detailed Analysis

Here are the scores labeled on the bars for each task. Each row lists the four models in legend order (Kimi K2.5, GPT-5.2 (xhigh), Claude Opus 4.5, Gemini 3 Pro):

1. Agents: Humanity's Last Exam (Full): 50.2%, 45.5%, 43.2%, 45.8%

2. Agents: BrowseComp: 74.9%, 65.8%, 57.8%, 59.2%

3. Agents: DeepSearchQA: 77.1%, 71.3%, 76.1%, 63.2%

4. Coding: SWE-bench Verified: 76.8%, 80.0%, 80.9%, 76.2%

5. Coding: SWE-bench Multilingual: 73.0%, 72.0%, 77.5%, 65.0%

6. Image: MMMU Pro: 78.5%, 79.5%, 74.0%, 81.0%

7. Image: MathVision: 84.2%, 83.0%, 77.1%, 86.1%

8. Image: OmniDocBench 1.5*: 88.8%, 85.7%, 87.7%, 88.5%

9. Video: VideoMMMU: 86.6%, 85.9%, 84.4%, 87.6%

10. Video: LongVideoBench: 79.8%, 76.5%, 67.2%, 77.7%

### Key Observations

* **OmniDocBench 1.5\* shows consistently high scores**, with all four models between 85.7% and 88.8%. Kimi K2.5 scores highest (88.8%), just ahead of Gemini 3 Pro (88.5%).

* **Agents: Humanity's Last Exam (Full) shows the lowest scores** for every model. Kimi K2.5 achieves the highest score on it (50.2%).

* **Gemini 3 Pro leads three benchmarks** (MMMU Pro, MathVision, and VideoMMMU) but has the lowest score on DeepSearchQA and on both SWE-bench tasks.

* **Kimi K2.5 leads five of the ten benchmarks** and never ranks below third.

* Rankings shift by benchmark. Kimi K2.5 leads all three Agents tasks and Claude Opus 4.5 leads both Coding tasks, while the Image and Video leads are split between Gemini 3 Pro and Kimi K2.5.
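The highest-score and lowest-score claims above can be checked directly against the labeled values. The sketch below finds each model's lowest and highest benchmark. It is a minimal Python illustration: the `extremes` helper is hypothetical, and the scores are transcribed from the chart.

```python
MODELS = ("Kimi K2.5", "GPT-5.2 (xhigh)", "Claude Opus 4.5", "Gemini 3 Pro")

# Labeled bar values, in MODELS order.
SCORES = {
    "Humanity's Last Exam (Full)": (50.2, 45.5, 43.2, 45.8),
    "BrowseComp": (74.9, 65.8, 57.8, 59.2),
    "DeepSearchQA": (77.1, 71.3, 76.1, 63.2),
    "SWE-bench Verified": (76.8, 80.0, 80.9, 76.2),
    "SWE-bench Multilingual": (73.0, 72.0, 77.5, 65.0),
    "MMMU Pro": (78.5, 79.5, 74.0, 81.0),
    "MathVision": (84.2, 83.0, 77.1, 86.1),
    "OmniDocBench 1.5": (88.8, 85.7, 87.7, 88.5),
    "VideoMMMU": (86.6, 85.9, 84.4, 87.6),
    "LongVideoBench": (79.8, 76.5, 67.2, 77.7),
}


def extremes(scores, models):
    """Map each model to ((lowest benchmark, score), (highest benchmark, score))."""
    result = {}
    for i, model in enumerate(models):
        # This model's column: benchmark name -> labeled score.
        column = {name: vals[i] for name, vals in scores.items()}
        low = min(column, key=column.get)
        high = max(column, key=column.get)
        result[model] = ((low, column[low]), (high, column[high]))
    return result


for model, (low, high) in extremes(SCORES, MODELS).items():
    print(f"{model}: lowest {low[0]} ({low[1]}), highest {high[0]} ({high[1]})")
```

On these values, every model's lowest score is on Humanity's Last Exam (Full). OmniDocBench 1.5 is the highest benchmark for three of the four models. GPT-5.2 (xhigh) instead peaks on VideoMMMU (85.9), just above its OmniDocBench 1.5 score (85.7).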

### Interpretation

This chart provides a comparative performance analysis of four leading multimodal models across a diverse set of benchmarks. The benchmarks cover agent capabilities, coding, image understanding, and video processing. The bar labels are each benchmark's own score on a 0 to 100 scale rather than a standardized percentile; the OmniDocBench footnote, for instance, defines an edit-distance-based accuracy. Values are therefore best compared across models within a benchmark rather than across benchmarks.

The consistent high performance on OmniDocBench 1.5* suggests that all models are proficient in document understanding tasks, while the lower scores on Agents Humanity's Last Exam (Full) indicate a challenge in complex reasoning or human-level task completion.

Because rankings differ across tasks, a single aggregate score would hide real trade-offs. Claude Opus 4.5, for example, combines the best SWE-bench results with the lowest LongVideoBench score (67.2).

The chart shows no single dominant model. Kimi K2.5 leads five of the ten benchmarks, Gemini 3 Pro three, and Claude Opus 4.5 two, so the best choice depends on the specific task. The footer note regarding OmniDocBench clarifies that the score is based on Levenshtein distance, indicating a focus on accuracy in text reproduction or matching. The chart is a useful reference for researchers and practitioners comparing the capabilities and limitations of these multimodal models.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Grouped Bar Chart: AI Model Performance Across Diverse Benchmarks

### Overview

This image is a grouped bar chart comparing the performance of four AI models across 10 benchmarks grouped into four categories: Agents, Coding, Image, and Video. The models are **Kimi K2.5** (blue bars), **GPT-5.2 (xhigh)** (light gray bars), **Claude Opus 4.5** (light gray bars with orange star icon), and **Gemini 3 Pro** (light gray bars with blue star icon). Scores are given in percent on a 0 to 100 scale, with higher scores indicating better performance. A footnote clarifies the score calculation for one benchmark.

### Components/Axes

- **Legend (Top)**: Four models with distinct visual identifiers:

- Kimi K2.5: Blue bar + black square icon with white "K"

- GPT-5.2 (xhigh): Light gray bar + black spiral icon

- Claude Opus 4.5: Light gray bar + orange star icon

- Gemini 3 Pro: Light gray bar + blue star icon

- **Benchmark Categories (X-axis Groupings)**:

- Agents: Humanity's Last Exam (Full), BrowseComp, DeepSearchQA

- Coding: SWE-bench Verified, SWE-bench Multilingual

- Image: MMMU Pro, MathVision, OmniDocBench 1.5*

- Video: VideoMMMU, LongVideoBench

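- **Score Metric**: Benchmark scores in percent (0 to 100), given as numerical labels above the bars, with higher values being better. The footnote shows that at least the OmniDocBench value is an accuracy score rather than a percentile rank.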

- **Footnote (Bottom)**: "* OmniDocBench Score is computed as (1 – normalized Levenshtein distance) × 100, where a higher score denotes superior accuracy."
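The footnote's metric can be sketched in code. This is a minimal Python illustration, not OmniDocBench's evaluation code. It assumes the distance is normalized by the length of the longer string, a common convention that the footnote does not spell out. The function names `levenshtein` and `omnidoc_style_score` are hypothetical. The benchmark itself may tokenize, normalize, and aggregate differently, for example per page element before averaging.

```python
def levenshtein(a: str, b: str) -> int:
    """Edit distance via dynamic programming (insert, delete, substitute each cost 1)."""
    prev = list(range(len(b) + 1))  # distances from "" to each prefix of b
    for i, ca in enumerate(a, start=1):
        curr = [i]  # distance from a[:i] to ""
        for j, cb in enumerate(b, start=1):
            curr.append(min(
                prev[j] + 1,               # delete ca
                curr[j - 1] + 1,           # insert cb
                prev[j - 1] + (ca != cb),  # substitute, or match at no cost
            ))
        prev = curr
    return prev[-1]


def omnidoc_style_score(prediction: str, reference: str) -> float:
    """(1 - normalized Levenshtein distance) * 100.

    Assumption: normalize by the longer of the two strings.
    """
    longest = max(len(prediction), len(reference))
    if longest == 0:
        return 100.0  # two empty strings match perfectly
    return (1 - levenshtein(prediction, reference) / longest) * 100


print(round(omnidoc_style_score("kitten", "sitting"), 1))  # 57.1
```

In the example, "kitten" and "sitting" differ by three edits over a maximum length of seven characters. That gives (1 − 3/7) × 100 ≈ 57.1, so a higher score means the output is closer to the reference text.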

### Detailed Analysis

Below are the percentile scores for each model across all benchmarks (scores labeled above bars):

#### Agents Category

| Benchmark | Kimi K2.5 | GPT-5.2 (xhigh) | Claude Opus 4.5 | Gemini 3 Pro |
|-----------------------------|-----------|-----------------|-----------------|--------------|
| Humanity's Last Exam (Full) | 50.2 | 45.5 | 43.2 | 45.8 |
| BrowseComp | 74.9 | 65.8 | 57.8 | 59.2 |
| DeepSearchQA | 77.1 | 71.3 | 76.1 | 63.2 |

#### Coding Category

| Benchmark | Kimi K2.5 | GPT-5.2 (xhigh) | Claude Opus 4.5 | Gemini 3 Pro |
|-----------------------------|-----------|-----------------|-----------------|--------------|
| SWE-bench Verified | 76.8 | 80.0 | 80.9 | 76.2 |
| SWE-bench Multilingual | 73.0 | 72.0 | 77.5 | 65.0 |

#### Image Category

| Benchmark | Kimi K2.5 | GPT-5.2 (xhigh) | Claude Opus 4.5 | Gemini 3 Pro |
|-----------------------------|-----------|-----------------|-----------------|--------------|
| MMMU Pro | 78.5 | 79.5 | 74.0 | 81.0 |
| MathVision | 84.2 | 83.0 | 77.1 | 86.1 |
| OmniDocBench 1.5* | 88.8 | 85.7 | 87.7 | 88.5 |

#### Video Category

| Benchmark | Kimi K2.5 | GPT-5.2 (xhigh) | Claude Opus 4.5 | Gemini 3 Pro |
|-----------------------------|-----------|-----------------|-----------------|--------------|
| VideoMMMU | 86.6 | 85.9 | 84.4 | 87.6 |
| LongVideoBench | 79.8 | 76.5 | 67.2 | 77.7 |

### Key Observations

1. **Agents Benchmarks**: Kimi K2.5 leads in all three agent-focused tasks (50.2–77.1), with a significant margin in BrowseComp (74.9 vs. next-highest 65.8).

2. **Coding Benchmarks**: Claude Opus 4.5 outperforms others in both SWE-bench tasks (80.9 in Verified, 77.5 in Multilingual), while GPT-5.2 is competitive in SWE-bench Verified (80.0).

3. **Image Benchmarks**: Gemini 3 Pro leads in MMMU Pro (81.0) and MathVision (86.1), while Kimi K2.5 narrowly leads in OmniDocBench 1.5 (88.8 vs. Gemini’s 88.5).

4. **Video Benchmarks**: Gemini 3 Pro leads in VideoMMMU (87.6), and Kimi K2.5 leads in LongVideoBench (79.8).

5. **Outlier**: Claude Opus 4.5 has a notably low score in LongVideoBench (67.2), far below the other three models (76.5–79.8).

6. **Consistency**: Kimi K2.5 ranks first or second in 8 of 10 benchmarks (third on SWE-bench Verified and MMMU Pro), showing strong cross-category performance.
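The leader, margin, and consistency figures in these observations can be reproduced mechanically. This Python sketch ranks the four models on each benchmark, reports the leader's margin over the runner-up, and counts how often each model finishes in the top k. The helper names `ranking`, `leaders`, and `top_k_counts` are hypothetical, and the scores are transcribed from the tables above.

```python
from collections import Counter

MODELS = ("Kimi K2.5", "GPT-5.2 (xhigh)", "Claude Opus 4.5", "Gemini 3 Pro")

# Labeled bar values, in MODELS order.
SCORES = {
    "Humanity's Last Exam (Full)": (50.2, 45.5, 43.2, 45.8),
    "BrowseComp": (74.9, 65.8, 57.8, 59.2),
    "DeepSearchQA": (77.1, 71.3, 76.1, 63.2),
    "SWE-bench Verified": (76.8, 80.0, 80.9, 76.2),
    "SWE-bench Multilingual": (73.0, 72.0, 77.5, 65.0),
    "MMMU Pro": (78.5, 79.5, 74.0, 81.0),
    "MathVision": (84.2, 83.0, 77.1, 86.1),
    "OmniDocBench 1.5": (88.8, 85.7, 87.7, 88.5),
    "VideoMMMU": (86.6, 85.9, 84.4, 87.6),
    "LongVideoBench": (79.8, 76.5, 67.2, 77.7),
}


def ranking(vals):
    """Model indices ordered from highest to lowest score on one benchmark."""
    return sorted(range(len(vals)), key=lambda i: vals[i], reverse=True)


def leaders(scores, models):
    """Map each benchmark to (leading model, margin over the runner-up)."""
    out = {}
    for name, vals in scores.items():
        first, second = ranking(vals)[:2]
        out[name] = (models[first], round(vals[first] - vals[second], 1))
    return out


def top_k_counts(scores, models, k):
    """Count how many benchmarks each model finishes within the top k."""
    counts = Counter()
    for vals in scores.values():
        counts.update(models[i] for i in ranking(vals)[:k])
    return counts


for name, (model, margin) in leaders(SCORES, MODELS).items():
    print(f"{name:<28} {model:<16} +{margin}")
print("wins:", dict(top_k_counts(SCORES, MODELS, 1)))
print("top-2:", dict(top_k_counts(SCORES, MODELS, 2)))
```

With `k=1`, this yields five wins for Kimi K2.5, three for Gemini 3 Pro, two for Claude Opus 4.5, and none for GPT-5.2 (xhigh). With `k=2`, Kimi K2.5 appears in the top two eight times. The margins show how thin some leads are, such as 0.3 points on OmniDocBench 1.5.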

### Interpretation

This chart illustrates the competitive landscape of leading AI models across specialized tasks. Each value is the model's score on that benchmark (higher is better), so comparisons are most meaningful between models on the same benchmark. Key takeaways:

- **Kimi K2.5** excels in agent-oriented tasks (e.g., browsing, deep search) and narrowly leads document understanding (OmniDocBench 1.5). This may reflect strong reasoning and information-retrieval abilities.

- **Claude Opus 4.5** leads both coding benchmarks, though by only 0.9 points over GPT-5.2 (xhigh) on SWE-bench Verified. This suggests strong software engineering and code generation capabilities.

- **Gemini 3 Pro** performs best in image understanding tasks (MMMU Pro, MathVision), highlighting strengths in visual reasoning.

- The OmniDocBench 1.5 footnote clarifies that its score measures document accuracy via Levenshtein distance. Kimi K2.5's lead there is narrow (0.3 points over Gemini 3 Pro), so the two are close for document processing use cases.

Overall, no single model dominates all tasks. Kimi K2.5, Claude Opus 4.5, and Gemini 3 Pro each lead in different areas. GPT-5.2 (xhigh) leads no benchmark but ranks second or third on nine of the ten. These differences matter for users selecting models for specific applications (e.g., coding vs. image analysis).


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Bar Chart: AI Model Performance Comparison Across Tasks

### Overview

The image displays a grouped bar chart comparing the performance of four AI models (Kimi K2.5, GPT-5.2 (xhigh), Claude Opus 4.5, Gemini 3 Pro) across four task categories: Agents, Coding, Image, and Video. Each category contains two or three benchmarks. Each bar represents a percentage score, with higher values indicating better performance. Kimi K2.5 has the highest score on five of the ten benchmarks, more than any other model.

### Components/Axes

- **X-Axis**: Task categories and subcategories:
  - Agents
    - Humanity's Last Exam (Full)
    - BrowseComp
    - DeepSearchQA
  - Coding
    - SWE-bench Verified
    - SWE-bench Multilingual
  - Image
    - MMMU Pro
    - MathVision
    - OmniDocBench 1.5*
  - Video
    - VideoMMMU
    - LongVideoBench

- **Y-Axis**: Percentage scores (0–100%), labeled "percentile (%)"

- **Legend**: Located at the top, mapping colors to models:
  - Blue: Kimi K2.5
  - Gray: GPT-5.2 (xhigh)
  - Dark Gray: Claude Opus 4.5
  - Light Gray: Gemini 3 Pro

- **Annotations**: Numerical values atop each bar (e.g., "50.2" for Kimi K2.5 in Agents/Humanity's Last Exam)

### Detailed Analysis

#### Agents

- **Humanity's Last Exam (Full)**:
  - Kimi K2.5: 50.2 (blue)
  - GPT-5.2 (xhigh): 45.5 (gray)
  - Claude Opus 4.5: 43.2 (dark gray)
  - Gemini 3 Pro: 45.8 (light gray)

- **BrowseComp**:
  - Kimi K2.5: 74.9 (blue)
  - GPT-5.2 (xhigh): 65.8 (gray)
  - Claude Opus 4.5: 57.8 (dark gray)
  - Gemini 3 Pro: 59.2 (light gray)

- **DeepSearchQA**:
  - Kimi K2.5: 77.1 (blue)
  - GPT-5.2 (xhigh): 71.3 (gray)
  - Claude Opus 4.5: 76.1 (dark gray)
  - Gemini 3 Pro: 63.2 (light gray)

#### Coding

- **SWE-bench Verified**:
  - Kimi K2.5: 76.8 (blue)
  - GPT-5.2 (xhigh): 80.0 (gray)
  - Claude Opus 4.5: 80.9 (dark gray)
  - Gemini 3 Pro: 76.2 (light gray)

- **SWE-bench Multilingual**:
  - Kimi K2.5: 73.0 (blue)
  - GPT-5.2 (xhigh): 72.0 (gray)
  - Claude Opus 4.5: 77.5 (dark gray)
  - Gemini 3 Pro: 65.0 (light gray)

#### Image

- **MMMU Pro**:
  - Kimi K2.5: 78.5 (blue)
  - GPT-5.2 (xhigh): 79.5 (gray)
  - Claude Opus 4.5: 74.0 (dark gray)
  - Gemini 3 Pro: 81.0 (light gray)

- **MathVision**:
  - Kimi K2.5: 84.2 (blue)
  - GPT-5.2 (xhigh): 83.0 (gray)
  - Claude Opus 4.5: 77.1 (dark gray)
  - Gemini 3 Pro: 86.1 (light gray)

- **OmniDocBench 1.5\***:
  - Kimi K2.5: 88.8 (blue)
  - GPT-5.2 (xhigh): 85.7 (gray)
  - Claude Opus 4.5: 87.7 (dark gray)
  - Gemini 3 Pro: 88.5 (light gray)

#### Video

- **VideoMMMU**:
  - Kimi K2.5: 86.6 (blue)
  - GPT-5.2 (xhigh): 85.9 (gray)
  - Claude Opus 4.5: 84.4 (dark gray)
  - Gemini 3 Pro: 87.6 (light gray)

- **LongVideoBench**:
  - Kimi K2.5: 79.8 (blue)
  - GPT-5.2 (xhigh): 76.5 (gray)
  - Claude Opus 4.5: 67.2 (dark gray)
  - Gemini 3 Pro: 77.7 (light gray)

### Key Observations

1. **Kimi K2.5 Leads Most Often**: It has the highest score on five of ten benchmarks (e.g., 88.8 on OmniDocBench 1.5\*). It is outscored on both SWE-bench tasks, MMMU Pro, MathVision, and VideoMMMU.

2. **Claude Opus 4.5 Strengths**: It outperforms the others on SWE-bench Verified (80.9) and SWE-bench Multilingual (77.5). Its LongVideoBench score (67.2) is the lowest, an outlier well below the other three models (76.5 to 79.8).

3. **Gemini 3 Pro Variability**: Its scores range from 45.8 (Agents/Humanity's Last Exam) to 88.5 (Image/OmniDocBench 1.5\*). It leads three benchmarks but is last on DeepSearchQA and on both SWE-bench tasks.

4. **Task-Specific Trends**:

   - Coding competition is tight on SWE-bench Verified (76.2 to 80.9) but wider on SWE-bench Multilingual (65.0 to 77.5), where Claude Opus 4.5 and Kimi K2.5 take the top two spots.

   - Image tasks highlight Gemini 3 Pro's strength: it leads MMMU Pro (81.0) and MathVision (86.1).

   - Video tasks are split. Gemini 3 Pro leads VideoMMMU (87.6 vs. Kimi K2.5's 86.6), while Kimi K2.5 leads LongVideoBench (79.8 vs. Gemini 3 Pro's 77.7).
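The category-level trends above can be summarized by averaging each model's scores within a category. This Python sketch is a hypothetical summary, not a metric shown on the chart. It takes an unweighted mean of the labeled scores, uses the x-axis for the category grouping, and invents the helper name `category_means` for illustration.

```python
MODELS = ("Kimi K2.5", "GPT-5.2 (xhigh)", "Claude Opus 4.5", "Gemini 3 Pro")

# Labeled bar values, in MODELS order.
SCORES = {
    "Humanity's Last Exam (Full)": (50.2, 45.5, 43.2, 45.8),
    "BrowseComp": (74.9, 65.8, 57.8, 59.2),
    "DeepSearchQA": (77.1, 71.3, 76.1, 63.2),
    "SWE-bench Verified": (76.8, 80.0, 80.9, 76.2),
    "SWE-bench Multilingual": (73.0, 72.0, 77.5, 65.0),
    "MMMU Pro": (78.5, 79.5, 74.0, 81.0),
    "MathVision": (84.2, 83.0, 77.1, 86.1),
    "OmniDocBench 1.5": (88.8, 85.7, 87.7, 88.5),
    "VideoMMMU": (86.6, 85.9, 84.4, 87.6),
    "LongVideoBench": (79.8, 76.5, 67.2, 77.7),
}

# Category grouping as shown on the x-axis.
CATEGORIES = {
    "Agents": ("Humanity's Last Exam (Full)", "BrowseComp", "DeepSearchQA"),
    "Coding": ("SWE-bench Verified", "SWE-bench Multilingual"),
    "Image": ("MMMU Pro", "MathVision", "OmniDocBench 1.5"),
    "Video": ("VideoMMMU", "LongVideoBench"),
}


def category_means(scores, models, categories):
    """Unweighted mean of each model's labeled scores within each category."""
    table = {}
    for category, names in categories.items():
        table[category] = {
            model: round(sum(scores[n][i] for n in names) / len(names), 1)
            for i, model in enumerate(models)
        }
    return table


for category, row in category_means(SCORES, MODELS, CATEGORIES).items():
    best = max(row, key=row.get)
    print(f"{category:<7} best mean: {best} ({row[best]})")
```

On these values, Kimi K2.5 has the highest Agents mean (67.4), Claude Opus 4.5 the highest Coding mean, and Gemini 3 Pro the highest Image mean. Kimi K2.5 also edges out Gemini 3 Pro on the Video mean (83.2), even though Gemini 3 Pro leads VideoMMMU itself. An unweighted mean treats every benchmark as equally important, which is an assumption in its own right.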

### Interpretation

The data suggests **Kimi K2.5** is the most balanced high performer across these tasks. It leads five benchmarks, most clearly in the Agents category, and never ranks below third, though its own scores span a wide range (50.2 to 88.8). **Claude Opus 4.5** shows clear strengths in coding but ranks last on most Agents, Image, and Video benchmarks. **Gemini 3 Pro** is strongest on image and video understanding and weakest on coding and DeepSearchQA, which points to specialization rather than uniform capability. The **OmniDocBench 1.5\*** score of 88.8 for Kimi K2.5 underscores its document-processing ability, though Gemini 3 Pro is only 0.3 points behind. The asterisk notes the calculation method, `(1 - normalized Levenshtein distance) × 100`, so higher scores mean output closer to the reference text. This chart likely informs model selection for applications requiring cross-domain AI capabilities.
