## Diagram: Neural Logic Machine (NLM) Architecture

### Overview

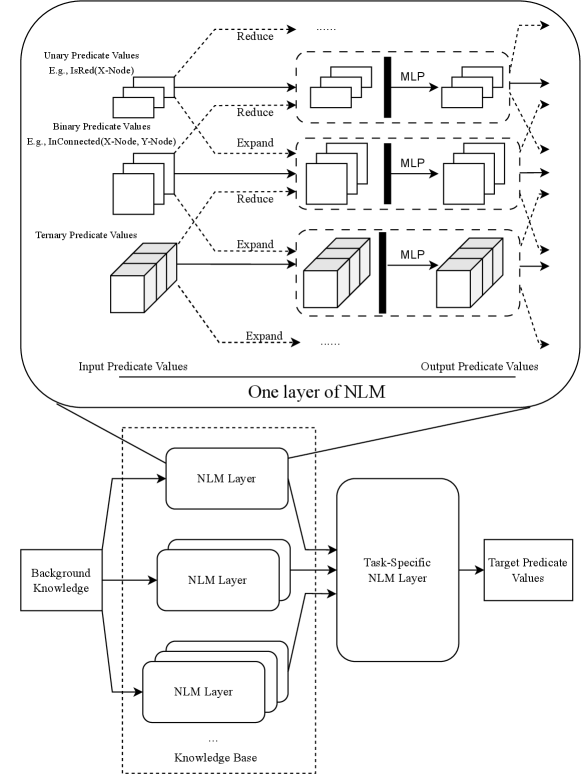

The image illustrates the architecture of a Neural Logic Machine (NLM). It shows how predicate values of different arities (Unary, Binary, Ternary) are processed through NLM layers to produce target predicate values. The diagram has two main sections: a detailed view of "One layer of NLM", and a broader view showing how multiple NLM layers are stacked inside a "Knowledge Base" and followed by a "Task-Specific NLM Layer".

### Components/Axes

* **Top Section (One layer of NLM):**

    * **Input Predicate Values:** Located on the left side.

        * Unary Predicate Values (e.g., IsRed(X-Node))

        * Binary Predicate Values (e.g., IsConnected(X-Node, Y-Node))

        * Ternary Predicate Values

    * **Operations:** "Reduce" and "Expand" operations are shown as arrows connecting the input predicate values to the subsequent layers.

    * **MLP Blocks:** Multi-Layer Perceptrons that process the predicate values.

    * **Output Predicate Values:** Located on the right side.

* **Bottom Section (Knowledge Base):**

    * **Background Knowledge:** Input to the NLM layers.

    * **NLM Layer:** Multiple NLM layers are stacked within the "Knowledge Base".

    * **Knowledge Base:** A dashed rectangle containing multiple NLM layers.

    * **Task-Specific NLM Layer:** A single NLM layer that processes the output from the Knowledge Base.

    * **Target Predicate Values:** The final output of the architecture.
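
To make the three kinds of input predicate values concrete, the sketch below stores them as NumPy arrays with one object axis per argument and a trailing channel axis (one channel per predicate). This tensor layout, the object count `n`, and the example facts are assumptions chosen for illustration; the diagram itself does not specify a storage format.

```python
import numpy as np

n = 4  # number of objects (nodes); arbitrary value for illustration

# Unary predicate values: one entry per object, shape [n, C1].
# Channel 0 plays the role of IsRed(x).
unary = np.zeros((n, 1))
unary[[0, 2], 0] = 1.0  # assume nodes 0 and 2 are red

# Binary predicate values: one entry per ordered pair, shape [n, n, C2].
# Channel 0 plays the role of a connectivity predicate between x and y.
binary = np.zeros((n, n, 1))
for x, y in [(0, 1), (1, 2), (2, 3)]:  # assumed undirected edges
    binary[x, y, 0] = 1.0
    binary[y, x, 0] = 1.0

# Ternary predicate values: one entry per ordered triple, shape [n, n, n, C3].
# No ternary facts are assumed here, so the tensor stays all zeros.
ternary = np.zeros((n, n, n, 1))

print(unary.shape, binary.shape, ternary.shape)
```

Each additional argument adds one object axis, so the storage cost grows as a power of `n` with the arity.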

### Detailed Analysis

* **Unary Predicate Values:** These values are processed through a "Reduce" operation, then passed through an MLP.

* **Binary Predicate Values:** These values are processed through "Reduce" and "Expand" operations, then passed through an MLP.

* **Ternary Predicate Values:** These values are processed through an "Expand" operation, then passed through an MLP.

* **NLM Layers:** The bottom section shows multiple NLM layers within a "Knowledge Base". These layers receive input from "Background Knowledge".

* **Task-Specific NLM Layer:** The output from the "Knowledge Base" is fed into a "Task-Specific NLM Layer", which produces the "Target Predicate Values".
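
The data flow of a single layer can be sketched in NumPy. The wiring below is an assumption based on the commonly published NLM formulation, in which "Expand" raises the arity of a predicate group by one (broadcasting over a new variable) and "Reduce" lowers it by one (max and min over the last variable, standing in for "exists" and "for all"); the diagram may differ in detail. The function names, the hidden size, and the random untrained weights are all hypothetical, and the sketch omits argument permutation and the exclusion of repeated objects.

```python
import numpy as np

def expand_arity(p):
    """Raise arity by one: add an object axis before the channels and broadcast."""
    n = p.shape[0]
    return np.repeat(np.expand_dims(p, axis=-2), n, axis=-2)

def reduce_arity(p):
    """Lower arity by one: max ("exists") and min ("for all") over the last
    object axis, concatenated along the channel axis."""
    return np.concatenate([p.max(axis=-2), p.min(axis=-2)], axis=-1)

def mlp(x, out_channels, rng, hidden=8):
    """Tiny MLP applied to the channel axis, with random (untrained) weights."""
    w1 = rng.normal(size=(x.shape[-1], hidden))
    w2 = rng.normal(size=(hidden, out_channels))
    h = np.maximum(x @ w1, 0.0)              # ReLU hidden layer
    return 1.0 / (1.0 + np.exp(-(h @ w2)))   # sigmoid keeps outputs in (0, 1)

def nlm_layer(unary, binary, ternary, out_channels, rng):
    """One simplified layer: gather neighbouring arities, then one MLP per group."""
    u_in = np.concatenate([unary, reduce_arity(binary)], axis=-1)
    b_in = np.concatenate(
        [expand_arity(unary), binary, reduce_arity(ternary)], axis=-1
    )
    t_in = np.concatenate([expand_arity(binary), ternary], axis=-1)
    return (
        mlp(u_in, out_channels, rng),
        mlp(b_in, out_channels, rng),
        mlp(t_in, out_channels, rng),
    )

rng = np.random.default_rng(0)
n = 4
unary = rng.random((n, 1))
binary = rng.random((n, n, 1))
ternary = rng.random((n, n, n, 1))

u_out, b_out, t_out = nlm_layer(unary, binary, ternary, out_channels=2, rng=rng)
print(u_out.shape, b_out.shape, t_out.shape)
```

Because every group keeps its arity from input to output, layers of this form can be stacked directly, which is what the bottom section of the diagram depicts.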

### Key Observations

* The diagram highlights the flow of information from input predicate values through various NLM layers to produce target predicate values.

* The "Reduce" and "Expand" operations suggest different ways of aggregating or distributing predicate information.

* The use of MLPs indicates that the NLM layers involve non-linear transformations of the predicate values.

* The separation of NLM layers into a "Knowledge Base" and a "Task-Specific NLM Layer" suggests a modular architecture where knowledge representation is separated from task-specific processing.
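
The modular reading in the last observation can be illustrated with a small sketch in which one stack of shared layers feeds two different task-specific layers. This is only an illustration of the composition pattern: a toy dense layer with fixed random weights stands in for a full NLM layer, and `make_layer`, `run`, the channel sizes, and the two tasks are hypothetical names and values, not taken from the diagram.

```python
import numpy as np

def make_layer(in_channels, out_channels, seed):
    """Return a layer with fixed random weights (toy stand-in for an NLM layer)."""
    w = np.random.default_rng(seed).normal(size=(in_channels, out_channels))

    def layer(x):
        return 1.0 / (1.0 + np.exp(-(x @ w)))  # sigmoid output in (0, 1)

    return layer

def run(background, knowledge_base, task_layer):
    """Pass background knowledge through the shared stack, then the task layer."""
    h = background
    for layer in knowledge_base:
        h = layer(h)
    return task_layer(h)

# Shared "Knowledge Base": two stacked layers reused by every task.
knowledge_base = [make_layer(3, 8, seed=0), make_layer(8, 8, seed=1)]

# Two interchangeable task-specific layers with different output sizes.
task_a = make_layer(8, 1, seed=2)
task_b = make_layer(8, 2, seed=3)

# Background knowledge: 5 objects with 3 unary predicate values each.
background = np.random.default_rng(42).random((5, 3))

target_a = run(background, knowledge_base, task_a)
target_b = run(background, knowledge_base, task_b)
print(target_a.shape, target_b.shape)  # (5, 1) (5, 2)
```

Only the final layer changes between the two calls; the stacked layers and their weights are the same objects in both.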

### Interpretation

The diagram presents the NLM as a layered architecture that maps input predicate values to target predicate values. Within each layer, the "Reduce" and "Expand" operations move information between the unary, binary, and ternary groups, and the MLPs then apply non-linear transformations to the combined values, which may let the model capture relationships that span predicates of different arities. At the system level, the split between the "Knowledge Base" and the "Task-Specific NLM Layer" suggests a modular, reusable design: the stacked layers in the knowledge base could be shared across tasks, with only the task-specific layer swapped out.