## Grouped Bar Chart: Model Performance Comparison on Solved Tasks

### Overview

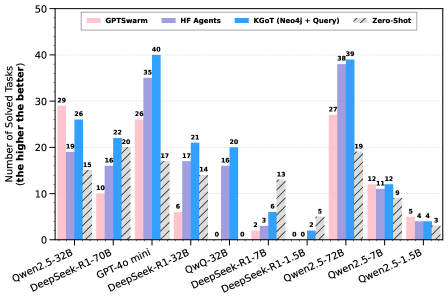

This image displays a grouped bar chart comparing the performance of four different methods (GPTSwarm, HF Agents, KGoT (Neo4j + Query), and Zero-Shot) across ten different language models or model sizes. The performance metric is the "Number of Solved Tasks," where a higher value indicates better performance.

### Components/Axes

* **Chart Type:** Grouped bar chart.
* **Y-Axis:**
  * **Label:** "Number of Solved Tasks (the higher the better)"
  * **Scale:** Linear, from 0 to 50, with major tick marks every 10 (0, 10, 20, 30, 40, 50).
* **X-Axis:**
  * **Label:** None; the axis carries only categorical labels for the models/model sizes.
  * **Categories (left to right):** Qwen2.5-32B, DeepSeek-R1-70B, GPT4o mini, DeepSeek-R1-32B, QwQ-32B, DeepSeek-R1-7B, DeepSeek-R1-1.5B, Qwen2.5-7B, Qwen2.5-27B, Qwen2.5-1.5B.
* **Legend:**
  * **Position:** Top center of the chart area.
  * **Items (with associated colors/patterns):**
    1. **GPTSwarm:** Solid pink bar.
    2. **HF Agents:** Solid purple bar.
    3. **KGoT (Neo4j + Query):** Solid blue bar.
    4. **Zero-Shot:** Bar with diagonal black hatching on a white background.

### Detailed Analysis

The following table reconstructs the data presented in the chart. Values are read directly from the data labels positioned above each bar.

| Model / Model Size | GPTSwarm (Pink) | HF Agents (Purple) | KGoT, Neo4j + Query (Blue) | Zero-Shot (Hatched) |
| :--- | ---: | ---: | ---: | ---: |
| **Qwen2.5-32B** | 29 | 19 | 26 | 15 |
| **DeepSeek-R1-70B** | 10 | 16 | 22 | 20 |
| **GPT4o mini** | 26 | 6 | 40 | 17 |
| **DeepSeek-R1-32B** | 0 | 17 | 35 | 14 |
| **QwQ-32B** | 0 | 6 | 21 | 0 |
| **DeepSeek-R1-7B** | 0 | 2 | 20 | 0 |
| **DeepSeek-R1-1.5B** | 0 | 0 | 8 | 13 |
| **Qwen2.5-7B** | 0 | 2 | 5 | 0 |
| **Qwen2.5-27B** | 27 | 12 | 38 | 19 |
| **Qwen2.5-1.5B** | 5 | 4 | 4 | 7 |

**Trend Verification per Method:**

* **KGoT (Blue):** This series shows the strongest overall performance. The blue bar is the tallest in 7 of the 10 model categories; the exceptions are Qwen2.5-32B, where GPTSwarm leads (29 vs. 26), and the two 1.5B models, where Zero-Shot leads. KGoT peaks at 40 solved tasks for GPT4o mini and bottoms out at 4 for Qwen2.5-1.5B.

* **GPTSwarm (Pink):** Performance is highly variable. It scores well on Qwen2.5-32B (29), Qwen2.5-27B (27), and GPT4o mini (26), but reaches only 10 on DeepSeek-R1-70B and drops to 0 on five models: DeepSeek-R1-32B, QwQ-32B, DeepSeek-R1-7B, DeepSeek-R1-1.5B, and Qwen2.5-7B.

* **HF Agents (Purple):** Shows low-to-moderate performance, ranging from 0 to 19 solved tasks. It never achieves the highest score in any category, but it drops to zero only once (DeepSeek-R1-1.5B).

* **Zero-Shot (Hatched):** Performance is inconsistent. It achieves moderate results on some models, peaking at 20 for DeepSeek-R1-70B and 19 for Qwen2.5-27B, but scores 0 on three models (QwQ-32B, DeepSeek-R1-7B, Qwen2.5-7B).
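The per-method win and zero counts in the list above can be checked mechanically. The following Python sketch transcribes the table into a dictionary and, for each method, counts how many models it scores highest on (ties would credit every tied method) and how many it scores 0 on. The names `METHODS`, `SCORES`, and `tally` are illustrative assumptions, not part of the chart or any published code.

```python
# Solved-task counts transcribed from the table, in column order (illustrative names).
METHODS = ("GPTSwarm", "HF Agents", "KGoT", "Zero-Shot")
SCORES = {
    "Qwen2.5-32B": (29, 19, 26, 15),
    "DeepSeek-R1-70B": (10, 16, 22, 20),
    "GPT4o mini": (26, 6, 40, 17),
    "DeepSeek-R1-32B": (0, 17, 35, 14),
    "QwQ-32B": (0, 6, 21, 0),
    "DeepSeek-R1-7B": (0, 2, 20, 0),
    "DeepSeek-R1-1.5B": (0, 0, 8, 13),
    "Qwen2.5-7B": (0, 2, 5, 0),
    "Qwen2.5-27B": (27, 12, 38, 19),
    "Qwen2.5-1.5B": (5, 4, 4, 7),
}


def tally(scores):
    """Per method, count models where it has the top score (ties credit every
    tied method) and models where it solves 0 tasks."""
    wins = dict.fromkeys(METHODS, 0)
    zeros = dict.fromkeys(METHODS, 0)
    for row in scores.values():
        best = max(row)
        for method, value in zip(METHODS, row):
            if value == best:
                wins[method] += 1
            if value == 0:
                zeros[method] += 1
    return wins, zeros


wins, zeros = tally(SCORES)
print(wins)   # {'GPTSwarm': 1, 'HF Agents': 0, 'KGoT': 7, 'Zero-Shot': 2}
print(zeros)  # {'GPTSwarm': 5, 'HF Agents': 1, 'KGoT': 0, 'Zero-Shot': 3}
```

Because the comparison uses `max(row)` per model, a tie between two methods would credit both; no ties occur in this data.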

### Key Observations

1. **Dominant Method:** KGoT (Neo4j + Query) is the clear top performer, with the highest score on 7 of the 10 models.

2. **Model Sensitivity:** GPTSwarm is highly sensitive to the underlying model, but not in a way that tracks size alone. It solves 0 tasks on DeepSeek-R1-32B and QwQ-32B, the same parameter count as Qwen2.5-32B (29 tasks), and only 10 on DeepSeek-R1-70B, the largest parameter count shown.

3. **Zero-Shot Failure Cases:** The Zero-Shot method completely fails (0 tasks) on three specific models: QwQ-32B, DeepSeek-R1-7B, and Qwen2.5-7B.

4. **Lowest Overall Performance:** Summed over all four methods, the lowest totals belong to Qwen2.5-7B (7 tasks), Qwen2.5-1.5B (20), and DeepSeek-R1-1.5B (21). No method exceeds 13 solved tasks on any of these three models.

5. **Notable Outliers:** On both 1.5B models, the Zero-Shot method outperforms every other method (13 vs. 8 for KGoT on DeepSeek-R1-1.5B; 7 vs. 5 for GPTSwarm on Qwen2.5-1.5B), an exception to the general trend.
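The aggregate and outlier claims above reduce to simple arithmetic over the table. This Python sketch, again using illustrative names (`SCORES`, `lowest_totals`, `baseline_leads`) that are assumptions rather than anything from the source, sums each model's solved tasks across methods and lists the models where the Zero-Shot baseline strictly beats every other method.

```python
# Solved-task counts transcribed from the table, in column order (illustrative names).
METHODS = ("GPTSwarm", "HF Agents", "KGoT", "Zero-Shot")
SCORES = {
    "Qwen2.5-32B": (29, 19, 26, 15),
    "DeepSeek-R1-70B": (10, 16, 22, 20),
    "GPT4o mini": (26, 6, 40, 17),
    "DeepSeek-R1-32B": (0, 17, 35, 14),
    "QwQ-32B": (0, 6, 21, 0),
    "DeepSeek-R1-7B": (0, 2, 20, 0),
    "DeepSeek-R1-1.5B": (0, 0, 8, 13),
    "Qwen2.5-7B": (0, 2, 5, 0),
    "Qwen2.5-27B": (27, 12, 38, 19),
    "Qwen2.5-1.5B": (5, 4, 4, 7),
}


def lowest_totals(scores, k=3):
    """Return the k models with the smallest sum of solved tasks over all methods."""
    totals = sorted((sum(row), model) for model, row in scores.items())
    return [(model, total) for total, model in totals[:k]]


def baseline_leads(scores, baseline="Zero-Shot"):
    """Return the models where the baseline strictly beats every other method."""
    idx = METHODS.index(baseline)
    return [
        model
        for model, row in scores.items()
        if all(row[idx] > value for i, value in enumerate(row) if i != idx)
    ]


print(lowest_totals(SCORES))   # [('Qwen2.5-7B', 7), ('Qwen2.5-1.5B', 20), ('DeepSeek-R1-1.5B', 21)]
print(baseline_leads(SCORES))  # ['DeepSeek-R1-1.5B', 'Qwen2.5-1.5B']
```

The strict `>` comparison means a tie with another method would not count as the baseline "leading"; that choice matches the wording "outperforms every other method".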

### Interpretation

The data suggests a significant advantage for the **KGoT (Neo4j + Query)** method on this set of tasks. Its high scores on most models are consistent with the idea that integrating a structured knowledge graph (Neo4j) with a query-based approach generalizes well across the underlying language models tested. That advantage narrows on the smallest models, however, where KGoT solves only 8, 5, and 4 tasks (DeepSeek-R1-1.5B, Qwen2.5-7B, and Qwen2.5-1.5B).

The **GPTSwarm** method's performance pattern indicates that it depends on properties of specific models rather than on size alone: it works well with Qwen2.5-32B/27B and GPT4o mini but fails completely on most DeepSeek-R1 variants and on QwQ-32B, making it less reliable across a broad range of models. The **HF Agents** method rarely fails outright but never leads, suggesting a dependable but not state-of-the-art approach. The **Zero-Shot** method's inconsistency highlights the challenge of solving complex tasks without a specialized agent framework or external knowledge structure, since its success appears to depend heavily on the specific model's inherent abilities.

For this benchmark, the chart suggests that the choice of agent or problem-solving framework can matter more than the raw size of the underlying language model. For example, KGoT solves 20 tasks with DeepSeek-R1-7B, twice as many as GPTSwarm solves with DeepSeek-R1-70B (10).