## Diagram: RLVR vs. ERL in an Unknown Environment

### Overview

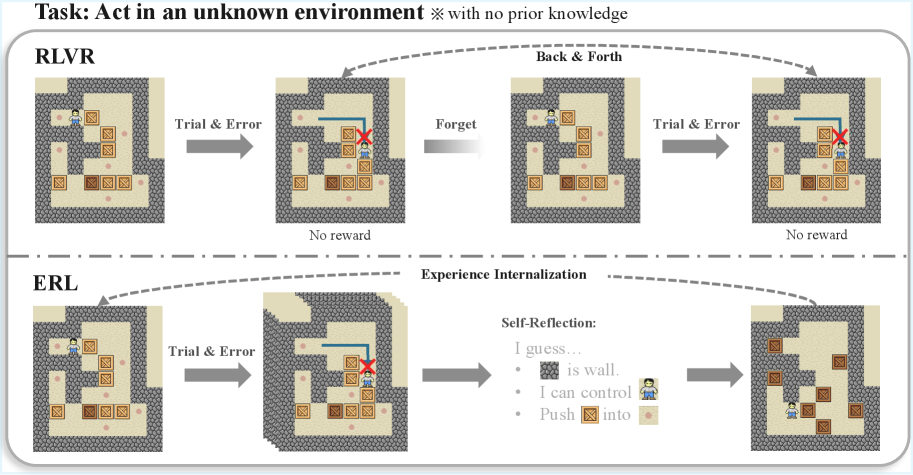

The image presents a diagram comparing two approaches, RLVR and ERL, for an agent navigating an unknown environment. The task involves moving boxes within a walled area. The diagram illustrates the steps each approach takes, highlighting the differences in their learning and problem-solving strategies.

### Components/Axes

* **Title:** Task: Act in an unknown environment * with no prior knowledge

* **Top Row:** RLVR (commonly expanded as Reinforcement Learning with Verifiable Rewards; the diagram itself does not spell it out)

* **Bottom Row:** ERL (not expanded in the diagram; the labels in this row suggest an experience-based, reflective learning approach)

* **Arrows:** Indicate the flow of actions and learning.

* **Text Labels:** "Trial & Error", "Forget", "Back & Forth", "No reward", "Experience Internalization", "Self-Reflection"

* **Environment:** A walled area with boxes and a character.

* **Red X:** Indicates a failed attempt or a blocked path.

* **Self-Reflection Text:** "I guess... [gray square] is wall. I can control [character icon]. Push [box icon] into [tan square]."

### Detailed Analysis

**RLVR (Top Row):**

1. **Initial State:** The character is in a walled area with several boxes.

* Label: Trial & Error

* Arrow points to the next state.

2. **Second State:** The character attempts to move a box, but the path is blocked, indicated by a red X. A blue line shows the attempted path.

* Label: No reward

* Label: Forget

* Arrow leads on to the third state.

3. **Third State:** The character is back in a similar initial state, having "forgotten" the previous attempt.

* Label: Back & Forth

4. **Fourth State:** The character attempts to move a box, but the path is blocked, indicated by a red X. A blue line shows the attempted path.

* Label: Trial & Error

* Label: No reward

**ERL (Bottom Row):**

1. **Initial State:** The character is in a walled area with several boxes.

* Label: Trial & Error

* Arrow points to the next state.

2. **Second State:** The character attempts to move a box, but the path is blocked, indicated by a red X. A blue line shows the attempted path.

3. **Experience Internalization:** The agent learns from the failed attempt.

4. **Third State:** The agent engages in self-reflection.

* Label: Self-Reflection:

* Text: "I guess... [gray square] is wall. I can control [character icon]. Push [box icon] into [tan square]."

5. **Fourth State:** The character has rearranged the boxes, presumably using the learned information.

### Key Observations

* RLVR is depicted as pure trial and error: a failed attempt yields no reward, the agent "forgets" it, and the next attempt repeats a similar blocked move.

* ERL incorporates experience internalization and self-reflection, allowing the agent to learn from failures and improve its problem-solving strategy.

* The "Self-Reflection" text in ERL suggests the agent is learning about the environment's constraints (walls) and its own capabilities (controlling the character and pushing boxes).

### Interpretation

The diagram contrasts two ways of handling a failed attempt. In the RLVR row, a failure produces only a "No reward" signal, the attempt is forgotten, and the agent repeats a similar blocked move. In the ERL row, the same failure is turned into an explicit self-reflection about the environment (which tiles are walls, what the agent controls, where the boxes should go), and that reflection is internalized before the next attempt, after which the boxes appear rearranged. The diagram's message is that retaining and reasoning over past experience, rather than relying on a reward signal alone, is what lets an agent make progress in an unknown environment.
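As a rough illustration of the contrast described above, the following toy Python sketch compares an agent that learns nothing from a zero reward with one that writes down a reflection after each failure and uses it on the next trial. It is a deliberately simplified caricature, not the RLVR or ERL algorithm: the four-action environment, the hidden `REWARDING_ACTION`, and the functions `step`, `run_memoryless`, and `run_with_reflection` are all hypothetical names introduced for this example.

```python
# Toy stand-in for the unknown environment (hypothetical, not from the diagram):
# exactly one action earns reward, and the agent does not know which.
ACTIONS = ["up", "down", "left", "right"]
REWARDING_ACTION = "right"  # hidden from the agent


def step(action: str) -> int:
    """Return 1 if the action solves the toy task, otherwise 0 (no reward)."""
    return 1 if action == REWARDING_ACTION else 0


def run_memoryless(max_trials: int) -> list[str]:
    """Caricature of the top row: a zero reward changes nothing,
    so every trial repeats the same first guess."""
    history = []
    for _ in range(max_trials):
        action = ACTIONS[0]  # nothing was retained from earlier trials
        history.append(action)
        if step(action):
            break
    return history


def run_with_reflection(max_trials: int) -> tuple[list[str], list[str]]:
    """Caricature of the bottom row: each failure is turned into a note
    that rules the failed action out on later trials."""
    notes: list[str] = []       # textual reflections carried across trials
    ruled_out: set[str] = set()  # what the agent has internalized so far
    history = []
    for _ in range(max_trials):
        untried = [a for a in ACTIONS if a not in ruled_out]
        if not untried:
            break  # nothing left to try
        action = untried[0]
        history.append(action)
        if step(action):
            notes.append(f"'{action}' earns reward")
            break
        ruled_out.add(action)
        notes.append(f"I guess '{action}' is blocked")
    return history, notes


if __name__ == "__main__":
    print(run_memoryless(4))
    print(run_with_reflection(4))
```

In this sketch the memoryless agent repeats the same failing action on every trial, while the reflective agent tries each action at most once. The only difference between the two loops is the state carried from one trial to the next, which is the point the diagram makes with its "Forget" and "Experience Internalization" labels.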