## Federated Learning Diagram: Model Training Process

### Overview

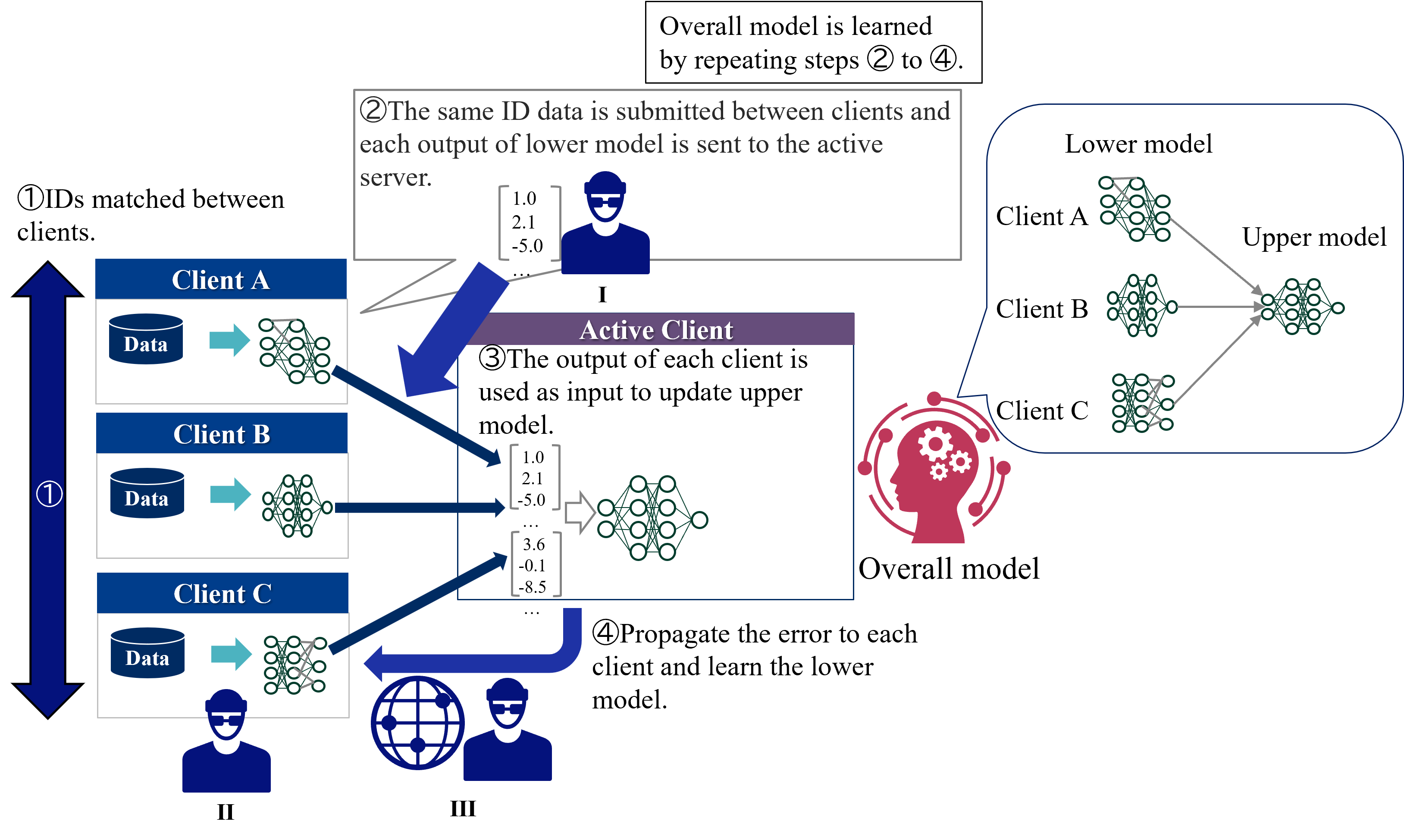

The diagram shows a federated learning process involving three clients (A, B, and C) and an active client that acts as the server. An overall model is trained by repeatedly running a lower model on each client and feeding the lower-model outputs into an upper model on the active client. The resulting error is then propagated back to the clients to update their lower models.

### Components

* **Clients:** Client A, Client B, Client C

* Each client has a "Data" storage component and a "Lower model" (neural network).

* **Active Client:** Acts as the server that receives and aggregates the lower-model outputs (Step 2 calls it the "active server").

* Contains an "Upper model" (neural network).

* **Overall Model:** A visual representation of the final trained model.

* **Flow Arrows:** Indicate the direction of data and model updates.

* **Numerical Data:** Sample output values from the lower models.

* **Icons:** Represent users and a global network.

* **Step Indicators:** Numbered circles indicating the sequence of steps.

### Detailed Analysis

1. **Step 1: IDs matched between clients.**

* A large blue arrow points downwards, indicating the initial step of matching IDs between clients.

* Clients A, B, and C each have a "Data" storage component (represented by a cylinder) and a lower model (neural network).

* Data flows from the "Data" storage to the lower model on each client, indicated by a light blue arrow.

2. **Step 2: The same ID data is submitted between clients and each output of lower model is sent to the active server.**

* A grey box contains the text describing this step.

* A sample output vector `[1.0, 2.1, -5.0, ...]` is shown, representing the data sent to the active client.

* A user icon labeled "I" is present below the data.

3. **Step 3: The output of each client is used as input to update upper model.**

* A purple box contains the text describing this step.

* Sample output vectors `[1.0, 2.1, -5.0, ...]` and `[3.6, -0.1, -8.5, ...]` are shown, representing the data used to update the upper model.

* The upper model is a neural network.

4. **Step 4: Propagate the error to each client and learn the lower model.**

* A blue arrow points from a globe icon (labeled "III") and a user icon (labeled "II") back towards the clients.

* The globe icon likely represents a global network or server.

5. **Overall Model Learning:**

* A text box at the top states: "Overall model is learned by repeating steps 2 to 4."

6. **Model Architecture:**

* The "Lower model" and "Upper model" are represented as neural networks.

* The "Overall model" is represented by a head with gears inside, suggesting a complex, integrated system.
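Steps 1 to 4 can be sketched as a small training loop. This is a minimal, illustrative sketch under stated assumptions, not the method behind the diagram: it assumes linear lower models that each emit a single scalar, a linear upper model, a squared-error loss, and labels held by the active client. The names `match_ids`, `dot`, and `train`, the toy data, and the hyperparameters are all hypothetical, and the plain set intersection stands in for whatever ID-matching protocol a real system would use.

```python
import random


def match_ids(*tables):
    """Step 1: return the sorted IDs that appear in every table."""
    common = set(tables[0])
    for table in tables[1:]:
        common &= set(table)
    return sorted(common)


def dot(weights, values):
    return sum(w * v for w, v in zip(weights, values))


def train(clients, labels, epochs=200, lr=0.05, seed=0):
    """Repeat steps 2 to 4 and return the mean squared error per epoch."""
    rng = random.Random(seed)
    ids = match_ids(*clients, labels)
    # Lower models: one weight vector per client (assumed linear, scalar output).
    lower = [[rng.uniform(-0.5, 0.5) for _ in next(iter(c.values()))] for c in clients]
    # Upper model: one weight per client output plus a bias (assumed linear).
    upper = [rng.uniform(-0.5, 0.5) for _ in clients]
    bias = 0.0
    history = []
    for _ in range(epochs):
        total = 0.0
        for sample_id in ids:
            # Step 2: each client runs its lower model locally and sends only the output.
            outputs = [dot(w, c[sample_id]) for w, c in zip(lower, clients)]
            # Step 3: the active client feeds the outputs into the upper model.
            error = dot(upper, outputs) + bias - labels[sample_id]
            total += error ** 2
            # Gradient of the squared error with respect to each client's output,
            # computed before the upper model changes.
            grads = [2 * error * u for u in upper]
            upper = [u - lr * 2 * error * o for u, o in zip(upper, outputs)]
            bias -= lr * 2 * error
            # Step 4: each client receives its gradient and updates its lower model.
            for k, c in enumerate(clients):
                lower[k] = [w - lr * grads[k] * x for w, x in zip(lower[k], c[sample_id])]
        history.append(total / len(ids))
    return history


# Hypothetical toy data: each client holds different features keyed by ID.
client_a = {"u1": [0.2, 1.0], "u2": [0.9, 0.1], "u3": [0.5, 0.5], "u4": [0.1, 0.3]}
client_b = {"u2": [1.0], "u3": [0.0], "u4": [0.5], "u5": [0.7]}
client_c = {"u1": [0.3], "u2": [0.6], "u3": [0.9], "u4": [0.2]}
labels = {"u2": 2.0, "u3": 1.0, "u4": 1.5, "u6": 0.0}  # assumed to live on the active client

clients = [client_a, client_b, client_c]
history = train(clients, labels)
print(f"first epoch MSE {history[0]:.4f}, last epoch MSE {history[-1]:.4f}")
```

Only the three IDs shared by every party take part in training. The raw feature lists are read only inside each client's own lower-model computation, mirroring the data flow in the diagram.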

### Key Observations

* The process is cyclical: steps 2 to 4 are repeated until the overall model is learned.

* Raw data stays on the clients; only lower-model outputs and error signals are exchanged, which is why data privacy is implied.

* The active client aggregates the outputs of the lower models to update the upper model.

* Error propagation is used to refine the lower models on each client.
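The observation that raw data remains on the clients can be made concrete with a hypothetical client interface. In this sketch, which assumes a linear lower model with one scalar output per sample, the `Client` class, its `forward` and `backward` methods, and the learning rate are illustrative names and values, not anything the diagram specifies. The only values that cross the object's boundary are lower-model outputs (outbound) and error signals (inbound).

```python
class Client:
    """Holds raw features locally and exposes only lower-model outputs."""

    def __init__(self, features, weights):
        self._features = features     # dict: sample ID -> feature list, never sent out
        self.weights = list(weights)  # linear lower model (an assumption)

    def forward(self, ids):
        """Return one scalar output per requested ID (what Step 2 transmits)."""
        return [
            sum(w * x for w, x in zip(self.weights, self._features[i]))
            for i in ids
        ]

    def backward(self, ids, grads, lr=0.1):
        """Apply the error signals received from the active client (Step 4)."""
        for i, g in zip(ids, grads):
            self.weights = [
                w - lr * g * x for w, x in zip(self.weights, self._features[i])
            ]


client = Client({"u2": [0.9, 0.1], "u3": [0.5, 0.5]}, weights=[1.0, -1.0])
outputs = client.forward(["u2", "u3"])            # sent to the active client
client.backward(["u2", "u3"], grads=[0.5, -0.2])  # gradients received in return
```

This only shows which values are exchanged; it makes no claim about how much information those outputs reveal.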

### Interpretation

The diagram depicts a federated learning approach in which an overall model is trained without the active client ever accessing the raw data held by individual clients. Because IDs are matched across clients in Step 1, the setup suggests what is usually called vertical federated learning, where each client holds different attributes for the same set of IDs. In each iteration, every client computes its lower-model output locally, the active client combines those outputs to update the upper model, and the resulting error is propagated back so that each client can update its lower model. Repeating steps 2 to 4 refines the overall model while the raw data stays distributed across the clients, which makes the approach attractive where data privacy is paramount.
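One way to write down a single iteration of steps 2 to 4 is shown below. The notation is an assumption introduced here for illustration and does not appear in the diagram: $x_k$ is client $k$'s local features for a matched ID, $f_k$ and $\theta_k$ are its lower model and parameters, $g$ and $\phi$ are the upper model and its parameters, $y$ is the training target, $\ell$ is a differentiable loss, and $\eta$ is a learning rate.

```latex
\begin{aligned}
h_k &= f_k(x_k;\,\theta_k), \quad k \in \{A, B, C\}
  && \text{(Step 2: lower-model output sent to the active client)} \\
\hat{y} &= g(h_A, h_B, h_C;\,\phi), \qquad
\mathcal{L} = \ell(\hat{y}, y)
  && \text{(Step 3: upper model and loss)} \\
\phi &\leftarrow \phi - \eta\,\frac{\partial \mathcal{L}}{\partial \phi}
  && \text{(Step 3: upper-model update)} \\
\frac{\partial \mathcal{L}}{\partial \theta_k}
  &= \left(\frac{\partial h_k}{\partial \theta_k}\right)^{\!\top}
     \frac{\partial \mathcal{L}}{\partial h_k}, \qquad
\theta_k \leftarrow \theta_k - \eta\,\frac{\partial \mathcal{L}}{\partial \theta_k}
  && \text{(Step 4: error sent back to client } k\text{)}
\end{aligned}
```

Under these assumptions, the active client only needs to send $\partial \mathcal{L} / \partial h_k$ to client $k$; the factor $\partial h_k / \partial \theta_k$ depends on $x_k$ and is computed locally.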