## System Architecture Diagram: Federated Learning Workflow

### Overview

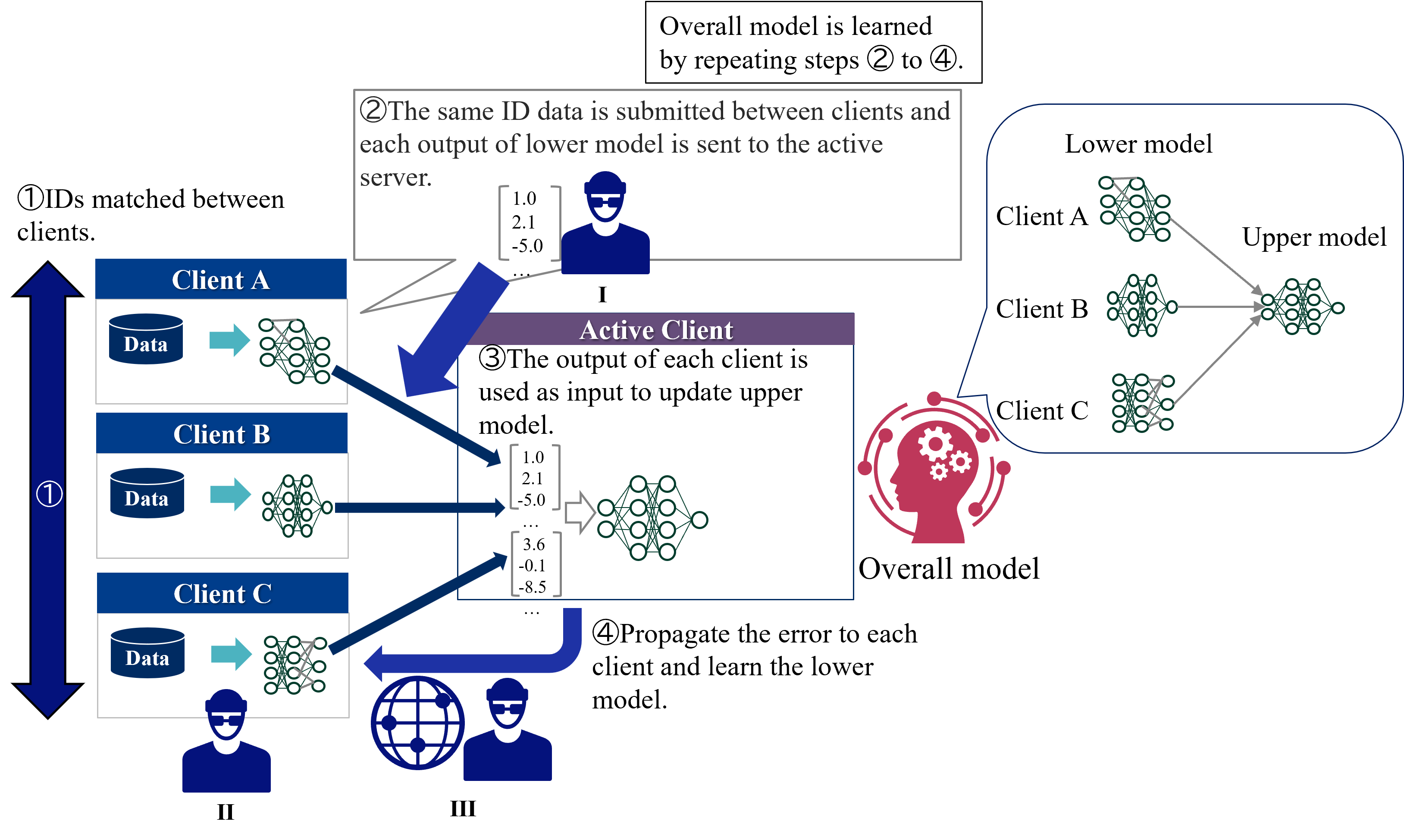

This diagram illustrates a federated learning system in which multiple clients (A, B, C) collaboratively train a shared "overall model" without moving raw data off the clients. The workflow repeats three steps: the active client sends its lower model's output to the server, the server updates the upper model, and the resulting error is propagated back to the clients.

### Components

1. **Clients (A, B, C)**:

   - Each client has:

     - **Data**: Represented by blue database icons.

     - **Lower Model**: Neural network diagrams (green nodes) processing client-specific data.

   - Positioned on the left side of the diagram.

2. **Active Client (Client A)**:

   - Highlighted with a purple banner.

   - Sends its lower model's output (numerical values: `1.0`, `2.1`, `-5.0`, `3.6`, `-0.1`, `-8.5`) to the server.

3. **Server**:

   - Central node receiving inputs from clients.

   - Updates the **Upper Model** (global model) using the active client's output.

4. **Upper Model**:

   - Aggregates contributions from all clients' lower models.

   - Positioned on the right side, connected to clients via gray arrows.

5. **Error Propagation**:

   - Red arrows indicate error feedback from the server to all clients.

   - Clients update their lower models using propagated errors.

### Detailed Analysis

- **Step ①**: Client IDs are matched between clients (blue vertical arrow).

- **Step ②**: Active client (A) sends its lower model's output to the server.

- **Step ③**: Server uses the active client's output to update the upper model.

- **Step ④**: Error from the upper model is propagated back to all clients to refine their lower models.

- **Overall Model**: Learned iteratively by repeating steps ②–④.
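The steps above can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions, not the system shown in the diagram: the lower and upper models are single linear layers, the loss is mean squared error, the labels are assumed to be visible to the server, and every client (not only the active one) is assumed to contribute a lower-model output so that an error can flow back to each of them. All names (`ids`, `align`, `train_step`, `W_lower`, `W_upper`) and the ID lists are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)

# Step 1: match IDs so every client trains on the same rows.
# These ID lists are made up for illustration.
ids = {"A": [3, 1, 2, 5], "B": [2, 3, 5, 7], "C": [5, 2, 3, 9]}
shared_ids = sorted(set.intersection(*(set(v) for v in ids.values())))

# Each client keeps its own private feature table (4 features per row here).
features = {c: rng.normal(size=(len(v), 4)) for c, v in ids.items()}


def align(client):
    """Return the client's rows reordered to follow shared_ids."""
    rows = [ids[client].index(i) for i in shared_ids]
    return features[client][rows]


X = {c: align(c) for c in ids}
y = rng.normal(size=(len(shared_ids), 1))  # labels; assumed visible to the server

OUT = 6  # six lower-model outputs per row, mirroring the six values in the diagram
W_lower = {c: rng.normal(scale=0.1, size=(4, OUT)) for c in ids}
W_upper = rng.normal(scale=0.1, size=(OUT * len(ids), 1))


def train_step(X, y, W_lower, W_upper, lr=0.05):
    """Run one pass of steps 2-4 and return the loss before the update."""
    clients = list(W_lower)
    # Step 2: clients send lower-model outputs (never raw features) to the server.
    outputs = [X[c] @ W_lower[c] for c in clients]
    h = np.concatenate(outputs, axis=1)
    # Step 3: the server runs the upper model and updates it.
    err = h @ W_upper - y
    grad_pred = 2.0 * err / len(y)
    grad_h = grad_pred @ W_upper.T  # error signal, computed before W_upper changes
    W_upper -= lr * (h.T @ grad_pred)
    # Step 4: each client receives its slice of the error and updates its lower model.
    for k, c in enumerate(clients):
        W_lower[c] -= lr * (X[c].T @ grad_h[:, k * OUT:(k + 1) * OUT])
    return float(np.mean(err ** 2))


# The overall model is learned by repeating steps 2-4.
losses = [train_step(X, y, W_lower, W_upper) for _ in range(200)]
print(f"loss: {losses[0]:.4f} -> {losses[-1]:.4f}")
```

In this sketch only `h` (lower-model outputs) and `grad_h` (the error signal) cross the client/server boundary; the feature tables in `features` stay with their owners.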

### Key Observations

- **Hierarchical Structure**: Clients train local models (lower) that contribute to a global model (upper).

- **Data Flow**:

  - Client data → Lower model → Active client output → Server → Upper model → Error → Clients.

- **Numerical Values**:

  - Active client's output includes values like `1.0`, `2.1`, `-5.0`, `3.6`, `-0.1`, `-8.5`.

- **Color Coding**:

  - Blue: Clients and data.

  - Purple: Active client.

  - Red: Error propagation.

  - Green: Neural network nodes.

### Interpretation

This system demonstrates a **distributed machine learning framework** in which data stays decentralized and a central server coordinates training:

1. **Privacy Preservation**: Raw data remains on clients; only lower-model outputs and error signals are exchanged with the server.

2. **Collaborative Learning**: The upper model aggregates knowledge from all clients' local models.

3. **Iterative Refinement**: Error feedback from the upper model is used to update both the upper model and the clients' lower models on each pass through steps ②–④.

4. **Scalability**: The layout suggests that additional clients (e.g., Client D, E) could be attached in the same way, each with its own data and lower model, without changing the overall structure.

The workflow shown is closer to **split learning** (as used in vertical federated learning) than to federated averaging: instead of averaging locally trained model weights, clients forward their lower models' outputs to the server, and the server's upper model sends error signals back. This lets the lower and upper models be trained jointly as one overall model while raw data stays on the clients.