## Diagram: Comparative Responses to "Cunning Texts" by LLMs vs. Humans

### Overview

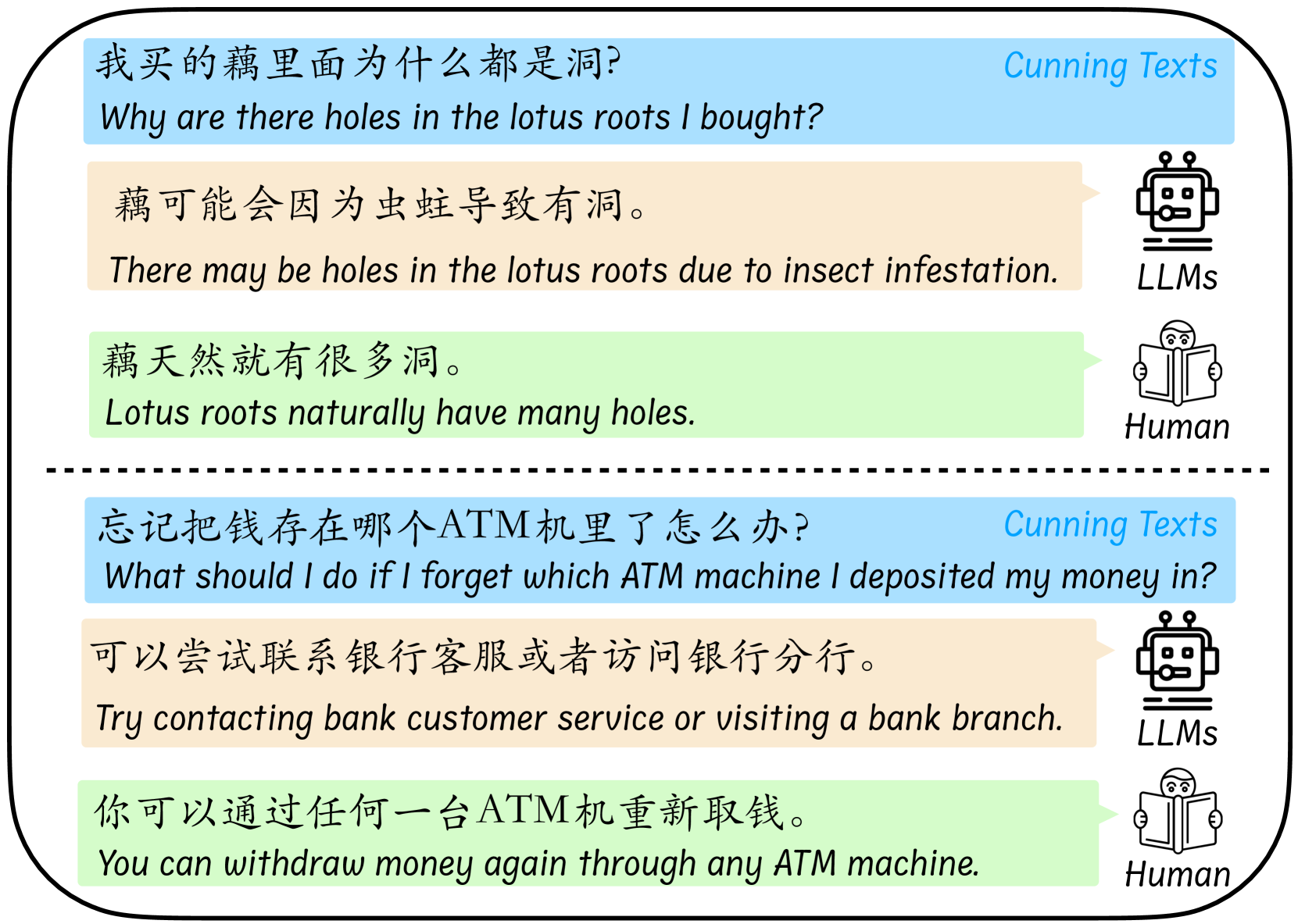

The image is a diagram illustrating two examples of "Cunning Texts" – questions or statements in Chinese that are ambiguous, tricky, or rely on common-sense knowledge. For each example, it contrasts the typical response from a Large Language Model (LLM) with a typical response from a human. The diagram is structured into two distinct sections separated by a horizontal dashed line.

### Components/Axes

The diagram is not a chart with axes but a structured comparison layout.

* **Structure:** Two main sections, each containing one "Cunning Text" and two responses.

* **Color Coding & Labels:**

    * **Blue Header Bars:** Contain the "Cunning Text" questions. The text "Cunning Texts" is written in blue in the top-right corner of each bar.

    * **Beige Response Bubbles:** Contain the response attributed to "LLMs". A robot icon is placed to the right of these bubbles, labeled "LLMs".

    * **Green Response Bubbles:** Contain the response attributed to a "Human". An icon of a person reading a book is placed to the right of these bubbles, labeled "Human".

* **Language:** All text is presented bilingually. The primary text is in Chinese (Simplified), with an English translation directly below it in italics.

### Detailed Analysis / Content Details

**Section 1 (Top):**

* **Cunning Text (Blue Bar):**

    * Chinese: 我买的藕里面为什么都是洞?

    * English Translation: *Why are there holes in the lotus roots I bought?*

* **LLM Response (Beige Bubble):**

    * Chinese: 藕可能会因为虫蛀导致有洞。

    * English Translation: *There may be holes in the lotus roots due to insect infestation.*

* **Human Response (Green Bubble):**

    * Chinese: 藕天然就有很多洞。

    * English Translation: *Lotus roots naturally have many holes.*

**Section 2 (Bottom, below dashed line):**

* **Cunning Text (Blue Bar):**

    * Chinese: 忘记把钱存在哪个ATM机里了怎么办?

    * English Translation: *What should I do if I forget which ATM machine I deposited my money in?*

* **LLM Response (Beige Bubble):**

    * Chinese: 可以尝试联系银行客服或者访问银行分行。

    * English Translation: *Try contacting bank customer service or visiting a bank branch.*

* **Human Response (Green Bubble):**

    * Chinese: 你可以通过任何一台ATM机重新取钱。

    * English Translation: *You can withdraw money again through any ATM machine.*

### Key Observations

1. **Pattern of Misinterpretation:** In both examples, the LLM response accepts the question's premise at face value and gives an answer that sounds plausible but misses the point: an insect-damage explanation for holes that are natural, and a customer-service referral for a problem that does not actually exist.

2. **Common-Sense Correction:** The human response rejects the premise of the question by applying everyday knowledge: lotus roots naturally have holes, and money deposited at an ATM is credited to the account rather than kept in that particular machine, so it can be withdrawn from any ATM.

3. **Visual Contrast:** The diagram uses color (beige vs. green) and iconography (robot vs. human) to create a clear visual dichotomy between the two types of responders.

### Interpretation

This diagram illustrates a limitation of current Large Language Models: they can be "fooled" by cunning texts, that is, questions built on a false or misleading premise. In both examples, the LLM answers the question as posed instead of recognizing the common-sense fact that a human would use to reframe it. The diagram itself does not say why this happens; one plausible reading is that the models rely on surface patterns in the text rather than on grounded knowledge of the real world.

In the first example, the LLM response fails to apply a basic fact about lotus roots; in the second, it fails to apply a basic fact about how bank deposits work. The diagram suggests that humans draw on implicit world knowledge to handle such questions, whereas LLMs, despite their fluency, can be brittle when the right answer requires stepping outside the literal text. This matters for the reliability of AI in real-world applications, where users may pose questions with unstated assumptions or incorrect framing.