TECHNICAL ASSET FINGERPRINT

b0cc54ea26394436bb1fe229

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

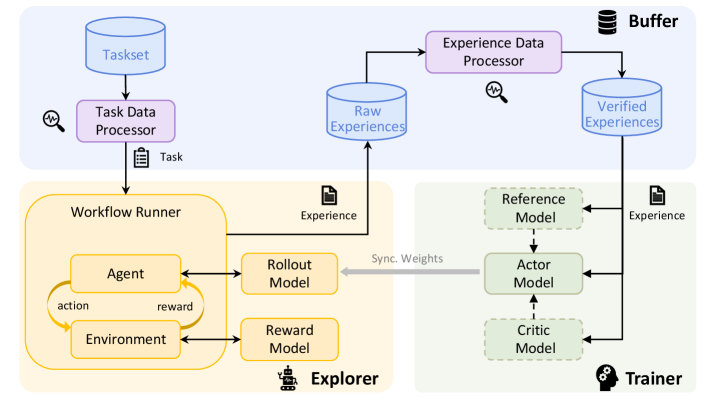

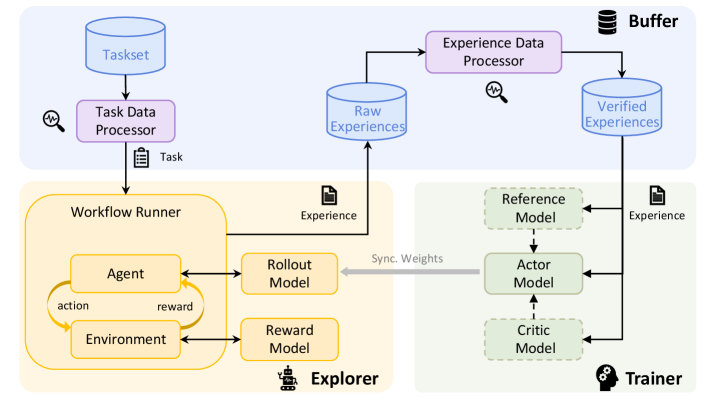

## Diagram: Reinforcement Learning Workflow

### Overview

The image presents a diagram of a reinforcement learning workflow. It illustrates the flow of data and processes between different components, including task management, experience generation, and model training.

### Components/Axes

The diagram is divided into several key components:

* **Taskset:** A blue cylinder at the top-left, representing a collection of tasks.

* **Task Data Processor:** A purple rectangle below the Taskset, responsible for processing task data.

* **Raw Experiences:** A blue cylinder, representing the raw experiences collected during the learning process.

* **Experience Data Processor:** A purple rectangle, responsible for processing experience data.

* **Verified Experiences:** A blue cylinder, representing the verified experiences after processing.

* **Workflow Runner:** A yellow region containing the Agent, Environment, Rollout Model, and Reward Model.

* **Agent:** A rounded rectangle within the Workflow Runner, representing the learning agent.

* **Environment:** A rounded rectangle within the Workflow Runner, representing the environment with which the Agent interacts.

* **Rollout Model:** A yellow rounded rectangle to the right of the Agent, used for generating rollouts.

* **Reward Model:** A yellow rounded rectangle below the Rollout Model, used for providing rewards.

* **Explorer:** The yellow region containing the Workflow Runner, Rollout Model, and Reward Model.

* **Buffer:** A stack of cylinders at the top-right, representing a buffer for storing experiences.

* **Trainer:** A green region containing the Reference Model, Actor Model, and Critic Model.

* **Reference Model:** A green dashed rounded rectangle within the Trainer.

* **Actor Model:** A green solid rounded rectangle within the Trainer, representing the policy network.

* **Critic Model:** A green dashed rounded rectangle within the Trainer, representing the value network.

### Detailed Analysis

* **Flow from Taskset:** The Taskset feeds into the Task Data Processor.

    * A magnifying glass icon with a squiggly line is next to the Task Data Processor.

    * The Task Data Processor outputs a "Task" to the Workflow Runner.

* **Workflow Runner Details:**

    * The Agent interacts with the Environment, producing "action" and receiving "reward".

    * The Agent also interacts with the Rollout Model and Reward Model.

    * The Rollout Model outputs "Experience" to the Raw Experiences.

* **Experience Processing:**

    * Raw Experiences are fed into the Experience Data Processor.

    * A magnifying glass icon with a squiggly line is next to the Experience Data Processor.

    * The Experience Data Processor outputs to Verified Experiences.

* **Trainer Details:**

    * Verified Experiences are fed into the Reference Model, Actor Model, and Critic Model.

    * The Reference Model feeds into the Actor Model.

    * The Actor Model feeds into the Critic Model.

    * "Sync. Weights" is written next to a gray arrow pointing from the Actor Model to the Agent.

    * The Rollout Model outputs "Experience" to the Reference Model.

### Key Observations

* The diagram illustrates a closed-loop reinforcement learning system.

* The Workflow Runner is responsible for generating experiences.

* The Trainer is responsible for updating the models based on the experiences.

* The Buffer stores experiences for later use.

### Interpretation

The diagram depicts a typical reinforcement learning workflow, emphasizing the interaction between different components. The Taskset provides the initial tasks, which are processed and passed to the Workflow Runner, where the Agent's interaction with the Environment generates experiences. These experiences are used to train the Actor and Critic models. The "Sync. Weights" arrow suggests that the policy used during exploration is periodically updated with the Actor Model's weights. Together with the Actor/Critic pairing, this is consistent with an actor-critic (policy-gradient-style) approach, although the diagram does not name a specific algorithm. The presence of a Reference Model may suggest a form of imitation learning or a stable learning target; the diagram does not state its role. The overall system aims to learn an optimal policy for the Agent to perform the given tasks within the Environment.
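
The action/reward cycle described for the Workflow Runner can be illustrated with a small sketch. This is not the system's actual code: `Experience`, `ToyEnvironment`, `ToyAgent`, `run_workflow`, and the reward rule are hypothetical names and choices invented for this example.

```python
from dataclasses import dataclass
import random


@dataclass
class Experience:
    """One (task, action, reward) record; a hypothetical schema."""
    task: str
    action: int
    reward: float


class ToyEnvironment:
    """Hypothetical environment: rewards the action equal to a target."""

    def __init__(self, target: int) -> None:
        self.target = target

    def step(self, action: int) -> float:
        return 1.0 if action == self.target else 0.0


class ToyAgent:
    """Hypothetical agent that samples actions uniformly at random."""

    def __init__(self, n_actions: int, seed: int = 0) -> None:
        self.n_actions = n_actions
        self.rng = random.Random(seed)

    def act(self) -> int:
        return self.rng.randrange(self.n_actions)


def run_workflow(task: str, agent: ToyAgent, env: ToyEnvironment,
                 steps: int) -> list[Experience]:
    """Run the action/reward cycle and collect raw experiences."""
    experiences = []
    for _ in range(steps):
        action = agent.act()       # Agent -> Environment: "action"
        reward = env.step(action)  # Environment -> Agent: "reward"
        experiences.append(Experience(task, action, reward))
    return experiences


raw_experiences = run_workflow(
    "toy-task", ToyAgent(n_actions=3), ToyEnvironment(target=1), steps=5
)
```

In the diagram, records like these would be written to the Raw Experiences store before any processing.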

EXPERT: gemini-3-flash-free VERSION 1

RUNTIME: nugit/gemini/gemini-3-flash-preview

INTEL_VERIFIED

# Technical Document Extraction: Reinforcement Learning System Architecture

This document provides a comprehensive extraction and analysis of the provided architectural diagram, which illustrates a Reinforcement Learning (RL) pipeline consisting of three primary functional regions: **Buffer**, **Explorer**, and **Trainer**.

---

## 1. Component Isolation (Spatial Segmentation)

The diagram is divided into three color-coded regions:

* **Top Region (Blue Background):** The **Buffer** system, responsible for task management and data processing.

* **Bottom-Left Region (Yellow Background):** The **Explorer**, responsible for environment interaction and data generation.

* **Bottom-Right Region (Green Background):** The **Trainer**, responsible for model optimization and weight synchronization.

---

## 2. Detailed Component Extraction

### A. Buffer Region (Top)

* **Taskset (Database Icon):** The initial source of tasks.

* **Task Data Processor (Purple Box):** Processes data from the Taskset. Accompanied by a magnifying glass icon (indicating monitoring/inspection).

* **Raw Experiences (Database Icon):** Stores data generated by the Explorer.

* **Experience Data Processor (Purple Box):** Processes raw experiences. Accompanied by a magnifying glass icon.

* **Verified Experiences (Database Icon):** Stores the output of the Experience Data Processor, ready for training.

### B. Explorer Region (Bottom-Left)

* **Workflow Runner (Large Yellow Container):**

    * **Agent (Yellow Box):** Sends an "action" to the Environment.

    * **Environment (Yellow Box):** Sends a "reward" back to the Agent.

* **Rollout Model (Yellow Box):** Interfaces with the Agent.

* **Reward Model (Yellow Box):** Interfaces with the Environment.

* **Output:** Generates "Experience" (indicated by a document icon) which is sent to the **Raw Experiences** database.

### C. Trainer Region (Bottom-Right)

* **Reference Model (Green Dashed Box):** Used as a baseline for training.

* **Actor Model (Green Solid Box):** The primary model being trained.

* **Critic Model (Green Dashed Box):** Evaluates the actions of the Actor.

* **Input:** Receives "Experience" (indicated by a document icon) from the **Verified Experiences** database.

---

## 3. Process Flow and Logic

The system operates in a continuous loop characterized by the following data movements:

1. **Task Initialization:**

    * `Taskset` $\rightarrow$ `Task Data Processor` $\rightarrow$ `Workflow Runner`.

    * The transition is labeled with a clipboard icon and the text **"Task"**.

2. **Experience Generation (The Explorer Loop):**

    * Inside the `Workflow Runner`, the `Agent` and `Environment` interact via an **action/reward** cycle.

    * The `Rollout Model` and `Reward Model` support this interaction.

    * The resulting data is labeled **"Experience"** and sent to the `Raw Experiences` database in the Buffer.

3. **Data Refinement:**

    * `Raw Experiences` $\rightarrow$ `Experience Data Processor` $\rightarrow$ `Verified Experiences`.

    * This stage ensures data quality before it reaches the training phase.

4. **Model Training (The Trainer):**

    * `Verified Experiences` are fed into the `Reference Model`, `Actor Model`, and `Critic Model`.

    * The `Actor Model` is the central component, flanked by the `Reference` and `Critic` models (indicated by vertical dashed arrows).

5. **Weight Synchronization (The Feedback Loop):**

    * A thick grey arrow labeled **"Sync. Weights"** points from the `Actor Model` (Trainer) back to the `Rollout Model` (Explorer).

    * This represents the deployment of the trained model back into the exploration phase to improve future data collection.
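
The five steps above can be sketched as a single loop. This is a toy illustration under stated assumptions, not the implementation behind the diagram: the function names, the dictionary-based "weights", the reward rule, and the update rule are all hypothetical.

```python
import copy
import math
import random


def task_data_processor(taskset):
    """Step 1: turn taskset entries into tasks (here: strip and drop empties)."""
    return [t.strip() for t in taskset if t.strip()]


def explore(task, rollout_weights, rng):
    """Step 2: produce one experience using the rollout model's weights."""
    action = rollout_weights["bias"] + rng.gauss(0.0, 1.0)
    reward = -abs(action - 2.0)  # toy reward: the best action is 2.0
    return {"task": task, "action": action, "reward": reward}


def experience_data_processor(raw):
    """Step 3: keep only experiences with a finite reward."""
    return [e for e in raw if math.isfinite(e["reward"])]


def train(actor_weights, verified, lr=0.1):
    """Step 4: nudge the actor toward the highest-reward action in the batch."""
    best = max(verified, key=lambda e: e["reward"])
    actor_weights["bias"] += lr * (best["action"] - actor_weights["bias"])


def sync_weights(actor_weights, rollout_weights):
    """Step 5: copy the trained actor weights into the rollout model."""
    rollout_weights.update(copy.deepcopy(actor_weights))


rng = random.Random(0)
actor = {"bias": 0.0}    # lives in the Trainer
rollout = {"bias": 0.0}  # lives in the Explorer
tasks = task_data_processor([" task-a ", "task-b", ""])

for _ in range(20):
    raw = [explore(t, rollout, rng) for t in tasks for _ in range(4)]
    verified = experience_data_processor(raw)
    train(actor, verified)
    sync_weights(actor, rollout)
```

Keeping `actor` and `rollout` as separate objects mirrors the diagram's split between Trainer and Explorer: the Explorer only sees new parameters when `sync_weights` runs.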

---

## 4. Textual Transcription

| Category | Transcribed Text |
| :--- | :--- |
| **Headers/Regions** | Buffer, Explorer, Trainer |
| **Data Stores** | Taskset, Raw Experiences, Verified Experiences |
| **Processors** | Task Data Processor, Experience Data Processor |
| **Explorer Components** | Workflow Runner, Agent, Environment, action, reward, Rollout Model, Reward Model |
| **Trainer Components** | Reference Model, Actor Model, Critic Model |
| **Data Labels** | Task, Experience, Experience, Sync. Weights |

---

## 5. Summary of Facts

* **Language:** English (100%).

* **System Type:** Distributed Reinforcement Learning Architecture.

* **Key Feature:** Decoupled data collection (Explorer) and model optimization (Trainer) mediated by a processing buffer.

* **Monitoring:** The presence of magnifying glass icons suggests that both Task and Experience processing stages are subject to automated or manual validation/monitoring.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: Reinforcement Learning Workflow

### Overview

This diagram illustrates a reinforcement learning workflow, depicting the interaction between an agent, environment, and various processing components. The workflow is segmented into three main areas: a Task Processing section (top), an Explorer section (center-left), and a Trainer section (center-right). Data flow is indicated by arrows, and key components are represented as labeled boxes.

### Components/Axes

The diagram contains the following components:

* **Taskset:** A database of tasks.

* **Task Data Processor:** Processes the taskset and outputs a "Task".

* **Workflow Runner:** Contains the "Agent" and "Environment" and manages the interaction between them.

* **Agent:** Takes "action" and receives a "reward" from the environment.

* **Environment:** Provides feedback (reward) to the agent based on its actions.

* **Rollout Model:** Part of the Explorer, receives "Experience" from the Workflow Runner.

* **Reward Model:** Part of the Explorer, receives "Experience" from the Workflow Runner.

* **Explorer:** Contains the Rollout and Reward Models.

* **Experience Data Processor:** Processes "Raw Experiences" and outputs "Verified Experiences".

* **Raw Experiences:** A database of raw experiences.

* **Verified Experiences:** A database of verified experiences.

* **Reference Model:** Part of the Trainer, receives "Experience".

* **Actor Model:** Part of the Trainer, receives "Experience" from the Reference Model.

* **Critic Model:** Part of the Trainer, receives input from the Actor Model.

* **Trainer:** Contains the Reference, Actor, and Critic Models.

* **Buffer:** Stores verified experiences.

* **Sync. Weights:** Indicates synchronization of weights between models.

### Detailed Analysis or Content Details

The diagram shows a cyclical flow within the Workflow Runner: the Agent takes an action, the Environment provides a reward, and this cycle repeats. The Explorer receives "Experience" from the Workflow Runner. The Experience Data Processor transforms "Raw Experiences" into "Verified Experiences", which are stored in a "Buffer". The Trainer utilizes the "Verified Experiences" to update the Reference, Actor, and Critic Models. The "Sync. Weights" arrow indicates that the weights of the models are synchronized.

### Key Observations

The diagram highlights the separation of concerns in a reinforcement learning system: task generation, agent interaction, experience processing, and model training. The cyclical nature of the agent-environment interaction is clearly depicted. The flow of "Experience" is central to the entire process, being passed from the Workflow Runner to the Explorer, then to the Experience Data Processor, and finally to the Trainer.

### Interpretation

This diagram represents a common architecture for reinforcement learning algorithms, particularly those employing techniques like experience replay and actor-critic methods. The "Taskset" and "Task Data Processor" suggest a system capable of handling multiple tasks or a dynamic task environment. The separation of the "Explorer" and "Trainer" components is consistent with an off-policy or asynchronous learning approach, where the data used for training is not necessarily generated by the current policy. The "Reference Model" likely serves as a target or baseline for the Actor and Critic models, facilitating stable learning. The "Buffer" is a crucial component for experience replay, allowing the agent to learn from past experiences and break correlations in the data. The synchronization of weights suggests a mechanism for periodically updating the models in the Explorer with the latest knowledge from the Trainer. The overall workflow demonstrates a system for learning policies through trial and error, built on buffered experiences and actor-critic training.
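
The experience-replay idea mentioned above can be illustrated with a minimal buffer. This is a generic sketch, not the Buffer shown in the diagram: the `ReplayBuffer` class, its capacity, and the uniform sampling rule are assumptions made for the example.

```python
from collections import deque
import random


class ReplayBuffer:
    """Fixed-capacity buffer; the oldest experiences are evicted first."""

    def __init__(self, capacity, seed=0):
        self._items = deque(maxlen=capacity)
        self._rng = random.Random(seed)

    def add(self, experience):
        self._items.append(experience)

    def sample(self, batch_size):
        """Uniform sample without replacement, mixing older and newer data."""
        k = min(batch_size, len(self._items))
        return self._rng.sample(list(self._items), k)

    def __len__(self):
        return len(self._items)


buffer = ReplayBuffer(capacity=3)
for step in range(5):
    buffer.add({"step": step, "reward": float(step)})

batch = buffer.sample(2)  # two distinct experiences from the three retained
```

Sampling at random rather than in arrival order is what breaks the temporal correlation between consecutive experiences.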

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## System Architecture Diagram: Reinforcement Learning Workflow with Experience Buffer

### Overview

The image displays a technical system architecture diagram illustrating a reinforcement learning (RL) workflow. The diagram is segmented into three primary colored regions representing distinct functional modules: a **Data Processing Layer** (light blue, top), an **Explorer Module** (yellow, left), and a **Trainer Module** (light green, right). The flow depicts how tasks are processed, experiences are generated and stored, and models are trained and synchronized.

### Components/Regions

The diagram is organized into three main spatial regions:

1. **Top Region (Light Blue Background): Data Processing & Buffer**

    * **Components:**

        * `Taskset` (Cylinder/Database icon, top-left)

        * `Task Data Processor` (Purple rectangle, below Taskset)

        * `Experience Data Processor` (Purple rectangle, top-center)

        * `Raw Experiences` (Cylinder/Database icon, center)

        * `Verified Experiences` (Cylinder/Database icon, top-right)

        * `Buffer` (Database icon with label, top-right corner)

    * **Flow & Labels:** An arrow from `Taskset` to `Task Data Processor` is labeled with a document icon and the word `Task`. An arrow from `Raw Experiences` to `Experience Data Processor` has a magnifying glass icon. An arrow from `Experience Data Processor` to `Verified Experiences` also has a magnifying glass icon. An arrow from `Verified Experiences` points to the `Buffer` icon.

2. **Left Region (Yellow Background): Explorer**

    * **Components:**

        * `Workflow Runner` (Large yellow rounded rectangle)

        * `Agent` (Yellow rectangle inside Workflow Runner)

        * `Environment` (Yellow rectangle inside Workflow Runner, below Agent)

        * `Rollout Model` (Yellow rectangle, right of Workflow Runner)

        * `Reward Model` (Yellow rectangle, below Rollout Model)

    * **Flow & Labels:** Inside the `Workflow Runner`, a circular flow is shown: an arrow from `Agent` to `Environment` is labeled `action`, and an arrow from `Environment` back to `Agent` is labeled `reward`. A double-headed arrow connects `Agent` and `Rollout Model`. A double-headed arrow connects `Environment` and `Reward Model`. An arrow from `Rollout Model` points to the `Raw Experiences` database in the top region, labeled with a document icon and the word `Experience`. A robot icon is placed near the `Reward Model`.

3. **Right Region (Light Green Background): Trainer**

    * **Components:**

        * `Reference Model` (Green dashed rectangle, top)

        * `Actor Model` (Green dashed rectangle, center)

        * `Critic Model` (Green dashed rectangle, bottom)

    * **Flow & Labels:** All three models (`Reference Model`, `Actor Model`, `Critic Model`) receive input via arrows originating from the `Verified Experiences` database. These arrows are each labeled with a document icon and the word `Experience`. A thick, gray, double-headed arrow labeled `Sync. Weights` connects the `Actor Model` (in the Trainer region) to the `Rollout Model` (in the Explorer region). A head-with-gears icon is placed in the bottom-right corner of this region.

### Detailed Analysis: Component Relationships and Data Flow

The diagram defines a closed-loop system for training RL agents using stored experiences.

1. **Task Initiation:** The process begins with a `Taskset` (a collection of tasks). The `Task Data Processor` extracts a specific `Task` and sends it to the `Workflow Runner` within the Explorer.

2. **Experience Generation (Explorer):** Inside the `Workflow Runner`, an `Agent` interacts with an `Environment` by taking `action`s and receiving `reward`s. This interaction loop generates experience data. The `Rollout Model` (likely responsible for generating trajectories) and the `Reward Model` (for evaluating states/actions) are part of this process. The `Rollout Model` sends generated `Experience` data to the `Raw Experiences` database.

3. **Experience Processing (Buffer):** The `Experience Data Processor` retrieves data from `Raw Experiences`, processes or filters it (indicated by the magnifying glass icons), and outputs cleaned `Verified Experiences` into a `Buffer` for later use.

4. **Model Training (Trainer):** The `Verified Experiences` from the buffer are used as training data for three models in the Trainer module: the `Reference Model`, the `Actor Model`, and the `Critic Model`. The Actor/Critic pairing suggests an actor-critic RL training paradigm.

5. **Synchronization:** A critical feedback loop is shown by the `Sync. Weights` arrow. The trained `Actor Model`'s parameters are synchronized back to the `Rollout Model` in the Explorer, updating it for future experience generation. This creates an iterative cycle of experience collection and model improvement.

### Key Observations

* **Modular Design:** The system is cleanly separated into data handling, exploration (data generation), and training (model improvement) modules.

* **Central Role of Experience Buffer:** The `Buffer` containing `Verified Experiences` acts as the central hub, decoupling the Explorer and Trainer. This allows for asynchronous data generation and training.

* **Model Synchronization:** The explicit `Sync. Weights` connection highlights that the policy being executed in the environment (`Rollout Model`) is periodically updated with the improved policy learned by the `Actor Model`.

* **Iconography:** Icons are used consistently to denote data types: a document icon for `Task` and `Experience`, a magnifying glass for processing/inspection, a robot for the Explorer module, and a head with gears for the Trainer module.

### Interpretation

This diagram represents a sophisticated reinforcement learning system designed for stability and efficiency. The architecture addresses several key challenges in RL:

1. **Experience Replay & Stability:** By storing experiences in a `Buffer` and using a `Verified Experiences` database, the system can break temporal correlations in data and reuse past experiences for training, which stabilizes learning.

2. **Parallelization Potential:** The separation of Explorer and Trainer suggests these processes could run in parallel or on different resources. The Explorer can continuously generate new data while the Trainer improves models using stored data.

3. **Policy Iteration:** The core learning loop is clearly depicted: the current policy (in the `Rollout Model`) generates experiences, which are used to train an improved policy (in the `Actor Model`), which is then synced back to become the new data-generating policy. This is a classic policy iteration scheme.

4. **Model-Based Elements:** The presence of a `Reward Model` and `Reference Model` alongside the standard `Actor` and `Critic` suggests this might be a model-based RL setup or one that uses auxiliary tasks and reward shaping to improve learning efficiency and performance.

In essence, the diagram illustrates a production-oriented RL pipeline that emphasizes data management, modular component design, and a continuous cycle of experience collection and policy refinement.
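
The parallelization potential noted above can be sketched with a producer/consumer pair that communicates only through a bounded queue. This is a hedged illustration using Python's standard `queue` and `threading` modules; the `explorer` and `trainer` functions, the sentinel convention, and the record fields are hypothetical and say nothing about how the depicted system is actually deployed.

```python
import queue
import threading


def explorer(buffer: queue.Queue, n_experiences: int) -> None:
    """Producer: generates experiences and pushes them into the buffer."""
    for i in range(n_experiences):
        buffer.put({"id": i, "reward": float(i % 2)})
    buffer.put(None)  # sentinel: no more data will arrive


def trainer(buffer: queue.Queue, consumed: list) -> None:
    """Consumer: pulls experiences as they become available."""
    while True:
        item = buffer.get()
        if item is None:
            break
        consumed.append(item["id"])  # stand-in for a training step


buffer = queue.Queue(maxsize=4)  # bounded, so the explorer cannot run far ahead
consumed = []
threads = [
    threading.Thread(target=explorer, args=(buffer, 10)),
    threading.Thread(target=trainer, args=(buffer, consumed)),
]
for t in threads:
    t.start()
for t in threads:
    t.join()
```

Because neither function calls the other, either side could be moved to a separate process or machine by swapping the queue for a networked store.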

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Diagram: Reinforcement Learning System Architecture

### Overview

This diagram illustrates a reinforcement learning (RL) system architecture, depicting the flow of data and interactions between components. It includes elements for task processing, agent-environment interaction, experience management, and model training.

### Components/Axes

**Key Components**:

1. **Taskset** → **Task Data Processor** → **Workflow Runner**

2. **Workflow Runner** → **Agent**, **Environment**, **Rollout Model**, **Reward Model**

3. **Experience Data Processor** → **Raw Experiences** → **Verified Experiences** → **Buffer**

4. **Trainer** → **Reference Model**, **Actor Model**, **Critic Model**

5. **Synchronize Weights** (connects Workflow Runner and Trainer)

**Flow Direction**:

- Top-to-bottom: Taskset → Task Data Processor → Workflow Runner → Experience Data Processor → Trainer.

- Horizontal: Workflow Runner ↔ Trainer via "Synchronize Weights."

**Color Coding**:

- **Top Section (Blue)**: Taskset, Task Data Processor, Experience Data Processor.

- **Middle Section (Orange)**: Workflow Runner, Agent, Environment, Rollout Model, Reward Model.

- **Bottom Section (Green)**: Trainer, Reference Model, Actor Model, Critic Model.

- **Buffer**: Black icon with "Buffer" label.

### Detailed Analysis

1. **Taskset → Task Data Processor**:

    - The Taskset (input) is processed by the Task Data Processor, which generates a "Task" output.

2. **Workflow Runner**:

    - Contains an **Agent** that interacts with an **Environment** via **actions** and **rewards**.

    - Uses a **Rollout Model** (predicts actions) and **Reward Model** (evaluates rewards).

    - Outputs "Experience" to the Experience Data Processor.

3. **Experience Data Processor**:

    - Processes **Raw Experiences** into **Verified Experiences**, which are stored in the **Buffer**.

4. **Trainer**:

    - Uses **Reference Model** (baseline/expert model), **Actor Model** (policy), and **Critic Model** (value function) to train on experiences from the Buffer.

    - **Synchronize Weights** ensures alignment between the Workflow Runner and Trainer models.

### Key Observations

- **Modular Design**: The system separates task processing (top), agent interaction (middle), and training (bottom).

- **Feedback Loop**: The Workflow Runner and Trainer share weights, enabling continuous improvement.

- **Experience Pipeline**: Raw experiences are filtered/verified before training, ensuring data quality.

### Interpretation

This architecture represents a closed-loop RL system:

1. **Exploration**: The Agent explores the Environment, generating experiences.

2. **Experience Refinement**: The Experience Data Processor cleans and validates data.

3. **Training**: The Trainer updates the Actor and Critic models using the Reference Model as a guide.

4. **Weight Synchronization**: Ensures the Workflow Runner’s models (e.g., Rollout, Reward) stay aligned with the Trainer’s policies.

The system emphasizes **data quality** (via verification) and **model alignment** (via weight synchronization), critical for stable RL training. The modular structure allows scalability and separation of concerns.
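
The "Experience Refinement" step could look something like the filter below. The diagram does not say which checks the Experience Data Processor performs, so the rules here (non-empty response, finite numeric reward, de-duplication) and the record fields `task`, `response`, and `reward` are assumptions chosen purely for illustration.

```python
import math


def verify_experiences(raw):
    """Return the experiences that pass a set of hypothetical quality checks."""
    seen = set()
    verified = []
    for exp in raw:
        response = exp.get("response", "")
        reward = exp.get("reward")
        if not response.strip():
            continue  # drop empty responses
        if not isinstance(reward, (int, float)) or not math.isfinite(reward):
            continue  # drop missing, NaN, or infinite rewards
        key = (exp.get("task"), response)
        if key in seen:
            continue  # drop exact duplicates
        seen.add(key)
        verified.append(exp)
    return verified


raw = [
    {"task": "t1", "response": "42", "reward": 1.0},
    {"task": "t1", "response": "42", "reward": 1.0},         # duplicate
    {"task": "t2", "response": "", "reward": 0.5},            # empty response
    {"task": "t3", "response": "7", "reward": float("nan")},  # invalid reward
    {"task": "t4", "response": "ok", "reward": 0.0},
]
verified = verify_experiences(raw)
```

Only the first `t1` record and the `t4` record survive, which is the kind of reduction from Raw Experiences to Verified Experiences the diagram implies.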
