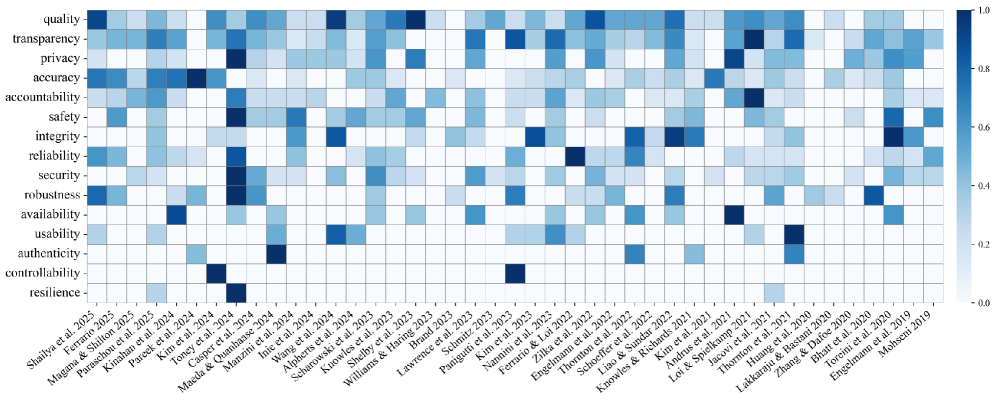

## Heatmap: Ethical Considerations in AI Research (2019-2025)

### Overview

This heatmap shows how strongly a set of AI research papers published between 2019 and 2025 address each of several ethical considerations. A color gradient encodes the degree of consideration in each paper: lighter shades of blue indicate lower consideration and darker shades of red indicate higher consideration.

### Components/Axes

* **Y-axis (Vertical):** Lists 15 ethical considerations: quality, transparency, privacy, accuracy, accountability, reliability, safety, integrity, security, robustness, availability, usability, authenticity, controllability, and resilience.

* **X-axis (Horizontal):** Lists AI research papers, identified by author(s) and year of publication, ranging from "Shalia et al. 2025" to "Mohsen 2019".

* **Color Scale (Legend):** Located on the right side of the heatmap, ranging from 0.0 (light blue) to 1.0 (dark red). The scale indicates the level of ethical consideration, with higher values representing greater consideration.

* **Grid Lines:** Horizontal and vertical lines delineate the cells of the heatmap, corresponding to the intersection of ethical considerations and research papers.
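
As a rough illustration of how a score on the color scale above could translate into a cell color, the sketch below linearly interpolates between a light blue and a dark red. The endpoint RGB values and the function names (`score_to_rgb`, `rgb_to_hex`) are assumptions for illustration; the figure's actual colormap is not specified and may, for example, pass through a neutral midpoint.

```python
# Assumed endpoint colors; the figure's real colormap is unknown.
LIGHT_BLUE = (173, 216, 230)  # stands in for a score of 0.0
DARK_RED = (139, 0, 0)        # stands in for a score of 1.0


def score_to_rgb(score):
    """Map a score to an RGB triple, clamping the score to [0.0, 1.0]."""
    t = min(1.0, max(0.0, score))
    # Interpolate each channel linearly between the two endpoints.
    return tuple(
        round(low + (high - low) * t)
        for low, high in zip(LIGHT_BLUE, DARK_RED)
    )


def rgb_to_hex(rgb):
    """Format an RGB triple as a lowercase hex color string."""
    return "#{:02x}{:02x}{:02x}".format(*rgb)
```

Calling `rgb_to_hex(score_to_rgb(s))` for each cell's score `s` would give one color per cell; scores outside the legend's range are clamped rather than rejected.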

### Detailed Analysis

The heatmap displays a matrix of color-coded cells, each representing the ethical consideration score for a specific research paper. The values are approximate, based on visual estimation of the color gradient.

Here's a breakdown of trends and approximate values, reading across the X-axis (papers) for each ethical consideration (Y-axis):

* **Quality:** Generally low consideration across all papers, ranging from approximately 0.05 to 0.3. A slight increase is observed towards the middle of the timeline (2022-2023).

* **Transparency:** Starts low (around 0.1-0.2 in 2019-2021), peaks around 0.6-0.7 for papers in 2023-2024, and then declines slightly.

* **Privacy:** Similar trend to transparency, with a peak around 0.6-0.7 in 2023-2024.

* **Accuracy:** Generally moderate consideration, fluctuating between 0.3 and 0.6.

* **Accountability:** Low to moderate consideration, with a slight increase towards 2023-2024 (0.4-0.6).

* **Reliability:** Similar to accountability, with values ranging from 0.2 to 0.5.

* **Safety:** Moderate consideration, generally between 0.4 and 0.7, with a peak around 2023.

* **Integrity:** Low to moderate consideration, similar to reliability (roughly 0.2 to 0.5).

* **Security:** Low consideration across most papers, generally below 0.3.

* **Robustness:** Low consideration, similar to security (generally below 0.3).

* **Availability:** Very low consideration, consistently below 0.2.

* **Usability:** Low consideration, generally below 0.3.

* **Authenticity:** Low consideration, similar to usability (generally below 0.3).

* **Controllability:** Low consideration, consistently below 0.3.

* **Resilience:** Very low consideration, consistently below 0.2.

**Specific Data Points (Approximate):**

* **Mohsen et al. 2019:** All ethical considerations are very low (0.0-0.2).

* **Engelmann et al. 2020:** Slightly higher consideration for transparency (around 0.3) and accuracy (around 0.3).

* **Tseng et al. 2021:** Moderate consideration for safety (around 0.5).

* **Kim et al. 2023:** High consideration for transparency, privacy, and safety (around 0.7-0.8).

* **Paeguit et al. 2023:** High consideration for transparency and privacy (around 0.7).

* **Shalia et al. 2025:** Moderate consideration for transparency and accuracy (around 0.5-0.6).

### Key Observations

* **Peak in 2023-2024:** There's a noticeable peak in the consideration of transparency, privacy, and safety around 2023-2024. This suggests a growing awareness of these ethical issues during that period.

* **Low Consideration for Availability, Usability, Authenticity, Controllability, and Resilience:** These considerations generally score below about 0.3, with availability and resilience lowest (consistently below 0.2), which suggests they are often overlooked in the papers shown. Security and robustness are similarly low.

* **Variability:** There is significant variability in ethical consideration across different research papers, even within the same year.

* **Quality is consistently low:** Quality stays low across all papers (approximately 0.05 to 0.3), although it is not the lowest factor: availability and resilience remain below 0.2.
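
Observations such as "consistently below 0.2" can be checked mechanically once the scores are available as numbers. The following is a minimal sketch, assuming the scores are held in a dictionary that maps each consideration to a list of per-paper values. The function name `summarize_rows`, the default threshold, and the example values are all hypothetical placeholders, not values read from the figure.

```python
def summarize_rows(scores, threshold=0.2):
    """Return min, max, and a consistently-low flag for each consideration.

    A row counts as consistently low when every score is below the threshold.
    """
    summary = {}
    for name, row in scores.items():
        lowest, highest = min(row), max(row)
        summary[name] = {
            "min": lowest,
            "max": highest,
            "consistently_low": highest < threshold,
        }
    return summary


# Hypothetical placeholder scores for illustration only.
example = {
    "transparency": [0.1, 0.3, 0.7],
    "availability": [0.05, 0.1, 0.15],
}
result = summarize_rows(example)
```

With these placeholder values, `availability` is flagged as consistently low and `transparency` is not, because its maximum exceeds the threshold.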

### Interpretation

The heatmap suggests that while ethical considerations are becoming increasingly important in AI research, the focus is primarily on transparency, privacy, and safety. Other crucial ethical aspects, such as availability, usability, and resilience, are often neglected. The peak in consideration around 2023-2024 could be attributed to increased public awareness of AI ethics, the release of ethical guidelines by organizations, or a growing emphasis on responsible AI development within the research community.

The data highlights a potential imbalance in ethical focus. While addressing transparency, privacy, and safety is essential, a holistic approach to AI ethics requires considering a broader range of factors to ensure the responsible and beneficial deployment of AI technologies. The consistent low scores for availability, usability, and resilience suggest a need for more research and discussion on these often-overlooked ethical dimensions.

The heatmap provides a valuable snapshot of the ethical landscape in AI research, revealing both progress and areas for improvement. It can inform researchers, policymakers, and stakeholders about the current state of ethical consideration and guide future efforts towards more responsible AI development.