## Diagram: Two-Stage Language Model Reasoning and Answer Extraction Process

### Overview

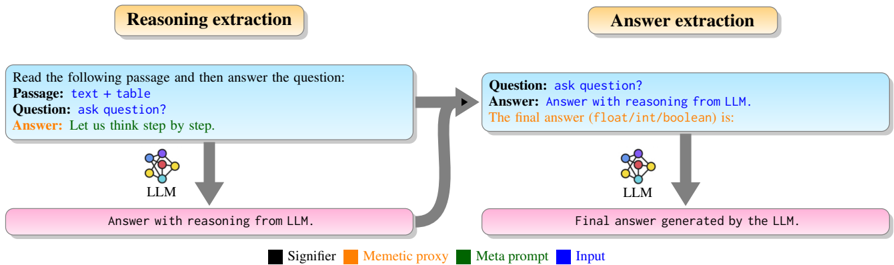

The image is a technical flowchart illustrating a two-stage process for extracting answers from a Large Language Model (LLM). The process is divided into two sequential phases: "Reasoning extraction" and "Answer extraction." Each phase involves an LLM processing a structured input to produce an intermediate or final output. A color-coded legend at the bottom defines the semantic roles of different text elements within the process boxes.

### Components/Axes

The diagram is laid out as two side-by-side columns, each headed by a title box and read from top to bottom, with a shared legend at the bottom.

**1. Header Boxes (Top):**

* **Left Header:** A rounded rectangle with a light orange fill and a black border. Contains the text: **"Reasoning extraction"**.

* **Right Header:** A rounded rectangle with a light orange fill and a black border. Contains the text: **"Answer extraction"**.

**2. Process Flow - Left Section (Reasoning Extraction):**

* **Input Box (Blue Border):** A rectangular box with a light blue fill and a blue border. Positioned in the upper-left quadrant.

    * **Text Content:**

        * "Read the following passage and then answer the question:"

        * "Passage: **text + table**" (The phrase "text + table" is in blue font).

        * "Question: **ask question?**" (The phrase "ask question?" is in blue font).

        * "Answer: **Let us think step by step.**" (The phrase "Let us think step by step." is in orange font).

* **LLM Icon:** A stylized icon of a neural network or brain, labeled **"LLM"** below it. Positioned directly below the center of the Input Box.

* **Arrow (Downward):** A thick, gray arrow points from the Input Box to the LLM icon.

* **Output Box (Pink Fill):** A rectangular box with a light pink fill and a pink border. Positioned at the bottom-left.

    * **Text Content:** "Answer with reasoning from LLM." (The text is in black font).

* **Arrow (Downward):** A thick, gray arrow points from the LLM icon to this Output Box.

**3. Process Flow - Right Section (Answer Extraction):**

* **Input Box (Blue Border):** A rectangular box with a light blue fill and a blue border. Positioned in the upper-right quadrant.

    * **Text Content:**

        * "Question: **ask question?**" (The phrase "ask question?" is in blue font).

        * "Answer: **Answer with reasoning from LLM.**" (The phrase "Answer with reasoning from LLM." is in black font).

        * "The final answer (float/int/boolean) is:" (This line is in green font).

* **LLM Icon:** An identical LLM icon, labeled **"LLM"** below it. Positioned directly below the center of this Input Box.

* **Arrow (Downward):** A thick, gray arrow points from the Input Box to the LLM icon.

* **Output Box (Pink Fill):** A rectangular box with a light pink fill and a pink border. Positioned at the bottom-right.

    * **Text Content:** "Final answer generated by the LLM." (The text is in black font).

* **Arrow (Downward):** A thick, gray arrow points from the LLM icon to this Output Box.

**4. Connecting Flow:**

* A thick, gray, curved arrow originates from the right side of the **left Output Box** ("Answer with reasoning from LLM.") and points to the left side of the **right Input Box**. This indicates the output of the first stage becomes part of the input for the second stage.

**5. Legend (Bottom Center):**

A horizontal legend with four colored squares and corresponding labels:

* **Black Square:** Label is **"Signifier"**.

* **Orange Square:** Label is **"Memetic proxy"**.

* **Green Square:** Label is **"Meta prompt"**.

* **Blue Square:** Label is **"Input"**.

### Detailed Analysis

The diagram defines a specific prompting strategy for an LLM, broken into two distinct steps:

**Stage 1: Reasoning Extraction**

* **Input:** A composite prompt containing:

    * A **meta-prompt** (green): "Read the following passage and then answer the question:"

    * The **input data** (blue): A passage described as "text + table" and a question "ask question?".

    * A **memetic proxy** (orange): The specific instruction "Let us think step by step." This is a known prompting technique to elicit chain-of-thought reasoning.

* **Process:** The LLM processes this combined input.

* **Output:** The LLM generates a response that includes its reasoning process ("Answer with reasoning from LLM.").

**Stage 2: Answer Extraction**

* **Input:** A new prompt constructed from:

    * The original **input question** (blue): "ask question?"

    * The **output from Stage 1** (black, acting as a "Signifier"): "Answer with reasoning from LLM."

    * A new **meta-prompt** (green): "The final answer (float/int/boolean) is:" This explicitly instructs the LLM to distill the final answer into a specific data type.

* **Process:** The LLM processes this second, more focused prompt.

* **Output:** The LLM generates the concise, final answer ("Final answer generated by the LLM.").
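The two stages described above can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the diagram author's implementation: the `llm` callable, the function name `two_stage_answer`, and the template constants are hypothetical, and the newline layout between prompt lines is assumed. Only the quoted prompt strings come from the diagram.

```python
from typing import Callable, Tuple

# Stage 1 prompt: wording taken from the left Input Box of the diagram.
# The line breaks between the four lines are an assumption.
REASONING_TEMPLATE = (
    "Read the following passage and then answer the question:\n"
    "Passage: {passage}\n"
    "Question: {question}\n"
    "Answer: Let us think step by step."
)

# Stage 2 prompt: wording taken from the right Input Box of the diagram.
EXTRACTION_TEMPLATE = (
    "Question: {question}\n"
    "Answer: {reasoning}\n"
    "The final answer (float/int/boolean) is:"
)


def two_stage_answer(
    llm: Callable[[str], str],  # hypothetical: any function mapping prompt -> completion
    passage: str,
    question: str,
) -> Tuple[str, str]:
    """Run reasoning extraction, then answer extraction.

    Returns (reasoning, final_answer), both as raw strings.
    """
    # Stage 1: elicit an answer with step-by-step reasoning.
    reasoning = llm(REASONING_TEMPLATE.format(passage=passage, question=question))
    # Stage 2: feed the reasoning back in and ask only for the final answer.
    final = llm(EXTRACTION_TEMPLATE.format(question=question, reasoning=reasoning))
    return reasoning, final.strip()
```

Passing the model as a plain callable keeps the sketch independent of any particular client library; whatever function sends a prompt and returns text can be supplied as `llm`.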

### Key Observations

1. **Sequential Dependency:** The process is strictly sequential. The second stage cannot begin without the output of the first stage.

2. **Role of Color Coding:** The legend is critical for understanding the function of each text string. Blue text represents the core user inputs (data and question). Orange text is a specific prompting technique ("memetic proxy"). Green text provides structural or formatting instructions ("meta prompt"). Black text represents outputs or signifiers that bridge the stages.

3. **Prompt Engineering Pattern:** This illustrates a "generate-then-extract" (or "reason-then-decide") pattern. It separates the open-ended reasoning task from the constrained final answer extraction task, likely to improve accuracy and to make the final output easier to control.

4. **Data Type Specification:** The second stage's meta-prompt explicitly requests a structured output type (float/int/boolean), indicating this pipeline is designed for tasks requiring precise, machine-readable answers.
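If the Stage 2 output is meant to be machine-readable, downstream code still has to convert the returned text into a float, int, or boolean. The diagram does not show this step; the helper below is a hypothetical sketch of one way to do it, and the accepted spellings ("yes"/"no", thousands separators) are assumptions rather than part of the source.

```python
import re
from typing import Union


def parse_final_answer(raw: str) -> Union[bool, int, float]:
    """Convert a Stage 2 completion into a bool, int, or float.

    Hypothetical helper: the parsing rules here are illustrative assumptions.
    """
    # Normalize whitespace, a trailing period, and case.
    text = raw.strip().rstrip(".").strip().lower()
    if text in {"true", "yes"}:
        return True
    if text in {"false", "no"}:
        return False
    # Take the first number in the text, allowing a sign, commas, and decimals.
    match = re.search(r"-?\d[\d,]*(?:\.\d+)?", text)
    if match is None:
        raise ValueError(f"no float/int/boolean found in {raw!r}")
    number = match.group(0).replace(",", "")
    return float(number) if "." in number else int(number)
```

Raising on unparseable text, rather than returning a default, makes a failed extraction visible to the caller instead of silently producing a wrong value.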

### Interpretation

This diagram outlines a question-answering pipeline built around an LLM. It addresses a common challenge: an LLM may reason correctly but bury the final answer in verbose text, or it may give a wrong answer when asked to respond directly without intermediate reasoning.

The two-stage process acts as a form of **cognitive scaffolding**:

1. **Stage 1 (Reasoning Extraction)** gives the model space to "think aloud," leveraging its ability to process complex information (text + table) and follow a chain-of-thought directive ("step by step"). Because the model writes out intermediate steps before committing to an answer, the answer can build on those steps instead of being produced in a single jump.

2. **Stage 2 (Answer Extraction)** then acts as a focused "editor" or "summarizer." It takes the model's own reasoning as context and is given a strict, simple instruction to produce only the final answer in a predefined format. This second prompt is narrower in scope and is presumably easier for the model to follow correctly.

The color-coded roles highlight the importance of **prompt structure**. The "memetic proxy" (orange) is a behavioral trigger, the "meta prompts" (green) are control instructions, and the "input" (blue) is the raw data. By separating these elements, the pipeline makes the prompting strategy explicit, modular, and potentially easier to debug or optimize. The overall goal is to transform an LLM from a conversational agent into a reliable component within a larger technical system that requires structured data outputs.
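One way to make this modularity concrete is to store each prompt line together with its legend role, so that a single role can be swapped without touching the rest. The sketch below is an illustration only: the `Segment` class and the `render` and `swap_role` helpers are hypothetical names, the role assignments follow the Detailed Analysis section, and keeping the "Passage:", "Question:", and "Answer:" labels inside their segments is an assumption.

```python
from dataclasses import dataclass, replace
from typing import List


@dataclass(frozen=True)
class Segment:
    role: str  # legend label: "Signifier", "Memetic proxy", "Meta prompt", or "Input"
    text: str


def render(segments: List[Segment]) -> str:
    """Join the segments into one prompt, one segment per line."""
    return "\n".join(s.text for s in segments)


def swap_role(segments: List[Segment], role: str, new_text: str) -> List[Segment]:
    """Return a copy in which every segment with `role` carries `new_text`."""
    return [replace(s, text=new_text) if s.role == role else s for s in segments]


# Stage 1 prompt from the diagram, tagged by role.
STAGE_1 = [
    Segment("Meta prompt", "Read the following passage and then answer the question:"),
    Segment("Input", "Passage: text + table"),
    Segment("Input", "Question: ask question?"),
    Segment("Memetic proxy", "Answer: Let us think step by step."),
]

# Try a different (made-up) trigger phrase without editing the other segments.
VARIANT = swap_role(STAGE_1, "Memetic proxy", "Answer: Let us work through this carefully.")
```

Calling `render(STAGE_1)` and `render(VARIANT)` yields two prompts that differ only in the last line, which is the kind of controlled comparison that role separation makes straightforward.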