TECHNICAL ASSET FINGERPRINT

b37253a2b5cb92f11ce56887



EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## System Architecture Diagram: Memory and Processing Unit Interaction

### Overview

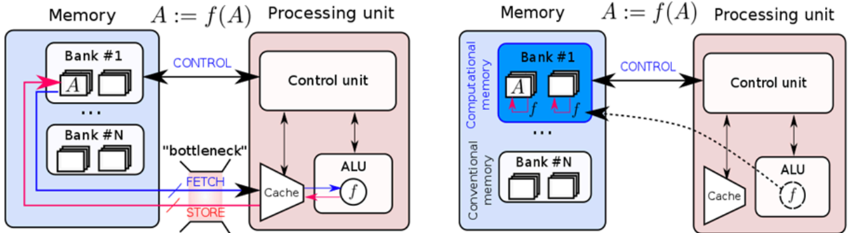

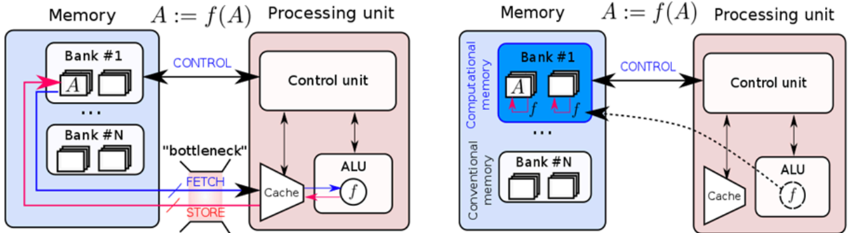

The image presents two diagrams illustrating the interaction between memory and a processing unit. The left diagram depicts a conventional memory architecture, while the right diagram shows a computational memory architecture. Both diagrams highlight the flow of data and control signals between the memory banks and the processing unit components.

### Components/Axes

**Left Diagram (Conventional Memory):**

* **Memory:** Labeled "Memory" at the top. Contains two memory banks: "Bank #1" and "Bank #N". Each bank contains multiple memory locations represented by small rectangles.

* The memory banks are enclosed in a light blue rounded rectangle.

* **Processing Unit:** Labeled "Processing unit" at the top. Contains a "Control unit", "ALU" (Arithmetic Logic Unit), and "Cache".

* The processing unit is enclosed in a light red rounded rectangle.

* **Data Flow:**

  * **FETCH:** Data is fetched from memory to the cache via a "bottleneck". The "FETCH" label is associated with a red arrow pointing from the memory to the cache.

  * **STORE:** Data is stored from the cache to memory. The "STORE" label is associated with a pink arrow pointing from the cache to the memory.

  * **Control:** Control signals are sent from the control unit to the memory. The "CONTROL" label is associated with a blue arrow pointing from the control unit to Bank #1.

  * Data flows between Bank #1 and Bank #N via pink and blue arrows.

* **Equation:** "A := f(A)" is displayed above the processing unit, indicating that the processing unit applies a function 'f' to data 'A'.

**Right Diagram (Computational Memory):**

* **Memory:** Labeled "Memory" at the top. Contains two memory banks: "Bank #1" and "Bank #N". Each bank contains multiple memory locations represented by small rectangles.

* Bank #1 is labeled as "Computational memory" and is enclosed in a blue rounded rectangle.

* Bank #N is labeled as "Conventional memory" and is enclosed in a white rounded rectangle.

* **Processing Unit:** Labeled "Processing unit" at the top. Contains a "Control unit", "ALU" (Arithmetic Logic Unit), and "Cache".

* The processing unit is enclosed in a light red rounded rectangle.

* **Data Flow:**

  * The function 'f' is applied directly within the computational memory bank (Bank #1), indicated by small pink arrows and 'f' labels within the bank.

  * **Control:** Control signals are sent from the control unit to the memory. The "CONTROL" label is associated with a blue arrow pointing from the control unit to Bank #1.

  * A dashed black arrow indicates a potential data flow path from the computational memory to the cache.

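* **Equation:** "A := f(A)" is displayed above the processing unit, denoting the computation being performed: a function 'f' is applied to data 'A' and the result replaces 'A'. In this diagram, 'f' is applied inside Bank #1 rather than in the ALU.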

### Detailed Analysis

**Left Diagram (Conventional Memory):**

* Data 'A' is stored in Bank #1.

* The "bottleneck" suggests a limitation in the data transfer rate between memory and cache.

* The ALU performs the function 'f' on data fetched from memory.

* The control unit manages the data flow and operations.

**Right Diagram (Computational Memory):**

* Data 'A' is stored in Bank #1 (Computational Memory).

* The function 'f' is applied directly within the memory bank, reducing the need to transfer data to the ALU for processing.

* The dashed arrow suggests that the processed data can be sent to the cache if needed.

* Bank #N (Conventional Memory) operates similarly to the memory banks in the left diagram.

### Key Observations

* The primary difference between the two diagrams is the location where the function 'f' is applied. In the conventional memory architecture, 'f' is applied in the ALU within the processing unit. In the computational memory architecture, 'f' is applied directly within the memory bank.

* The "bottleneck" label in the conventional memory diagram highlights a potential performance limitation.

* The computational memory architecture aims to improve performance by reducing data transfer between memory and the processing unit.

### Interpretation

The diagrams illustrate the evolution from a conventional memory architecture to a computational memory architecture. The conventional architecture requires data to be transferred to the processing unit for computation, which can be a bottleneck. The computational memory architecture aims to overcome this bottleneck by performing computations directly within the memory bank. This reduces data transfer, potentially leading to improved performance and energy efficiency. The dashed arrow in the computational memory diagram suggests that the processed data can still be accessed by the processing unit if needed, providing flexibility in the system design.
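The contrast described above can be made concrete with a toy model that counts how many words cross the memory/processing-unit boundary for one `A := f(A)` update. This is a minimal sketch, not a model of any real hardware: the `Bus` class, both update functions, and the choice of `double` as `f` are hypothetical names introduced only for illustration.

```python
from typing import Callable, List


class Bus:
    """Hypothetical counter for words crossing the memory/processor boundary."""

    def __init__(self) -> None:
        self.words_moved = 0

    def transfer(self, words: List[int]) -> List[int]:
        # Every word that crosses the boundary is counted once.
        self.words_moved += len(words)
        return list(words)


def conventional_update(bank: List[int], f: Callable[[int], int], bus: Bus) -> None:
    """A := f(A) with f evaluated in the ALU: FETCH, compute, STORE."""
    cache = bus.transfer(bank)        # FETCH: memory -> cache
    result = [f(x) for x in cache]    # the ALU applies f
    bank[:] = bus.transfer(result)    # STORE: cache -> memory


def computational_update(bank: List[int], f: Callable[[int], int], bus: Bus) -> None:
    """A := f(A) with f evaluated inside the bank: nothing crosses the bus."""
    for i, x in enumerate(bank):
        bank[i] = f(x)


def double(x: int) -> int:
    # Stand-in for the diagram's unspecified function f.
    return 2 * x


conv_bus, comp_bus = Bus(), Bus()
a_conv = [1, 2, 3, 4]
a_comp = [1, 2, 3, 4]
conventional_update(a_conv, double, conv_bus)
computational_update(a_comp, double, comp_bus)
print(a_conv, conv_bus.words_moved)
print(a_comp, comp_bus.words_moved)
```

Both paths leave the same values in the bank; only the traffic counter differs, which is the point the "bottleneck" label makes in the left diagram.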


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Diagram: Processing Unit and Memory Interaction - Conventional vs. Computational

### Overview

The image presents a comparative diagram illustrating two architectures for processing unit and memory interaction. The left side depicts a conventional architecture, while the right side shows a computational memory architecture. Both diagrams represent the flow of data and control signals during a computation defined as A := f(A), where A is a variable and f is a function.

### Components/Axes

The diagram consists of two main sections, each representing an architecture. Each section contains:

* **Memory:** Divided into banks labeled "Bank #1" through "Bank #N".

* **Processing Unit:** Comprising a Control Unit, Arithmetic Logic Unit (ALU), and Cache.

* **Connections:** Arrows indicating data and control flow. Labels on these arrows include "CONTROL", "FETCH", "STORE", and "bottleneck".

* **Mathematical Notation:** "A := f(A)" appears above each diagram, representing the computation being performed.

* **Data Representation:** The variable 'A' is shown within the memory banks. In the computational memory architecture, the function 'f' is also stored within the memory bank alongside 'A'.

### Detailed Analysis

**Left Diagram (Conventional Architecture):**

* **Memory:** The memory is divided into 'N' banks. The variable 'A' is located in Bank #1. Data flow between the memory and the processing unit is represented by pink arrows for 'FETCH' and 'STORE', and a black arrow for 'CONTROL'.

* **Processing Unit:** The 'FETCH' operation retrieves data from memory into the Cache. The Cache then passes the data to the ALU, which applies the function 'f' to it. The result is then written back to memory via 'STORE'. The 'bottleneck' label is placed near the 'FETCH' and 'STORE' arrows, indicating a potential performance limitation.

* **ALU:** The output of the ALU is labeled 'f'.

**Right Diagram (Computational Memory Architecture):**

* **Memory:** The memory is divided into 'N' banks. Bank #1 contains both the variable 'A' and the function 'f'. The memory is further labeled as "Computational memory" and "Conventional memory".

* **Processing Unit:** The 'CONTROL' signal is sent from the Control Unit to the memory. The ALU receives the function 'f' directly from the memory (Bank #1) via a dotted arrow, bypassing the Cache.

* **ALU:** The output of the ALU is labeled 'f' with a circular arrow around it, suggesting an iterative process or feedback loop.

### Key Observations

* The computational memory architecture aims to reduce the "bottleneck" associated with data transfer between memory and the processing unit by storing the function 'f' directly in memory alongside the data 'A'.

* The dotted arrow in the computational memory architecture indicates a direct connection between the memory and the ALU for the function 'f', bypassing the Cache.

* The conventional architecture relies heavily on the Cache for intermediate data and function storage, potentially leading to performance limitations.

### Interpretation

The diagram illustrates a shift in computing paradigms from a traditional von Neumann architecture (conventional) to a computational memory architecture. The conventional architecture suffers from the "memory wall" problem, where the speed of data transfer between the processor and memory limits overall performance. The computational memory architecture attempts to address this by bringing computation closer to the data, reducing the need for frequent data transfers.

The presence of 'f' within the memory bank in the computational architecture suggests that the processing is partially or fully offloaded to the memory itself. This is a key characteristic of in-memory computing and near-data processing. The dotted line represents a more efficient pathway for the function 'f' to reach the ALU, potentially improving performance.

The diagram highlights the potential benefits of integrating computation and memory: reduced latency, increased bandwidth, and improved energy efficiency. The 'A := f(A)' notation emphasizes the iterative nature of many computations, and the computational memory architecture is designed to optimize this iterative process.
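The latency argument can be sketched as a back-of-the-envelope cost model. Everything here is an assumption for illustration: the two function names, the additive form of the model, and all numeric values are placeholders rather than measurements of any device.

```python
def conventional_time(n_bytes: float, bus_bytes_per_s: float,
                      n_ops: float, alu_ops_per_s: float) -> float:
    """Time for A := f(A) when A is fetched, processed in the ALU, and stored."""
    transfer = 2 * n_bytes / bus_bytes_per_s   # FETCH plus STORE
    compute = n_ops / alu_ops_per_s
    return transfer + compute


def in_memory_time(n_ops: float, mem_ops_per_s: float) -> float:
    """Time for A := f(A) when f runs inside the memory bank (no bus traffic)."""
    return n_ops / mem_ops_per_s


# Placeholder values, chosen only to make the arithmetic easy to follow.
n_bytes = 1e9           # size of A
n_ops = 1e9             # one operation per byte
bus_bytes_per_s = 1e10  # assumed memory bus bandwidth
alu_ops_per_s = 1e11    # assumed ALU throughput
mem_ops_per_s = 1e10    # assumed (slower) in-memory throughput

t_conv = conventional_time(n_bytes, bus_bytes_per_s, n_ops, alu_ops_per_s)
t_mem = in_memory_time(n_ops, mem_ops_per_s)
print(f"conventional: {t_conv:.3f} s, in-memory: {t_mem:.3f} s")
```

With these placeholders the transfer term dominates the conventional path even though its ALU is assumed to be ten times faster than the in-memory units. Different assumptions can reverse the result, so the model shows a trade-off, not a guaranteed speedup.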


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Comparison of Traditional vs. Computational Memory Architectures

### Overview

The image displays a side-by-side technical diagram comparing two computer architecture models. The left side illustrates a traditional von Neumann architecture with a clear memory-processing bottleneck. The right side presents an alternative architecture where computation is integrated directly into the memory unit ("Computational memory"), aiming to alleviate that bottleneck. The diagram uses block elements, arrows, and labels to show components and data/control flow.

### Components/Axes

The diagram is divided into two primary sections, each containing two main blocks:

**Left Diagram (Traditional Architecture):**

1. **Memory Block (Left, light blue background):**

* Label: `Memory`

* Equation: `A := f(A)` (positioned above the block)

* Contains: Multiple memory banks labeled `Bank #1` through `Bank #N`.

* `Bank #1` contains two sub-elements: a box labeled `A` and an empty box.

2. **Processing Unit Block (Right, light pink background):**

* Label: `Processing unit`

* Contains:

* `Control unit` (top)

* `ALU` (Arithmetic Logic Unit, bottom right) containing a function symbol `f`.

* `Cache` (bottom left, trapezoid shape).

3. **Data/Control Flow Arrows:**

* **CONTROL:** A double-headed arrow between the `Memory` block and the `Control unit`.

* **FETCH:** A blue arrow from `Bank #1` (specifically from box `A`) to the `Cache`.

* **STORE:** A red arrow from the `Cache` back to `Bank #1` (specifically to box `A`).

* A dotted arrow from the `Cache` to the `ALU`.

* A label `"bottleneck"` with lines pointing to the FETCH and STORE arrows.

**Right Diagram (Computational Memory Architecture):**

1. **Memory Block (Left, light blue background):**

* Label: `Memory`

* Equation: `A := f(A)` (positioned above the block)

* Internally divided into two regions:

* **Top (Darker Blue):** Labeled `Computational memory` vertically on its left edge. Contains `Bank #1`.

* **Bottom (Light Blue):** Labeled `Conventional memory` vertically on its left edge. Contains `Bank #N`.

* `Bank #1` (within Computational memory) contains: a box labeled `A`, a box labeled `f`, and a box labeled `f` (with a dotted outline).

2. **Processing Unit Block (Right, light pink background):**

* Label: `Processing unit`

* Contains the same components as the left diagram: `Control unit`, `ALU` (with `f`), and `Cache`.

3. **Data/Control Flow Arrows:**

* **CONTROL:** A double-headed arrow between the `Memory` block and the `Control unit`.

* **Direct Computational Paths:** Two red arrows originate from the `f` boxes within `Bank #1` (Computational memory) and point directly to the `ALU`, bypassing the `Cache`.

* A dotted arrow from the `ALU` back to the `f` box with the dotted outline in `Bank #1`.

### Detailed Analysis

* **Component Isolation - Left Diagram (Traditional):**

* **Header Region:** Contains the title `Memory` and the equation `A := f(A)`.

* **Main Chart Region:** Shows the separation between Memory and Processing Unit. The critical path for data (`A`) involves leaving the memory bank (`Bank #1`), traveling via the `FETCH` path to the `Cache`, being processed by the `ALU`, and returning via the `STORE` path. This round-trip is explicitly labeled as the `"bottleneck"`.

* **Footer Region:** Not distinctly present; the diagram is contained within the two main blocks.

* **Component Isolation - Right Diagram (Computational Memory):**

* **Header Region:** Same as left (`Memory`, `A := f(A)`).

* **Main Chart Region:** The memory block is now heterogeneous. `Bank #1` is reclassified as `Computational memory` and now houses not just data (`A`) but also processing elements (`f`). The `ALU` in the processing unit now has direct, dedicated connections (red arrows) to these in-memory processing elements. The `Cache` appears to be bypassed for these operations. The `Conventional memory` (`Bank #N`) remains for standard storage.

* **Spatial Grounding:** The `Computational memory` label is positioned vertically along the left edge of the top, darker blue section of the Memory block. The direct red arrows from `Bank #1` to the `ALU` are the most visually prominent change, cutting across the center of the diagram.

### Key Observations

1. **Architectural Shift:** The core difference is the relocation of the function `f` from being solely within the `ALU` (left) to also being embedded within `Bank #1` of the memory (right).

2. **Bottleneck Mitigation:** The left diagram explicitly identifies the data movement between memory and processor as a `"bottleneck"`. The right diagram's design, with direct in-memory computation paths, is a visual solution to this problem.

3. **Memory Heterogeneity:** The right diagram introduces the concept of specialized memory (`Computational memory`) coexisting with `Conventional memory` within the same memory unit.

4. **Data Flow Simplification:** The right diagram eliminates the need for the `FETCH` and `STORE` cycle through the `Cache` for operations performed by the embedded `f` units, as shown by the direct red arrows.

### Interpretation

This diagram is a conceptual illustration of the **"Processing-in-Memory" (PIM)** or **"Computational Memory"** paradigm, proposed to overcome the **von Neumann bottleneck**.

* **What it demonstrates:** The left side represents the classic von Neumann architecture where the CPU (Processing unit) and memory are separate. Data (`A`) must be constantly moved (`FETCH`/`STORE`) to the CPU for processing (`f`), creating a performance and energy bottleneck. The right side proposes integrating simple processing elements (`f`) directly into the memory array (`Bank #1`). This allows certain operations (like `A := f(A)`) to be performed *where the data resides*, drastically reducing data movement.

* **Relationship between elements:** The `Control unit` and `ALU` in the Processing unit remain, but their role changes. The `Control unit` still orchestrates operations. The `ALU` may handle more complex tasks, while the embedded `f` units handle specific, frequent operations. The direct arrows symbolize low-latency, high-bandwidth connections that avoid moving data across the memory bus.

* **Notable Implications:** This architecture is particularly relevant for data-intensive workloads like machine learning inference, database operations, and scientific computing, where moving large datasets is the primary cost. The diagram suggests a future where the line between "memory" and "processor" blurs for efficiency. The retention of `Conventional memory` (`Bank #N`) indicates a hybrid approach, not a complete replacement.
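The hybrid arrangement described above, where a control unit chooses between embedded `f` units and the ALU path, might be sketched as follows. All class names, method names, and the operation names `inc` and `square` are hypothetical and do not come from the diagram.

```python
from typing import Callable, Dict, List

Op = Callable[[int], int]


class ConventionalBank:
    """Plain storage: data must be read out to be processed."""

    def __init__(self, data: List[int]) -> None:
        self.data = list(data)

    def read(self) -> List[int]:
        return list(self.data)

    def write(self, values: List[int]) -> None:
        self.data = list(values)


class ComputationalBank(ConventionalBank):
    """Storage plus a small fixed set of embedded operations (the `f` units)."""

    def __init__(self, data: List[int], ops: Dict[str, Op]) -> None:
        super().__init__(data)
        self.ops = dict(ops)

    def supports(self, op_name: str) -> bool:
        return op_name in self.ops

    def apply(self, op_name: str) -> None:
        f = self.ops[op_name]
        self.data = [f(x) for x in self.data]  # computed where the data lives


class ControlUnit:
    """Routes each update to the bank itself or to the ALU path."""

    def __init__(self, alu_ops: Dict[str, Op]) -> None:
        self.alu_ops = dict(alu_ops)
        self.log: List[str] = []

    def update(self, bank: ConventionalBank, op_name: str) -> None:
        if isinstance(bank, ComputationalBank) and bank.supports(op_name):
            bank.apply(op_name)
            self.log.append("in-memory")
        else:
            f = self.alu_ops[op_name]
            bank.write([f(x) for x in bank.read()])  # FETCH, ALU, STORE
            self.log.append("fetch-store")


def inc(x: int) -> int:
    return x + 1


def square(x: int) -> int:
    return x * x


bank_1 = ComputationalBank([1, 2, 3], {"inc": inc})
bank_n = ConventionalBank([4, 5, 6])
cu = ControlUnit({"inc": inc, "square": square})

cu.update(bank_1, "inc")     # supported by the embedded unit
cu.update(bank_1, "square")  # not embedded, so it falls back to the ALU
cu.update(bank_n, "inc")     # conventional bank always uses the ALU path
print(bank_1.data, bank_n.data, cu.log)
```

The fallback branch mirrors the observation that the `ALU` may still handle tasks the embedded units do not cover, while `Bank #N` keeps behaving as ordinary storage.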


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Diagram: Memory and Processing Unit Architectures Comparison

### Overview

The image presents two side-by-side diagrams comparing memory and processing unit architectures. The left diagram illustrates a conventional architecture with a clear separation between memory banks and processing units, while the right diagram shows a computational memory architecture with integrated processing capabilities. Both diagrams include control units, ALUs (Arithmetic Logic Units), and memory banks, but differ in their data flow and computational capabilities.

### Components/Axes

**Left Diagram (Conventional Architecture):**

- **Memory Section**:

- Contains blocks labeled "Bank #1" through "Bank #N", each with two square elements.

- Labels: "Memory", "Bank #1", "Bank #N".

- **Processing Unit Section**:

- Contains "Control unit" and "ALU" blocks.

- Labels: "Processing unit", "Control unit", "ALU".

- **Data Flow**:

- Arrows labeled "CONTROL" (blue) connect memory banks to the control unit.

- Arrows labeled "FETCH" (blue) and "STORE" (red) connect the cache to the ALU.

- A "bottleneck" label (red) highlights the fetch/store pathway.

- **Function Notation**: "A := f(A)" appears above the processing unit.

**Right Diagram (Computational Memory Architecture):**

- **Memory Section**:

- Contains "Computational memory" with "Bank #1" to "Bank #N" blocks.

- Labels: "Computational memory", "Bank #1", "Bank #N".

- **Processing Unit Section**:

- Contains "Control unit" and "ALU" blocks.

- Labels: "Processing unit", "Control unit", "ALU".

- **Data Flow**:

- Arrows labeled "CONTROL" (blue) connect computational memory to the control unit.

- Dashed arrows labeled "f" (function application) connect memory banks directly to the ALU.

- No explicit "bottleneck" label.

- **Function Notation**: "A := f(A)" appears above the processing unit.

**Shared Elements**:

- Both diagrams use color-coded arrows:

- Blue: "CONTROL" signals.

- Red: "STORE" operations (left diagram only).

- Pink: "bottleneck" highlight (left diagram only).

- Both include "Cache" blocks connected to the ALU.

### Detailed Analysis

**Left Diagram**:

- Memory banks are isolated from processing units.

- Data must pass through the control unit before reaching the ALU.

- Fetch/store operations create a bottleneck, indicated by the red label and thicker arrow.

- Function application (f(A)) occurs after data retrieval from memory.

**Right Diagram**:

- Memory banks are labeled "Computational memory," implying integrated computation.

- Dashed arrows labeled "f" suggest in-memory computation (A := f(A)).

- No explicit bottleneck, as computation occurs closer to memory.

- Direct data flow from memory to ALU via function application.

### Key Observations

1. **Bottleneck Elimination**: The right diagram removes the fetch/store bottleneck present in the left diagram.

2. **In-Memory Computation**: The right diagram introduces function application (f) directly in memory banks, enabling computation without data movement.

3. **Architectural Integration**: Computational memory in the right diagram blurs the line between storage and processing.

4. **Control Unit Role**: Both architectures retain a central control unit, but its role differs:

- Left: Manages data flow between isolated components.

- Right: Coordinates integrated memory-processing operations.

### Interpretation

The diagrams contrast traditional von Neumann architectures (left) with emerging computational memory designs (right). The left diagram's bottleneck highlights the performance limitations of separating memory and processing. The right diagram's integration of computation into memory banks suggests:

- Reduced latency through in-memory operations.

- Lower energy consumption by minimizing data movement.

- Potential for parallel computation across memory banks.

This architectural shift aligns with trends in neuromorphic and in-memory computing, where processing units are embedded within memory hierarchies to address the "memory wall" problem. The function notation (f(A)) implies support for complex operations directly in memory, which could enable applications like AI/ML workloads with reduced data transfer overhead.
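The potential for parallel computation across memory banks can be illustrated with a small sketch in which every bank updates its own contents independently. The function names are hypothetical, and the thread pool only stands in for banks operating concurrently; it says nothing about how real computational memory schedules work.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List


def update_bank(bank: List[int], f: Callable[[int], int]) -> None:
    """Apply A := f(A) to one bank, touching only that bank's data."""
    for i, x in enumerate(bank):
        bank[i] = f(x)


def update_all_banks(banks: List[List[int]], f: Callable[[int], int]) -> None:
    """Issue the same update to every bank; banks do not exchange data."""
    with ThreadPoolExecutor(max_workers=max(1, len(banks))) as pool:
        # list() forces completion and re-raises any worker exception.
        list(pool.map(lambda bank: update_bank(bank, f), banks))


banks = [[1, 2], [3, 4], [5, 6]]
update_all_banks(banks, lambda x: x + 10)
print(banks)
```

Because each task touches only its own bank, no locking is needed, which reflects the idea that in-memory operations on separate banks need not contend for a shared bus.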
