## Performance vs. Recurrence at Test-Time Line Chart

### Overview

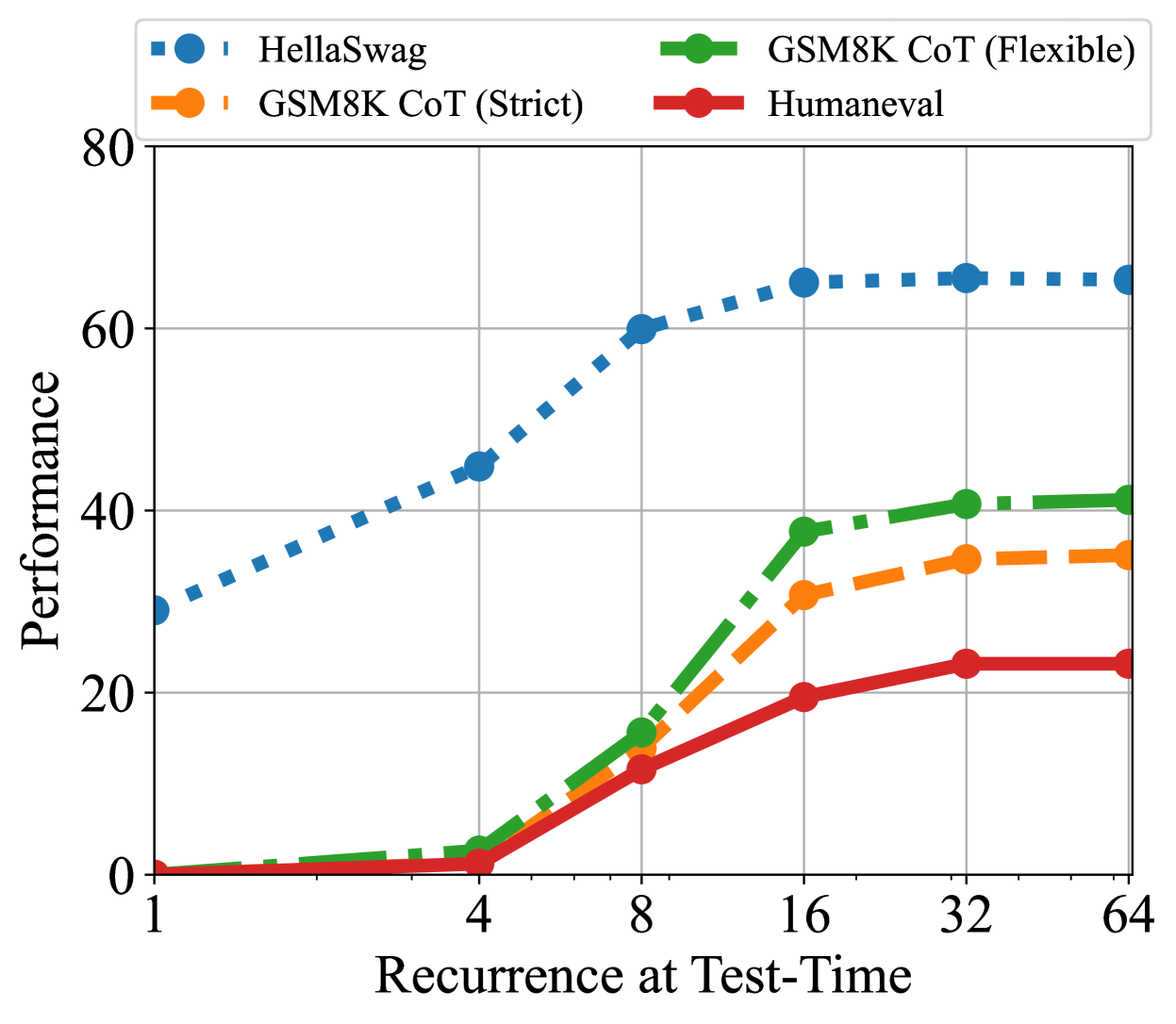

The image is a line chart plotting "Performance" (y-axis) against "Recurrence at Test-Time" (x-axis) for four series: HellaSwag, Humaneval, and GSM8K CoT scored two ways (Strict and Flexible). It shows how performance on each changes as the number of recurrence steps at test time increases. The x-axis uses a logarithmic scale (base 2), while the y-axis is linear.

### Components/Axes

* **X-Axis:** Labeled "Recurrence at Test-Time". Major tick marks and labels are at values: 1, 4, 8, 16, 32, 64.

* **Y-Axis:** Labeled "Performance". The scale runs from 0 to 80, with major grid lines at intervals of 20 (0, 20, 40, 60, 80).

* **Legend:** Positioned at the top of the chart, centered horizontally. It contains four entries:
    1. **HellaSwag:** Blue square marker, blue dotted line.
    2. **GSM8K CoT (Strict):** Orange circle marker, orange dashed line.
    3. **GSM8K CoT (Flexible):** Green circle marker, green dash-dot line.
    4. **Humaneval:** Red circle marker, red solid line.
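Because the x-axis is logarithmic (base 2), equal horizontal distances correspond to doublings rather than to equal increments. The short Python sketch below illustrates this for the listed tick labels. The names `TICKS`, `log2_positions`, and `spacings` are illustrative only, and the sketch assumes the axis positions are exactly the base-2 logarithm of each tick value.

```python
import math

# Tick labels as listed for the x-axis (the value 2 has no labelled tick).
TICKS = [1, 4, 8, 16, 32, 64]


def log2_positions(ticks):
    """Horizontal position of each tick on a base-2 logarithmic axis."""
    return [math.log2(t) for t in ticks]


def spacings(positions):
    """Distance between each pair of neighbouring ticks."""
    return [b - a for a, b in zip(positions, positions[1:])]


positions = log2_positions(TICKS)
print(positions)            # [0.0, 2.0, 3.0, 4.0, 5.0, 6.0]
print(spacings(positions))  # [2.0, 1.0, 1.0, 1.0, 1.0]
```

The first gap is twice as wide as the others because the step from 1 to 4 spans two doublings, which matters when estimating values between ticks by eye.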

### Detailed Analysis

**Data Series Trends and Approximate Values:**

1. **HellaSwag (Blue, Dotted Line):**
    * **Trend:** Starts highest, rises steeply through x=8, then slows and plateaus after x=16.
    * **Data Points (Approximate):**
        * x=1: y ≈ 30
        * x=4: y ≈ 45
        * x=8: y ≈ 60
        * x=16: y ≈ 65
        * x=32: y ≈ 66
        * x=64: y ≈ 66

2. **GSM8K CoT (Flexible) (Green, Dash-Dot Line):**
    * **Trend:** Starts near zero, remains low through x=4, then exhibits a very steep increase between x=4 and x=16, followed by a slower rise to a plateau.
    * **Data Points (Approximate):**
        * x=1: y ≈ 0
        * x=4: y ≈ 2
        * x=8: y ≈ 16
        * x=16: y ≈ 38
        * x=32: y ≈ 41
        * x=64: y ≈ 41

3. **GSM8K CoT (Strict) (Orange, Dashed Line):**
    * **Trend:** Follows a similar pattern to the "Flexible" variant but reaches lower values once both curves leave zero. Starts near zero, rises sharply after x=4, and plateaus.
    * **Data Points (Approximate):**
        * x=1: y ≈ 0
        * x=4: y ≈ 1
        * x=8: y ≈ 12
        * x=16: y ≈ 31
        * x=32: y ≈ 35
        * x=64: y ≈ 35

4. **Humaneval (Red, Solid Line):**
    * **Trend:** The lowest-performing series at the plateau. Stays near zero through x=4, gains most of its performance between x=4 and x=16, and levels off after x=16.
    * **Data Points (Approximate):**
        * x=1: y ≈ 0
        * x=4: y ≈ 1
        * x=8: y ≈ 11
        * x=16: y ≈ 20
        * x=32: y ≈ 23
        * x=64: y ≈ 23

### Key Observations

1. **Universal Improvement with Recurrence:** All four series score higher at 64 recurrence steps than at 1, and none declines at any intermediate step.

2. **Performance Hierarchy:** HellaSwag is highest at every recurrence level. The other three series are indistinguishable near zero at x=1 and x=4; from x=8 onward the ordering is HellaSwag > GSM8K CoT (Flexible) > GSM8K CoT (Strict) > Humaneval.

3. **Diminishing Returns:** All curves saturate. The GSM8K and Humaneval series gain most between 4 and 16 recurrence steps, while HellaSwag gains most between 1 and 8. After x=16 the rate of improvement drops sharply for every series, and the approximate values do not change between x=32 and x=64.

4. **Task Sensitivity:** The magnitude of improvement varies by task. From x=1 to x=64, GSM8K CoT (Flexible) shows the largest absolute gain (~41 points), followed by HellaSwag (~36), GSM8K CoT (Strict) (~35), and Humaneval (~23). The GSM8K series show a sharp, threshold-like jump between 4 and 16 steps.
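The absolute gains and the steepest interval for each series can be recomputed from the approximate data points listed under Detailed Analysis. The following is a minimal Python sketch of that arithmetic. The names `SERIES`, `absolute_gain`, and `steepest_interval` are illustrative, and the numbers are visual estimates read off the chart, not exact results.

```python
# Approximate values read off the chart (see Detailed Analysis); not exact results.
STEPS = [1, 4, 8, 16, 32, 64]
SERIES = {
    "HellaSwag": [30, 45, 60, 65, 66, 66],
    "GSM8K CoT (Flexible)": [0, 2, 16, 38, 41, 41],
    "GSM8K CoT (Strict)": [0, 1, 12, 31, 35, 35],
    "Humaneval": [0, 1, 11, 20, 23, 23],
}


def absolute_gain(values):
    """Difference between the last and the first data point."""
    return values[-1] - values[0]


def steepest_interval(values, steps=STEPS):
    """Return (start_step, end_step, gain) for the largest single-interval gain.

    Ties resolve to the earliest interval, because max() keeps the first maximum.
    """
    intervals = [
        (steps[i], steps[i + 1], values[i + 1] - values[i])
        for i in range(len(values) - 1)
    ]
    return max(intervals, key=lambda interval: interval[2])


for name, values in SERIES.items():
    start, end, gain = steepest_interval(values)
    print(f"{name}: total gain {absolute_gain(values)}, "
          f"steepest interval x={start} to x={end} (+{gain})")
```

Since the inputs are estimates, differences of a point or two between series should not be over-interpreted.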

### Interpretation

This chart illustrates the impact of increasing computational steps (recurrence) at inference time on model performance across diverse reasoning tasks. The data suggests:

* **Recurrence is Beneficial:** For these specific benchmarks, allowing the model to "think longer" (via more recurrence steps) consistently leads to better answers.

* **Task-Dependent Scaling:** The benefit of additional computation is not uniform. HellaSwag, a commonsense reasoning benchmark, starts from a much higher baseline (≈30 at x=1) and gains most in the first few steps. The GSM8K (Grade School Math) tasks show a threshold effect: performance is negligible through 4 recurrence steps and then climbs rapidly between 4 and 16. Humaneval (code generation) follows the same delayed-onset shape but has the smallest overall gain.

* **Saturation Point:** There appears to be an optimal compute budget (around 16-32 recurrence steps) for these tasks, beyond which additional steps yield minimal performance improvement. This indicates a limit to the effectiveness of pure recurrence for these specific problem types and model setup.

* **Strict vs. Flexible Evaluation:** For GSM8K, scores under the "Flexible" metric are at or above those under "Strict" at every step, with a gap of roughly 4 to 7 points from x=8 onward. This gap plausibly reflects answers that are correct but do not match the exact output format the strict metric requires.
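The saturation point and the Strict/Flexible gap discussed above can be checked against the approximate data points with a few lines of Python. This is a sketch under stated assumptions: `saturation_step`, its `tolerance` parameter (here 1 point), and `flexible_minus_strict` are hypothetical helpers introduced for illustration, and the inputs are visual estimates from the chart.

```python
# Approximate values read off the chart (see Detailed Analysis); not exact results.
STEPS = [1, 4, 8, 16, 32, 64]
SERIES = {
    "HellaSwag": [30, 45, 60, 65, 66, 66],
    "GSM8K CoT (Flexible)": [0, 2, 16, 38, 41, 41],
    "GSM8K CoT (Strict)": [0, 1, 12, 31, 35, 35],
    "Humaneval": [0, 1, 11, 20, 23, 23],
}


def saturation_step(values, steps=STEPS, tolerance=1.0):
    """Smallest step whose value is within `tolerance` points of the final value."""
    final = values[-1]
    for step, value in zip(steps, values):
        if final - value <= tolerance:
            return step
    return steps[-1]


def flexible_minus_strict(series=SERIES):
    """Per-step gap between the two GSM8K scoring variants."""
    flexible = series["GSM8K CoT (Flexible)"]
    strict = series["GSM8K CoT (Strict)"]
    return [f - s for f, s in zip(flexible, strict)]


for name, values in SERIES.items():
    print(f"{name}: saturates at x={saturation_step(values)}")

print(dict(zip(STEPS, flexible_minus_strict())))
```

The choice of a 1-point tolerance is arbitrary; a looser tolerance moves the saturation step earlier, so the result is best read as a range rather than a single value.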

In summary, the chart provides empirical evidence for the value of test-time computation but highlights that its effectiveness is bounded and highly dependent on the nature of the task.