


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


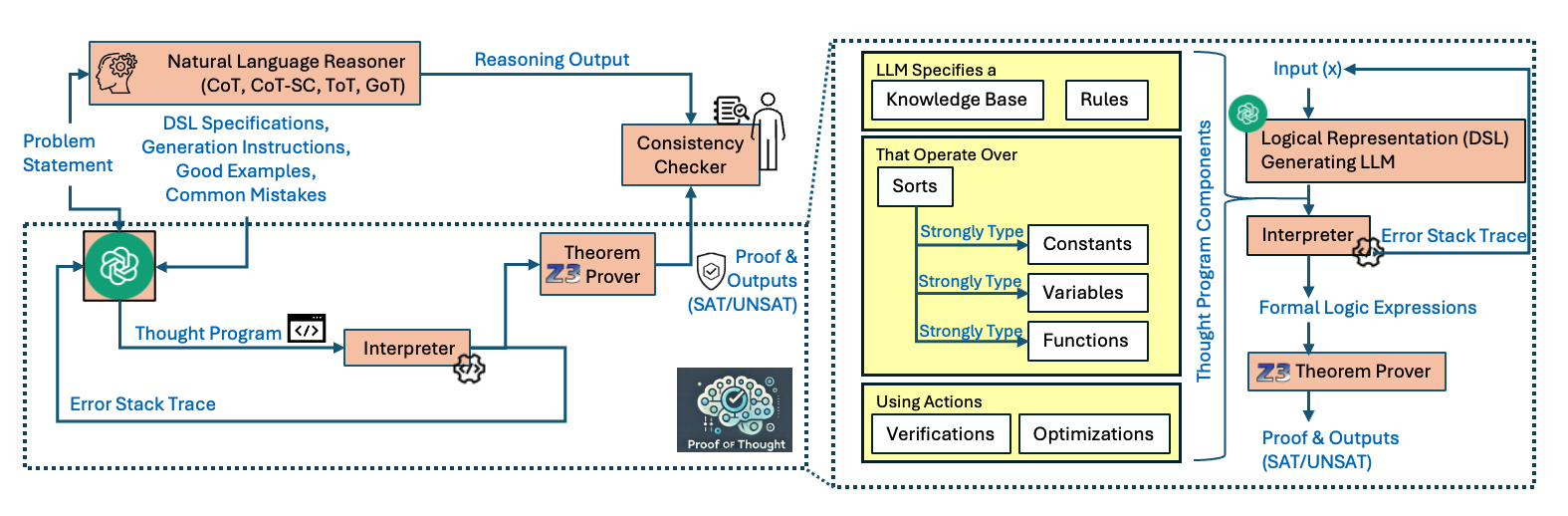

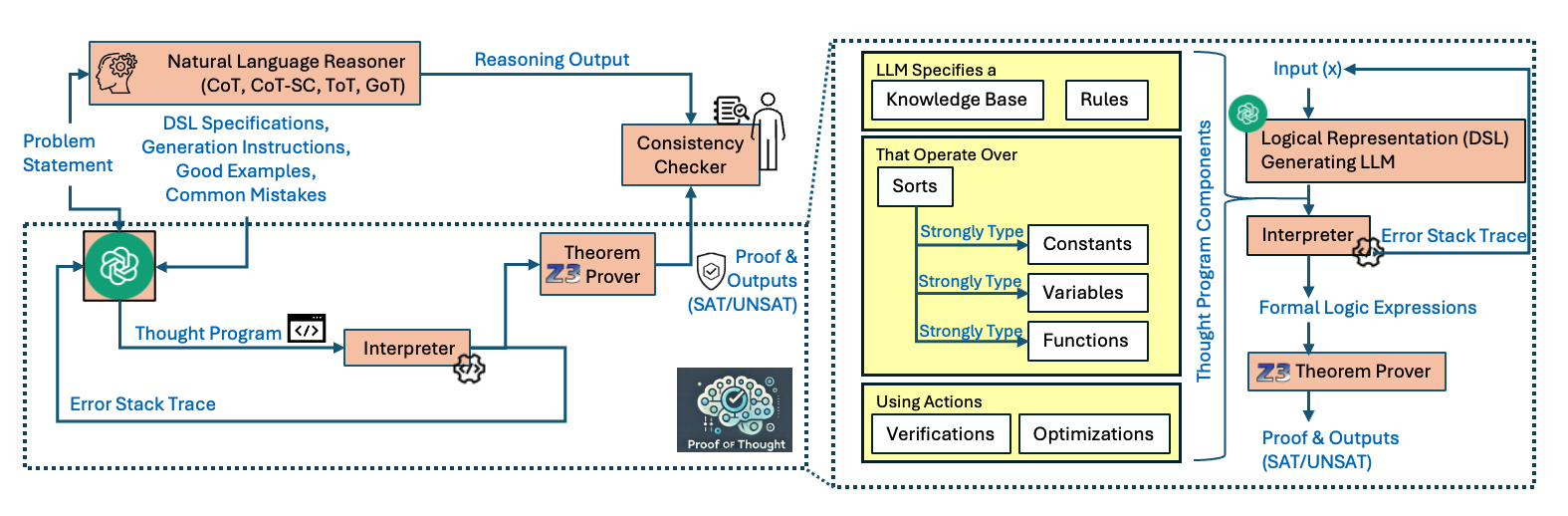

## Diagram: Proof of Thought System Architecture

### Overview

This image is a technical system architecture diagram illustrating a framework called "Proof of Thought." It depicts a multi-stage process for enhancing the reasoning capabilities of Large Language Models (LLMs) by generating and verifying formal "Thought Programs." The system integrates natural language reasoning, formal logic, and theorem proving to produce verifiable outputs. The diagram is divided into two primary sections: a left panel showing the overall system flow and a right panel detailing the internal components of a "Thought Program."

### Components/Axes

The diagram is a flowchart with labeled boxes, arrows indicating data/control flow, and descriptive text annotations. There are no traditional chart axes. Key components are color-coded:

* **Orange Boxes:** Represent active processing modules (e.g., Natural Language Reasoner, Theorem Prover, Interpreter).

* **Green Icon:** Represents an LLM (Large Language Model) at the core of the generation process.

* **Yellow Box:** Encapsulates the detailed structure of the "Thought Program Components."

* **Blue Text/Arrows:** Primarily indicate data flow, inputs, outputs, and labels.

* **Black Dashed Lines:** Group related subsystems.

**Spatial Layout:**

* **Left Panel (Overall Flow):** Occupies the left ~60% of the image. It shows the high-level pipeline from a "Problem Statement" to final "Proof & Outputs."

* **Right Panel (Thought Program Components):** Occupies the right ~40% of the image, enclosed in a black dashed box. It provides a detailed breakdown of the "Thought Program" generated by the LLM.

* **Logo:** A "Proof of Thought" logo with a brain and checkmark is positioned in the bottom-center of the left panel.

### Detailed Analysis

**Left Panel - Overall System Flow:**

1. **Input:** A "Problem Statement" is fed into two parallel paths.

2. **Path 1 (Natural Language Reasoning):**

    * The problem goes to a **"Natural Language Reasoner"** box. Text inside lists reasoning methods: "(CoT, CoT-SC, ToT, GoT)".

    * This reasoner also receives "DSL Specifications, Generation Instructions, Good Examples, Common Mistakes."

    * Its output is labeled **"Reasoning Output"** and flows to a **"Consistency Checker"**.

3. **Path 2 (Formal Thought Program Generation & Verification):**

    * The problem also goes to a central **green LLM icon**.

    * The LLM generates a **"Thought Program"** (indicated by a `</>` code icon).

    * This program is sent to an **"Interpreter"**.

    * The Interpreter produces an **"Error Stack Trace"** which loops back to the LLM, creating a feedback loop for refinement.

    * The Interpreter's output also goes to a **"Theorem Prover"** labeled with the **"Z3"** logo.

    * The Theorem Prover produces **"Proof & Outputs (SAT/UNSAT)"**.

    * This proof output is also sent to the **"Consistency Checker"**, which compares it with the "Reasoning Output" from Path 1.

4. **Final Output:** The system's verified result is the **"Proof & Outputs (SAT/UNSAT)"**.
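The Interpreter-to-LLM loop in Path 2 can be sketched as a bounded retry loop. This is a minimal illustration only. The diagram does not say how a Thought Program is serialized, so the JSON encoding, the required `rules` key, and the names `interpret`, `generate_with_feedback`, and `stub_llm` are all assumptions made for the example, and the stub stands in for a real LLM call.

```python
import json
import traceback
from typing import Callable, Optional, Tuple


def interpret(program_text: str) -> dict:
    """Hypothetical interpreter: parse the program and check one required section."""
    program = json.loads(program_text)  # raises json.JSONDecodeError on malformed text
    if "rules" not in program:
        raise KeyError("thought program has no 'rules' section")
    return program


def generate_with_feedback(
    generate: Callable[[str, Optional[str]], str],
    problem: str,
    max_attempts: int = 3,
) -> Tuple[dict, int]:
    """Ask the generator for a program, feeding back the last stack trace on failure."""
    error_trace: Optional[str] = None
    for attempt in range(1, max_attempts + 1):
        program_text = generate(problem, error_trace)
        try:
            return interpret(program_text), attempt
        except Exception:
            # The "Error Stack Trace" arrow: keep the trace for the next attempt.
            error_trace = traceback.format_exc()
    raise RuntimeError(f"no valid program after {max_attempts} attempts:\n{error_trace}")


def stub_llm(problem: str, error_trace: Optional[str]) -> str:
    """Stand-in for the LLM: emits broken JSON first, valid JSON once it sees a trace."""
    if error_trace is None:
        return '{"rules": ['
    return '{"rules": ["p -> q"]}'


program, attempts = generate_with_feedback(stub_llm, "toy problem")
print(attempts, program["rules"])
```

With the stub above, the first attempt fails to parse and the second succeeds. Capping the number of attempts keeps the loop from running forever if the generator never produces a valid program.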

**Right Panel - Thought Program Components (Detailed View):**

This panel is a callout from the "Thought Program" in the left panel. It details what the LLM specifies.

1. **Top Section:** **"LLM Specifies a"** containing two boxes: **"Knowledge Base"** and **"Rules"**.

2. **Middle Section:** **"That Operate Over"** containing a box **"Sorts"**. From "Sorts," three arrows labeled **"Strongly Type"** point to boxes for **"Constants"**, **"Variables"**, and **"Functions"**.

3. **Bottom Section:** **"Using Actions"** containing two boxes: **"Verifications"** and **"Optimizations"**.

4. **Right-Side Flow (within the yellow box):**

    * An **"Input (x)"** enters the system.

    * It goes to a **"Logical Representation (DSL) Generating LLM"**.

    * The output goes to an **"Interpreter"** (with a gear icon).

    * The Interpreter produces **"Formal Logic Expressions"**.

    * These expressions are fed into the **"Z3 Theorem Prover"**.

    * The prover yields the final **"Proof & Outputs (SAT/UNSAT)"**.

    * An **"Error Stack Trace"** also flows from the Interpreter back to the "Logical Representation... LLM".
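One way to picture the components listed above is as a small data structure in which every constant, variable, and function must refer to a declared sort. The sketch below is an assumption-laden illustration: the class name `ThoughtProgram`, its field layout, and the `type_errors` helper are hypothetical and are not taken from the diagram, which only names the component boxes.

```python
from dataclasses import dataclass, field


@dataclass
class ThoughtProgram:
    """Hypothetical container mirroring the boxes in the right panel."""
    sorts: set = field(default_factory=set)
    constants: dict = field(default_factory=dict)   # name -> sort
    variables: dict = field(default_factory=dict)   # name -> sort
    functions: dict = field(default_factory=dict)   # name -> (argument sorts, result sort)
    knowledge_base: list = field(default_factory=list)
    rules: list = field(default_factory=list)
    verifications: list = field(default_factory=list)

    def type_errors(self) -> list:
        """List every symbol whose sort was never declared."""
        errors = []
        for kind, table in (("constant", self.constants), ("variable", self.variables)):
            for name, sort in table.items():
                if sort not in self.sorts:
                    errors.append(f"{kind} {name!r} uses undeclared sort {sort!r}")
        for name, (arg_sorts, result_sort) in self.functions.items():
            for sort in (*arg_sorts, result_sort):
                if sort not in self.sorts:
                    errors.append(f"function {name!r} uses undeclared sort {sort!r}")
        return errors


example = ThoughtProgram(
    sorts={"Person", "Bool"},
    constants={"socrates": "Person"},
    variables={"x": "Person"},
    functions={
        "is_mortal": (("Person",), "Bool"),
        "age": (("Person",), "Int"),  # "Int" is deliberately left undeclared
    },
)
print(example.type_errors())
```

Here the check flags only `age`, because its result sort was never declared. A check like this is the kind of error an interpreter could report back to the generating LLM before anything reaches the prover.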

### Key Observations

1. **Dual Verification Pathway:** The architecture runs two checks: formal verification via Z3 (producing SAT/UNSAT) and a consistency check between the formal result and the natural language reasoning output.

2. **Iterative Refinement Loop:** A clear feedback loop exists where the "Interpreter" sends an "Error Stack Trace" back to the generating LLM, enabling iterative correction of the Thought Program.

3. **Strong Typing Emphasis:** The Thought Program is built on a formally specified foundation with "Sorts" that are "Strongly Type[d]" for Constants, Variables, and Functions, ensuring logical rigor.

4. **Integration of Methods:** The diagram explicitly lists established prompting techniques (Chain-of-Thought, Chain-of-Thought with Self-Consistency, Tree of Thoughts, Graph of Thoughts) as part of the Natural Language Reasoner, showing the system's intent to leverage and verify these methods.

5. **Modular Design:** The separation between the high-level flow (left) and the detailed Thought Program specification (right) indicates a modular, layered system design.

### Interpretation

This diagram presents a framework for making LLM reasoning more reliable and verifiable. The core innovation is the "Thought Program": a formal, interpretable representation of the LLM's reasoning process that can be executed, checked for errors, and submitted to a theorem prover.

The system addresses a critical weakness of standard LLMs: their outputs are not inherently verifiable. By translating natural language reasoning into a Domain-Specific Language (DSL) and formal logic, and then using a theorem prover (Z3), the framework can provide a formal verdict (SAT/UNSAT) on whether the conclusion holds relative to the specified knowledge and rules. That verdict covers the encoded program only; it does not by itself establish that the encoding faithfully captures the original problem.
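To make the SAT/UNSAT verdict concrete, the sketch below uses one common encoding: assert the knowledge base, the rules, and the negation of the claim, and read UNSAT as "the claim follows." The diagram does not state which encoding the system uses, so this is an assumption. The brute-force truth-table search is a stdlib stand-in for Z3 that only works for a handful of Boolean variables, and every name in it is hypothetical.

```python
from itertools import product


def satisfiable(constraints, names):
    """Return a satisfying assignment, or None if the constraints are UNSAT."""
    for values in product([False, True], repeat=len(names)):
        env = dict(zip(names, values))
        if all(constraint(env) for constraint in constraints):
            return env
    return None


names = ["human", "mortal"]
knowledge_base = [lambda e: e["human"]]               # fact: Socrates is human
rules = [lambda e: (not e["human"]) or e["mortal"]]   # rule: human implies mortal
negated_claim = [lambda e: not e["mortal"]]           # negation of "Socrates is mortal"

model = satisfiable(knowledge_base + rules + negated_claim, names)
verdict = "UNSAT" if model is None else "SAT"
print(verdict)
```

No assignment satisfies the facts, the rule, and the negated claim at once, so the verdict is UNSAT and the claim is entailed by what was encoded. Dropping the rule makes the same query satisfiable, which shows that the verdict depends entirely on the knowledge and rules the program states.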

The "Consistency Checker" adds a second layer of validation, ensuring that the informal natural language reasoning aligns with the formal proof. This dual approach mitigates the risk of the LLM generating a formally correct but semantically irrelevant proof, or a plausible-sounding but logically flawed explanation.
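A consistency check of this kind could be as simple as comparing two normalized answers. The sketch below is hypothetical: the diagram does not describe how the checker works, so the function name `check_consistency`, the restriction to true/false answers, and the `sat_means_true` flag (which records how the formal query was encoded) are all assumptions.

```python
def check_consistency(reasoning_answer: str, solver_verdict: str,
                      sat_means_true: bool = True) -> str:
    """Compare an informal true/false answer with a SAT/UNSAT verdict."""
    answer = reasoning_answer.strip().lower()
    if answer not in ("true", "false") or solver_verdict not in ("SAT", "UNSAT"):
        return "inconclusive"  # e.g. free-form text or a solver timeout
    formal = (solver_verdict == "SAT") == sat_means_true
    informal = answer == "true"
    return "consistent" if formal == informal else "conflict"


print(check_consistency(" True ", "SAT"))
print(check_consistency("false", "SAT"))
print(check_consistency("true", "UNSAT", sat_means_true=False))
print(check_consistency("maybe", "SAT"))
```

A "conflict" result does not say which side is wrong. It only signals that the informal reasoning and the formal result disagree and need another look.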

The feedback loop via "Error Stack Trace" is crucial, as it allows the system to self-correct, mimicking a debugging process in software engineering. This transforms the LLM from a one-shot generator into a component within an iterative, verifiable reasoning engine. The overall architecture suggests a move towards "neuro-symbolic" AI, combining the flexible pattern recognition of neural networks (LLMs) with the rigorous, reliable inference of symbolic logic and formal methods.
