## Diagram: Machine Learning Pipeline with Cross-Batch Memory (XBM)

### Overview

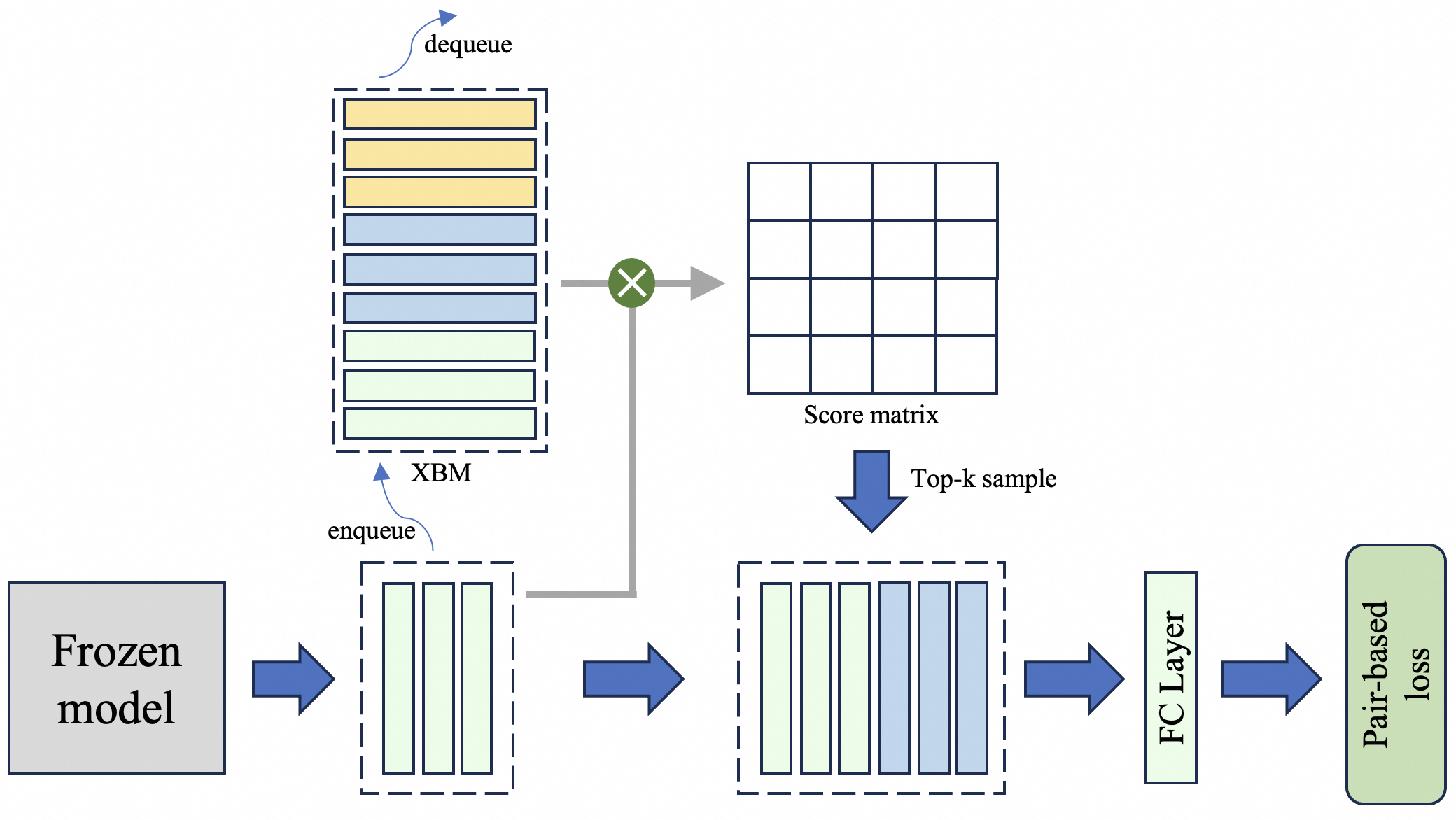

This image is a technical diagram illustrating a machine learning training pipeline. The system processes data through a frozen model, utilizes a memory bank (XBM) to store and retrieve feature representations, computes similarity scores, performs top-k sampling, and finally calculates a pair-based loss. The flow is primarily left-to-right with a vertical memory interaction.

### Components/Axes

The diagram consists of several distinct components connected by arrows indicating data flow. There are no traditional chart axes. The key labeled components are:

1. **Frozen model**: A gray box on the far left, representing a pre-trained model whose parameters are not updated during this training phase.

2. **XBM (Cross-Batch Memory)**: A vertically oriented, dashed-line box containing a stack of colored rectangles. It has two labeled operations:

   * `enqueue`: An arrow pointing into the bottom of the XBM.

   * `dequeue`: An arrow pointing out of the top of the XBM.

3. **Score matrix**: A 4x4 grid of empty squares, representing a matrix of similarity scores.

4. **Top-k sample**: A label next to a large blue arrow pointing downward.

5. **FC Layer**: A vertical rectangle labeled "FC Layer" (Fully Connected Layer).

6. **Pair-based loss**: A green, rounded rectangle on the far right, representing the final loss function.
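The two labeled operations on the XBM behave like a fixed-capacity FIFO queue: `enqueue` appends the newest features and `dequeue` drops the oldest once the memory is full. Below is a minimal Python sketch under stated assumptions. Features are NumPy vectors, a class label is stored with each feature (as in supervised metric learning), and the class name `CrossBatchMemory` and the nine-slot capacity (one slot per bar drawn in the XBM) are illustrative choices, not details from the diagram.

```python
from collections import deque

import numpy as np


class CrossBatchMemory:
    """Hypothetical fixed-capacity FIFO memory of (feature, label) entries."""

    def __init__(self, capacity: int):
        # A deque with maxlen drops its oldest item on overflow: that is the dequeue.
        self.feats = deque(maxlen=capacity)
        self.labels = deque(maxlen=capacity)

    def __len__(self) -> int:
        return len(self.feats)

    def enqueue(self, feats: np.ndarray, labels: np.ndarray) -> None:
        """Append one batch; the oldest entries fall out once capacity is reached."""
        for f, y in zip(feats, labels):
            self.feats.append(np.asarray(f))
            self.labels.append(int(y))

    def get(self) -> tuple[np.ndarray, np.ndarray]:
        """Return stored features (oldest first) as an (N, D) array, plus labels."""
        if not self.feats:
            raise ValueError("memory is empty")
        return np.stack(list(self.feats)), np.array(list(self.labels))


# Nine slots, matching the nine bars drawn in the XBM; each batch holds three features.
memory = CrossBatchMemory(capacity=9)
for step in range(4):
    batch_feats = np.full((3, 2), float(step))  # toy 2-D features tagged by step
    batch_labels = np.array([step, step, step])
    memory.enqueue(batch_feats, batch_labels)

feats, labels = memory.get()
print(labels)  # [1 1 1 2 2 2 3 3 3]: the batch from step 0 has been dequeued
```

`deque(maxlen=...)` makes each append O(1) and performs the dequeue implicitly; a preallocated array with a moving write pointer (a ring buffer) is a common alternative when the memory has to live on a GPU.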

### Detailed Analysis

**Data Flow and Component Relationships:**

1. **Input to Frozen Model**: The process begins with data (not shown) entering the "Frozen model" (leftmost gray box).

2. **Feature Extraction & Memory Enqueue**: The frozen model outputs a batch of feature vectors, represented by three light green vertical bars inside a dashed box. A blue arrow points from this output to the right. A branch from this path, indicated by a gray line and an upward blue arrow labeled `enqueue`, sends these new features into the bottom of the **XBM** memory bank.

3. **XBM Memory Bank**: The XBM is depicted as a stack of horizontal bars in three colors:

   * Top three bars: Yellow

   * Middle three bars: Light blue

   * Bottom three bars: Light green

   This color-coding most likely marks features from different batches. Because new features enter at the bottom (`enqueue`) and the `dequeue` arrow leaves from the top, the oldest features are removed from the top of the memory, so the yellow bars are presumably the oldest stored batch.

4. **Score Matrix Computation**: The current batch's features (the three light green bars) are combined with features retrieved from the XBM (implied by the gray line connecting the XBM to the multiplication symbol). The green circle with a white "X" (a multiplication operator) denotes a matrix product, i.e. the dot product of every current feature with every stored feature. The result is the **Score matrix**, a grid with one similarity score per (current feature, stored feature) pair. The 4x4 size is likely schematic, since the batch itself is drawn with three features.

5. **Top-k Sampling**: A large blue arrow labeled `Top-k sample` points downward from the Score matrix to a new set of feature bars. This operation keeps the k highest-ranked pairs in the score matrix (the most similar or the most dissimilar, depending on what the loss requires). The resulting set, shown in a dashed box, contains a mix of light green and light blue bars, indicating that it is a sampled subset combining current and memory features.

6. **Loss Calculation**: The sampled features are passed through an **FC Layer** (a linear projection). The output then flows to the final component, the **Pair-based loss** function (green rounded rectangle), which computes the training objective (e.g., contrastive loss, triplet loss) from the selected pairs.
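Steps 4 and 5 can be sketched in NumPy as follows. The sketch assumes that scores are cosine similarities (dot products of L2-normalized features) and that `Top-k sample` mines hard negatives, i.e. for each current feature, the k most similar stored features with a different label. Both choices, and the helper names `score_matrix` and `topk_hard_negatives`, are assumptions rather than details shown in the diagram.

```python
import numpy as np


def l2_normalize(x: np.ndarray) -> np.ndarray:
    """Scale each row to unit length (guarding against all-zero rows)."""
    return x / np.maximum(np.linalg.norm(x, axis=1, keepdims=True), 1e-12)


def score_matrix(batch_feats: np.ndarray, memory_feats: np.ndarray) -> np.ndarray:
    """Entry (i, j) is the cosine similarity of batch item i and memory item j."""
    return l2_normalize(batch_feats) @ l2_normalize(memory_feats).T


def topk_hard_negatives(scores: np.ndarray, batch_labels: np.ndarray,
                        memory_labels: np.ndarray, k: int) -> np.ndarray:
    """Per row, column indices of the k highest scores among memory entries
    whose label differs from the row's label. Assumes each row has >= k negatives."""
    same_label = batch_labels[:, None] == memory_labels[None, :]
    masked = np.where(same_label, -np.inf, scores)      # positives sort last
    order = np.argsort(-masked, axis=1, kind="stable")  # descending by score
    return order[:, :k]


# A 4x4 score matrix, like the grid in the diagram, from random features.
rng = np.random.default_rng(0)
batch = rng.standard_normal((4, 16))
memory = rng.standard_normal((4, 16))
S = score_matrix(batch, memory)

# Hand-made scores so the selection is easy to check.
scores = np.array([[0.9, 0.8, 0.1, 0.5],
                   [0.2, 0.7, 0.6, 0.3]])
batch_labels = np.array([0, 1])
memory_labels = np.array([0, 1, 2, 1])
picked = topk_hard_negatives(scores, batch_labels, memory_labels, k=2)
print(picked)
# [[1 3]
#  [2 0]]
```

For a large memory, `np.argpartition` can replace the full sort, at the cost of returning the k selected indices in no particular order.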

### Key Observations

* **Color Consistency**: The light green color is used consistently for the output of the frozen model and the corresponding bars in the XBM and the top-k sampled set, indicating these are the "current" or "anchor" features. Light blue appears in the XBM and the sampled set, representing "memory" or "positive/negative" features. Yellow bars are only in the XBM and are not selected in the top-k sample shown.

* **Spatial Layout**: The XBM is positioned above the main data flow, emphasizing its role as an external memory bank that interacts with the primary pipeline. The flow is linear until the score computation, which involves a vertical interaction with memory.

* **Data Transformation**: The diagram shows a progression from frozen-model features (green bars) to a similarity space (score matrix), then to a selected subset (sampled bars), and finally to a loss value.
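The last part of that transformation, from the sampled features through the FC layer to a loss value, might look like the sketch below. The diagram does not name the pair-based loss; this sketch assumes a margin-based contrastive loss on cosine similarities (positive pairs cost 1 - s, negative pairs cost the amount by which s exceeds a margin of 0.5). The names `fc_embed` and `contrastive_loss`, the margin, and the toy data are hypothetical.

```python
import numpy as np


def fc_embed(x: np.ndarray, weight: np.ndarray, bias: np.ndarray) -> np.ndarray:
    """FC layer followed by L2 normalization, so dot products are cosine similarities."""
    z = x @ weight + bias
    return z / np.maximum(np.linalg.norm(z, axis=1, keepdims=True), 1e-12)


def contrastive_loss(anchors: np.ndarray, others: np.ndarray,
                     same_label: np.ndarray, margin: float = 0.5) -> float:
    """Mean over pairs of (1 - s) for positives and max(0, s - margin) for negatives."""
    s = np.sum(anchors * others, axis=1)               # cosine similarity per pair
    pos_term = np.where(same_label, 1.0 - s, 0.0)      # pull positives together
    neg_term = np.where(same_label, 0.0, np.maximum(s - margin, 0.0))  # push close negatives apart
    return float(np.mean(pos_term + neg_term))


# Embed five sampled frozen-model features with a randomly initialized FC layer.
rng = np.random.default_rng(0)
sampled = rng.standard_normal((5, 16))
emb = fc_embed(sampled, 0.1 * rng.standard_normal((16, 8)), np.zeros(8))  # (5, 8)

# Hand-made unit vectors as FC outputs for three pairs, to check the loss by hand.
anchors = np.array([[1.0, 0.0], [1.0, 0.0], [1.0, 0.0]])
others = np.array([[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]])
same_label = np.array([True, False, False])
loss = contrastive_loss(anchors, others, same_label)
print(round(loss, 4))  # 0.1667: only the identical negative pair costs 1 - 0.5, averaged over 3 pairs
```

Negatives whose similarity is already below the margin contribute nothing, which is one reason a top-k step that keeps only the most similar negatives wastes little computation.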

### Interpretation

This diagram depicts a training strategy, likely for **metric learning** or contrastive **self-supervised learning** (MoCo, for example, also keeps a FIFO queue of past features). The central component is the **Cross-Batch Memory (XBM)**, a technique originally proposed for deep metric learning.

* **Purpose**: The pipeline addresses a limitation of pair-based (e.g., contrastive) learning: with small batches, each step sees only a few pairs, and hard pairs are correspondingly rare. By keeping features from previous batches in a memory bank (XBM), the model can compare the current batch against a much larger and more diverse set of examples, which supplies more, and harder, pairs per step.

* **Mechanism**: Because the model is frozen, the features it produces for a given input never change, so entries enqueued several steps earlier remain consistent with the current batch and the memory does not go stale. The `enqueue`/`dequeue` pair implements a FIFO queue that keeps the memory bank filled with the most recent features. The **Score matrix** holds similarities between the current batch and the memory bank. **Top-k sampling** then selects the most informative pairs (e.g., hardest positives or negatives), so the loss is computed on the pairs that carry the most training signal rather than on every pair.

* **Flow Logic**: At each training step, the current batch is compared against a fixed-size, continually refreshed set of stored features, not just against itself. The pair-based loss then updates the trainable part of the network (most plausibly the FC layer, since the model before it is frozen) to improve the feature space, pulling positive pairs closer and pushing negative pairs apart.

* **Notable Design**: The separation of the "Frozen model" suggests this could be part of a larger architecture where only a projection head or a specific module is being trained, while the backbone remains fixed to preserve learned representations. The use of a memory bank is a key technique for scaling contrastive learning beyond the GPU batch size.
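To put the batch-size argument from **Purpose** in numbers: with batch size $B$ and a memory holding $M$ features, one step scores $B(B-1)$ ordered within-batch pairs without memory, versus $B \cdot M$ batch-to-memory pairs with it. Taking $B = 64$ and $M = 8192$ purely as illustrative values (neither appears in the diagram):

$$
B(B-1) = 64 \cdot 63 = 4032
\qquad \text{vs.} \qquad
B \cdot M = 64 \cdot 8192 = 524{,}288,
$$

roughly 130 times as many pairs per step, at the cost of storing $M$ feature vectors.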
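The **Flow Logic** and **Notable Design** points imply that gradients reach only the layer after the frozen model. The NumPy sketch below makes this concrete with one hand-derived SGD step on a bias-free FC weight matrix `W`; the frozen features are plain inputs and are never modified. To keep the gradient short enough to write by hand, it swaps in the classic distance-based contrastive loss (squared distance for positives, squared hinge $\max(0, m - d)^2$ for negatives) instead of a cosine-based loss. The function name `pair_loss_and_grad`, the margin, the learning rate, and the toy data are all assumptions.

```python
import numpy as np


def pair_loss_and_grad(feats, W, pairs, is_pos, margin=1.0):
    """Distance-based contrastive loss on FC outputs z = feats @ W and its
    gradient with respect to W only. `feats` come from the frozen model and
    are treated as constants. Loss and gradient are averaged over pairs."""
    loss = 0.0
    grad = np.zeros_like(W)
    for (i, j), pos in zip(pairs, is_pos):
        delta = feats[i] - feats[j]      # difference of frozen features
        e = delta @ W                    # difference of the two embeddings
        d = np.linalg.norm(e)            # embedding distance
        if pos:
            loss += d ** 2               # positives: pull together
            grad += 2.0 * np.outer(delta, e)
        elif d < margin:
            loss += (margin - d) ** 2    # close negatives: push apart
            if d > 0.0:                  # gradient undefined when embeddings coincide
                grad += -2.0 * (margin - d) / d * np.outer(delta, e)
        # negatives already beyond the margin contribute nothing
    return loss / len(pairs), grad / len(pairs)


frozen_feats = np.array([[1.0, 0.0], [0.0, 1.0], [1.0, 0.5]])
frozen_before = frozen_feats.copy()
W = np.eye(2)                            # trainable FC weights (toy initialization)
pairs = [(0, 1), (0, 2)]
is_pos = [True, False]

loss_before, grad = pair_loss_and_grad(frozen_feats, W, pairs, is_pos)
W = W - 0.1 * grad                       # SGD step: only W changes
loss_after, _ = pair_loss_and_grad(frozen_feats, W, pairs, is_pos)
print(loss_before, loss_after)
```

In an autodiff framework the same effect comes from excluding the backbone's parameters from the optimizer (or disabling their gradients), so the frozen features act as constants exactly as they do here.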