## Diagram: Layer 1 Architecture

### Overview

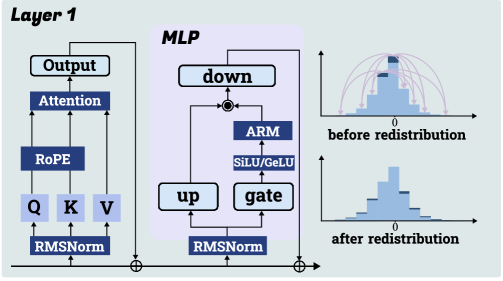

The diagram shows "Layer 1" of a neural network, most likely a transformer-style block given the "Q"/"K"/"V", "RoPE", and "RMSNorm" labels. It traces the flow of data through two branches, an attention branch and a Multi-Layer Perceptron (MLP) branch, and includes two histograms labeled "before redistribution" and "after redistribution".

### Components/Axes

* **Header:** "Layer 1" is located at the top-left.

* **Left Branch:**
  * "Output" (top)
  * "Attention" (below Output)
  * "RoPE" (below Attention)
  * "Q", "K", "V" (arranged horizontally below RoPE)
  * "RMSNorm" (bottom)

* **Right Branch (MLP):**
  * "MLP" label at the top.
  * "down" (top)
  * "ARM" (below down)
  * "SiLU/GeLU" (below ARM)
  * "up" (below and to the left of SiLU/GeLU)
  * "gate" (below and to the right of SiLU/GeLU)
  * "RMSNorm" (bottom)

* **Histograms:**
  * Top histogram labeled "before redistribution", with "0" marked on its horizontal axis.
  * Bottom histogram labeled "after redistribution", with "0" marked on its horizontal axis.

* **Connections:** Arrows indicate the flow of data between components. A summation symbol (⊕) connects the outputs of the left and right branches.

### Detailed Analysis

* **Left Branch:**
  * Data flows from "RMSNorm" to "Q", "K", and "V".
  * "Q", "K", and "V" feed into "RoPE".
  * "RoPE" feeds into "Attention".
  * "Attention" feeds into "Output".
  * "Output" connects to the summation symbol.

* **Right Branch (MLP):**
  * Data flows from "RMSNorm" to "up" and "gate".
  * "up" and "gate" feed into "SiLU/GeLU".
  * "SiLU/GeLU" feeds into "ARM".
  * "ARM" feeds into "down".
  * "down" connects back to "up" and also to the summation symbol.

* **Histograms:**
  * The "before redistribution" histogram shows a distribution with a peak slightly to the left of 0 and a smaller peak to the right. Arrows indicate a redistribution process.
  * The "after redistribution" histogram shows a modified distribution, seemingly more concentrated around 0.
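
The MLP branch flow described above can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the diagram's actual implementation: it assumes the conventional gated form `down(act(gate(x)) * up(x))`, uses SiLU as the activation, omits the learned RMSNorm gain, and treats "ARM" as a hypothetical identity hook (`arm_placeholder`) because the diagram does not say what ARM does. All function and parameter names are illustrative.

```python
import math


def rms_norm(x, eps=1e-6):
    """Scale a vector to (approximately) unit root-mean-square.

    The learned per-channel gain used in practice is omitted here.
    """
    rms = math.sqrt(sum(v * v for v in x) / len(x) + eps)
    return [v / rms for v in x]


def matvec(w, x):
    """Multiply a matrix (given as a list of rows) by a vector."""
    return [sum(wi * xi for wi, xi in zip(row, x)) for row in w]


def silu(v):
    """SiLU activation: v * sigmoid(v)."""
    return v / (1.0 + math.exp(-v))


def arm_placeholder(h):
    # Hypothetical stand-in: the diagram does not define "ARM",
    # so this hook simply returns its input unchanged.
    return list(h)


def mlp_branch(x, w_up, w_gate, w_down, arm=arm_placeholder):
    """RMSNorm -> (up, gate) -> activation -> ARM -> down."""
    h = rms_norm(x)
    up = matvec(w_up, h)
    gate = matvec(w_gate, h)
    # Assumed gating: the activated gate path scales the up path.
    act = [silu(g) * u for g, u in zip(gate, up)]
    return matvec(w_down, arm(act))


# Toy weights: model width 2, hidden width 3.
w_up = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
w_gate = [[0.5, 0.0], [0.0, 0.5], [0.5, -0.5]]
w_down = [[1.0, 0.0, 1.0], [0.0, 1.0, -1.0]]
y = mlp_branch([3.0, 4.0], w_up, w_gate, w_down)
```

Passing a different callable as `arm` makes it easy to experiment with whatever transformation the "ARM" block turns out to perform, without touching the rest of the branch.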

### Key Observations

* The diagram illustrates a parallel processing architecture with two main branches: an attention mechanism and an MLP.

* The histograms suggest a redistribution of data values, potentially for normalization or regularization purposes.

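* The "RoPE" component in the attention branch most likely stands for Rotary Position Embedding, a positional-encoding scheme that rotates attention vectors by position-dependent angles.

* The "SiLU/GeLU" label in the MLP branch names two common activation functions, suggesting that either may be used at that position.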

* The "ARM" component is located between "down" and "SiLU/GeLU".
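
To make the positional-encoding observation concrete, here is a minimal sketch of a rotary position embedding, assuming "RoPE" has its usual meaning. The function name `rope`, the pairing of adjacent dimensions, and the default `base` of 10000 are assumptions drawn from the common formulation, not details visible in the diagram.

```python
import math


def rope(vec, position, base=10000.0):
    """Rotate each adjacent pair (vec[i], vec[i+1]) by a position-dependent angle.

    The pair starting at even index i uses the angle position * base ** (-i / d),
    where d is the vector length.
    """
    d = len(vec)
    if d % 2 != 0:
        raise ValueError("RoPE needs an even number of dimensions")
    out = []
    for i in range(0, d, 2):
        theta = position * base ** (-i / d)
        c, s = math.cos(theta), math.sin(theta)
        a, b = vec[i], vec[i + 1]
        # Standard 2-D rotation of the pair (a, b) by theta.
        out.extend([a * c - b * s, a * s + b * c])
    return out


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


q = [1.0, 2.0, 3.0, 4.0]
k = [0.5, -1.0, 2.0, 0.25]
# Both scores use a query two positions after the key.
score_a = dot(rope(q, 5), rope(k, 3))
score_b = dot(rope(q, 2), rope(k, 0))
```

Because each pair is only rotated, vector norms are preserved, and the two scores agree up to floating-point error: the dot product depends on the relative offset between positions rather than on their absolute values.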

### Interpretation

The diagram depicts a single network layer that combines an attention branch with a multi-layer perceptron branch and sums their outputs. The two histograms indicate that some set of values is redistributed, ending up more concentrated around 0, possibly to improve training stability or performance; the diagram does not say which values are affected. The parallel layout lets the input be processed along both pathways at once, so each branch can capture different aspects of it. The presence of "RoPE" indicates that positional information is incorporated into the attention mechanism. The function of the "ARM" component is unclear without further context, but its position in the data flow (after "SiLU/GeLU", before "down") suggests that it transforms the activation output before it reaches the "down" block.
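
The parallel combination can be sketched as follows. This assumes both branches read the same layer input and that the summation symbol adds their outputs elementwise; a residual (skip) term is left out because the description mentions only the two branch outputs. `parallel_layer` and the toy branch functions are hypothetical names used for illustration.

```python
def parallel_layer(x, attention_branch, mlp_branch):
    """Run both branches on the same input and add their outputs elementwise."""
    attn_out = attention_branch(x)
    mlp_out = mlp_branch(x)
    if len(attn_out) != len(mlp_out):
        raise ValueError("branch outputs must have the same length")
    return [a + m for a, m in zip(attn_out, mlp_out)]


# Toy stand-ins for the two branches, just to show the wiring.
def toy_attention(x):
    return [2.0 * v for v in x]


def toy_mlp(x):
    return [v + 1.0 for v in x]


combined = parallel_layer([1.0, 2.0], toy_attention, toy_mlp)
```

Neither branch consumes the other's output here, which is what distinguishes this wiring from a sequential block in which the MLP runs on the result of the attention step.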