## Diagram: STAgent Training Pipeline Flowchart

### Overview

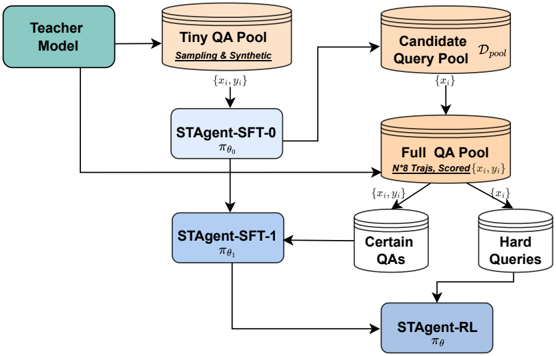

This image is a flowchart of a multi-stage training pipeline for an AI agent named "STAgent". It traces how data and models flow through successive training phases, starting from a teacher model and ending in a reinforcement learning (RL) stage. Along the way, pools of question-answer (QA) data are created and refined to train successive versions of the agent.

### Components/Axes

The diagram consists of several distinct components connected by directional arrows indicating data or process flow. Components are color-coded:

* **Green Box (Top-Left):** "Teacher Model"

* **Orange Cylinders (Data Pools):**

    * "Tiny QA Pool" (Top-Center) with sub-text: "Sampling & Synthetic"

    * "Candidate Query Pool D_pool" (Top-Right)

    * "Full QA Pool" (Center-Right) with sub-text: "N^8 Trajs, Scored"

* **Blue Boxes (Models/Agents):**

    * "STAgent-SFT-0" (Center-Left) with notation: π_θ₀

    * "STAgent-SFT-1" (Center) with notation: π_θ₁

    * "STAgent-RL" (Bottom-Right) with notation: π_θ

* **White Cylinders (Filtered Data):**

    * "Certain QAs" (Center-Right, below Full QA Pool)

    * "Hard Queries" (Center-Right, below Full QA Pool)

### Detailed Analysis

The process flow is as follows:

1. **Initialization:** The "Teacher Model" (green box, top-left) generates data for the "Tiny QA Pool" (orange cylinder, top-center). This pool is built by "Sampling & Synthetic" methods and contains QA pairs denoted `{xᵢ, yᵢ}`.

2. **First Training Stage (SFT-0):** The `{xᵢ, yᵢ}` data from the Tiny QA Pool is used to train the first model, "STAgent-SFT-0" (π_θ₀, blue box, center-left).

3. **Query Generation & Pool Expansion:** Outputs of the trained STAgent-SFT-0 model flow to two places:

    * They help populate the "Candidate Query Pool D_pool" (orange cylinder, top-right) with queries `{xᵢ}`.

    * They are also fed into the "Full QA Pool" (orange cylinder, center-right).

4. **Full QA Pool Creation:** The "Full QA Pool" combines inputs from three sources:

    * Queries from the "Candidate Query Pool D_pool" (`{xᵢ}`).

    * Data from the "Teacher Model" (arrow from the green box).

    * Outputs from "STAgent-SFT-0".

    The pool is labeled "N^8 Trajs, Scored" and holds scored trajectories `{xᵢ, yᵢ}`.

5. **Data Filtering:** The "Full QA Pool" is filtered into two specialized subsets:

    * "Certain QAs" (white cylinder, center-right).

    * "Hard Queries" (white cylinder, center-right).

6. **Second Training Stage (SFT-1):** The "Certain QAs" data is used to train the next model iteration, "STAgent-SFT-1" (π_θ₁, blue box, center).

7. **Reinforcement Learning Stage (RL):** The final model, "STAgent-RL" (π_θ, blue box, bottom-right), is trained using:

    * The "Hard Queries" data.

    * The output from the "STAgent-SFT-1" model.
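Steps 4 to 6 (building a scored pool, then splitting it) can be sketched in a few lines. This is a minimal, hypothetical sketch, not the authors' implementation: the diagram does not say how trajectories are scored or how the split is made. The sketch assumes a binary scorer (1.0 correct, 0.0 wrong), a `policy` callable standing in for whichever model generates trajectories (STAgent-SFT-0 and/or the Teacher Model), and a pass-rate rule with made-up thresholds `certain_min` and `hard_max`. The names `ScoredQuery`, `build_full_pool`, and `split_pool`, and the default `n=8`, are all illustrative choices.

```python
import random
from dataclasses import dataclass
from typing import Callable, List, Tuple

# Hypothetical stand-ins: `policy` samples one trajectory for a query, and
# `scorer` returns 1.0 for a correct trajectory and 0.0 otherwise.
Policy = Callable[[str], str]
Scorer = Callable[[str, str], float]


@dataclass
class ScoredQuery:
    """One candidate query with its sampled, scored trajectories."""
    query: str
    trajectories: List[str]
    scores: List[float]

    @property
    def pass_rate(self) -> float:
        # Fraction of trajectories judged correct (assumes at least one trajectory).
        return sum(self.scores) / len(self.scores)


def build_full_pool(queries: List[str], policy: Policy, scorer: Scorer,
                    n: int = 8) -> List[ScoredQuery]:
    """Sample n trajectories per query and score each one (n is arbitrary here)."""
    pool = []
    for x in queries:
        trajs = [policy(x) for _ in range(n)]
        pool.append(ScoredQuery(x, trajs, [scorer(x, y) for y in trajs]))
    return pool


def split_pool(pool: List[ScoredQuery], certain_min: float = 1.0,
               hard_max: float = 0.5) -> Tuple[List[Tuple[str, str]], List[str]]:
    """Split the pool into SFT pairs ("Certain QAs") and RL prompts ("Hard Queries").

    The thresholds are hypothetical; queries whose pass rate falls strictly
    between hard_max and certain_min are dropped in this sketch.
    """
    certain_qas: List[Tuple[str, str]] = []
    hard_queries: List[str] = []
    for item in pool:
        if item.pass_rate >= certain_min:
            # Keep only the correct trajectories as (x_i, y_i) training pairs.
            certain_qas.extend(
                (item.query, y) for y, s in zip(item.trajectories, item.scores) if s > 0
            )
        elif item.pass_rate <= hard_max:
            # In this sketch only the query text is kept for the RL stage.
            hard_queries.append(item.query)
    return certain_qas, hard_queries


if __name__ == "__main__":
    rng = random.Random(0)
    success_prob = {"easy": 1.0, "medium": 0.6, "hard": 0.0}

    def toy_policy(x: str) -> str:
        # Toy policy: succeeds with a probability that depends on the query prefix.
        return "correct" if rng.random() < success_prob[x.split("-")[0]] else "wrong"

    def toy_scorer(x: str, y: str) -> float:
        return 1.0 if y == "correct" else 0.0

    pool = build_full_pool(["easy-1", "medium-1", "hard-1"], toy_policy, toy_scorer)
    certain, hard = split_pool(pool)
    print(len(certain), hard)
```

With this toy setup, "easy-1" always contributes eight pairs to `certain` and "hard-1" always lands in `hard`; where "medium-1" ends up depends on the random draws. Keeping only the query text for hard cases reflects the assumption that the RL stage samples fresh trajectories from its own policy.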

### Key Observations

* **Iterative Refinement:** The pipeline shows a clear progression from a base model (SFT-0) to a refined model (SFT-1) and finally to a reinforcement learning-optimized model (RL).

* **Data-Centric Approach:** The core of the process is the creation and strategic filtering of QA data pools ("Tiny", "Candidate", "Full", "Certain", "Hard") to train increasingly capable agents.

* **Teacher-Student Architecture:** The initial "Teacher Model" seeds the entire process, suggesting a knowledge distillation or supervision framework.

* **Notation:** Each model is written as a policy π whose subscript gives its parameters: θ₀ for STAgent-SFT-0, θ₁ for STAgent-SFT-1, and θ for STAgent-RL.
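The diagram names the stages but not their objectives. Assuming the standard formulations (an assumption, not something the figure states), each SFT stage minimizes the negative log-likelihood of the target answer under the policy, and the RL stage maximizes expected reward on the hard queries. Here $\mathcal{D}$ is the Tiny QA Pool for $\theta_0$ and the Certain QAs for $\theta_1$; $\mathcal{D}_{\text{hard}}$ and the reward $r(x, y)$ are hypothetical symbols, with $r$ plausibly the same scorer used to score the Full QA Pool.

$$
\mathcal{L}_{\text{SFT}}(\theta_k) = -\,\mathbb{E}_{(x_i, y_i) \sim \mathcal{D}}\left[\log \pi_{\theta_k}(y_i \mid x_i)\right], \qquad k \in \{0, 1\}
$$

$$
J(\theta) = \mathbb{E}_{x \sim \mathcal{D}_{\text{hard}},\; y \sim \pi_{\theta}(\cdot \mid x)}\left[r(x, y)\right], \qquad \theta \text{ initialized from } \theta_1
$$

Under this reading, the arrow from STAgent-SFT-1 to STAgent-RL corresponds to initializing $\theta$ from $\theta_1$, which the diagram alone does not confirm.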

### Interpretation

This flowchart depicts a data-centric methodology for training a specialized AI agent (STAgent), with an emphasis on **curriculum learning** and **data quality**. It begins with a small dataset, built by sampling and synthesis, for initial supervised fine-tuning (SFT-0). The central step is the construction of a large, scored "Full QA Pool", which is then split into two subsets with different roles.

Training the next model (SFT-1) on "Certain QAs" likely reinforces reliable knowledge, while the final RL stage uses "Hard Queries" to improve robustness and performance on challenging cases. Moving from supervised learning to reinforcement learning in this way is intended to produce an agent that is both knowledgeable and able to handle complex, uncertain scenarios. Because each stage is defined by the data pool it consumes and the model it produces, the diagram can serve as a blueprint for reproducing and scaling the pipeline.
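The staged ordering can be checked mechanically by encoding the arrows from the Detailed Analysis as a dependency graph and sorting it topologically. This is an illustrative sketch, not part of the original work: the graph simply mirrors this text's reading of the diagram (including the SFT-0 to Candidate Query Pool arrow), and `PIPELINE` and `stage_order` are hypothetical names. It uses the standard-library `graphlib` module (Python 3.9+).

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+
from typing import Dict, List, Set

# Each node maps to the set of nodes that feed into it, following the
# step-by-step walkthrough in "Detailed Analysis".
PIPELINE: Dict[str, Set[str]] = {
    "Tiny QA Pool": {"Teacher Model"},
    "STAgent-SFT-0": {"Tiny QA Pool"},
    "Candidate Query Pool": {"STAgent-SFT-0"},
    "Full QA Pool": {"Candidate Query Pool", "Teacher Model", "STAgent-SFT-0"},
    "Certain QAs": {"Full QA Pool"},
    "Hard Queries": {"Full QA Pool"},
    "STAgent-SFT-1": {"Certain QAs"},
    "STAgent-RL": {"Hard Queries", "STAgent-SFT-1"},
}


def stage_order(graph: Dict[str, Set[str]]) -> List[str]:
    """Return one valid execution order in which every input precedes its consumer."""
    # static_order() raises graphlib.CycleError if the arrows form a cycle.
    return list(TopologicalSorter(graph).static_order())


if __name__ == "__main__":
    print(" -> ".join(stage_order(PIPELINE)))
```

"Teacher Model" is the only node with no inputs and "STAgent-RL" the only node with no consumers, so they always come first and last; the relative order of "Certain QAs" and "Hard Queries" is not fixed by the graph. A `graphlib.CycleError` would indicate an inconsistent reading of the arrows.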