
## Diagram: Deconstruction of Depth 3 Question

### Overview

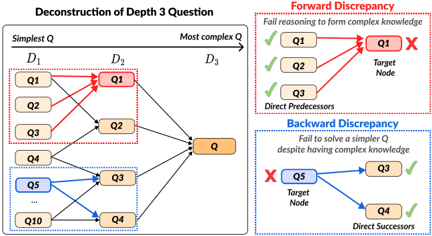

The diagram illustrates how a Depth 3 question is deconstructed into simpler sub-questions and highlights two kinds of reasoning discrepancy: "Forward Discrepancy" and "Backward Discrepancy". It uses a network-like structure in which nodes represent questions (Q1, Q2, Q3, Q4, Q5, Q, Q10) and arrows indicate the relationships between them. The diagram has three sections: a large central section showing the question decomposition, and two smaller sections on the right, one for each discrepancy.

### Components/Axes

The diagram features the following components:

* **Title:** "Deconstruction of Depth 3 Question"

* **Horizontal Axis:** Labeled "Simplest Q" to "Most complex Q", representing the increasing complexity of questions. This axis spans the central section of the diagram.

* **Depth Labels:** D1, D2, and D3, marking stages of decomposition along the horizontal axis.

* **Nodes:** Represented by rounded rectangles, labeled Q1, Q2, Q3, Q4, Q5, Q, and Q10.

* **Arrows:** Indicate relationships between nodes. Red arrows signify failure in reasoning, while black arrows represent successful reasoning. Blue dashed arrows are also present.

* **Labels:** "Forward Discrepancy", "Fail reasoning to form complex knowledge", "Direct Predecessors", "Target Node", "Backward Discrepancy", "Fail to solve a simpler Q despite having complex knowledge", "Direct Successors".

* **Checkmarks and X Marks:** Used to indicate success or failure in reasoning.

### Detailed Analysis

**Central Decomposition Section:**

* **D1:** Contains nodes Q1, Q2, Q3, and Q4.

* **D2:** Contains nodes Q1, Q2, Q3, and Q4.

* **D3:** Contains node Q.

* **Connections:**

    * Q1 in D1 connects to Q1 in D2 via a red arrow with an 'X' mark.

    * Q2 in D1 connects to Q2 in D2 via a black arrow with a checkmark.

    * Q3 in D1 connects to Q3 in D2 via a black arrow with a checkmark.

    * Q4 in D1 connects to Q4 in D2 via a black arrow with a checkmark.

    * Q1 in D2 connects to Q in D3 via a black arrow with a checkmark.

    * Q2 in D2 connects to Q in D3 via a black arrow with a checkmark.

    * Q3 in D2 connects to Q in D3 via a black arrow with a checkmark.

    * Q4 in D2 connects to Q in D3 via a black arrow with a checkmark.

    * Q10 connects to Q4 via a blue dashed arrow.

    * Q3 connects to Q4 via a blue dashed arrow.

    * An ellipsis (...) indicates further connections from Q3 to Q4.
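The solid-arrow connections listed above can be written down as a small edge list, which makes it easy to query direct predecessors or failed links. This is a minimal sketch, not something the figure itself provides: the `(depth, name)` node keys, the `EDGES` constant, and both helper functions are hypothetical names chosen for illustration. The blue dashed arrows are left out because the description does not say what they mean.

```python
from collections import defaultdict

# (source, target, ok) triples transcribed from the solid arrows listed above.
# ok=True is a black arrow with a checkmark; ok=False is a red arrow with an 'X'.
# Nodes are keyed by (depth, name) because the same label appears at two depths.
EDGES = [
    (("D1", "Q1"), ("D2", "Q1"), False),
    (("D1", "Q2"), ("D2", "Q2"), True),
    (("D1", "Q3"), ("D2", "Q3"), True),
    (("D1", "Q4"), ("D2", "Q4"), True),
    (("D2", "Q1"), ("D3", "Q"), True),
    (("D2", "Q2"), ("D3", "Q"), True),
    (("D2", "Q3"), ("D3", "Q"), True),
    (("D2", "Q4"), ("D3", "Q"), True),
]


def direct_predecessors(edges):
    """Map each target node to the list of nodes with an arrow into it."""
    preds = defaultdict(list)
    for src, dst, _ok in edges:
        preds[dst].append(src)
    return dict(preds)


def failed_edges(edges):
    """Return the (source, target) pairs drawn as failed reasoning steps."""
    return [(src, dst) for src, dst, ok in edges if not ok]


print(direct_predecessors(EDGES)[("D3", "Q")])
print(failed_edges(EDGES))
```

Under this encoding, the D3 node has four direct predecessors, and the only failed step is the one from Q1 in D1 to Q1 in D2.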

**Forward Discrepancy Section (Top-Right):**

* Nodes: Q1, Q2, Q3, and Q1 (Target Node).

* Connections:

    * Q1 connects to Q1 (Target Node) via a red arrow with an 'X' mark.

    * Q2 connects to Q1 (Target Node) via a black arrow with a checkmark.

    * Q3 connects to Q1 (Target Node) via a black arrow with a checkmark.

* Label: "Fail reasoning to form complex knowledge".

**Backward Discrepancy Section (Bottom-Right):**

* Nodes: Q5, Q3, Q4.

* Connections:

    * Q5 connects to Q3 via a red arrow with an 'X' mark.

    * Q5 connects to Q4 via a black arrow with a checkmark.

    * Q3 connects to Q4 via a black arrow with a checkmark.

* Label: "Fail to solve a simpler Q despite having complex knowledge".

### Key Observations

* The diagram highlights two types of reasoning failures: forward and backward discrepancies.

* Forward discrepancy occurs when reasoning fails to build complex knowledge from simpler components.

* Backward discrepancy occurs when reasoning fails to solve a simpler question despite having complex knowledge.

* The use of red arrows and 'X' marks clearly indicates failures in reasoning.

* The diagram suggests a hierarchical structure where complex questions are broken down into simpler ones.
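The two discrepancy types can be checked mechanically once each question has a pass/fail result and a list of direct predecessors. The sketch below is one possible reading, under assumptions the figure does not state: a forward discrepancy is flagged when a question is answered incorrectly although all of its direct predecessors are correct, and a backward discrepancy when a question is answered incorrectly although at least one direct successor is correct. The `QuestionNode` class, the `find_discrepancies` function, and the example questions `a` to `e` are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class QuestionNode:
    name: str
    depth: int
    correct: bool  # whether the model answered this question correctly
    predecessors: List[str] = field(default_factory=list)  # simpler questions


def find_discrepancies(nodes):
    """Return (forward, backward) lists of node names, per the assumptions above."""
    by_name = {n.name: n for n in nodes}

    # Invert the predecessor links to get each node's direct successors.
    successors = {n.name: [] for n in nodes}
    for n in nodes:
        for p in n.predecessors:
            successors[p].append(n.name)

    forward, backward = [], []
    for n in nodes:
        if n.correct:
            continue  # both discrepancy types concern a failed question
        preds = [by_name[p] for p in n.predecessors]
        succs = [by_name[s] for s in successors[n.name]]
        # Assumed forward rule: every simpler question passed, this one failed.
        if preds and all(p.correct for p in preds):
            forward.append(n.name)
        # Assumed backward rule: a more complex question passed, this one failed.
        if succs and any(s.correct for s in succs):
            backward.append(n.name)
    return forward, backward


example_nodes = [
    QuestionNode("a", depth=1, correct=True),
    QuestionNode("b", depth=1, correct=True),
    QuestionNode("c", depth=2, correct=False, predecessors=["a", "b"]),
    QuestionNode("d", depth=1, correct=False),
    QuestionNode("e", depth=2, correct=True, predecessors=["d"]),
]

forward, backward = find_discrepancies(example_nodes)
print(forward)   # ['c']
print(backward)  # ['d']
```

Here `c` fails even though both of its simpler questions pass (forward), while `d` fails even though the more complex `e` that builds on it passes (backward). Stricter or looser rules, such as requiring all successors to be correct, are equally plausible readings.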

### Interpretation

The diagram illustrates a potential issue in knowledge representation and reasoning systems: having the components of knowledge (the simpler questions) does not guarantee the ability to synthesize them into a more complex understanding (the target question). The "Forward Discrepancy" points to a failure in the *constructive* direction of reasoning, where the system cannot build up from simpler questions to the complex one. The "Backward Discrepancy" points to a failure in the *reductive* direction, where the system fails a simpler question despite holding the more complex knowledge that depends on it.

The depth labels (D1, D2, D3) suggest a layered approach to problem-solving, and the two discrepancies mark potential weaknesses in that layered structure for AI or cognitive architectures. The diagram presents no quantitative data; it is a conceptual, qualitative model of reasoning failures.