## Bar Chart: Comparison of Average Episode Length Across Reinforcement Learning Algorithms

### Overview

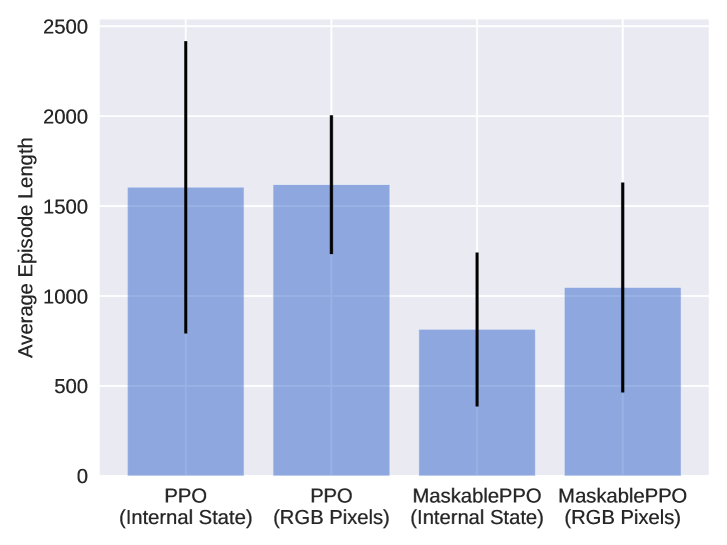

The image displays a vertical bar chart comparing the average episode length achieved by four different reinforcement learning algorithm configurations. The chart includes error bars for each category, indicating variability in the measurements.

### Components/Axes

* **Y-Axis (Vertical):** Labeled "Average Episode Length". The scale runs from 0 to 2500, with major gridlines at intervals of 500 (0, 500, 1000, 1500, 2000, 2500).

* **X-Axis (Horizontal):** Lists four distinct algorithm configurations as categories. From left to right:

1. `PPO (Internal State)`

2. `PPO (RGB Pixels)`

3. `MaskablePPO (Internal State)`

4. `MaskablePPO (RGB Pixels)`

* **Data Series:** A single data series represented by light blue bars. Each bar's height is the average episode length for that configuration.

* **Error Bars:** Black vertical lines extending above and below the top of each bar. The chart does not state whether they represent standard deviation, standard error, or a confidence interval.

### Detailed Analysis

The following values are approximate, derived from visual inspection of the chart against the y-axis scale.

1. **PPO (Internal State):**

* **Bar Height (Mean):** Approximately 1600.

* **Error Bar Range:** Extends from approximately 800 to 2400. This is the largest range, indicating high variance.

* **Trend:** This configuration and the next show the highest average episode lengths.

2. **PPO (RGB Pixels):**

* **Bar Height (Mean):** Approximately 1600, nearly identical to the first bar.

* **Error Bar Range:** Extends from approximately 1250 to 2000. This range (about 750) is less than half as wide as that of PPO (Internal State) (about 1600).

3. **MaskablePPO (Internal State):**

* **Bar Height (Mean):** Approximately 800. This is the lowest average episode length.

* **Error Bar Range:** Extends from approximately 400 to 1250.

* **Trend:** This and the next configuration show notably lower average episode lengths than the standard PPO variants.

4. **MaskablePPO (RGB Pixels):**

* **Bar Height (Mean):** Approximately 1050.

* **Error Bar Range:** Extends from approximately 500 to 1600.
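
The readings above can be collected into a small table to compare error-bar widths directly. The following is a minimal sketch that uses only the approximate values listed in this section; the names `READINGS` and `error_bar_widths` are illustrative and do not come from the chart or its source.

```python
# Approximate values read off the chart: (mean, lower error-bar end, upper error-bar end).
# All names in this sketch are illustrative assumptions, not part of the source.
READINGS = {
    "PPO (Internal State)": (1600, 800, 2400),
    "PPO (RGB Pixels)": (1600, 1250, 2000),
    "MaskablePPO (Internal State)": (800, 400, 1250),
    "MaskablePPO (RGB Pixels)": (1050, 500, 1600),
}


def error_bar_widths(readings):
    """Return {configuration: upper end - lower end} for each error bar."""
    return {name: high - low for name, (_, low, high) in readings.items()}


widths = error_bar_widths(READINGS)
widest = max(widths, key=widths.get)
narrowest = min(widths, key=widths.get)

for name, width in widths.items():
    print(f"{name}: error-bar width ~{width}")
print(f"widest: {widest}; narrowest: {narrowest}")
```

Because the inputs are visual estimates, the resulting widths are only as accurate as the readings themselves.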

### Key Observations

* **Performance Grouping:** The chart shows two distinct groups. The standard PPO configurations (both Internal State and RGB Pixels) reach average episode lengths around 1600. The MaskablePPO configurations have shorter averages, between 800 and 1050.

* **Input Modality Impact:** For PPO, the choice between internal state and RGB pixels as input has a negligible effect on the *average* episode length (both ~1600). It does, however, substantially change the *spread*: the internal-state error bar spans roughly 1600 (800 to 2400), while the RGB-pixel error bar spans roughly 750 (1250 to 2000).

* **Algorithm Impact:** The MaskablePPO algorithm results in shorter average episodes compared to standard PPO, regardless of the input type.

* **Variance:** All configurations show substantial variance, as indicated by the tall error bars. The variance is particularly high for PPO using internal state.
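
One way to gauge how separated the two groups are is to measure how much each PPO error bar overlaps each MaskablePPO error bar. The sketch below does this with the approximate ranges from the Detailed Analysis; the names `RANGES` and `overlap_length` are illustrative. Overlapping error bars are not a formal statistical test, and the chart does not state what the error bars represent, so this is a rough visual check only.

```python
# Approximate error-bar ranges read off the chart: (lower end, upper end).
# All names in this sketch are illustrative assumptions, not part of the source.
RANGES = {
    "PPO (Internal State)": (800, 2400),
    "PPO (RGB Pixels)": (1250, 2000),
    "MaskablePPO (Internal State)": (400, 1250),
    "MaskablePPO (RGB Pixels)": (500, 1600),
}


def overlap_length(a, b):
    """Length of the shared part of intervals a and b (0 if they only touch or are disjoint)."""
    return max(0, min(a[1], b[1]) - max(a[0], b[0]))


ppo = [name for name in RANGES if name.startswith("PPO")]
maskable = [name for name in RANGES if name.startswith("MaskablePPO")]

overlaps = {
    (p, m): overlap_length(RANGES[p], RANGES[m])
    for p in ppo
    for m in maskable
}

for (p, m), length in overlaps.items():
    print(f"{p} vs {m}: overlap ~{length}")
```

With these readings, three of the four pairs overlap, and the PPO (RGB Pixels) and MaskablePPO (Internal State) ranges meet only at their shared endpoint of about 1250.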

### Interpretation

This chart suggests that for the specific task being measured, the standard PPO algorithm is more effective at sustaining longer episodes than MaskablePPO. The "Maskable" variant appears to lead to earlier episode termination on average.

The wide error bars, especially for PPO (Internal State), suggest that results are not consistent across whatever the chart aggregates over, such as training runs, environment seeds, or evaluation episodes (the chart does not say which). This could imply sensitivity to initial conditions or a less stable learning process for that configuration.
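
Absolute error-bar width grows with the mean, so it can help to look at spread relative to the mean as well. The sketch below divides each error bar's half-width by its mean, using the approximate readings from the Detailed Analysis. It assumes the error bars can be treated as roughly symmetric spreads around the mean, which the chart does not confirm; `READINGS` and `relative_spread` are illustrative names.

```python
# Approximate values read off the chart: (mean, lower error-bar end, upper error-bar end).
# All names in this sketch are illustrative assumptions, not part of the source.
READINGS = {
    "PPO (Internal State)": (1600, 800, 2400),
    "PPO (RGB Pixels)": (1600, 1250, 2000),
    "MaskablePPO (Internal State)": (800, 400, 1250),
    "MaskablePPO (RGB Pixels)": (1050, 500, 1600),
}


def relative_spread(mean, low, high):
    """Half the error-bar width divided by the mean (assumes a roughly symmetric bar)."""
    return ((high - low) / 2) / mean


relative = {name: relative_spread(*values) for name, values in READINGS.items()}

for name, ratio in relative.items():
    print(f"{name}: half-width / mean ~{ratio:.2f}")
```

On these estimates, PPO (Internal State) and both MaskablePPO configurations have a relative spread of roughly one half, while PPO (RGB Pixels) is noticeably lower. In relative terms, then, PPO (RGB Pixels) stands out as the most consistent configuration rather than PPO (Internal State) standing out as the least.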

The minimal difference in mean episode length between internal state and RGB pixel inputs for PPO is notable. It suggests that, for this task, the agent can learn a policy from raw visual data (RGB Pixels) that sustains episodes about as long as one learned from a direct internal state representation. This bears on the feasibility of training agents in environments where the internal state is not directly accessible.

**In summary, the chart shows higher mean episode lengths for standard PPO than for MaskablePPO, wide and partly overlapping error bars across the four configurations, and near-identical mean episode lengths for PPO with pixel-based and internal-state inputs on this task.**