TECHNICAL ASSET FINGERPRINT

bdd5ecdaa1ea2ced77e42ce0


FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Neural Network Diagram: Federated Learning with Matryoshka Representations

### Overview

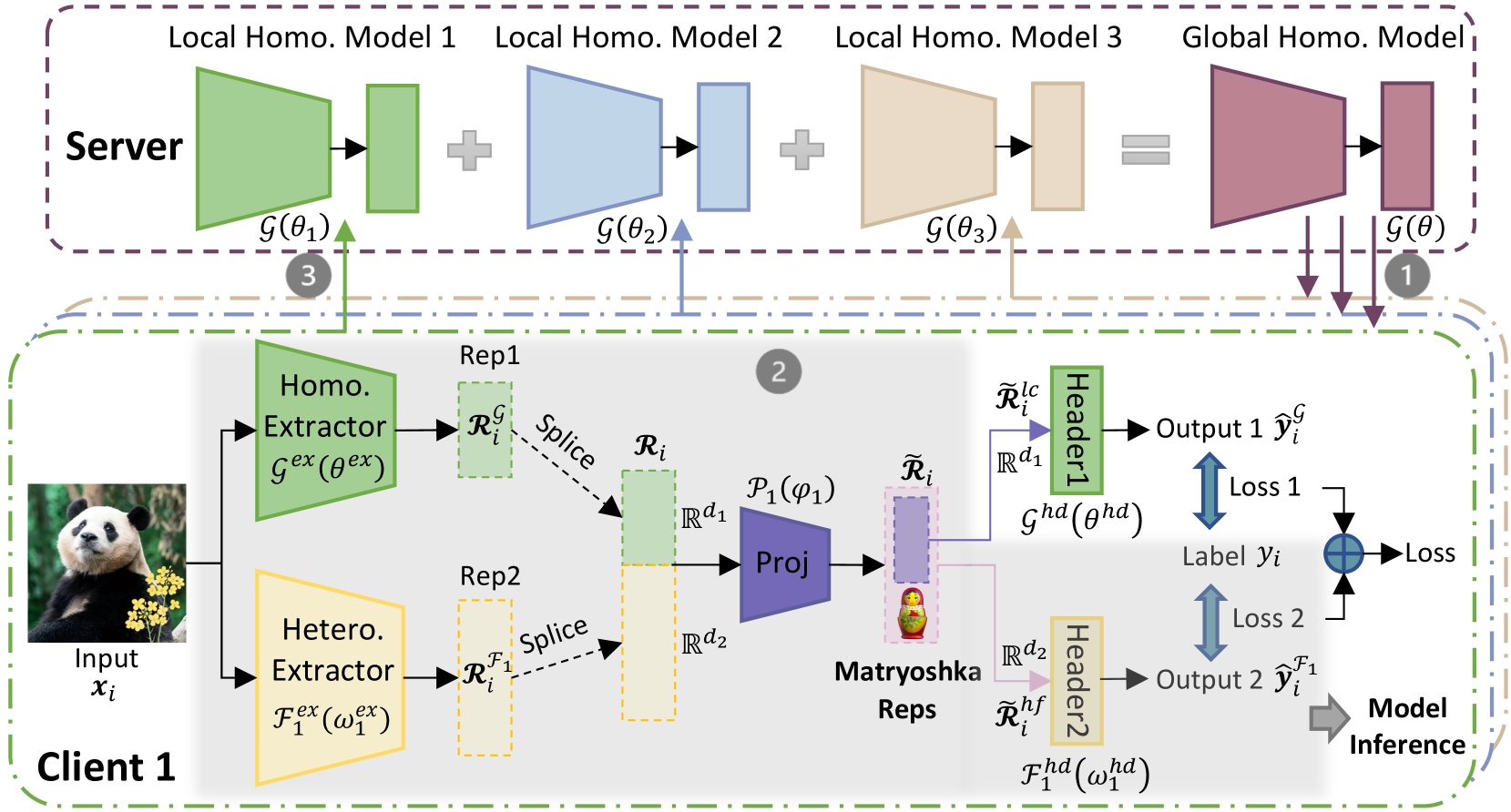

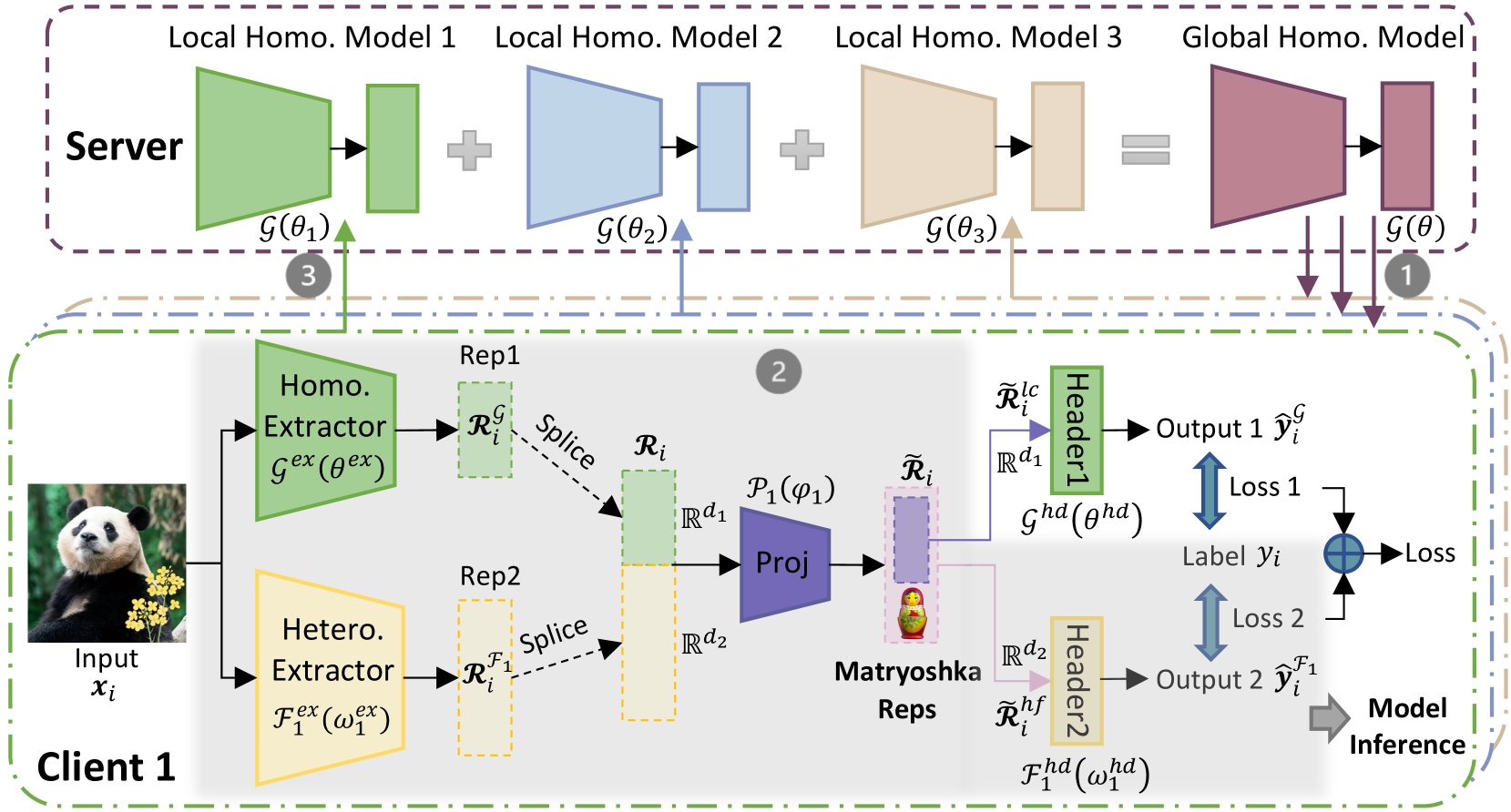

The image is a diagram of a federated learning system built around "Matryoshka Representations." It shows a server and one client (Client 1). The client extracts features locally, and the server aggregates the local homogeneous models ("Local Homo. Model" 1 to 3), which appear to stand for individual clients, into a global model. Arrows trace how data and parameters move between client and server and through the internal processing steps on each side.

### Components/Axes

**Regions:**

* **Server (Top):** Enclosed in a dashed purple box.

* **Client 1 (Bottom):** Enclosed in a dashed green box.

**Server Components:**

* **Local Homo. Model 1:** Represented by a green trapezoid and a green rectangle, labeled as G(θ1).

* **Local Homo. Model 2:** Represented by a blue trapezoid and a blue rectangle, labeled as G(θ2).

* **Local Homo. Model 3:** Represented by a tan trapezoid and a tan rectangle, labeled as G(θ3).

* **Global Homo. Model:** Represented by a maroon trapezoid and a maroon rectangle, labeled as G(θ).

**Client 1 Components:**

* **Input:** An image of a panda, labeled as "Input xᵢ".

* **Homo. Extractor:** A green trapezoid labeled as "G^ex(θ^ex)".

* **Hetero. Extractor:** A tan trapezoid labeled as "F₁^ex(ω₁^ex)".

* **Rep1:** A dashed green rectangle labeled as "Rᵢ^G".

* **Rep2:** A dashed tan rectangle labeled as "Rᵢ^{F₁}".

* **Proj:** A blue trapezoid labeled as "P₁(φ₁)".

* **Matryoshka Reps:** A purple rectangle containing a Matryoshka doll.

* **Header1:** A green rectangle labeled as "Header1".

* **Header2:** A tan rectangle labeled as "Header2".

* **Output 1:** Labeled as "Output 1 ŷᵢ^G".

* **Output 2:** Labeled as "Output 2 ŷᵢ^{F₁}".

* **Label:** Labeled as "Label yᵢ".

**Arrows and Labels:**

* Arrows indicate the flow of data and parameters.

* "Splice" labels indicate the combination of representations.

* "Loss 1" and "Loss 2" indicate loss calculations.

* "Model Inference" indicates the final output.

* ℝ^{d₁} and ℝ^{d₂} indicate the dimensionality of the representations.

* R̃ᵢ^{lc} and R̃ᵢ^{hf} are the representations that leave the Matryoshka Reps block.

**Numerical Indicators:**

* (1), (2), and (3) in gray circles indicate different stages or steps in the process.

### Detailed Analysis

**Server Side:**

* The server aggregates information from three "Local Homo. Models." Each model consists of a trapezoidal shape followed by a rectangular shape.

* The outputs of the local models are combined (indicated by "+" signs) to form a "Global Homo. Model."
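
The "+" signs in the server region read most naturally as parameter averaging. The following is a minimal sketch of that reading, assuming each local model is a flat list of floats with the same shape and that all local models are weighted equally (the diagram states neither). The helper name `aggregate` is hypothetical.

```python
from typing import List


def aggregate(local_params: List[List[float]]) -> List[float]:
    """Element-wise mean of equally shaped parameter vectors."""
    if not local_params:
        raise ValueError("need at least one local model")
    size = len(local_params[0])
    if any(len(p) != size for p in local_params):
        raise ValueError("all local models must share one shape")
    # zip(*...) groups the k-th parameter of every local model together.
    return [sum(vals) / len(local_params) for vals in zip(*local_params)]


# Toy stand-ins for theta_1, theta_2, theta_3 (illustrative values only).
theta_1 = [1.0, 2.0, 3.0]
theta_2 = [3.0, 2.0, 1.0]
theta_3 = [2.0, 2.0, 2.0]

theta = aggregate([theta_1, theta_2, theta_3])
print(theta)
```

Averaging only works here because the three local models share one architecture, which is presumably why the diagram calls them homogeneous.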

**Client Side:**

* The client takes an image as input and extracts features using two extractors: "Homo. Extractor" and "Hetero. Extractor."

* The outputs of the extractors are represented as "Rᵢ^G" and "Rᵢ^{F₁}" respectively.

* These representations are spliced and projected using "Proj."

* The projected representation is then processed by "Matryoshka Reps."

* The outputs of the Matryoshka Reps are fed into "Header1" and "Header2" to produce "Output 1" and "Output 2."

* Losses are calculated based on the difference between the outputs and the "Label yi."
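
The client-side steps above can be sketched as a single forward pass. This is an illustration under several assumptions that the diagram does not confirm: single linear maps stand in for the projector and both headers, "Splice" is taken to mean concatenation, the first header sees only the first `d1` entries of the projected vector while the second sees all of it, and both losses are softmax cross-entropy. All function and variable names are hypothetical.

```python
import math
import random
from typing import List, Tuple

Vector = List[float]
Matrix = List[Vector]


def matvec(w: Matrix, x: Vector) -> Vector:
    """Multiply matrix w (rows x cols) by vector x (cols)."""
    assert all(len(row) == len(x) for row in w), "shape mismatch"
    return [sum(a * b for a, b in zip(row, x)) for row in w]


def cross_entropy(logits: Vector, label: int) -> float:
    """Softmax cross-entropy for one sample, computed stably."""
    m = max(logits)
    log_sum = m + math.log(sum(math.exp(v - m) for v in logits))
    return log_sum - logits[label]


def random_matrix(rows: int, cols: int, rng: random.Random) -> Matrix:
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]


def client_forward(rep_g: Vector, rep_f: Vector, proj: Matrix,
                   head1: Matrix, head2: Matrix,
                   d1: int, label: int) -> Tuple[float, float]:
    spliced = rep_g + rep_f                 # "Splice": concatenate Rep1 and Rep2
    fused = matvec(proj, spliced)           # "Proj": map to a d2-dimensional vector
    coarse, fine = fused[:d1], fused        # assumed nested views of the projection
    loss1 = cross_entropy(matvec(head1, coarse), label)  # Header1 -> Loss 1
    loss2 = cross_entropy(matvec(head2, fine), label)    # Header2 -> Loss 2
    return loss1, loss2


rng = random.Random(0)
d1, d2, num_classes = 3, 8, 5
rep_g = [rng.uniform(-1, 1) for _ in range(4)]   # stand-in for the Homo. Extractor output
rep_f = [rng.uniform(-1, 1) for _ in range(6)]   # stand-in for the Hetero. Extractor output
proj = random_matrix(d2, len(rep_g) + len(rep_f), rng)
head1 = random_matrix(num_classes, d1, rng)
head2 = random_matrix(num_classes, d2, rng)

loss1, loss2 = client_forward(rep_g, rep_f, proj, head1, head2, d1, label=2)
print(loss1, loss2)
```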

**Data Flow:**

* The "Global Homo. Model" parameters are sent to the client (indicated by arrow 1).

* The client processes the input and sends information back to the server (indicated by arrow 2).

* The server updates its local models based on the information received from the client (indicated by arrow 3).

### Key Observations

* The diagram illustrates a federated learning setup where the client performs local feature extraction and the server aggregates information from multiple clients.

* The use of "Matryoshka Representations" suggests a hierarchical or multi-scale approach to feature learning.

* The presence of two extractors ("Homo." and "Hetero.") indicates that the system is designed to capture different types of features.

### Interpretation

The diagram depicts a federated learning system that uses "Matryoshka Representations" for feature learning. It targets settings where data is distributed across clients and centralizing it for training is infeasible or undesirable: a global model is learned by aggregating client contributions while the raw data stays local. The two client-side extractors suggest the system captures both homogeneous and heterogeneous features of the input, and the "Matryoshka Reps" block likely helps produce a more robust, generalizable representation.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Diagram: Federated Learning with Matryoshka Representation

### Overview

This diagram illustrates a federated learning system that uses a Matryoshka representation for privacy-preserving model training. The system involves a server and multiple clients (only Client 1 is shown). Clients train local models on their private data and send model updates to the server, which aggregates them into a global model. The Matryoshka representation appears to add a further layer of privacy by encoding client data as a series of nested representations.

### Components/Axes

The diagram is segmented into two main sections: "Server" (top, light blue) and "Client 1" (bottom, yellow). Key components include:

* **Input (xᵢ):** Image of two pandas.

* **Homo. Extractor (Gex(θex)):** Homogeneous Extractor.

* **Hetero. Extractor (Tex(ωex)):** Heterogeneous Extractor.

* **Rep1 & Rep2:** Representations from the Homo. and Hetero. Extractors respectively.

* **Splice:** Operation combining Rep1 and Rep2.

* **Proj:** Projection operation.

* **Matryoshka Reps:** Nested representations (orange, apple, banana).

* **Header1 & Header2:** Classification headers.

* **Output 1 (ŷᵢ) & Output 2 (ŷᵢ¹):** Model outputs.

* **Loss 1 & Loss 2:** Loss functions.

* **Label (yᵢ):** Ground truth label.

* **Local Homo. Model 1, 2, 3:** Local homogeneous models on the server.

* **Global Homo. Model:** Global homogeneous model on the server.

* **G(θ), G(θ₁), G(θ₂), G(θ₃):** Model functions with parameters.

* **Rᵢ, Rᵢ¹, Rᵢᶜ, Rᵢᵈ¹, Rᵢᵈ²:** Intermediate representations.

* **Ghd(ghd), Fhd(whd):** Header functions.

* **Arrows with numbers (1, 2, 3):** Indicate the flow of information and aggregation steps.

### Detailed Analysis

The diagram depicts the following flow:

1. **Client-Side Processing:**

* An input image (xᵢ) of two pandas is fed into both a Homogeneous Extractor (Gex(θex)) and a Heterogeneous Extractor (Tex(ωex)).

* The Homo. Extractor produces representation Rep1 (Rᵢᶜ).

* The Hetero. Extractor produces representation Rep2 (Rᵢᵈ²).

* Rep1 and Rep2 are spliced together to create Rᵢ.

* Rᵢ is then projected (Proj) to create a Matryoshka representation (tilde Rᵢ) containing nested representations (orange, apple, banana).

* The Matryoshka representation is fed into Header1 and Header2.

* Header1 produces Output 1 (ŷᵢ) and calculates Loss 1 based on the Label (yᵢ).

* Header2 produces Output 2 (ŷᵢ¹) and calculates Loss 2.

* Model Inference is performed.

2. **Server-Side Aggregation:**

* The server hosts three Local Homo. Models (Model 1, Model 2, Model 3) with parameters θ₁, θ₂, and θ₃ respectively.

* Each local model receives updates from clients (indicated by arrow 3).

* The server aggregates the updates to create a Global Homo. Model with parameters θ.

* The Global Homo. Model is then used to generate predictions.

3. **Information Flow:**

* Arrow 1 indicates the flow of the Global Homo. Model to the clients.

* Arrow 2 indicates the flow of the Matryoshka representation from the client to the server.

* Arrow 3 indicates the flow of updates from the client's Homo. Extractor to the server's Local Homo. Models.

### Key Observations

* The system utilizes both homogeneous and heterogeneous extractors to process client data.

* The Matryoshka representation appears to be a key component for privacy preservation, encoding data into nested representations.

* The server aggregates updates from multiple clients to create a global model.

* The diagram highlights the separation between client-side processing and server-side aggregation.

* The use of Loss 1 and Loss 2 suggests a multi-task learning or auxiliary loss setup.

### Interpretation

This diagram shows a federated learning approach designed to protect client privacy: the server aggregates model updates without accessing raw client data. Using both homogeneous and heterogeneous extractors suggests the system can handle diverse data types or feature spaces. The Matryoshka representation encodes information hierarchically in nested form. It may also make the original data harder to reconstruct while still letting the model learn useful features, although the diagram itself does not show this. The two headers and their losses point to learning multiple representations or tasks at once. Overall, the multi-stage architecture aims to balance model accuracy with data privacy.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## System Architecture Diagram: Federated Learning with Homogeneous and Heterogeneous Feature Extractors

### Overview

This image is a technical system architecture diagram illustrating a federated learning framework. It depicts a two-tiered process involving a central **Server** and a **Client** (specifically "Client 1"). The system processes an input image through parallel feature extractors (homogeneous and heterogeneous), projects and combines these features into "Matryoshka Representations," and uses them for model training and inference. The diagram uses color-coding, mathematical notation, and directional arrows to show data flow and component relationships.

### Components/Axes

The diagram is segmented into two primary regions, demarcated by dashed boxes:

1. **Server Region (Top, Purple Dashed Box):**

* **Components:** Three "Local Homo. Model" blocks (1, 2, 3) and one "Global Homo. Model" block.

* **Visual Structure:** Each model is represented by a trapezoid (likely a neural network layer) feeding into a rectangle (likely a feature representation or parameter set).

* **Labels & Notation:**

* `Local Homo. Model 1`, `Local Homo. Model 2`, `Local Homo. Model 3`, `Global Homo. Model`.

* Mathematical function notation below each model: `G(θ₁)`, `G(θ₂)`, `G(θ₃)`, and `G(θ)`.

* **Flow Indicators:** Plus signs (`+`) between the local models and an equals sign (`=`) before the global model, indicating an aggregation or averaging operation. Arrows labeled `1` (purple, downward from Global Model) and `3` (green, upward to Local Model 1) show communication with the client.

2. **Client 1 Region (Bottom, Green Dashed Box):**

* **Input:** An image of a panda, labeled `Input xᵢ`.

* **Feature Extractors (Parallel Paths):**

* **Path 1 (Green):** `Homo. Extractor` with notation `G^ex(θ^ex)`. Produces `Rep1` labeled `Rᵢ^G`.

* **Path 2 (Yellow):** `Hetero. Extractor` with notation `F₁^ex(ω₁^ex)`. Produces `Rep2` labeled `Rᵢ^{F₁}`.

* **Feature Fusion & Projection:**

* Both representations (`Rᵢ^G` and `Rᵢ^{F₁}`) undergo a `Splice` operation.

* The spliced result is fed into a `Proj` (Projection) block, denoted `P₁(φ₁)`.

* The output of the projection is labeled `R̃ᵢ` and visualized with a **Matryoshka doll icon**, explicitly labeled `Matryoshka Reps`.

* **Task-Specific Heads & Loss:**

* The Matryoshka Representation `R̃ᵢ` splits into two paths:

* **Path A (Green):** Labeled `R̃ᵢ^{lc}` with dimension `ℝ^{d₁}`. Goes to `Header1` (`G^{hd}(θ^{hd})`), producing `Output 1 ŷᵢ^G`.

* **Path B (Yellow):** Labeled `R̃ᵢ^{hf}` with dimension `ℝ^{d₂}`. Goes to `Header2` (`F₁^{hd}(ω₁^{hd})`), producing `Output 2 ŷᵢ^{F₁}`.

* **Loss Calculation:** Both outputs are compared against a `Label yᵢ` to compute `Loss 1` and `Loss 2`. These are combined (via a circled plus symbol) into a final `Loss`.

* **Inference:** A gray arrow labeled `Model Inference` points from the final loss/output area, indicating the trained model's use.

* **Communication Arrow:** A green arrow labeled `3` points from the `Homo. Extractor` path up to the Server's `Local Homo. Model 1`.

### Detailed Analysis

**Data Flow & Process:**

1. **Step 1 (Server to Client):** The global model `G(θ)` sends parameters (arrow `1`) to the client.

2. **Step 2 (Client Processing):** The client processes input `xᵢ`:

* Extracts homogeneous features `Rᵢ^G` and heterogeneous features `Rᵢ^{F₁}`.

* Splices and projects them into a unified, multi-scale representation `R̃ᵢ` (Matryoshka Reps).

* Uses two separate headers for specific tasks, generating predictions and computing a combined loss.

3. **Step 3 (Client to Server):** Updated parameters from the homogeneous extractor path (arrow `3`) are sent back to update the corresponding local model on the server.
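
The three steps can be traced with a toy communication round. This is a sketch only: a single gradient step on a quadratic stands in for real local training, parameters are flat lists, the server uses an unweighted mean, and the class and function names are hypothetical.

```python
from typing import List

Vector = List[float]


class Client:
    def __init__(self, target: Vector, private: Vector):
        self.target = target       # stand-in for the client's local data
        self.homo: Vector = []     # shared homogeneous parameters
        self.hetero = private      # heterogeneous parameters, never uploaded

    def receive(self, global_params: Vector) -> None:
        """Step 1: overwrite the homogeneous part with the global model."""
        self.homo = list(global_params)

    def train(self, lr: float = 0.5) -> None:
        """Step 2: one gradient step on 0.5 * ||homo - target||^2."""
        self.homo = [h - lr * (h - t) for h, t in zip(self.homo, self.target)]

    def upload(self) -> Vector:
        """Step 3: send back only the homogeneous parameters."""
        return list(self.homo)


def run_round(global_params: Vector, clients: List[Client]) -> Vector:
    uploads = []
    for client in clients:
        client.receive(global_params)
        client.train()
        uploads.append(client.upload())
    # Server side: unweighted mean of the uploaded local models.
    return [sum(vals) / len(uploads) for vals in zip(*uploads)]


clients = [Client([2.0, 0.0], private=[9.0]), Client([0.0, 4.0], private=[7.0])]
new_global = run_round([0.0, 0.0], clients)
print(new_global)
```

Each client moves halfway toward its own target, so the new global model sits between the two local solutions, and the `hetero` lists are untouched by the round.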

**Mathematical & Notational Details:**

* **Functions:** `G` likely denotes a homogeneous model/function, `F₁` a heterogeneous one. Superscripts `ex` and `hd` probably stand for "extractor" and "header," respectively.

* **Parameters:** `θ`, `ω`, `φ` represent learnable parameters for different components.

* **Representations:** `R` denotes a representation tensor. Superscripts `G` and `F₁` denote the source extractor. `R̃` denotes the projected/fused representation. Subscript `i` likely indexes the data sample.

* **Dimensions:** The projected representation splits into subspaces of dimensions `ℝ^{d₁}` and `ℝ^{d₂}`.
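
One plausible reading of the `ℝ^{d₁}` and `ℝ^{d₂}` split is the nested convention used in Matryoshka representation learning, where a smaller view is simply a prefix of a larger one. The sketch below assumes that convention (the diagram does not spell it out) and uses a hypothetical helper `nested_views` with made-up values.

```python
from typing import List, Sequence


def nested_views(rep: Sequence[float], dims: Sequence[int]) -> List[List[float]]:
    """Return one prefix of `rep` per requested dimension, smallest first."""
    if list(dims) != sorted(dims):
        raise ValueError("dims must be in increasing order")
    if any(d <= 0 or d > len(rep) for d in dims):
        raise ValueError("each dim must be between 1 and len(rep)")
    return [list(rep[:d]) for d in dims]


fused = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6]          # stand-in for the projected vector
coarse, fine = nested_views(fused, dims=[2, 6])  # d1 = 2, d2 = 6

print(coarse)  # first d1 entries
print(fine)    # all d2 entries
```

Under this reading the two headers do not receive disjoint subspaces: the coarse view is contained in the fine one, which is what the nesting-doll metaphor suggests.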

**Spatial Grounding & Color Coding:**

* **Green** is consistently used for the homogeneous pathway: `Homo. Extractor`, `Header1`, `Local Homo. Model 1`, and the communication arrow `3`.

* **Yellow** is used for the heterogeneous pathway: `Hetero. Extractor` and `Header2`.

* **Purple** is used for the server's global model and its downward communication arrow `1`.

* The **Matryoshka doll icon** is centrally placed within the client box, visually anchoring the core concept of nested or multi-scale representations.

### Key Observations

1. **Hybrid Feature Learning:** The system explicitly combines features from two distinct types of extractors (homogeneous and heterogeneous) before projection.

2. **Matryoshka Representation:** The use of a nesting doll icon is a deliberate metaphor, suggesting the projected representation `R̃ᵢ` contains nested or hierarchical subspaces (`d₁` and `d₂`) suitable for different tasks or granularities.

3. **Federated Learning Structure:** The server aggregates multiple local homogeneous models (`G(θ₁)`, `G(θ₂)`, `G(θ₃)`) into a global model (`G(θ)`), a classic federated averaging pattern. The client updates only the homogeneous part (`G^ex`) based on arrow `3`.

4. **Multi-Task Objective:** The client computes two separate losses (`Loss 1`, `Loss 2`) from two headers, which are combined. This suggests the model is trained to perform two related tasks simultaneously, possibly leveraging the different feature subspaces.
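
Written out, the combined objective for sample `i` might look like the following. The unweighted sum and the generic per-sample loss `ℓ` are assumptions: the diagram shows only a circled plus, so any weighting between the two terms is unknown.

```latex
\mathcal{L}_i = \ell\big(\hat{y}_i^{G},\, y_i\big) + \ell\big(\hat{y}_i^{F_1},\, y_i\big)
```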

### Interpretation

This diagram outlines a sophisticated federated learning system designed for **multi-task learning with heterogeneous data sources**. The core innovation appears to be the "Matryoshka Representation" module.

* **Purpose:** The framework likely aims to train a global model (`G(θ)`) on data from multiple clients while respecting data heterogeneity. Each client may have unique data distributions (hence the `Hetero. Extractor`).

* **Mechanism:** Instead of forcing all clients into a single homogeneous feature space, the system:

1. Learns client-specific heterogeneous features (`F₁`).

2. Projects these alongside generic homogeneous features (`G`) into a shared, structured latent space (`R̃ᵢ`).

3. This latent space is explicitly structured (like Matryoshka dolls) to contain information at different scales or for different tasks, served by dedicated headers.

* **Why It Matters:** This approach could improve model personalization and performance on non-IID (not independent and identically distributed) data in federated learning. The homogeneous extractor facilitates knowledge aggregation on the server, while the heterogeneous extractor and Matryoshka projection allow the client to retain and utilize unique local information. The multi-task loss ensures the representation is useful for multiple objectives.

* **Notable Design Choice:** The server only aggregates homogeneous models. The heterogeneous component (`F₁`) remains entirely on the client side, which is a privacy-conscious design, preventing unique client data characteristics from being directly shared.
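
In code, this design choice amounts to partitioning the client's parameters before upload. The sketch below is hypothetical throughout: the key names, the `homo.` prefix, and the helper `split_state` are invented for illustration, and whether the homogeneous header is uploaded along with the extractor is not clear from the diagram.

```python
from typing import Dict, List, Tuple

State = Dict[str, List[float]]


def split_state(state: State, shared_prefix: str) -> Tuple[State, State]:
    """Split parameters into (shared with server, kept on client)."""
    shared = {k: v for k, v in state.items() if k.startswith(shared_prefix)}
    private = {k: v for k, v in state.items() if not k.startswith(shared_prefix)}
    return shared, private


# Illustrative parameter names and values, not taken from the diagram.
client_state: State = {
    "homo.extractor": [0.1, 0.2],
    "homo.header": [0.3],
    "hetero.extractor": [0.4, 0.5],
    "hetero.header": [0.6],
    "proj": [0.7],
}

upload, keep_local = split_state(client_state, shared_prefix="homo.")
print(sorted(upload))
print(sorted(keep_local))
```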


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Diagram: Federated Learning Architecture with Local and Global Model Aggregation

### Overview

The diagram illustrates a federated learning system where multiple clients (e.g., Client 1) train local homogeneous models on their data, which are aggregated into a global model on a server. The client-side workflow includes input processing, representation extraction, projection, and loss calculation for model inference. Key components include local homogeneous models, a global model, extractors, projectors, and Matryoshka representations.

---

### Components/Axes

#### Server-Side Components

1. **Local Homogeneous Models**:

- **Model 1**: Green block labeled `g(θ₁)`.

- **Model 2**: Blue block labeled `g(θ₂)`.

- **Model 3**: Beige block labeled `g(θ₃)`.

2. **Global Homogeneous Model**: Purple block labeled `g(θ)`, formed by aggregating local models.

3. **Flow**: Arrows indicate aggregation (`+`) from local models to the global model.

#### Client-Side Components (Client 1)

1. **Input**: Image of a panda labeled `Input x_i`.

2. **Homo. Extractor**: Green block labeled `g_ex(θ_ex)`.

3. **Hetero. Extractor**: Yellow block labeled `F₁_ex(ω₁_ex)`.

4. **Representations**:

- **Rep1**: Green block labeled `R_i^g`.

- **Rep2**: Yellow block labeled `R_i^F1`.

5. **Projection**: Purple block labeled `P₁(φ₁)`.

6. **Matryoshka Reps**: Purple block with nested doll icon.

7. **Headers**:

- **Header1**: Green block labeled `g_hd(θ_hd)`.

- **Header2**: Yellow block labeled `F₁_hd(ω₁_hd)`.

8. **Outputs**:

- **Output 1**: `ŷ_i^g` (green arrow).

- **Output 2**: `ŷ_i^F1` (yellow arrow).

9. **Loss Functions**:

- **Loss 1**: Blue arrow labeled `Loss` (global model).

- **Loss 2**: Blue arrow labeled `Loss` (heterogeneous model).

10. **Model Inference**: Gray arrow labeled `Model Inference`.

---

### Detailed Analysis

#### Server-Side

- **Local Models**: Three distinct local homogeneous models (`g(θ₁)`, `g(θ₂)`, `g(θ₃)`) are trained independently on client data.

- **Global Model**: Aggregated from local models via summation (`+`), resulting in `g(θ)`.
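
If the `+` denotes federated averaging rather than a raw sum, the aggregation could be written as below. The weights `n_k` (the number of samples held by client `k`) are an assumption, since the diagram shows no weights. With equal `n_k` the expression reduces to a plain mean of the three local models.

```latex
\theta = \sum_{k=1}^{3} \frac{n_k}{\sum_{j=1}^{3} n_j}\, \theta_k
```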

#### Client-Side

1. **Input Processing**:

- The panda image (`x_i`) is processed by two extractors:

- **Homo. Extractor**: Generates homogeneous representation `R_i^g`.

- **Hetero. Extractor**: Generates heterogeneous representation `R_i^F1`.

2. **Representation Splicing**:

- `R_i^g` and `R_i^F1` are spliced into a combined representation `R_i`.

3. **Projection**:

- Combined representation `R_i` is projected via `P₁(φ₁)` into a latent space.

4. **Matryoshka Reps**:

- Nested representations (`Ũ_i`, `Ũ_i^F1`) are derived, likely for hierarchical feature learning.

5. **Headers**:

- **Header1**: Processes `Ũ_i` via `g_hd(θ_hd)`.

- **Header2**: Processes `Ũ_i^F1` via `F₁_hd(ω₁_hd)`.

6. **Loss Calculation**:

- **Loss 1**: Measures discrepancy between `ŷ_i^g` and true label `y_i`.

- **Loss 2**: Measures discrepancy between `ŷ_i^F1` and true label `y_i`.

---

### Key Observations

1. **Model Heterogeneity**: The system handles both homogeneous (`g(θ)`) and heterogeneous (`F₁(ω)`) feature extractors.

2. **Matryoshka Reps**: Suggests a nested representation strategy, possibly for multi-task learning or robustness.

3. **Loss Functions**: Dual loss objectives for global and task-specific model refinement.

4. **Color Coding**:

- Green: Homogeneous components.

- Blue: Server-side aggregation.

- Yellow: Heterogeneous components.

- Purple: Projection and Matryoshka representations.

---

### Interpretation

This architecture demonstrates a hybrid federated learning approach:

- **Local Training**: Clients train task-specific models (`g(θ₁)`, `g(θ₂)`, `g(θ₃)`) on their data.

- **Global Aggregation**: Server combines local models into a unified `g(θ)`.

- **Client-Side Personalization**: Matryoshka representations (`Ũ_i`, `Ũ_i^F1`) allow adaptation to individual client data distributions while leveraging global knowledge.

- **Dual Objectives**: Loss 1 optimizes global model accuracy, while Loss 2 ensures task-specific performance via heterogeneous extractors.

The use of Matryoshka Reps implies a focus on hierarchical feature learning, enabling the model to capture both general (global) and client-specific (local) patterns. This design balances personalization and generalization, critical for privacy-preserving federated learning.
